Will

Flash-KMeans Fast and Memory-Efficient Exact K-Means paper: huggingface.co/papers/2603.09…

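On March 3, the core leadership of Alibaba Group and Ant Group made a rare full appearance together at Hangzhou Yungu School. Jack Ma was joined by Alibaba Group chairman Joe Tsai (Cai Chongxin), CEO Eddie Wu (Wu Yongming), risk committee chairman Shao Xiaofeng, and e-commerce business group CEO Jiang Fan, along with Ant Group chairman Eric Jing (Jing Xiandong) and CEO Han Xinyi.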

At the end of the interview, this professor goes completely off script and names the Illuminati and other secret societies as running the world and pushing for the war in Iran. Absolutely wild. I think it caught the interviewers completely off guard.

Claude Code Remote is rolling out now for Pro users

User Interview #3

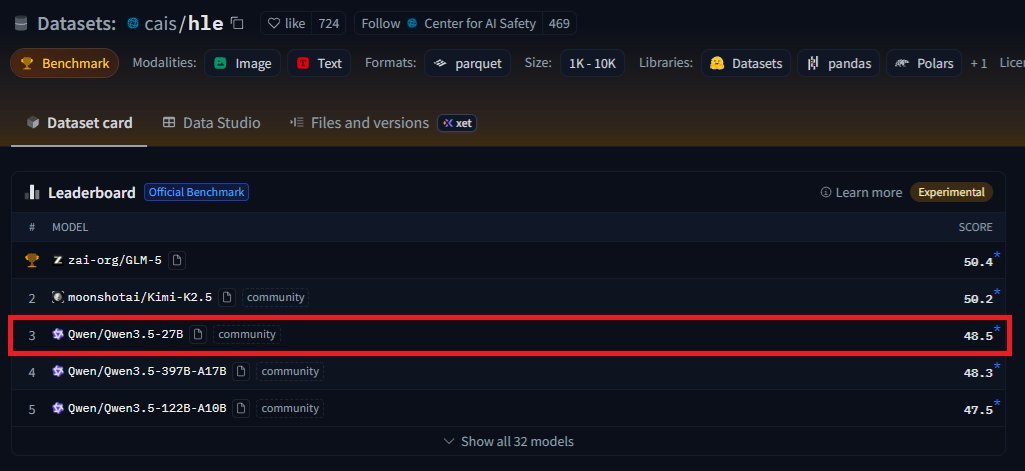

The latest DGX-Spark leaderboard shows Qwen3.5-35B-A3B 🏆 decode at 30 tps (unquantized), which beats GPT-OSS-120B across all benchmarks and nearly all of Sonnet 4.5 & GPT5-Mini. Run this at home on your DGX-Spark or Spark Clone. 🦞 Clawdbot Friendly

Introducing cmux: the open-source terminal built for coding agents.
- Vertical tabs
- Blue rings around panes that need attention
- Built-in browser
- Based on Ghostty

When Claude Code needs you, the pane glows blue and the sidebar tells you why. No Electron/Tauri. Just Swift/AppKit.