sanguyen.eth


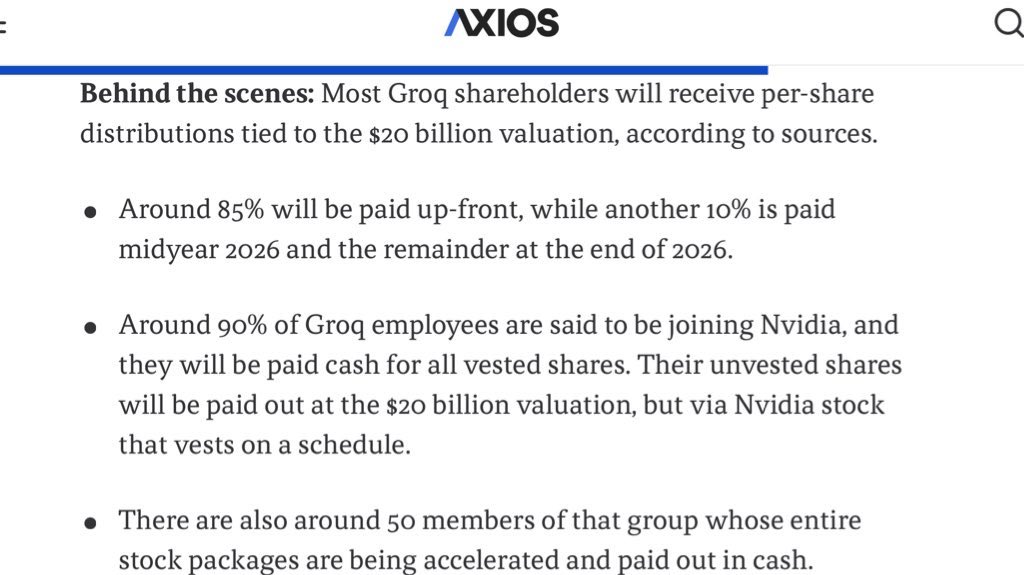

Attention, startup employees: in the event of a Groq-like “hacquisition,” demand accelerated vesting and pro rata compensation, as you would get in a real acquisition. Don’t get Windsurf’d.

Nvidia is buying Groq for two reasons, imo.

1) Inference is disaggregating into prefill and decode. SRAM architectures have unique advantages in decode for workloads where performance is primarily a function of memory bandwidth. Rubin CPX, Rubin, and the putative “Rubin SRAM” variant derived from Groq should let Nvidia mix and match chips to strike the optimal balance of performance vs. cost for each workload. Rubin CPX is optimized for massive context windows during prefill, thanks to the very high memory capacity of its relatively low-bandwidth GDDR DRAM. Rubin is the workhorse for training and high-density, batched inference workloads, with its HBM DRAM striking a balance between memory bandwidth and capacity. The Groq-derived “Rubin SRAM” is optimized for ultra-low-latency agentic reasoning inference, thanks to SRAM’s extremely high memory bandwidth, at the cost of lower memory capacity. In that last case, either CPX or the standard Rubin will likely handle prefill.

2) It has been clear for a long time that SRAM architectures can hit tokens-per-second numbers much higher than GPUs, TPUs, or any ASIC we have yet seen: extremely low latency per individual user at the expense of throughput per dollar. It was less clear 18 months ago whether end users were willing to pay for this speed (SRAM is more expensive per token because it runs much smaller batch sizes). Cerebras’s and Groq’s recent results now make it abundantly clear that users are willing to pay for speed.

This increases my confidence that all ASICs except TPU, AI5, and Trainium will eventually be canceled. Good luck competing with the 3 Rubin variants and multiple associated networking chips. That said, it does sound like OpenAI’s ASIC will be surprisingly good (much better than the Meta and Microsoft ASICs). Let’s see what AMD does. Intel is already moving in this direction (it has a prefill-optimized SKU and purchased SambaNova, which was the weakest SRAM competitor).
Kinda funny that Meta bought Rivos. And Cerebras, where I am biased, is now in a very interesting and highly strategic position as the last independent SRAM player (per public knowledge), one that was ahead of Groq on all public benchmarks. Groq’s “many-chip” rack architecture, however, was much easier to integrate with Nvidia’s networking stack, perhaps even within a single rack, while Cerebras’s WSE almost has to be an independent rack.
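A rough back-of-envelope sketch of the tradeoff described in the post (decode speed set by memory bandwidth; SRAM costing more per token because of smaller batches). It assumes decode is purely bandwidth-bound, so every generated token streams all model weights from memory once and that one weight pass is shared by every user in the batch; KV-cache traffic, compute limits, and interconnect are ignored. The `Accelerator` class, both helper functions, and every number (bandwidth, $/hour, batch sizes, model size) are hypothetical illustrations, not vendor specs or anyone's actual cost model.

```python
from dataclasses import dataclass


@dataclass
class Accelerator:
    """A hypothetical inference system; all numbers are illustrative, not vendor specs."""
    name: str
    mem_bandwidth_tb_s: float  # effective memory bandwidth, TB/s (assumed)
    cost_per_hour: float       # all-in cost of the system, $/hour (assumed)


def per_user_tokens_per_s(acc: Accelerator, model_gb: float) -> float:
    """Upper bound on decode speed for one user when decode is bandwidth-bound.

    Each generated token streams every weight from memory once
    (KV-cache traffic and compute limits are ignored).
    """
    return acc.mem_bandwidth_tb_s * 1000.0 / model_gb


def cost_per_million_tokens(acc: Accelerator, model_gb: float, batch_size: int) -> float:
    """Serving cost when one weight pass is shared by `batch_size` concurrent users.

    Assumes per-user speed is unchanged by batching, which roughly holds
    only while decode stays bandwidth-bound.
    """
    aggregate_tokens_per_s = per_user_tokens_per_s(acc, model_gb) * batch_size
    return acc.cost_per_hour / (aggregate_tokens_per_s * 3600.0) * 1e6


if __name__ == "__main__":
    model_gb = 70.0  # e.g. a 70B-parameter model at 8 bits per weight (hypothetical)
    systems = [
        (Accelerator("HBM-style (hypothetical)", 8.0, 10.0), 64),
        (Accelerator("SRAM-style (hypothetical)", 80.0, 100.0), 4),
    ]
    for acc, batch in systems:
        print(
            f"{acc.name}: {per_user_tokens_per_s(acc, model_gb):,.0f} tok/s per user, "
            f"${cost_per_million_tokens(acc, model_gb, batch):.2f} per 1M tokens at batch {batch}"
        )
```

With these made-up inputs, the SRAM-style system is 10x faster per user but 16x more expensive per token, which is the speed-vs-throughput-per-dollar tradeoff in miniature. Real numbers would shift both ratios.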

Presenting UAP Class 1 – Tetra. This class is characterized by a tumbling or rotation-like motion, observed mid-air without any visible means of propulsion. Coordinated formations are also common, appearing consistently across both electro-optical (EO) and infrared (IR) sensors. It’s one of the most reliably observed classes to date, and often where the most pressing questions start. Learn more here → skywatcher.ai/research/tetra