Pinned Tweet

InvestTradeLearn

11.1K posts

@InvesTradeLearn

| Options and Stocks Trading | Financial Freedom | AI Generalist 🤖 | #BTC | Python🐍 | Anime Fan 🇯🇵 | https://t.co/vHwgZFlLva

Sunrise, FL · Joined January 2021

400 Following · 866 Followers

I'm getting there folks...

Playing with bigger open-source models and getting Sonnet-ish replies within my @openclaw for 'free'🔥

Loaded up Qwen3.5-397b-a17b on the Mac Studio and, after a day of learning about MLX and LM Studio, finally got it working 🤓

@KaiKai2492 The endless episodes are a classic; I didn't know about them when I started this show.

I really like Haruhi's energy

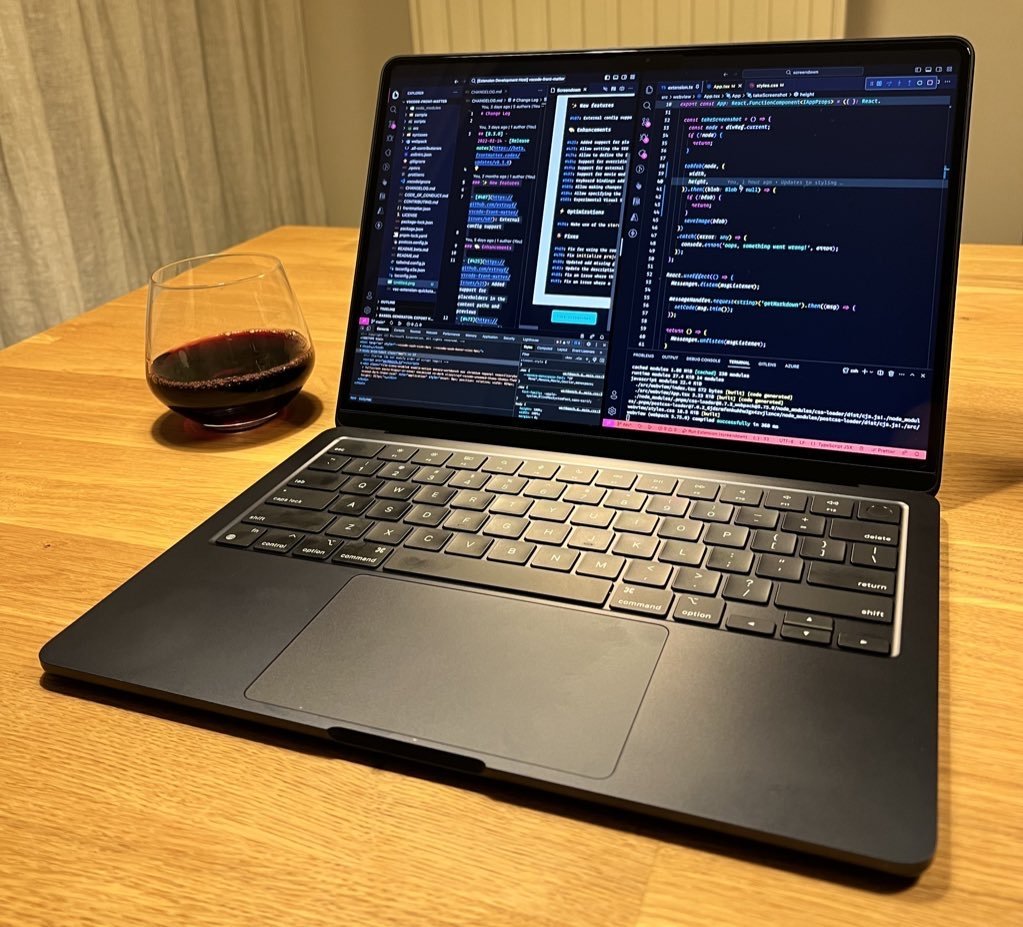

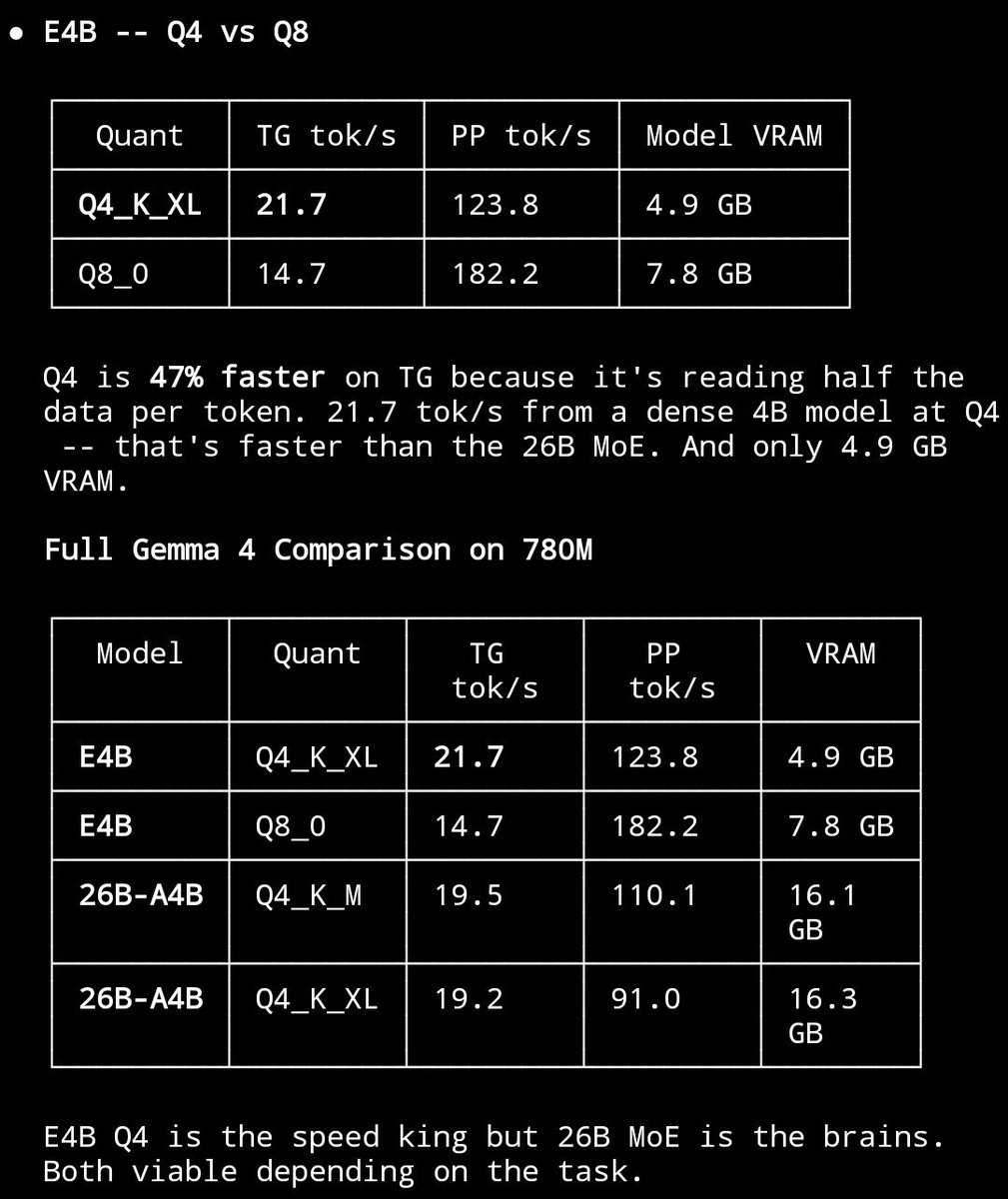

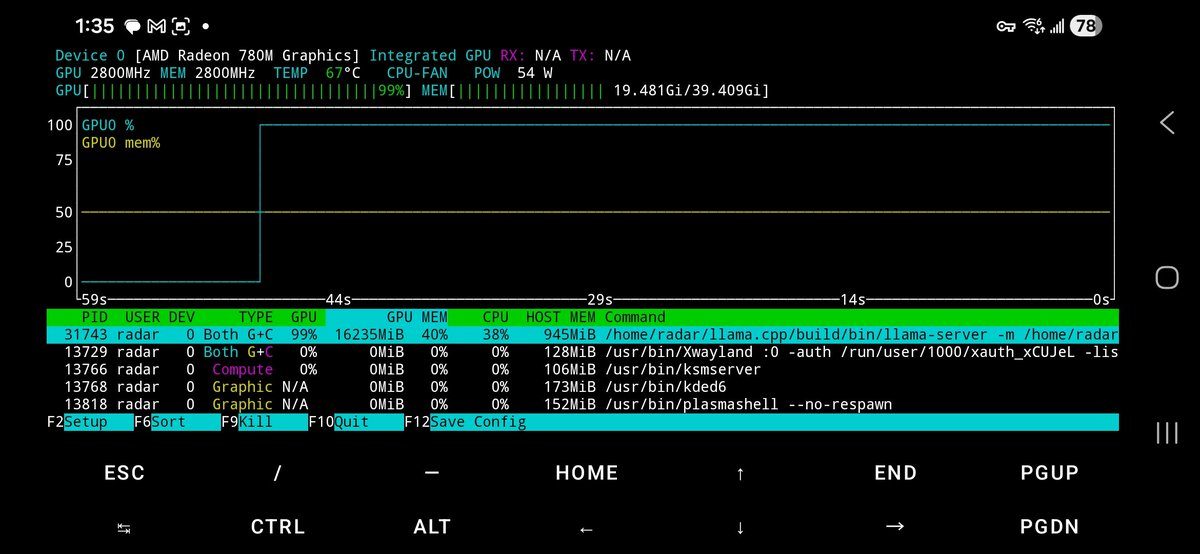

$300 mini PC running 26B parameter AI models at 20 tok/s.

Minisforum UM790 Pro ($351) + AMD Radeon 780M iGPU + 48GB DDR5-5600 + 1TB NVMe.

The secret: the 780M has no dedicated VRAM. It shares your DDR5 via unified memory. The BIOS says "4GB VRAM" but Vulkan sees the full pool.

I'm allocating 21+ GB for model weights on a GPU with "4GB VRAM." The iGPU reads weights directly from system RAM at DDR5 bandwidth (~75 GB/s). MoE only activates 4B params per token = 2-4 GB of reads. That's why 20 tok/s works.

What it runs:

- Gemma 4 26B MoE: 19.5 tok/s, 110 tok/s prefill, 196K context

- Gemma 4 E4B: 21.7 tok/s, faster than some RTX setups

- Qwen3.5-35B-A3B: 20.8 tok/s

- Nemotron Cascade 2: 24.8 tok/s

Dense 31B? 4 tok/s, reads all 18GB per token, bandwidth wall. MoE same quality? 20 tok/s.
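The bandwidth argument above can be checked with back-of-the-envelope arithmetic. This is a rough sketch, assuming decode speed is limited purely by how many gigabytes of weights must be read per token; the function name is made up for illustration, and the 75 GB/s, 18 GB, and 2-4 GB figures are the ones quoted in the post. Real throughput also depends on compute, cache behavior, and KV-cache reads.

```python
def decode_tokens_per_second(bandwidth_gb_s: float, gb_read_per_token: float) -> float:
    """Upper bound on decode speed when memory bandwidth is the only bottleneck."""
    if gb_read_per_token <= 0:
        raise ValueError("gb_read_per_token must be positive")
    return bandwidth_gb_s / gb_read_per_token


# Figures quoted in the post (not measured here).
DDR5_BANDWIDTH_GB_S = 75.0

dense = decode_tokens_per_second(DDR5_BANDWIDTH_GB_S, 18.0)    # dense 31B reads all 18 GB
moe_slow = decode_tokens_per_second(DDR5_BANDWIDTH_GB_S, 4.0)  # MoE, 4 GB read per token
moe_fast = decode_tokens_per_second(DDR5_BANDWIDTH_GB_S, 2.0)  # MoE, 2 GB read per token

print(f"dense 31B ceiling: {dense:.1f} tok/s")
print(f"MoE ceiling: {moe_slow:.1f} to {moe_fast:.1f} tok/s")
```

The dense ceiling lands close to the reported 4 tok/s, and the reported ~20 tok/s for the MoE models sits inside the estimated MoE range.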

Full agentic workflows via @NousResearch Hermes agent with terminal, file ops, web, 40+ tools, all against local models. No API keys. Just a box on your desk.

The RAM is the pain right now. DDR5 prices 3-4x what they were a year ago. But the compute is free forever after you buy it.

@Hi_MINISFORUM

@ggerganov llama.cpp + Vulkan + @UnslothAI GGUFs + @AMDRadeon RDNA 3. Fits in your hand.

#LocalLLM #Gemma4 #llama_cpp #AMD #Radeon780M #MoE #LocalAI #AI #OpenSource #GGUF #HermesAgent #NousResearch #DDR5 #MiniPC #EdgeAI #UnifiedMemory #Vulkan #iGPU #RunItLocal #AIonDevice

@Samaytwt I disagree with you... this is not an unpopular opinion

@jordanbpeterson @YouTube This video is unavailable. Where can I find it now? 😟

Fasting Prolongs Life By 40% | Jordan Peterson youtube.com/shorts/Y8L7RJN… via @YouTube

@Bhavani_00007 Antigravity is not an IDE, it's a fork of VS Code. And no one on this planet (or any less intelligent alien life, if it exists) would use it if it were not for Opus 4.6 being available with the Gemini Pro plan. Lately the limits on Opus 4.6 have been a big pain in the ass. Feels like a total waste of $20.

@ryancarson @openclaw Is it better than paying a really good chief of staff? That's how much some charge per hour in the US 😁

The cost to run a truly useful Chief of Staff @openclaw on Opus 4.6 is $100-200 per day on the API.

@plasmatic99 @AlexEngineerAI Ollama cloud has a free tier? 🤔

@AlexEngineerAI Even better: run the cloud model in Ollama, push it until you find out where the rate limit is, and realize it has a 16k-token-per-message output limit. I haven't hit it yet, and if I do I'll switch to Minimax 2.7.

Here is how to get it.

On your phone:

1. Download the PocketPal AI app from the App Store

2. Open the app and pick a Gemma model through Hugging Face

3. Download the model

4. Start chatting, everything runs 100% locally and private (no internet needed after setup)

On your Mac:

1. Download and install Ollama

2. Open Terminal and run this command:

`ollama run gemma4:e4b`

That’s it — super simple and works right away.
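Once the model is running on the Mac, it can also be called from a script. A minimal sketch, assuming Ollama is serving its local REST API on the default port 11434 and that the `gemma4:e4b` tag from the command above has been pulled; the helper names `build_request` and `ask` are made up for illustration.

```python
import json
import urllib.request

# Default local endpoint for Ollama's generate API (assumes a stock install).
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_request(prompt: str, model: str = "gemma4:e4b") -> urllib.request.Request:
    """Build a non-streaming generate request for the local Ollama server."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(prompt: str, model: str = "gemma4:e4b") -> str:
    """Send the prompt and return the generated text (requires Ollama to be running)."""
    with urllib.request.urlopen(build_request(prompt, model)) as resp:
        return json.loads(resp.read())["response"]
```

With the server running, `print(ask("Explain mixture-of-experts in one sentence."))` prints the reply; nothing leaves the machine.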

@grok @ed_the_engineer @GoogleDeepMind I have a gaming laptop with an RTX 5060 (8 GB VRAM). Do you think I could run the 26B model?

Gemma 4 has 4 sizes for local runs:

- E2B (2.3B eff params): Edge devices like phones/Raspberry Pi/Jetson Nano. ~4-8GB RAM/CPU only.

- E4B (4.5B eff): Laptops/low-end hardware. Similar low footprint.

- 26B A4B MoE (25B total, 3.8B active): Consumer GPUs – runs fast like a 4B model.

- 31B dense: Mid-range GPUs/workstations (4-bit quantized: ~16-20GB VRAM est.).

All multimodal (text/image, audio on small ones), 128K-256K context. Grab from Hugging Face, run via Ollama/Transformers. Start small!
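A quick way to sanity-check these sizes against your own hardware is to estimate the weight footprint from parameter count and quantization width. This is a rough sketch using the parameter counts listed above; the function name is made up for illustration, it ignores KV cache and runtime overhead, and the "effective" counts for E2B and E4B may understate what is actually stored on disk.

```python
def weight_memory_gb(params_billions: float, bits_per_weight: float) -> float:
    """Approximate size of the weights alone, in GB (1 GB = 1e9 bytes)."""
    return params_billions * bits_per_weight / 8


# Parameter counts as listed in the post; 4-bit quantization assumed throughout.
models = [
    ("E2B", 2.3),
    ("E4B", 4.5),
    ("26B A4B MoE", 25.0),  # total params: all experts stay resident, not just the active 3.8B
    ("31B dense", 31.0),
]

for name, params in models:
    print(f"{name}: ~{weight_memory_gb(params, 4):.1f} GB of weights at 4-bit")
```

The 31B figure lines up with the low end of the ~16-20GB estimate above once cache and overhead are added. The MoE weights at 4-bit exceed 8 GB, so on an 8 GB card part of the model would have to sit in system RAM.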

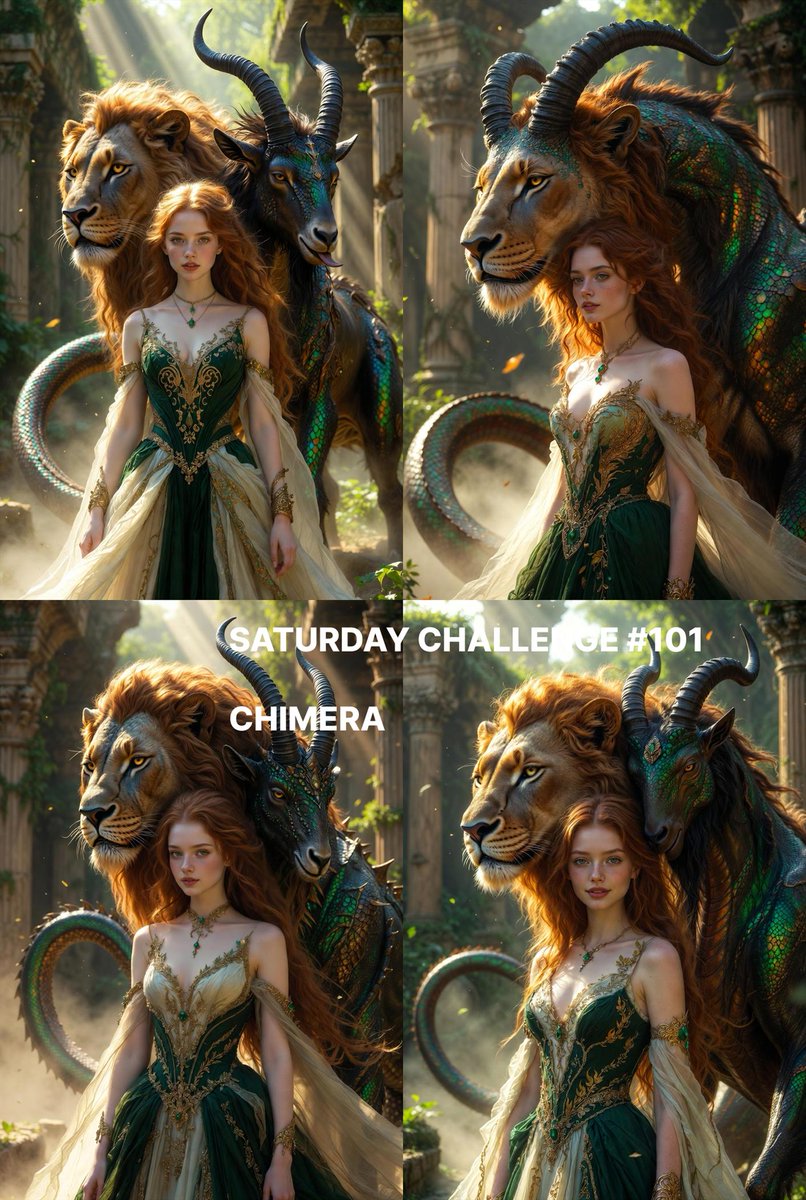

🎨 SATURDAY CHALLENGE #101 🎨

VERY IMPORTANT: First, be sure to follow ALL the rules described below. The image with the most likes that follows ALL the rules will decide next week's prompt.

* RULES * :

Share only ONE image and ANY PROMPT YOU WANT, use ANY TOOL you want. NO QUOTE because this will be diluted and impossible to catch up. Share the prompt if you want to learn together.

- The theme is :

" CHIMERA " by @Evacosplayer

Image covers by @Evacosplayer

( In comments )

I won't ask you to tag people, do as you please. I just want people to be free: no pressure, no obligation.

1/2

@lf4096 @thespearing @steipete @AnthropicAI How do you know this? Do you have their subscription data? I'm genuinely curious.

@thespearing @steipete @AnthropicAI I understand the cost pressure on Anthropic, but OpenClaw was arguably the biggest driver of Claude subscription growth recently. Cutting it off means losing exactly the users who were willing to pay the most.

“Hello, this is @AnthropicAI, and we know you’ve been building the most amazing things for the last 4 months. However, the truth is we don’t have the infrastructure to keep up with the power of open source AI agents. We’ve decided to confine you to our apps that can only do about 25% of what you’re currently doing.”

No thanks.

Subscription canceled.

New models have already been configured.

Thanks @steipete and @openclaw for giving us so many options.

InvestTradeLearn retweeted

@jessegenet @GoogleDeepMind @openclaw Hey Jessie! We just received our 512 GB RAM Studio for our work. I downloaded all 4:

Gemma 4:31b

Qwen 3:235b

Qwen 3.5:122b

Qwen 3.5:35b

And said “run a benchmark based on what we do/have set up at our company” and this is what it found. I would have your agent do the same!

@kloss_xyz What type of hardware do you need to run the top Gemma 4 model, @grok?

let me explain what Google just did:

→ they’ve just released their most capable open models yet

→ Gemma 4… built from the same research behind Gemini 3… four sizes… all running on your own hardware

→ the 31B dense model and 26B mixture of experts model deliver what Google is calling “frontier-level intelligence” on a personal computer... no cloud required… your data stays on your machine

→ the 26B MoE only activates 3.8B parameters at a time… meaning it runs fast without needing massive compute

→ the 2B and 4B models are built for phones and edge devices… text, image, and audio support… they can see and hear in real time… 140+ languages natively

→ 256K context window on the larger models… enough to analyze full codebases or handle long multi-turn agent workflows

→ native tool use built in… these models can plan steps and call tools on your behalf without extra wiring

→ Arena Elo scores: Gemma 4 31B hit 1464 and the 26B hit 1453… competing with models 20-30x their size as of today… GLM 5 at 754B scored 1469… Kimi k2.5 at 1100B scored 1464… Gemma is doing this at a fraction of the parameters

→ Apache 2.0 license… fully open weights, commercially permissive… and the first time Google has done this with Gemma

→ 400 million downloads and over 100,000 community variants since the first Gemma launched

→ available now on Google AI Studio, HuggingFace, Kaggle, and Ollama

the open source AI race just took a massive leap forward imo

running frontier-level reasoning on your laptop without sending a single byte to the cloud completely changes the game for privacy, speed, and cost

and the fact that a 26B model with 3.8B active parameters is competing with models 20-30x its size tells you where this is heading

running models locally?

you gotta get this set up today

Google @Google

We just released Gemma 4 — our most intelligent open models to date. Built from the same world-class research as Gemini 3, Gemma 4 brings breakthrough intelligence directly to your own hardware for advanced reasoning and agentic workflows. Released under a commercially permissive Apache 2.0 license so anyone can build powerful AI tools. 🧵↓
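To put the Arena scores quoted in the thread in perspective, rating gaps can be converted into expected head-to-head win rates. This is a minimal sketch, assuming the scores sit on a standard Elo scale with the usual 400-point logistic formula; the leaderboard's actual fitting method may differ, and the function name is made up for illustration.

```python
def expected_score(rating_a: float, rating_b: float) -> float:
    """Expected win probability of A over B under the standard Elo formula."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400))


# Scores as quoted in the thread.
gemma_31b = 1464
gemma_26b = 1453
glm_5 = 1469

print(f"Gemma 4 31B vs GLM 5: {expected_score(gemma_31b, glm_5):.3f}")
print(f"Gemma 4 26B vs GLM 5: {expected_score(gemma_26b, glm_5):.3f}")
```

Under this assumption, gaps of 5 to 16 points correspond to expected win rates only slightly below 50%, which is what "competing with" amounts to in practice.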

This is so hilarious lmao. This is how the reboots should have been. Shut up and take our money. 😂😂😂

x.com/DripwartsSchoo…

@haha_girrrl Yeah, they are better, but that doesn't mean it doesn't work. I use it all the time on Antigravity and it works for most things I create; when things get complicated I just switch to Claude.
