Andy Walters

2.6K posts

@andywalters

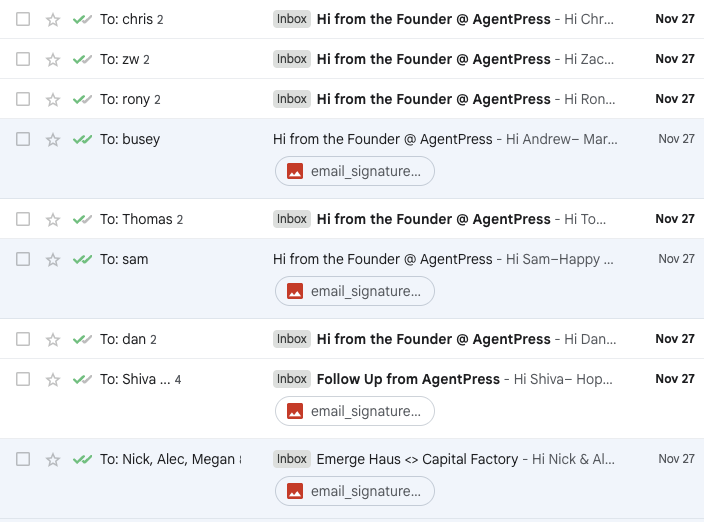

founder & ceo at https://t.co/PqCFXyWUb6 -- AI agents to grow SaaS businesses

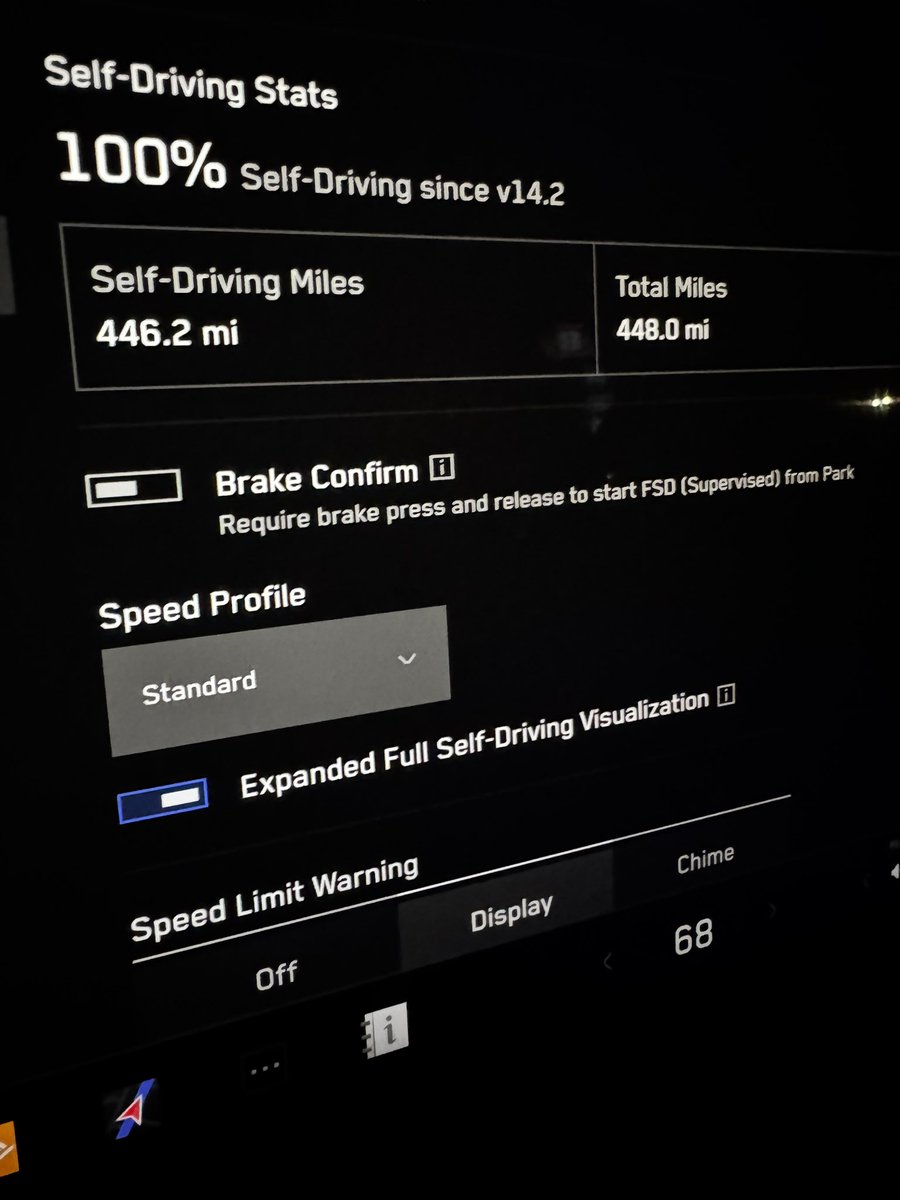

Tesla says FSD was off before Cybertruck crash — but the video tells a different story electrek.co/2026/03/18/tes… by @fredlambert

Deeply strange @nytimes article about @DavidSacks.
Leading in AI is good for America. And there is no way for America to lead in AI without American investors in AI doing well, irrespective of whether those investors are David’s friends or his enemies. And like everyone who has been in Silicon Valley for a long time, David has enemies in Silicon Valley who are also doing well by investing in AI.
The most disappointing part of the article is that there is an interesting debate to be had about the wisdom of selling deprecated GPUs to China that are 18 months ahead of Chinese domestic alternatives and roughly 15 months behind our state of the art. As someone who is an active investor in national defense and super patriotic, I think this is a good idea, but reasonable minds can disagree, and zero attempt was made to engage with the relevant issues.
From a conflict of interest perspective, I think the conflicts are being appropriately managed, and this has been to David’s economic detriment. His defamation attorneys’ letter to the NYTimes makes it clear that an exhaustive, good faith effort was made to divest from all potential conflicts. But it is quasi-impossible for David to fully divest from *every* company he and/or Craft has invested in that might *conceivably* benefit from good AI policy making. At the limit, theoretically every company in America and the American government itself (i.e. government bonds) benefit from good AI policy making.
I would guess that most of David’s assets are in private companies. If he were to leave the private sector entirely and put his assets into a blind trust, he would still know what he owns, as they are not liquid. Even if he were to do some dog and pony show of full divestment and a blind trust, does any reasonable person think he would not be able to walk back into Craft with his current economics intact? And everyone who is even remotely qualified to shape AI policy has the same theoretical conflicts of interest.
I am 100% ok with talented citizens being able to have a dual role in the government and the private sector. That is actually the entire point of the SGE program. I think there is an argument to be made that it promotes and incentivizes ethical behavior. The downside of malfeasance for David is enormous and there is minimal upside relative to what he already has.
Separately, the @nytimes urgently needs to provide remedial math education for these journalists and their editors. The idea that 500,000 GPUs sold to the UAE could generate anywhere near $200 billion in revenue to Nvidia is ridiculous. I look forward to the correction that will be assiduously posted to the @NYTimesPR account which has 90k followers vs. the main account with 52.8m followers.
I should note that while I do not know David well, we have many good friends in common and I like him personally. More importantly, I am grateful for his service, which has unquestionably cost him a vast amount of money. And my superstar sister-in-law is a partner at Craft, for which David is lucky.