Matthew Gregory

3.5K posts

@Autonomy_CEO

Founder of https://t.co/UugAFHqPpp Previously: @Ockam @Azure @Heroku @wunderground @theoceanrace @americascup @Umich

San Francisco, CA · Joined May 2012

192 Following · 1.3K Followers

Matthew Gregory retweeted

If you want agent-powered products that can run safely in real production environments, this model is worth digging into 🔗 bit.ly/4qfB5Yw

Matthew Gregory retweeted

We worked with @autonomy_comp to show a better pattern with 1Password: • just-in-time access • least privilege by default • no standing creds • no hardcoded secrets

@edsim @levie @Ockam You miss a critical feature for Agent-to-Agent communication:

Real-time

These communications will be created and shut down in real time. Basically, these are serverless systems/functions.

We've solved that too. @Ockam's cold starts in ms.

ockam.io/for/serverless…

@edsim @levie It's a solved problem.

@Ockam does all of this. It's not even AI agent specific, it can connect anything, anywhere, to everything else.

ex.

ockam.io/blog/secure-ll…

Matthew Gregory retweeted

Agent to Agent communication between software will be the biggest unlock of AI. Right now most AI products are limited to what they know, what they index from other systems in a clunky way, or what existing APIs they interact with.

The future will be systems that can talk to each other via their Agents. A Salesforce Agent will pull data from a Box Agent, a ServiceNow Agent will orchestrate a workflow between Agents from different SaaS products. And so on.

We know that any given AI system can only know so much about any given topic. The proprietary data for most tasks or workflows is often housed in multiple apps that one AI Agent needs access to.

Today, the de facto model of software integrations in AI is one primary AI Agent interacting with the APIs of another system. This is a great model, and we will see 1,000X growth of API usage like this in the future. But it also means the agentic logic is assumed to all roll into the first system. This runs into challenges when the second system can process the request in a far wider range of ways than the first Agent can anticipate.

This is where Agent to Agent communication comes in. One Agent will do a handshake with another Agent and ask that Agent to complete whatever tasks it’s looking for. That second Agent goes off and does some busy work in its system and then returns with a response to the first system. That first agent then synthesizes the answers and data as appropriate for the task it was trying to accomplish. Unsurprisingly, this is how work already happens today in an analog format.

Now, as an industry, we have plenty to work out, of course. Firstly, we need a better understanding of what any given Agent is capable of and what kind of tasks you can send to it. Latency will also be a huge challenge, as one request from the primary AI Agent will fan out to other Agents, and you will wait on those other systems to process their agentic workflows (over time this just gets solved with cheaper and faster AI). And we also have to figure out seamless auth between Agents and other ways of communicating on behalf of the user.

Solving this is going to lead to an incredible amount of growth of AI Agents in the future. We’re working on this right now at Box with many partners, and excited to keep sharing how it all evolves.

Matthew Gregory retweeted

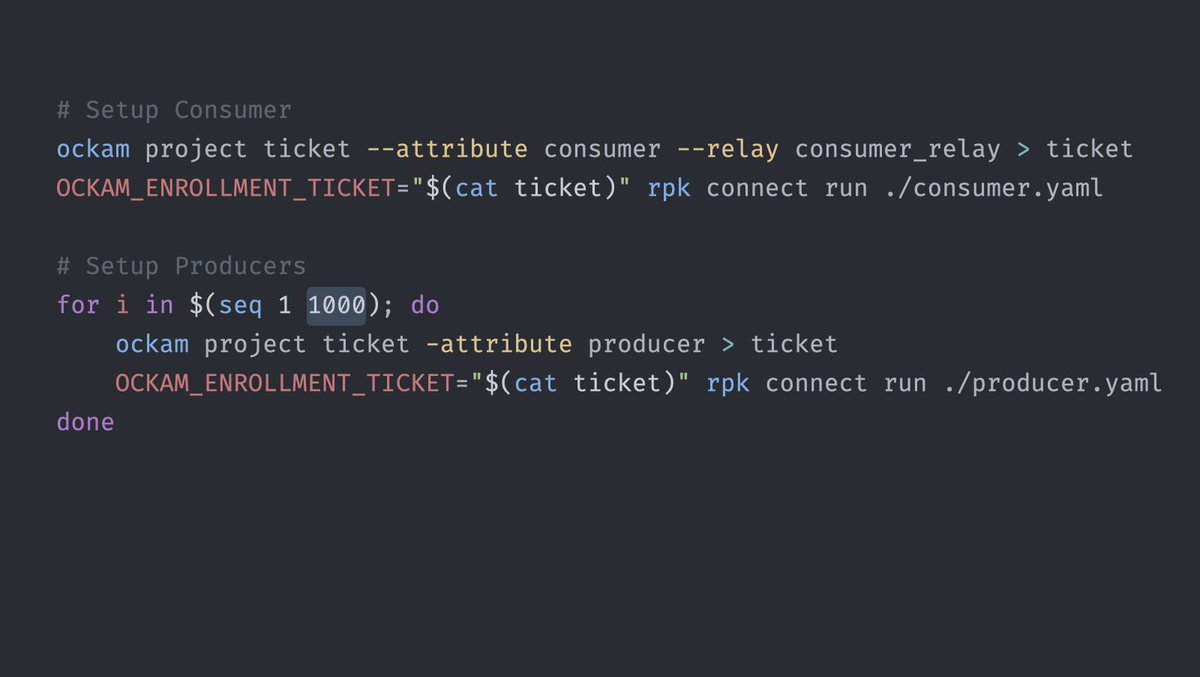

Snowflake <> Kafka, in minutes.

ockam.io/blog/snowflake…

It is unwise to publicly expose your Kafka brokers to the Internet. It paints a giant public target on your most sensitive business data. Teams that value the security of their customers keep their Kafka private.

This, however, creates the challenge of how to move data between private Kafka and other systems like Snowflake. Ockam makes it trivial to create end-to-end encrypted, very high-throughput, secure portals to private Kafka brokers. Your Kafka could be anywhere: in a data center, in a private VPC, in any cloud, in a private Kubernetes cluster, in a BYOC deployment, etc.

Here is an example:

The @SnowflakeDB team shared with us how many of their customers are unable to securely and easily move insights from analyses in Snowflake to other operational systems.

These enterprise customers have tried all sorts of janky ways to make it work – from punching insecure holes in their firewalls to elaborate multi-region private links that took them many months to set up.

We worked with Snowflake to create a simple Snowflake Native App. This app uses Ockam to securely connect Snowflake with private Kafka. It then enables Change Data Capture (CDC) from any Snowflake table to any Kafka topic. A few simple clicks and you have insights streaming from Snowflake to private Kafka anywhere. Safely and securely.

Matthew Gregory retweeted

🔥 INTRODUCING SOVEREIGN AI - Private, traceable, and secure #AI for the #enterprise

The need to share sensitive data with third parties combined with obscure AI traceability deters enterprises from adopting AI. Enter Sovereign AI, which allows enterprises to leverage the latest AI models on their own hardware and without sending sensitive data outside their own network.

🚀 Ship the model to data for end-to-end transparency and ownership of your data while delivering low-latency, real-time inferencing on your own hardware.

🔎 Trace AI lineage to get a comprehensive audit trail for state-of-the-art LLMs, all the way to the source.

🔒 Secure by design so you can launch and govern large-scale systems with the highest level of integrity, authentication, and authorization at your fingertips.

Want to leverage cutting-edge AI without ever sharing your data? Sign up for a preview of Sovereign AI👇

ai.redpanda.com/?utm_source=li…

Matthew Gregory retweeted

We've all heard that "less is more," but this time "more is less" 👀

With Redpanda Connect, #developers can quickly connect to and unlock #data silos, while @Ockam provides the mutual authentication and end-to-end #encryption that zero-trust streaming data apps require.

"Secure #streamingdata is now simple to set up, trivial to maintain, and almost impossible to mess up." - Matthew Gregory, @Ockam_CEO

Less complexity. Less stress 🌴

Read all about it👇

redpanda.com/blog/ockam-red…

@clintgibler @Mandiant Everyone keeps talking about MFA in this breach. It's a red herring.

How about we don't make APIs to DBs public to the internet?!

Here's the right way to solve this problem:

github.com/build-trust/oc…

❄️ @Mandiant's reporting on the Snowflake Customer Breaches

Financially motivated threat actor

Uses stolen credentials obtained via infostealer malware

~80% of victims had prior credential exposure

~165 exposed organizations

cloud.google.com/blog/topics/th…

Matthew Gregory retweeted

Redpanda Connect with @Ockam empowers a single developer to easily create end-to-end encrypted streaming pipelines that can connect hundreds of applications across clouds and hybrid infrastructure.

hackernoon.com/ockam-and-redp…

#data #cybersecurity #datasecurity

Matthew Gregory retweeted

Really important point.

Conversations about end-to-end encryption often focus on the confidentiality property.

Ockam Secure Channels guarantee end-to-end data authenticity, integrity, & confidentiality. The managed service cannot forge, tamper with, or see any message.

Dino A. Dai Zovi @dinodaizovi

@mrinal @0xdade Another good point to make is that without e2ee topics, the pub/sub cluster is a single point of security failure that can observe and/or forge any message.

Matthew Gregory retweeted

Couldn’t agree more with @dinodaizovi & @0xdade in the quoted thread below.

An example: Ockam + Redpanda to create end-to-end encrypted, Kafka-compatible data streams in seconds.

Left: A Kafka consumer that has the keys.

Right: A Kafka consumer that doesn't have the keys.

Dino A. Dai Zovi @dinodaizovi

The security architecture that will make cybersecurity tractable is one where users control cryptographic keys and permit decryption of their data while they are interacting with a service provider. Authenticating to that service provider should be of limited utility w/o keys.

Matthew Gregory retweeted

Today, we announced the world's first Zero Trust Streaming Platform, a partnership between @RedpandaData & @Ockam

Redpanda Connect with Ockam:

Create end-to-end encrypted, highly scalable, data streams between 230+ sources and sinks of business data, in seconds.

Details👇

Matthew Gregory retweeted

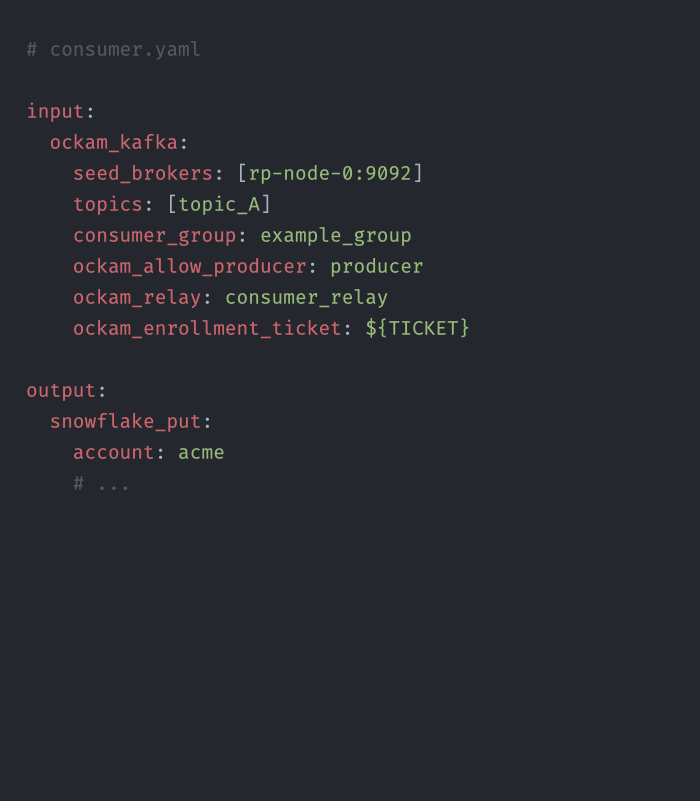

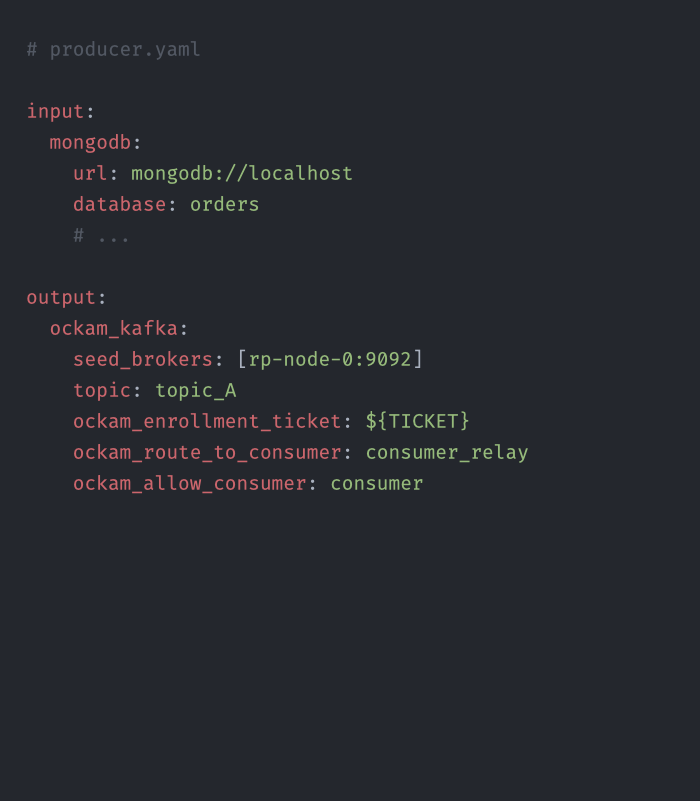

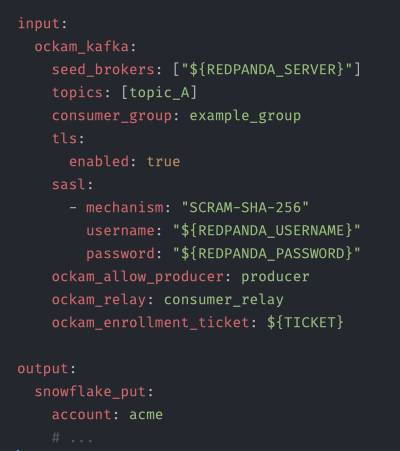

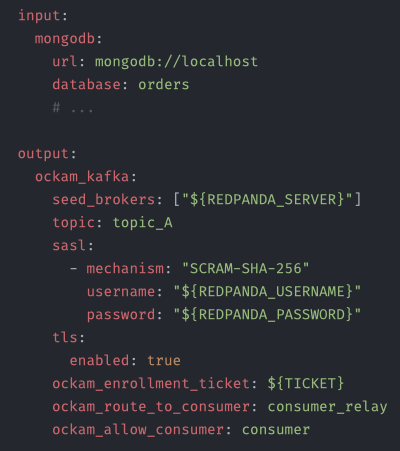

A developer’s experience is dead simple.

For example, the screenshots show all the configuration you have to write to create end-to-end encrypted data streams from @MongoDB to @SnowflakeDB

Ockam and Redpanda take care of all the hard parts. 🧵…

Matthew Gregory retweeted

@langchain @cohere @MongoDB Because it’s end-to-end encrypted, you can use it with managed Kafka-compatible services in the cloud, like Redpanda Serverless, without increasing risk to sensitive business data or private customer data.

Add a couple of lines of extra YAML and you’re done:

Matthew Gregory retweeted