Refuel

@RefuelAI

Solve enterprise data tasks at superhuman accuracy. Acquired by @togethercompute

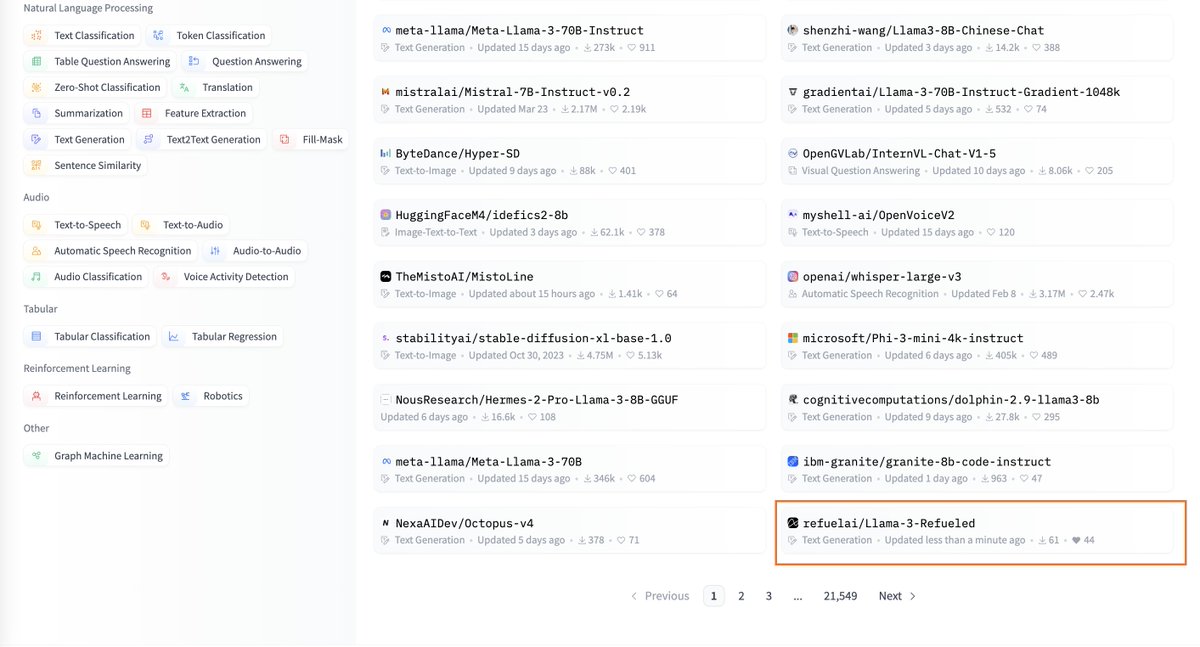

Thrilled to introduce RefuelLLM-2, our latest family of LLMs built for data labeling and enrichment tasks.

RefuelLLM-2 (83.82%) outperforms GPT-4-Turbo (80.88%), Claude-3-Opus (79.19%), Llama3-70B (78.2%) and Gemini-1.5-Pro (74.59%) on a benchmark of ~30 data labeling tasks. RefuelLLM-2-small (79.67%), aka Llama-3-Refueled, outperforms all comparable LLMs, including Claude3-Sonnet (70.99%), Haiku (69.23%) and GPT-3.5-Turbo (68.13%).

We’re open-sourcing the model: huggingface.co/refuelai/Llama…

You can try out the models and give us feedback here: labs.refuel.ai/playground

The code and data used for benchmarking the LLMs are available in our Autolabel library: github.com/refuel-ai/auto…

One more thing: the RefuelLLM-2 family of models outputs much better calibrated confidence scores, a useful lever to reject, retry or ensemble low-confidence outputs.
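To make the "reject, retry or ensemble" idea concrete, here is a minimal Python sketch of how a confidence score could drive those decisions. It does not use the Autolabel API or any Refuel endpoint: the function names `route` and `ensemble`, the thresholds 0.8 and 0.5, and the sample labels are all hypothetical, chosen only for illustration.

```python
from collections import defaultdict

# Hypothetical thresholds; in practice they would be tuned on a labeled holdout set.
ACCEPT_AT = 0.8
RETRY_AT = 0.5


def route(confidence, accept_at=ACCEPT_AT, retry_at=RETRY_AT):
    """Decide what to do with one model output based on its confidence score."""
    if confidence >= accept_at:
        return "accept"
    if confidence >= retry_at:
        return "retry"   # e.g. re-prompt, or send to a second model
    return "reject"      # e.g. send to human review


def ensemble(predictions):
    """Confidence-weighted vote over a non-empty list of (label, confidence) pairs."""
    totals = defaultdict(float)
    for label, confidence in predictions:
        totals[label] += confidence
    # Return the label with the largest summed confidence.
    return max(totals, key=totals.get)


if __name__ == "__main__":
    print(route(0.93))
    print(route(0.60))
    print(route(0.20))
    print(ensemble([("spam", 0.9), ("ham", 0.4), ("ham", 0.3)]))
```

This kind of routing is only as good as the calibration of the scores: a threshold of 0.8 is meaningful only if outputs scored near 0.8 are correct roughly 80% of the time.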
