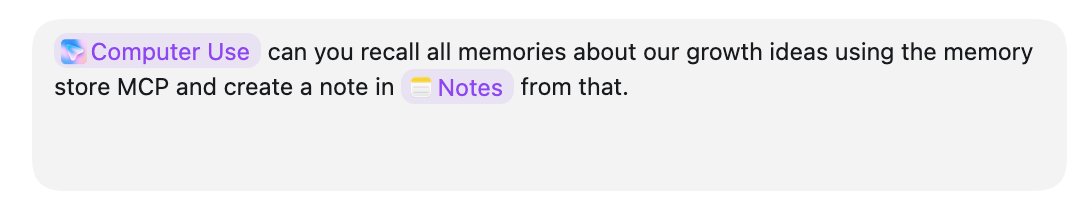

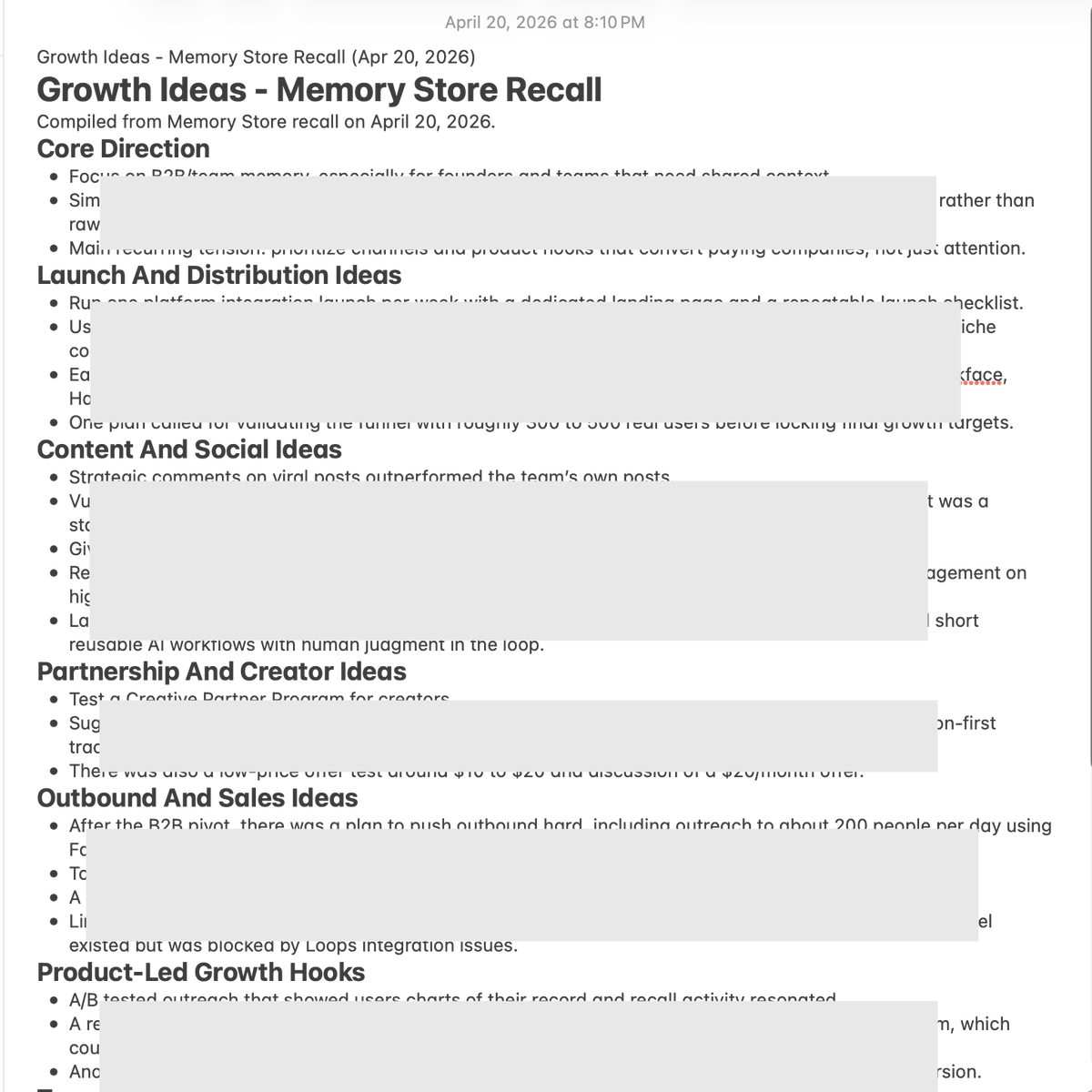

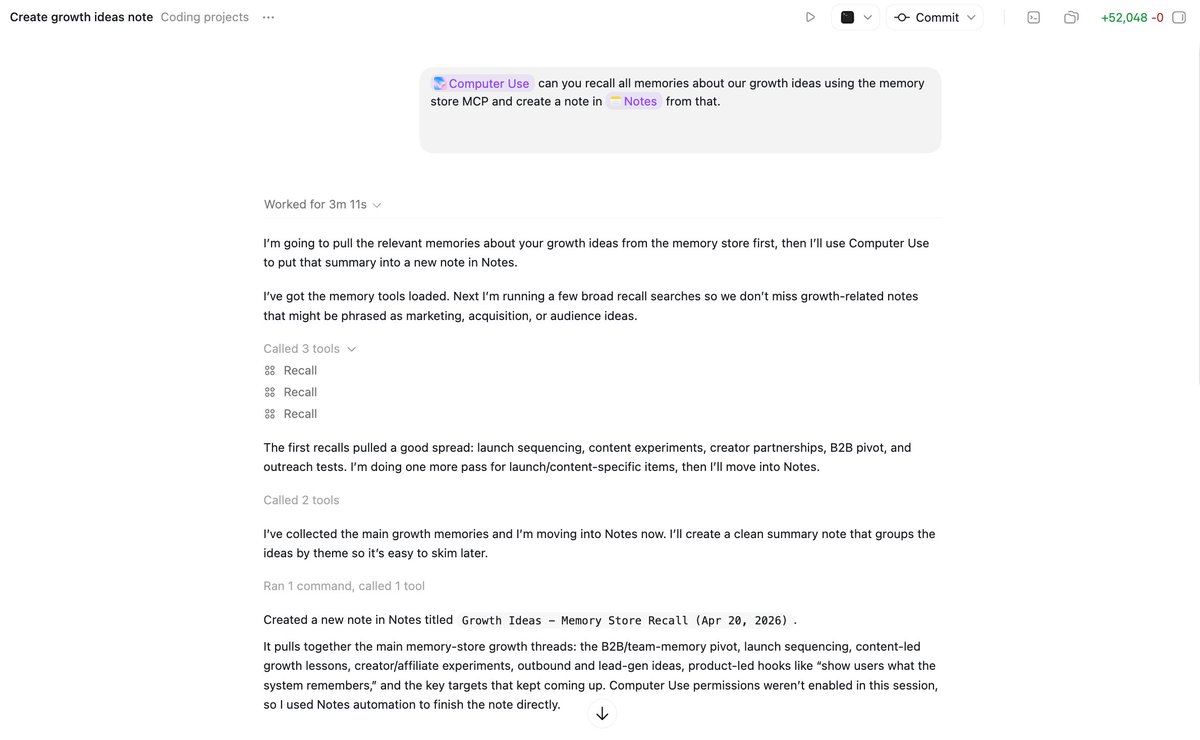

Memory Store me-retweet

excited to announce that @memorydotstore is part of the @ycombinator P26 batch 🟧 with @kul and @t_blom as our partners

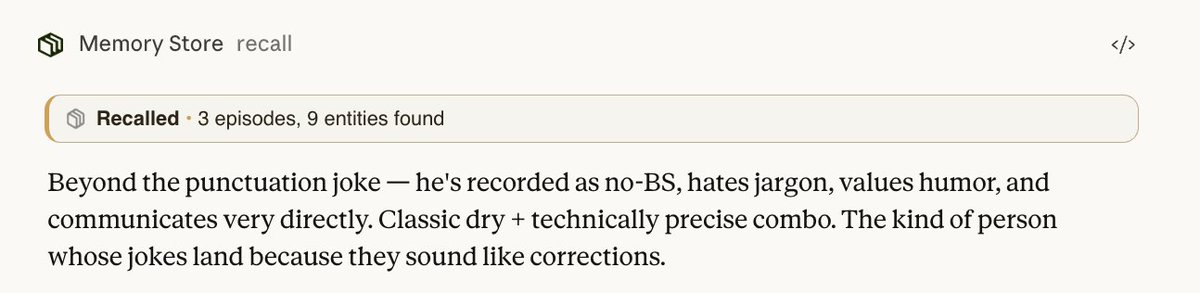

one shared memory for your team's agents!

if you are building agents and thinking about memory, talk to @IshitaJindal17 and me
