Greg Kamradt (@GregKamradt)

Today we're launching ARC-AGI-3

135 Novel Environments (nearly 1K levels) that we built by hand

It is the only unsaturated agent benchmark in the world

Each game is 100% human-solvable, yet AI scores <1%

This gap between human and AI performance proves we do not have AGI

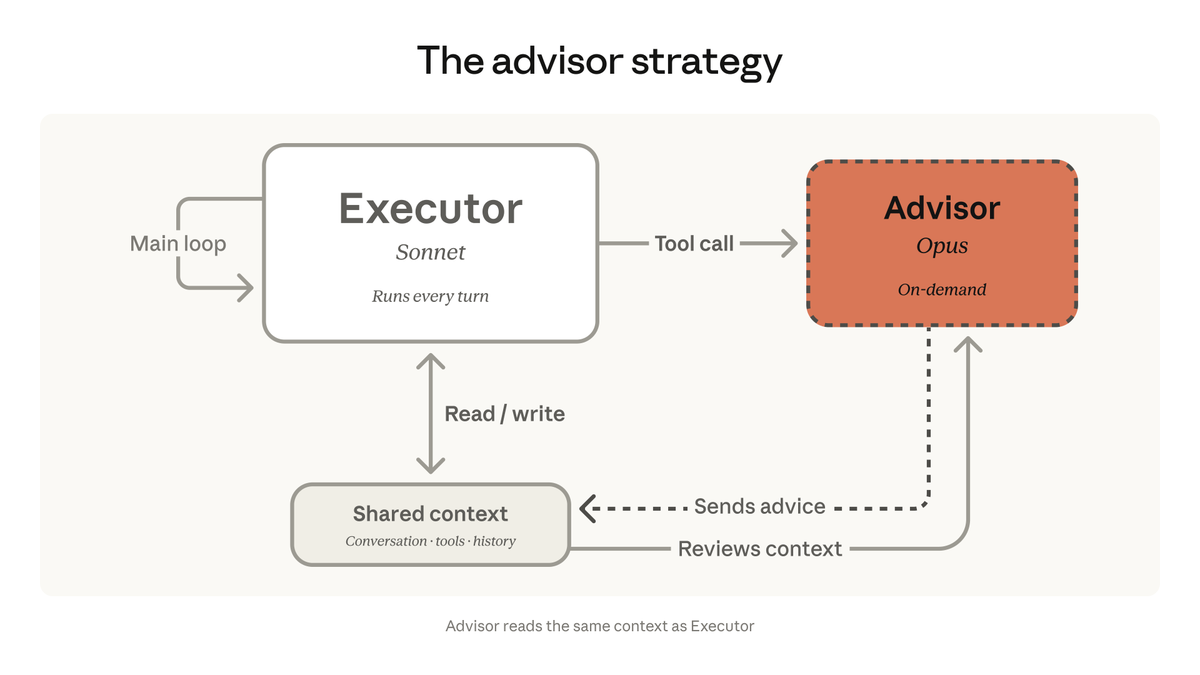

Agents today need human handholding. Agents that beat V3 will prove they don’t need that level of supervision.

Agents that beat V3 will demonstrate:

* Continual learning - Each level builds on the ones before it. You can’t beat level 3 without carrying forward what you learned in levels 1 and 2.

* World modeling - Many of the environments require planning many actions ahead. AI will have no choice but to build an internal world model of how the environment works, run simulations “in its head”, and only then commit to an action.
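To make the world-modeling point concrete, here is a minimal sketch of planning "in its head". Everything in it is an assumption for illustration: the 4x4 grid, the `WALLS` layout, and the `model_step` and `plan` helpers are hypothetical and are not part of ARC-AGI-3 or its API. The agent never touches the real environment while planning; it only queries its own model of the transitions and searches ahead with breadth-first search.

```python
from collections import deque

# Hypothetical toy world (NOT an ARC-AGI-3 environment): a 4x4 grid with walls.
SIZE = 4
WALLS = {(1, 1), (1, 2), (2, 1)}
MOVES = {"up": (-1, 0), "down": (1, 0), "left": (0, -1), "right": (0, 1)}


def model_step(state, action):
    """The agent's internal model: predict the next state without acting."""
    dr, dc = MOVES[action]
    nxt = (state[0] + dr, state[1] + dc)
    in_bounds = 0 <= nxt[0] < SIZE and 0 <= nxt[1] < SIZE
    # Bumping into a wall or the edge leaves the agent where it was.
    return nxt if in_bounds and nxt not in WALLS else state


def plan(start, goal):
    """Breadth-first search over imagined states; returns a shortest action list."""
    frontier = deque([(start, [])])
    seen = {start}
    while frontier:
        state, actions = frontier.popleft()
        if state == goal:
            return actions
        for action in MOVES:
            nxt = model_step(state, action)
            if nxt not in seen:
                seen.add(nxt)
                frontier.append((nxt, actions + [action]))
    return None  # goal unreachable under the current model


actions = plan((0, 0), (3, 3))
print(actions)
```

In this toy the model is given and exact. The hard part in a novel environment is that the agent has to learn `model_step` from its own interaction and revise it when predictions fail.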

In our early testing, we’ve seen a few clear failure modes of AI:

* Anticipation of future events - If an environment requires the AI to set up a scene and then carry out a scenario (as in sp80), it starts to break down.

* Anchoring on an early hypothesis - Early in a game the AI comes up with a hypothesis (even a wrong one) and refuses to update its beliefs later.

* Thinking it’s playing another game - The AI assumes it’s playing chess or Pac-Man. The pull of the training data is strong!

One major problem is that there is too much data to carry forward in a single context. Models must learn what to remember and what to forget

The agent that beats ARC-AGI-3 will have provided the most authoritative evidence of progress towards general intelligence to date

We're excited to get this out and excited to see what you think