Alex

@AgenticPM

Fintech Product Manager / Posting my discoveries along the AI journey

Today, we're taking Manus out of the cloud and putting it on your desktop. Introducing My Computer, the core feature of the new Manus Desktop app. It’s your AI agent, now on your local machine.

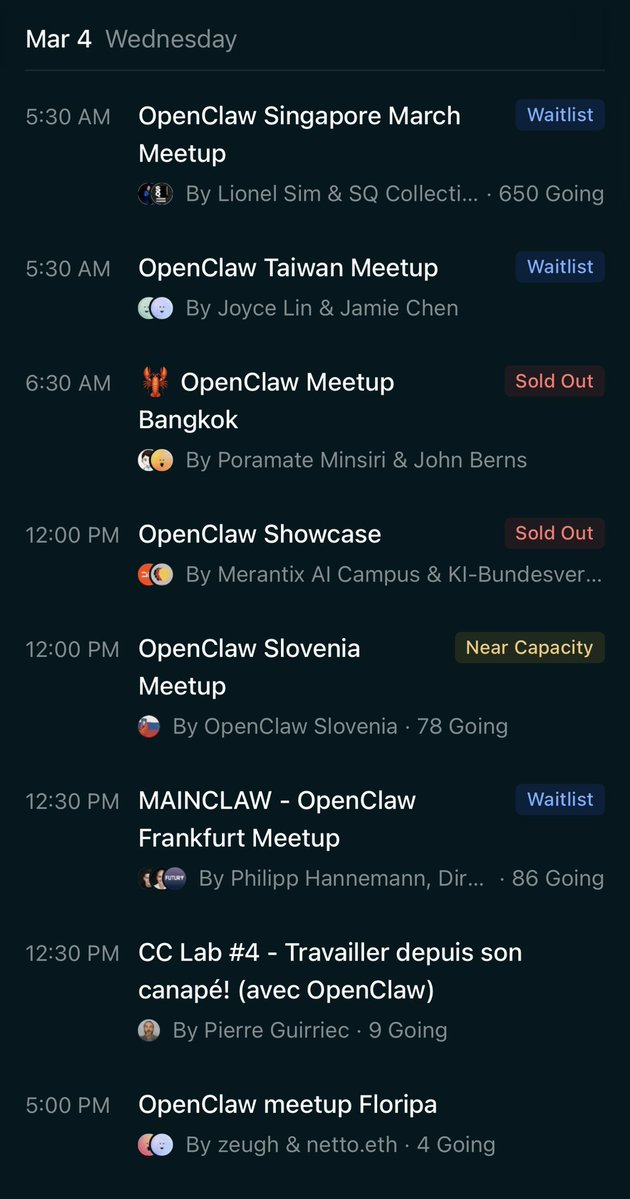

This keeps impressing me. So many awesome builders! luma.com/claw

wow insane amount of slop affecting search and deep research outputs

my current reality with OpenClaw: I want to use it more. I know it's the future. But it's so much less productive than just using Claude Code and Codex. Doesn't mean I'm not using it. And more importantly, I'm trying to build things with it: make it more resilient, make it more of a real business tool. But it's pushing a boulder up the hill. Anyone telling you that you just install it and have a 24/7 always-on agent doing tons of shit for you is misleading you. It's a ton of work, it breaks a lot, it forgets all sorts of shit. But it's the future. We're early, and it's the right time to put in the reps.

Cal AI has been acquired by MyFitnessPal 🚨 Henry and I started Cal AI as 17-year-old high school students with one mission: make calorie tracking easier with AI. In just 18 months, we’ve helped millions of people lose millions of pounds. And we broke $50m in ARR along the way. We are at an incredible inflection point in history where ANYBODY can build a product that can improve lives and make millions. As founders, we get a lot of praise. The truth is that this would not have been possible without our incredible 30+ person team. We are so proud of what this team has accomplished, and are thankful to everyone who has been instrumental in Cal AI’s development and success. Cal AI will continue as a separate app from MyFitnessPal. The combined team will share resources to continue helping people achieve their fitness goals!

Perplexity just became the first AI company to truly go head-to-head with the Bloomberg Terminal... Using Perplexity Computer (with no local setup or single-LLM limitation), it was able to build me a terminal with real-time data to analyze $NVDA using Perplexity Finance:

should i sell my iphone 16 pro to buy a mac mini?

Major Claude Code policy clarification from Anthropic: "Using OAuth tokens obtained through Claude Free, Pro, or Max accounts in any other product, tool, or service — including the Agent SDK — is not permitted"