Jason Toevs

@JasonToevs

CTO at UP 🤝 prev: built AI for Adobe, NBC & PGA Tour → | 🏆 2 Exits, 44 Fails 🪦 | Ethical AI + Farm Roots 🌾 | Helping leaders align tech with values.

This video was edited entirely by Claude Code. I just gave it the files. If 50 people comment, I'll share the exact setup.

Wow! AI-ASSISTED GARAGE MANUFACTURING IS ABOUT TO EXPLODE! CAD Drawings From Just A Picture!

MIT just released something profound for creators and engineers alike. Picture this: you take a photo of an object, upload it, and an AI delivers a fully parametric CAD model, complete with editable code and construction history.

This is GenCAD, an open-source model from MIT's Decode Lab. It uses autoregressive transformers and diffusion models trained on hundreds of thousands of images and CAD files. Input a 2D photo or sketch; output valid CadQuery Python code that beats models like GPT-4.5 in accuracy.

Why does this matter? It speeds up reverse engineering, prototyping, and part searches in vast databases. No more hours spent modeling from scratch. Field repairs, custom designs, education: all transformed. It can even retrieve similar parts from libraries of thousands. For industries like manufacturing and aerospace, it cuts costs and boosts innovation. Hobbyists gain pro tools without the steep learning curve.

I'm testing it now on random objects and can't believe what a superpower this is. I could start dozens of companies on this AI model alone.

This open-source gem is here: gencad.github.io

The future of building stuff arrives in a snapshot.

In the 24 hours since we opened the TrustMRR API, people have built 20+ apps on top of it. AI has made the idea-to-product loop almost instant. We are going to see so many crazy things in 2026. It's just the beginning. I will post all the wrappers below. Tag yourself if I forget.

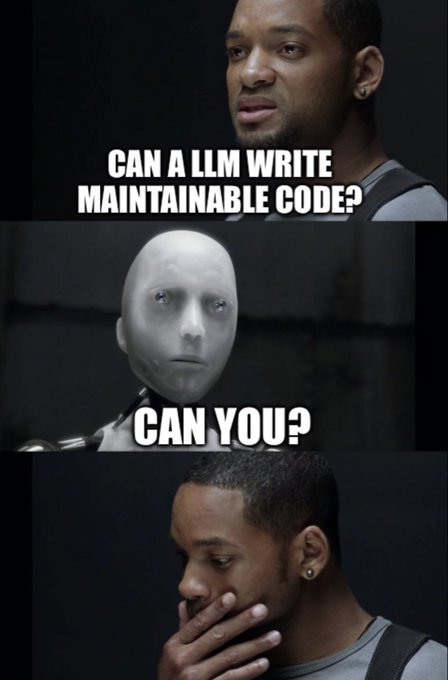

Me reviewing code generated by Claude before pushing to prod

AI is making coding fun again for so many people. So many stories like this 🥹

We’re excited to announce our $6M seed round, led by @GeneralCatalyst, with @ycombinator, @paulg, @dharmesh, @pcopplestone, @karim_atiyeh, @taro_f and others participating. Every AI Agent needs its own email inbox.