Rossco Paddison

4.9K posts

@rosscopaddison

Founder of A Player Labs. 💥 DM to collab or chat!

Global Citizen · Joined September 2010

1.4K Following · 4.9K Followers

Rossco Paddison retweeted

@rosscopaddison @chamath @8090_Factory The self-aggrandizing, buzzword-ridden response you came up with sure confirms it

Manufacturing has SOPs, manuals, and systems.

Knowledge work has… "Ask Steve, he knows how it works."

That's not a process. That's a single point of failure wearing a lanyard.

One of Software Factory’s key selling points is its ability to absorb tribal knowledge and give companies tools to manage their evolving knowledge and make it available to all of their employees.

@JamesonCanada @chamath @8090_Factory If you call helping 14 different companies, each with hundreds of employees, build production-grade software in the age of AI “fiddling around”

Then yep, I’m an LLM fiddler!

Steve doesn’t know the cognitive tasks he goes through to get the outcome either.

I made a prompt for this, I call it the operator simulator:

“Think out loud every step an A Player [role type] is going through to do [specific] job to achieve [explicit] outcome.

Write it like a transcript from inside their mind: every step, trade-off, and decision they are making in real time. Keep it stream of consciousness; it can be cleaned up into a deterministic process afterwards.”
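
The prompt above has three slots to fill in. A minimal sketch of how it could be wrapped as a reusable template, assuming Python string formatting; the names `OPERATOR_SIMULATOR` and `build_operator_prompt` and the example values are hypothetical, not part of the original post:

```python
# Hypothetical template for the "operator simulator" prompt.
# The three placeholders mirror [role type], [specific] and [explicit].
OPERATOR_SIMULATOR = (
    "Think out loud every step an A Player {role} is going through "
    "to do {job} to achieve {outcome}.\n\n"
    "Write it like a transcript from inside their mind: every step, "
    "trade-off, and decision they are making in real time. "
    "Keep it stream of consciousness; it can be cleaned up into a "
    "deterministic process afterwards."
)


def build_operator_prompt(role: str, job: str, outcome: str) -> str:
    """Fill the three slots; reject empty values so no slot is left vague."""
    for name, value in (("role", role), ("job", job), ("outcome", outcome)):
        if not value.strip():
            raise ValueError(f"{name} must not be empty")
    return OPERATOR_SIMULATOR.format(role=role, job=job, outcome=outcome)


# Example values are made up for illustration.
prompt = build_operator_prompt(
    role="support engineer",
    job="triaging an escalated billing ticket",
    outcome="a resolved ticket with a documented root cause",
)
print(prompt)
```

The resulting string can be pasted into any chat model; the transcript it returns is the raw material for the deterministic process mentioned in the prompt.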

@chamath @8090_Factory But also I built a cannibalising formula that takes this and makes it deeply deterministic.

I was inspired by Gary Klein’s knowledge engineering work for this.

@chamath One comment: growth investing might not die. The lifecycle is shorter, so the ROI horizon might be too.

Instead of ten-year carries we might be looking at 3-4 years

I think they just speed up…

@TheCraigHewitt @grok what kind of tokens per second on this setup on a Mac mini?

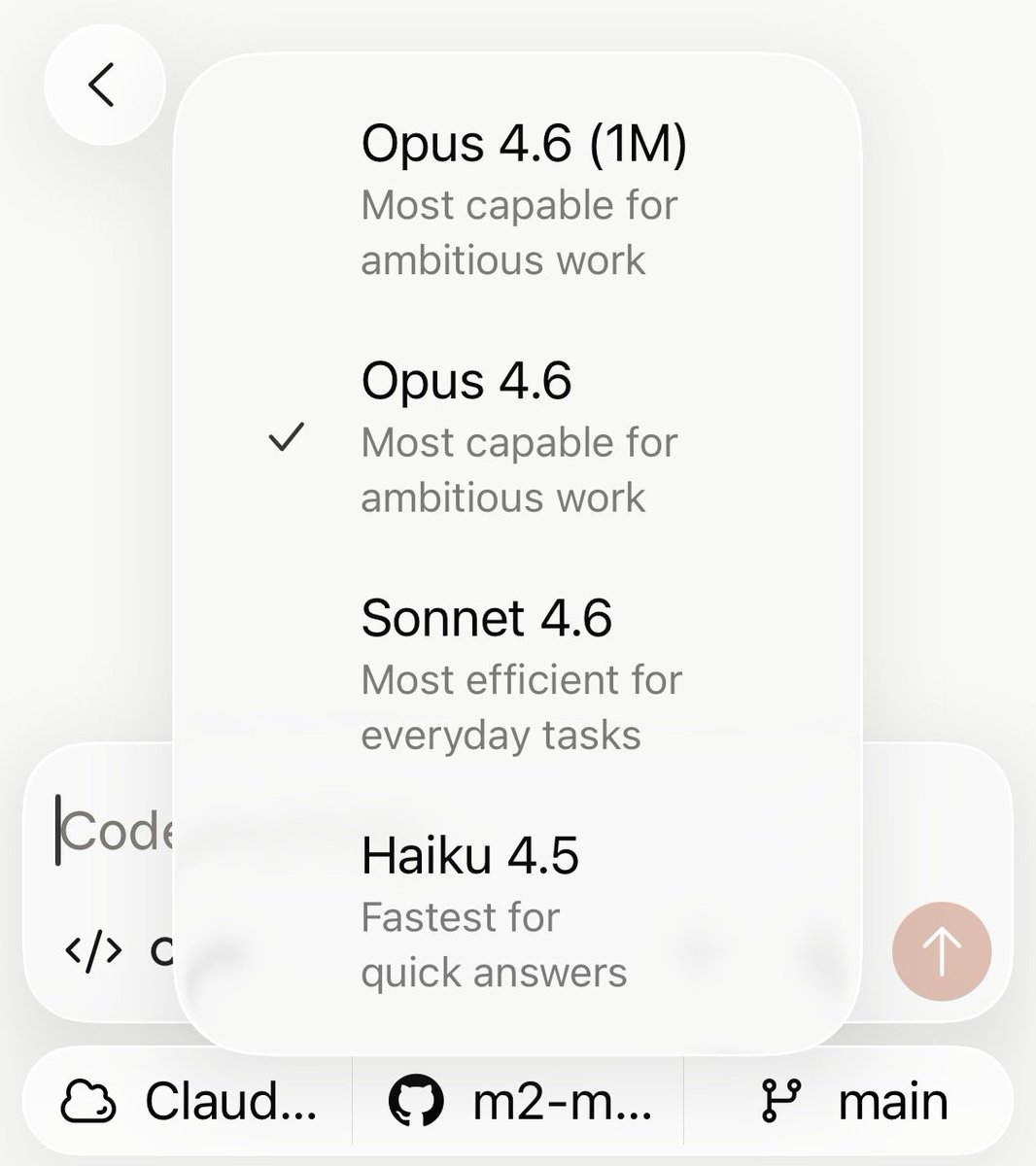

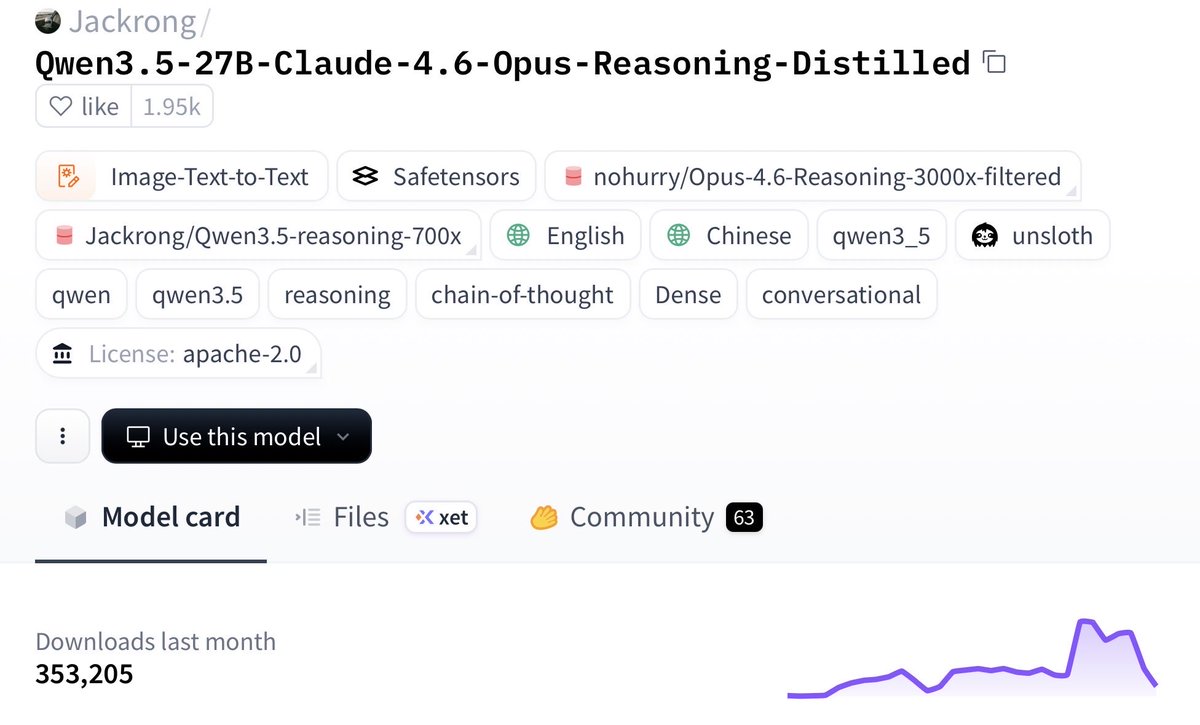

Very bullish on open source and local models

Imagine running a near-Opus-level model locally on that $600, 16GB Mac Mini you bought last month

This 27B Qwen3.5 distill was trained on Claude 4.6 Opus reasoning traces and is putting up real numbers:

- beats Claude Sonnet 4.5 on SWE-bench

- keeps 96.91% HumanEval

- cuts CoT (chain of thought) bloat by 24%

- runs in 4-bit quantization

Why this matters:

local agent loops get a lot cheaper, faster, and more usable.

frontier models aren’t going to keep subsidizing cheap tokens on subscriptions forever

300K+ downloads already on HF

Link below 👇🏻

We’re early
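
A rough back-of-the-envelope check on the 27B, 4-bit, 16GB claim above. This sketch counts only the raw weights and assumes every parameter is stored at the stated bit width; it ignores quantization metadata (scales), the KV cache, activations, and memory used by the OS, so real usage will be higher. The function name `weight_memory_gb` is hypothetical:

```python
def weight_memory_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate size of the raw weights in GB (1 GB = 1e9 bytes)."""
    total_bytes = params_billion * 1e9 * bits_per_weight / 8
    return total_bytes / 1e9


fp16_gb = weight_memory_gb(27, 16)  # 16-bit weights
q4_gb = weight_memory_gb(27, 4)     # 4-bit quantized weights

print(f"27B at 16-bit: {fp16_gb:.1f} GB")  # 54.0 GB
print(f"27B at 4-bit:  {q4_gb:.1f} GB")    # 13.5 GB
```

Under these assumptions the 4-bit weights alone come to about 13.5 GB, which fits in 16 GB but leaves little headroom for context and the rest of the system.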

Rossco Paddison retweeted

Marc Andreessen says AI is the "silver bullet excuse" for companies laying people off, but most layoffs are actually due to higher interest rates and overstaffing during COVID:

"This entire labor displacement thing is 100% incorrect. It's completely wrong. It's classic zero-sum economics."

"It was the combination of the two—interest rates going to zero during COVID, and then the complete loss of discipline at all these companies when they went virtual and when employees just became an icon on a screen."

"What you have happening right now is that essentially every large company is overstaffed. We could debate how much—it's at least overstaffed by 25%. I think most large companies are overstaffed by 50%. A lot of them are overstaffed by 75%."

"And now they all have the silver bullet excuse—it's AI."

@pmarca with @HarryStebbings

Rossco Paddison retweeted

Tobi Lutke explains what the VCs who passed on Shopify got wrong

Tobi recounts pitching Shopify to VCs on Sand Hill Road a few years after founding Shopify.

Investors passed because they thought the addressable market was too small. At the time, there were about 40,000-50,000 online stores, and even if Shopify captured 50% of the market, that still wouldn’t be a venture-scale business.

When Tobi ran into the VC partner a few years ago, the partner asked Tobi what he missed (Shopify is valued at almost $100 billion today).

Tobi explained:

“You were actually correct, but what you didn’t realize was that Shopify was the solution to the very problem you identified. The reason there was only 40,000 online stores was because it was hard, expensive, and everyone who tried ran into all these brick walls of complexity, which Shopify, one after another, smoothed over and made simple to do.”

Tobi believes this is a common mistake:

“What a lot of free-market thinkers don’t understand is that between the demand and eventual supply lies friction. And I actually think that friction is probably the most potent force for shaping the planet that people just generally do not acknowledge… That was my theory when I turned my snowboard store into Shopify: there was a lot more people like me except there was too much friction which we needed to solve. And Shopify has proven out that every time we make the process simpler, there’s more consumption. At this point, we have a million merchants on Shopify, which is a mind-blowing number. So friction is a major component, and it’s something that software is uniquely good at reducing.”

Video source: @danmartell (2019)

Rossco Paddison retweeted

David Friedberg on Personal Agency in the Age of AI: "Stop Blaming Everyone Else"

"We never talk about responsibility.

We always talk about where the government failed us and where these companies f***ed us.

And we never talk about, what did we individually do wrong?

How did I individually choose to drink 100 sodas a week?

How did I individually choose to get my kids addicted to social media? Where the f*** was I as a parent?

We don't talk about our responsibility.

And by the way, this fundamentally addresses this point about human agency, which I think is more critical in this era than ever because AI is going to flood us with f*****g everything all the time, nonstop.

What we choose to do in a world where we're already getting everything, and how we choose to not take everything that's being offered to us, I think is a critical part of what's going to distinguish human success from human failure.

And it's gonna become more apparent in the future, and not everything is about liability, and not everything is about the government failing us.

It's about people making choices and we don't talk about it."

Rossco Paddison retweeted

"The winner of the AI race will not be the company with the smartest model.

It will be the company that is most effective at making the local hero—the teacher, the accountant, the community leader—ten times more powerful.

Because in the end, intelligence travels through systems, but adoption travels through people."

@heysakina on what she learned growing YouTube internationally and what it means for the AI race: a16z.news/p/the-sovereig…

Rossco Paddison retweeted

When I was consulting for @HBO Silicon Valley, zero-loss compression was the holy grail: Richard Hendricks chases that perfect middle-out algo that could shrink everything w/out breaking a single bit.

Google just did something even more practical for the AI era: TurboQuant compresses LLM key-value caches down to 3 bits per value using random orthogonal rotation + PolarQuant scalar quantization & optional 1-bit QJL residual correction.

=>> 6× memory reduction, up to 8× faster attention (on H100), & 0 degradation on LongBench, Needle-in-a-Haystack, and RULER for models like Gemma. No retraining, no calibration needed.

Fiction just got out-engineered by reality. 😅💚💚

Google Research@GoogleResearch

Introducing TurboQuant: Our new compression algorithm that reduces LLM key-value cache memory by at least 6x and delivers up to 8x speedup, all with zero accuracy loss, redefining AI efficiency. Read the blog to learn how it achieves these results: goo.gle/4bsq2qI
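
To make the "random orthogonal rotation + scalar quantization" idea above concrete, here is a toy sketch. It is not Google's TurboQuant, PolarQuant, or QJL: it uses a plain uniform 3-bit quantizer, a Gram-Schmidt rotation, and made-up data, and all function names are hypothetical. The usual motivation for rotating first is that it spreads a vector's energy across coordinates, so a single outlier does not dominate the quantizer's range.

```python
import math
import random


def random_orthogonal(d, rng):
    """Random d x d orthogonal matrix: Gram-Schmidt on Gaussian rows."""
    rows = []
    while len(rows) < d:
        v = [rng.gauss(0.0, 1.0) for _ in range(d)]
        for u in rows:  # remove components along rows already chosen
            dot = sum(a * b for a, b in zip(v, u))
            v = [a - dot * b for a, b in zip(v, u)]
        norm = math.sqrt(sum(a * a for a in v))
        if norm > 1e-9:  # skip degenerate draws
            rows.append([a / norm for a in v])
    return rows


def matvec(m, x):
    return [sum(a * b for a, b in zip(row, x)) for row in m]


def transpose(m):
    return [list(col) for col in zip(*m)]


def quantize(x, bits=3):
    """Uniform scalar quantization to integer codes in [0, 2**bits - 1]."""
    levels = (1 << bits) - 1
    lo, hi = min(x), max(x)
    step = (hi - lo) / levels or 1.0  # avoid a zero step for constant input
    codes = [round((v - lo) / step) for v in x]
    return codes, lo, step


def dequantize(codes, lo, step):
    return [lo + c * step for c in codes]


rng = random.Random(0)
d = 8
key = [rng.uniform(-1.0, 1.0) for _ in range(d)]  # stand-in for one cached key

q = random_orthogonal(d, rng)
rotated = matvec(q, key)                       # rotate
codes, lo, step = quantize(rotated, bits=3)    # store 3-bit codes
restored = matvec(transpose(q), dequantize(codes, lo, step))  # undo rotation

error = math.sqrt(sum((a - b) ** 2 for a, b in zip(key, restored)))
print("codes:", codes)
print("reconstruction error (L2):", error)
```

Because the rotation is orthogonal, it preserves lengths, so the reconstruction error in the original space equals the quantization error in the rotated space. Unlike the zero-degradation result reported above, this toy version is visibly lossy.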

Rossco Paddison retweeted