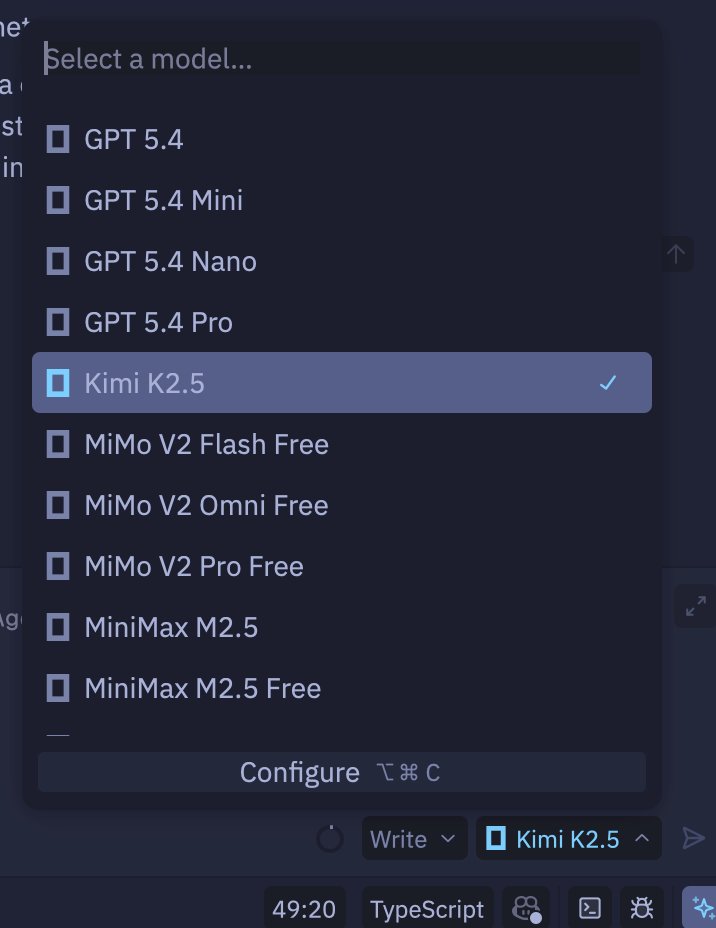

@opencode Is this on GO only? I added OpenCode Zen as an LLM provider for my Zen editor. I can see a lot of models, but not Kimi K2.6 (I see 2.5).

Also, I see some messages about DeepSeek, but I don't see it. I'm on Zen, not GO.

@opencode Yes. I had this problem two days ago and have been on glm 5.1 since then.

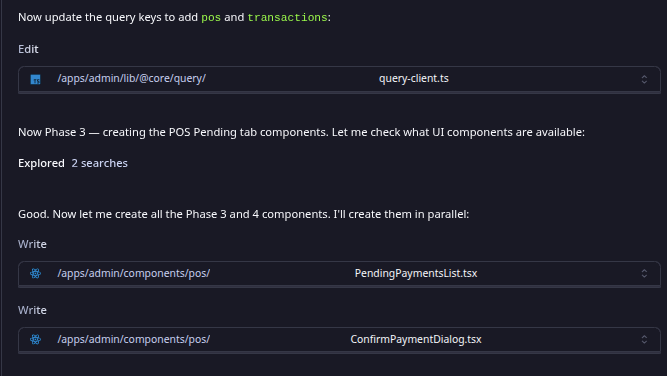

@opencode OpenCode in the terminal is editing files in plan mode.

I've had this issue with different LLMs, and it started happening when I started with OpenCode Go.

@opencode discovered deepseek v4 pro max because of this issue, not complaining

@opencode We have 4 providers for kimi 2.6 (llmgateway.io/models/kimi-k2…), so when one fails, we route to another automatically.

Try /connect and pick @llmgateway as the provider and paste your API key there.

We also have similar Go plans here: code.llmgateway.io

@opencode Honest request: you've done the 3x thing, but I used all my included usage before you started doing that, and now I can't even try DeepSeek because it's not on Zen. Any relief for people like me?

@opencode Can't use most of the models right now. Not great. I paid for it.

@opencode I've been having issues with the web UI since last night: it hangs and just sits there doing nothing. It was also having issues connecting to the ghostly terminal (restarted, updated, same issue). Sometimes it does what's in the picture below, and I'm not sure if it's even working. -nonexpanding

@opencode My only problem is that I use kimi 2.6 too much and my tokens don’t last

@opencode I was wondering why my model randomly stopped...

Glad it's been picked up!

@opencode Thanks! I thought it was only me.

@opencode You guys should look into making OpenCode available on Android and iOS.

@opencode This did hold up some work, but luckily we have DeepSeek V4 Pro.

@opencode The app hangs a lot on Windows, and it's even worse when using DeepSeek.

@opencode Reliability is the biggest hurdle for AI architectures right now. Multi-provider routing is definitely the right move to mitigate those server-side capacity limits. Redundancy is key for robust agentic workflows.

@opencode "Why does an AI model with a 1-million-token context window fail to support OpenCode and encounter errors specifically at the 250k token threshold when connected via a provider?"

@opencode provider incident messaging is underrated. naming the broken model and giving users the fallback path saves way more trust than pretending routing is invisible

@opencode Funny enough, the fallback model can bite you too if it lacks Kimi’s function‑calling support. Then you’re still stuck.

@opencode I paid for the Go plan, and I'm using MiniMax M2.5 Free, and it's amazingly good.

@opencode Ah, Kimi 2.6’s been flaky for me too—especially on Go syntax highlighting. Tried DeepSeek-Coder as a fallback, works decently. Any ETA on the fix? 🤞

@opencode is this resolved, and when grok?
