0xfabs

@0xfabs

doing devrel things @layerzero_core | AI-pilled

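A friend in Shenzhen snapped this scene of an in-person Openclaw installation event 😂 A classic "street promotion" moment of the AI era. The demand is just way too high 😂😂😂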

Why Zero? A conversation with @citsecurities, @The_DTCC, and @ICE_Markets. Speakers: - Dan Doney, CTO of DTCC Digital Assets - Marcin Sablik, Partner at Citadel Securities - Michael Blaugrund, VP of Strategic Initiatives at Intercontinental Exchange

The @figma MCP is insanely good. The first time I used it, it felt like AGI. Feed designs to the MCP, done. It reads frames, components, variables, and layout, and generates close to pixel-perfect code. I one-shotted my design in this 150-second video with @DevinAI.

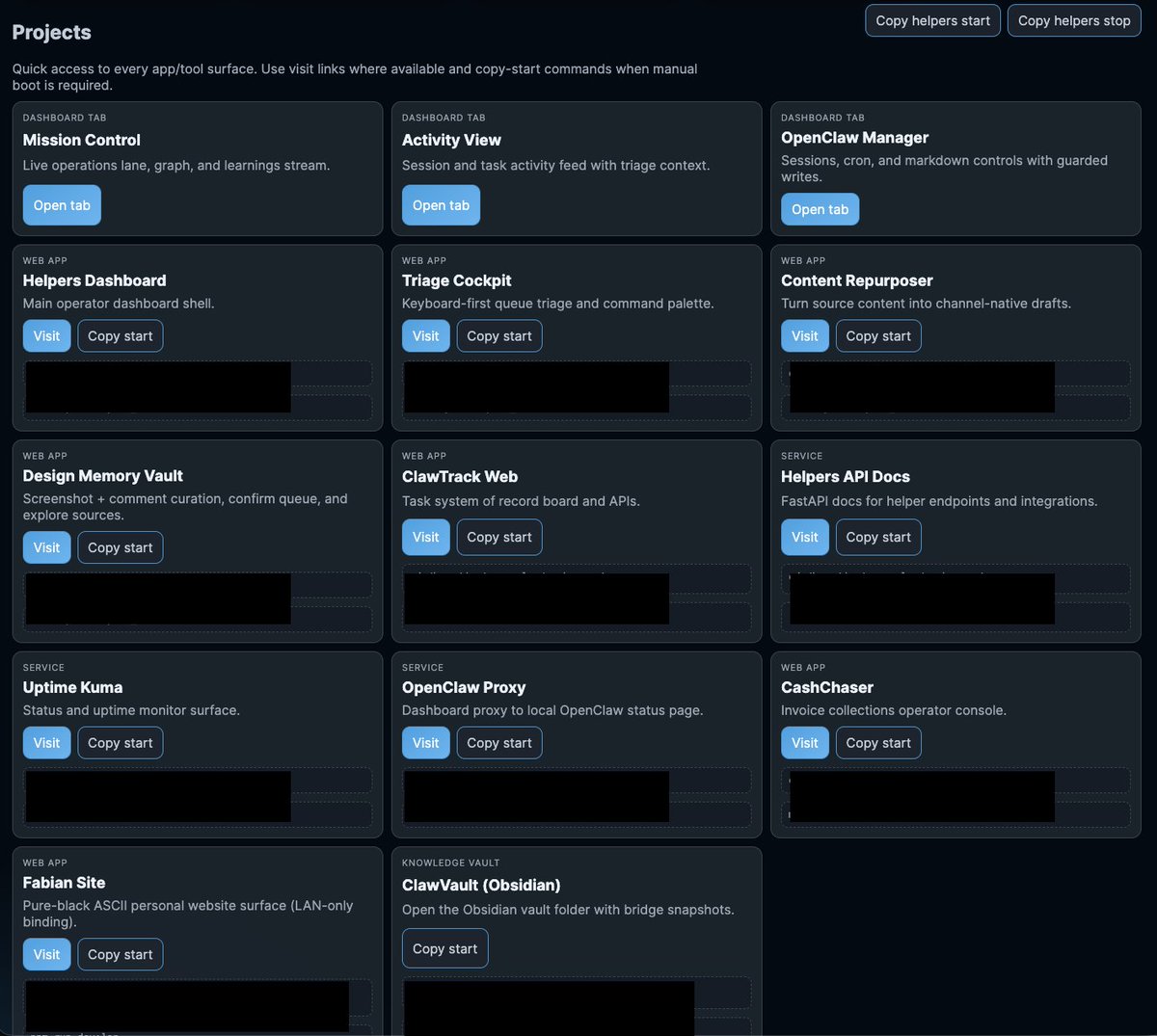

What Karpathy is describing is what I’ve been calling a personal operating system for months now. People are underestimating the impact these systems will have on the (digital) economy and self-sovereignty. It won’t take long until even non-technical people can just describe what they want and need, and get a working tool that helps them in their daily lives. You won’t need to spend hundreds of dollars a year across several apps and SaaS tools - tools you don’t own, built on terms you didn’t set. All you need to break the shackles is a subscription to an AI tool. The rest you build yourself, on your terms. And for those who want to go further: run it locally. Own the model. Own the data. No subscriptions, no terms of service, no company between you and your tools. That’s true self-sovereignty. The pace is faster than I ever could have imagined, and I am so looking forward to seeing what you’ll be able to do in 3 months.

Very interested in what the coming era of highly bespoke software might look like. Example from this morning - I've become a bit loosey-goosey with my cardio recently so I decided to do a more srs, regimented experiment to try to lower my Resting Heart Rate from 50 -> 45, over an experiment duration of 8 weeks. The primary way to do this is to aim for a certain total-minutes goal in Zone 2 cardio and 1 HIIT/week. 1 hour later I vibe coded this super custom dashboard for this very specific experiment that shows me how I'm tracking. Claude had to reverse engineer the Woodway treadmill cloud API to pull raw data, process, filter, debug it and create a web UI frontend to track the experiment. It wasn't a fully smooth experience and I had to notice and ask to fix bugs e.g. it screwed up metric vs. imperial system units and it screwed up on the calendar matching up days to dates etc. But I still feel like the overall direction is clear: 1) There will never be (and shouldn't be) a specific app on the app store for this kind of thing. I shouldn't have to look for, download and use some kind of a "Cardio experiment tracker", when this thing is ~300 lines of code that an LLM agent will give you in seconds. The idea of an "app store" of a long tail of discrete set of apps you choose from feels somehow wrong and outdated when LLM agents can improvise the app on the spot and just for you. 2) Second, the industry has to reconfigure into a set of services of sensors and actuators with agent native ergonomics. My Woodway treadmill is a sensor - it turns physical state into digital knowledge. It shouldn't maintain some human-readable frontend and my LLM agent shouldn't have to reverse engineer it, it should be an API/CLI easily usable by my agent. I'm a little bit disappointed (and my timelines are correspondingly slower) with how slowly this progression is happening in the industry overall. 99% of products/services still don't have an AI-native CLI yet. 99% of products/services maintain .html/.css docs like I won't immediately look for how to copy paste the whole thing to my agent to get something done. They give you a list of instructions on a webpage to open this or that url and click here or there to do a thing. In 2026. What am I, a computer? You do it. Or have my agent do it. So anyway today I am impressed that this random thing took 1 hour (it would have been ~10 hours 2 years ago). But what excites me more is thinking through how this really should have been 1 minute tops. What has to be in place so that it would be 1 minute? So that I could simply say "Hi can you help me track my cardio over the next 8 weeks", and after a very brief Q&A the app would be up. The AI would already have a lot of personal context, it would gather the extra needed data, it would reference and search related skill libraries, and maintain all my little apps/automations. TLDR the "app store" of a set of discrete apps that you choose from is an increasingly outdated concept all by itself. The future is services of AI-native sensors & actuators orchestrated via LLM glue into highly custom, ephemeral apps. It's just not here yet.

Chinese company Unitree makes history: The company's humanoid G1 robots have begun working in its factories to manufacture new robots! It's like something out of a science fiction movie, but it's reality today… Robots building robots under the supervision of the advanced UnifoLM-X1-0 AI model.