Fourth place: Sixth Sense for Security Guards This video monitoring system by @AbylayO and @AlsuOspan combines movement detection with multimodal reasoning, using Gemma 3n to distinguish benign events from genuine threats.

Abylay Ospan

247 posts

@AbylayOspan

Engineer at Amazon EC2 | ex-Magic Leap | Linux Kernel Maintainer. Views are my own. Building @sbnb_io (AI Linux)

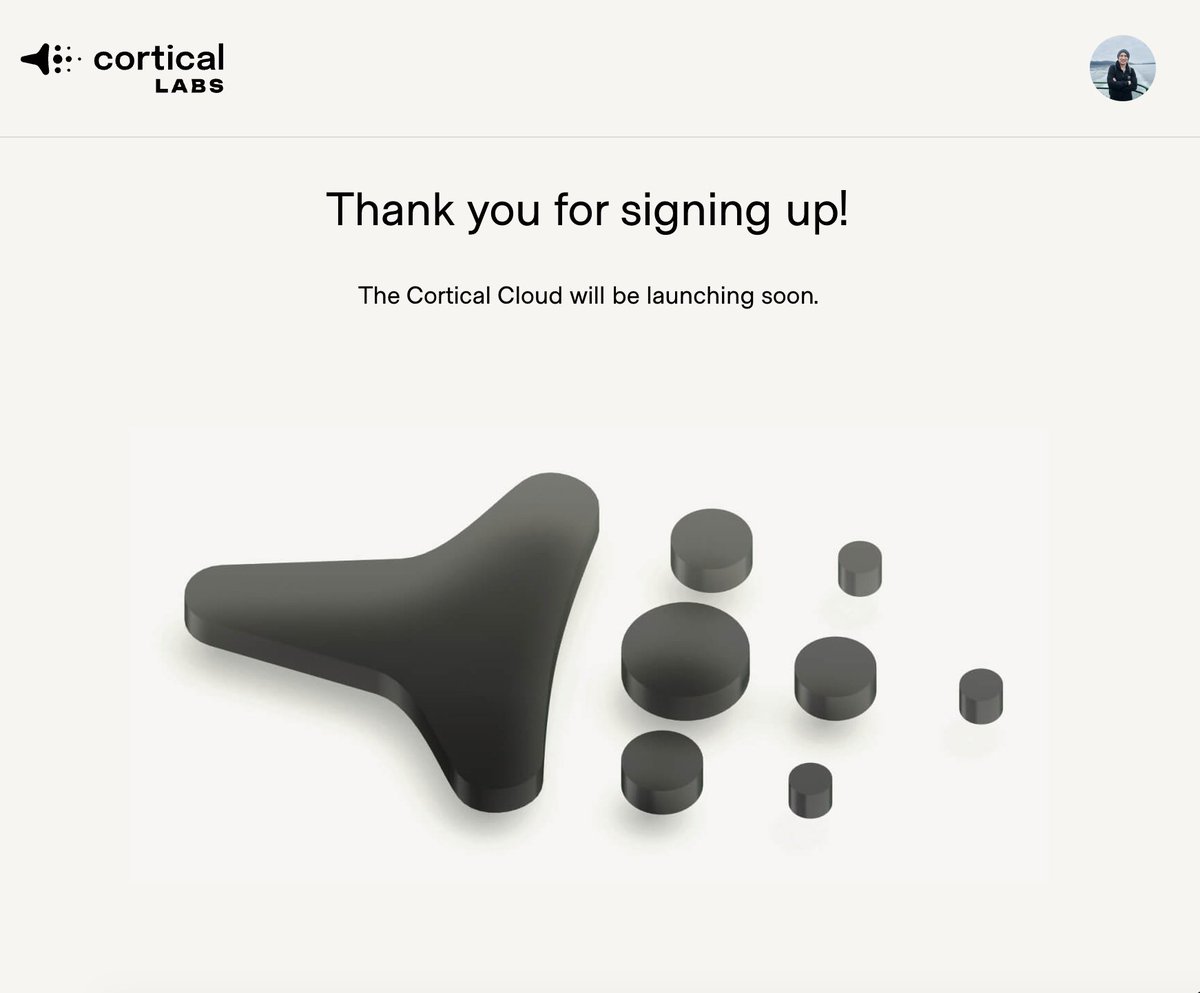

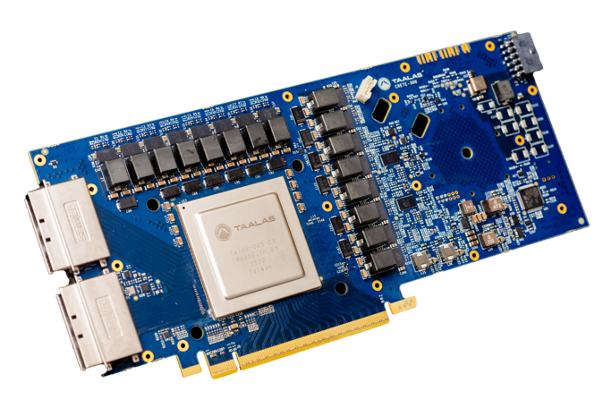

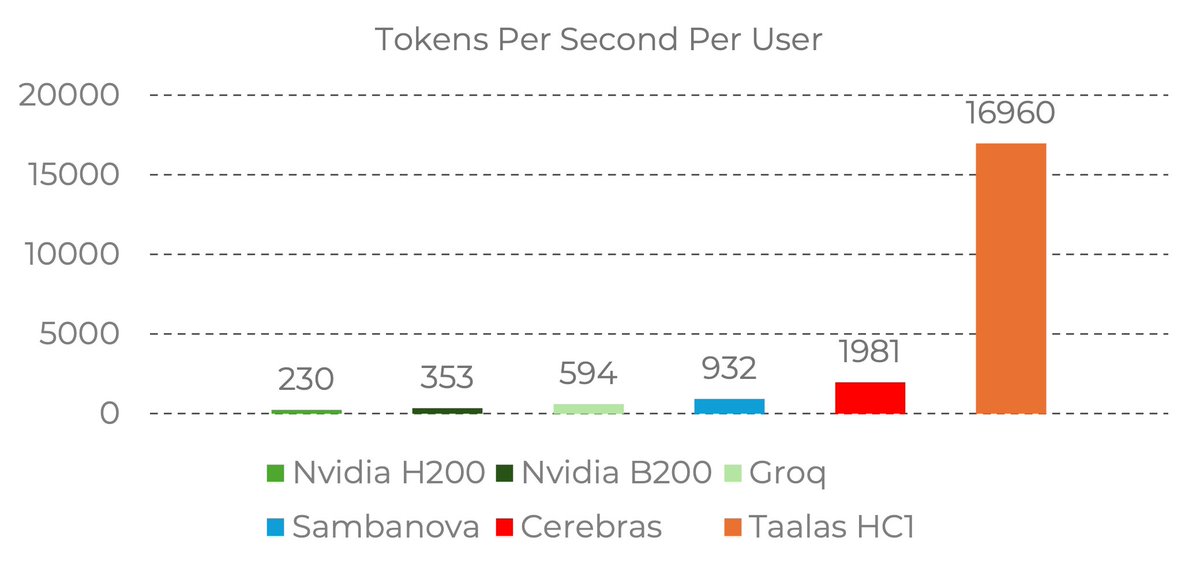

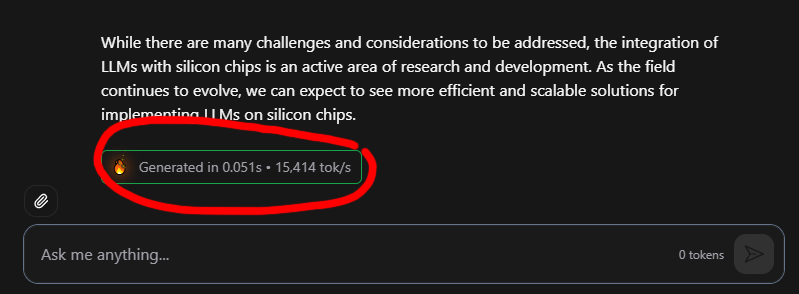

17,000 tokens per second!! Read that again! The LLM is hard-wired directly into silicon. No HBM, no liquid cooling, just raw specialized hardware. 10x faster and 20x cheaper than a B200. The "waiting for the LLM to think" era is dead. Code generates at the speed of human thought. This is the transition from brute-force GPU clusters to actual AI appliances. taalas.com/the-path-to-ub…

We have moved our headquarters to Miami, Florida.

Where to build the next Silicon Valley in Miami? 🌴

Definitely not Miami Downtown, Brickell, Wynwood, or Miami Beach - there's simply no space to build massive campuses like a Googleplex or Meta's Menlo Park. As a former Magic Leap engineer, my vote is Plantation, which is part of the broader Miami metro area. Magic Leap, based there, is the only company in South FL with true engineering depth, and it may serve as the primary nucleation site for the entire Miami Silicon Valley. While FAANG has small offices dispersed across the city, they're mostly non-technical roles - Plantation is where the builders are 🧠💻

Why is this important? I've been here in Miami since 2012 and I've seen so many talented engineers move here, spend time kitesurfing, sailing, cycling, or playing tennis, then get bored and move back to California. Or they stay, but become a "Miami enjoyer" with no time or motivation to grind. This is why I call it a trap for tech entrepreneurs. To avoid that churn, talent needs to be together - apes stronger together lol 🦍

P.S. What do we even call it? AI Palms? 🌴🧠

P.P.S. I wrote this post because I'm watching everyone here on X (@chamath @shaig @BillAckman @jonoringer @patrickc @eladgil) push Miami as the Silicon Valley alternative for a lot of reasons, not least of which is the debated tax on unrealized gains being proposed by @RoKhanna.

Kling 3.0 is truly "one giant leap for AI video generation"! Check out this amazing mockumentary from Kling AI Creative Partner Simon Meyer!