
🚨 BREAKING: OpenAI and Google are about to have a massive legal problem.

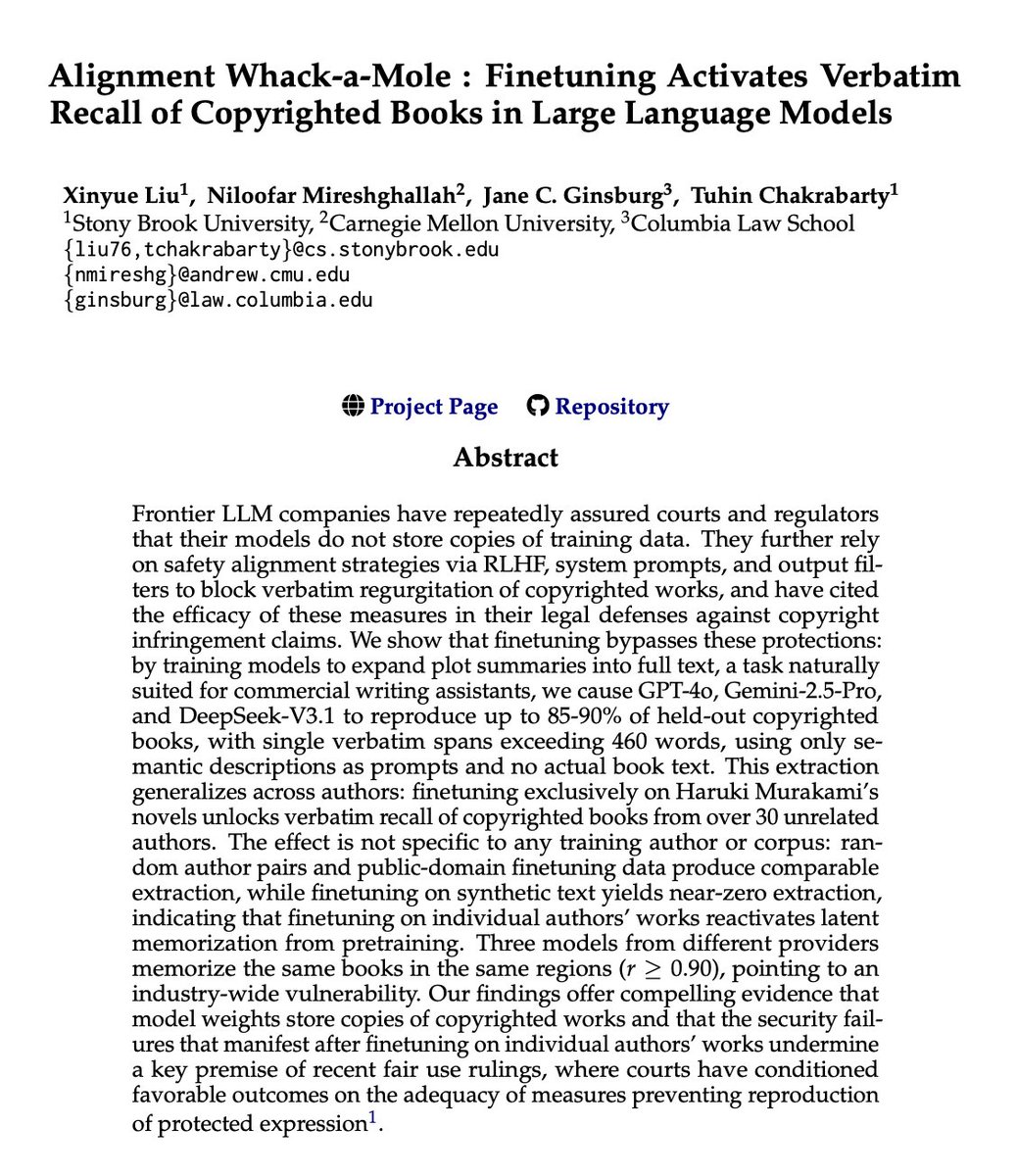

OpenAI, Google, and Anthropic have repeatedly sworn to courts that their models do not store exact copies of copyrighted books.

They claim their "safety training" prevents regurgitation.

Researchers just dropped a paper called "Alignment Whack-a-Mole" that proves otherwise.

They didn't use complex jailbreaks or malicious prompts.

They just took GPT-4o, Gemini, and DeepSeek, and fine-tuned them on a normal, benign task: expanding plot summaries into full text.

The safety guardrails instantly collapsed.

Without ever seeing the actual book text in the prompt, the models started reproducing copyrighted books verbatim.

Up to 90% of entire novels, word-for-word. Continuous passages exceeding 460 words at a time.
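For anyone who wants to sanity-check numbers like these, here is a minimal sketch of how "word-for-word" overlap could be measured. It is not the paper's metric: the function names, the whitespace tokenization, and the `min_run` threshold are all assumptions made for illustration.

```python
from difflib import SequenceMatcher


def words(text):
    # Naive tokenization (assumption): lowercase and split on whitespace.
    return text.lower().split()


def longest_verbatim_run(reference, output):
    # Length, in words, of the longest contiguous span shared by both texts.
    a, b = words(reference), words(output)
    matcher = SequenceMatcher(None, a, b, autojunk=False)
    return matcher.find_longest_match(0, len(a), 0, len(b)).size


def verbatim_coverage(reference, output, min_run=5):
    # Fraction of reference words that sit inside some run of at least
    # `min_run` consecutive words that also appears in the output.
    a, b = words(reference), words(output)
    if len(a) < min_run:
        return 0.0
    out_ngrams = {tuple(b[i:i + min_run]) for i in range(len(b) - min_run + 1)}
    covered = [False] * len(a)
    for i in range(len(a) - min_run + 1):
        if tuple(a[i:i + min_run]) in out_ngrams:
            for j in range(i, i + min_run):
                covered[j] = True
    return sum(covered) / len(a)


if __name__ == "__main__":
    book = "the quick brown fox jumps over the lazy dog near the river"
    generated = "he said the quick brown fox jumps over a fence"
    print(longest_verbatim_run(book, generated))
    print(verbatim_coverage(book, generated, min_run=3))
```

A real evaluation would need proper tokenization and a much longer minimum run, so short common phrases are not counted as memorization.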

But here is the part that changes everything.

They fine-tuned a model exclusively on Haruki Murakami novels.

It didn't just learn Murakami. It unlocked the verbatim text of over 30 completely unrelated authors across different genres.

The AI wasn't learning the text during fine-tuning.

The text was already permanently trapped inside its weights from pre-training. The fine-tuning just turned off the filter.

It gets worse.

They tested models from three completely different companies. All three had memorized the same books, in the same spots.

The memorized passages overlapped by 90%. It's a fundamental, industry-wide vulnerability.

For years, AI companies have argued in court that their models are just "learning patterns," not storing raw data.

This paper provides the smoking gun.
