Adrien Ecoffet

@AdrienLE

Trying to make AGI go well. Researcher at @openai. Views my own.

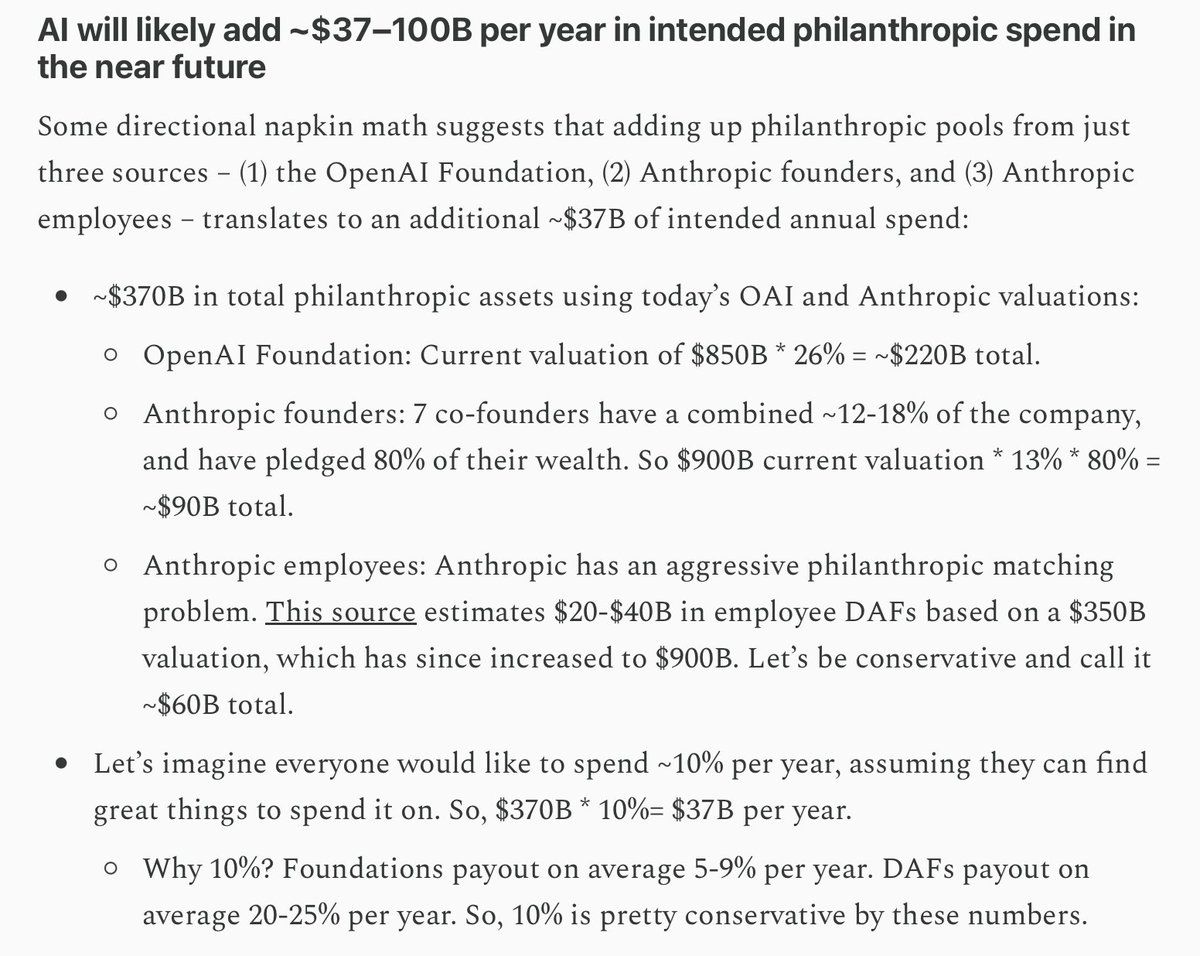

New blog post: "The third wave of American philanthropy." Hundreds of billions of dollars in new philanthropic capital will soon become liquid. The OpenAI Foundation holds 26% of OpenAI, worth about $220B at today’s valuation. Anthropic’s seven co-founders have pledged to give away 80% of their wealth and have instituted the most aggressive donor matching program for employees in tech history. How much does this all add up to? And how meaningful is that in the context of philanthropy today? I was doing some simple napkin math to wrap my head around the scale of what’s coming, and radicalized myself in the process. I had dramatically underappreciated the scale of the philanthropic capital that’s about to become available and the corresponding gap in talent and organizations that will be needed to make the most of it. This piece aims to directionally sketch the scale of what’s coming, the gap in operational capacity needed to absorb it, and what we can do to fill it. (Link to full post in reply)

According to reporting from Axios, Karpathy will be forming a new pre-training team focused on Recursive Self Improvement and will be teaching Claude to improve Claude's training.

The number of people in D.C. who think AI is like crypto and therefore you can just throw money at lobbyists to get their preferred outcome is astonishingly high given they are quite obviously wrong.

When I was reporting for 60 Minutes in China, I was told by people I met that they regard America as inferior, specifically because they say the U.S. does not understand how to play the long game. They said with disdain: “We think in terms of centuries - Americans can only think in terms of minutes.”

One thing I've noticed: 1) Anthropic employees view OpenAI as an evil company; 2) OpenAI employees just respect Anthropic's products and view them as morally fine. It will be interesting to see how this plays out.

Laser focused

OpenAI would support the creation of a global governance body for artificial intelligence led by the U.S. and including China as a member, a top company executive said, hours before the start of President... claimsjournal.com/news/national/…