John

26 posts

@AgenticCowboy

Data Expert | Leveraging generative AI, agentic workflows & scalable ML | Building tomorrow's intelligence today | Insights on evolving AI

Google's Gemma 4 on a 128 GB MacBook Pro is near-AGI on the go, with no internet needed.

Available in four sizes:
🔵 31B Dense & 26B MoE: state-of-the-art performance for advanced local reasoning tasks, like custom coding assistants or analyzing scientific datasets.
🔵 E4B & E2B (Edge): built for mobile, with real-time text, vision, and audio processing.

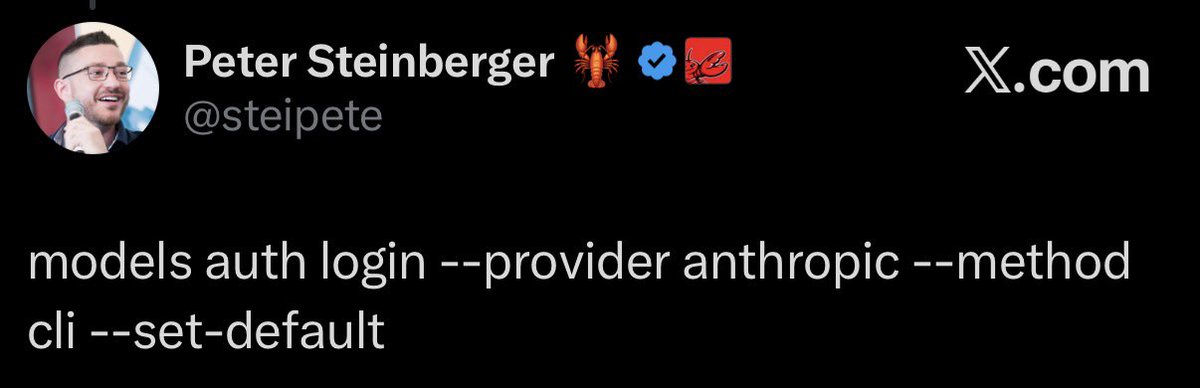

Anthropic essentially bans OpenClaw from Claude by making subscribers pay extra: theverge.com/ai-artificial-…

I got the email too. Anthropic is on a sentiment-suicide speedrun right now.