Akshay Goindani

40 posts

@AkshayGoindani1

Founding Research Engineer @Voyage_AI_ | AI/NLP Grad @SCSatCMU

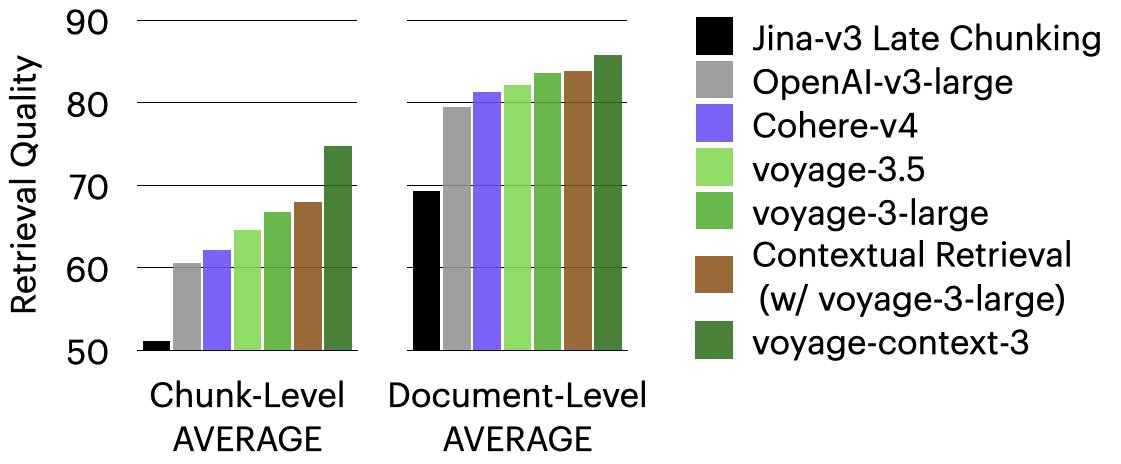

@zhmeishi @AkshayGoindani1 @HongLiu9903 Get all the details in our blog: mongodb.social/6010AAAij Shoutout to @zhmeishi, @AkshayGoindani1, and @HongLiu9903 for their incredible work on this research!

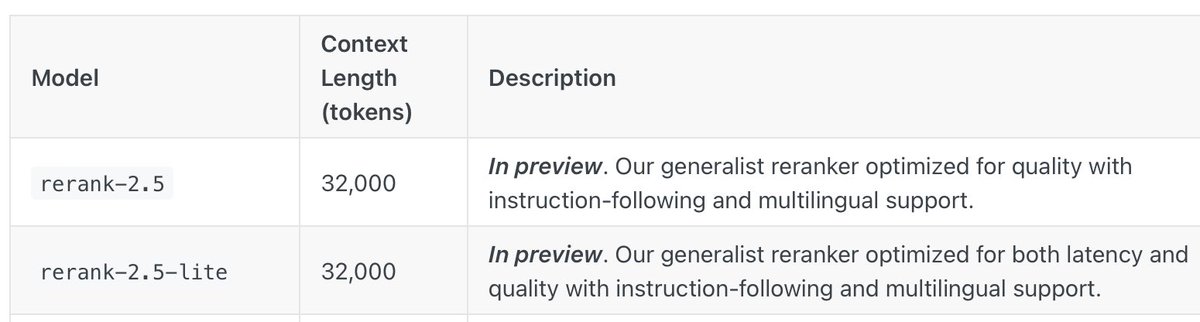

Instruction-following takes reranker capabilities to the next level 🔥 Huge thanks to @zhmeishi and @AkshayGoindani1 for driving this leap forward!

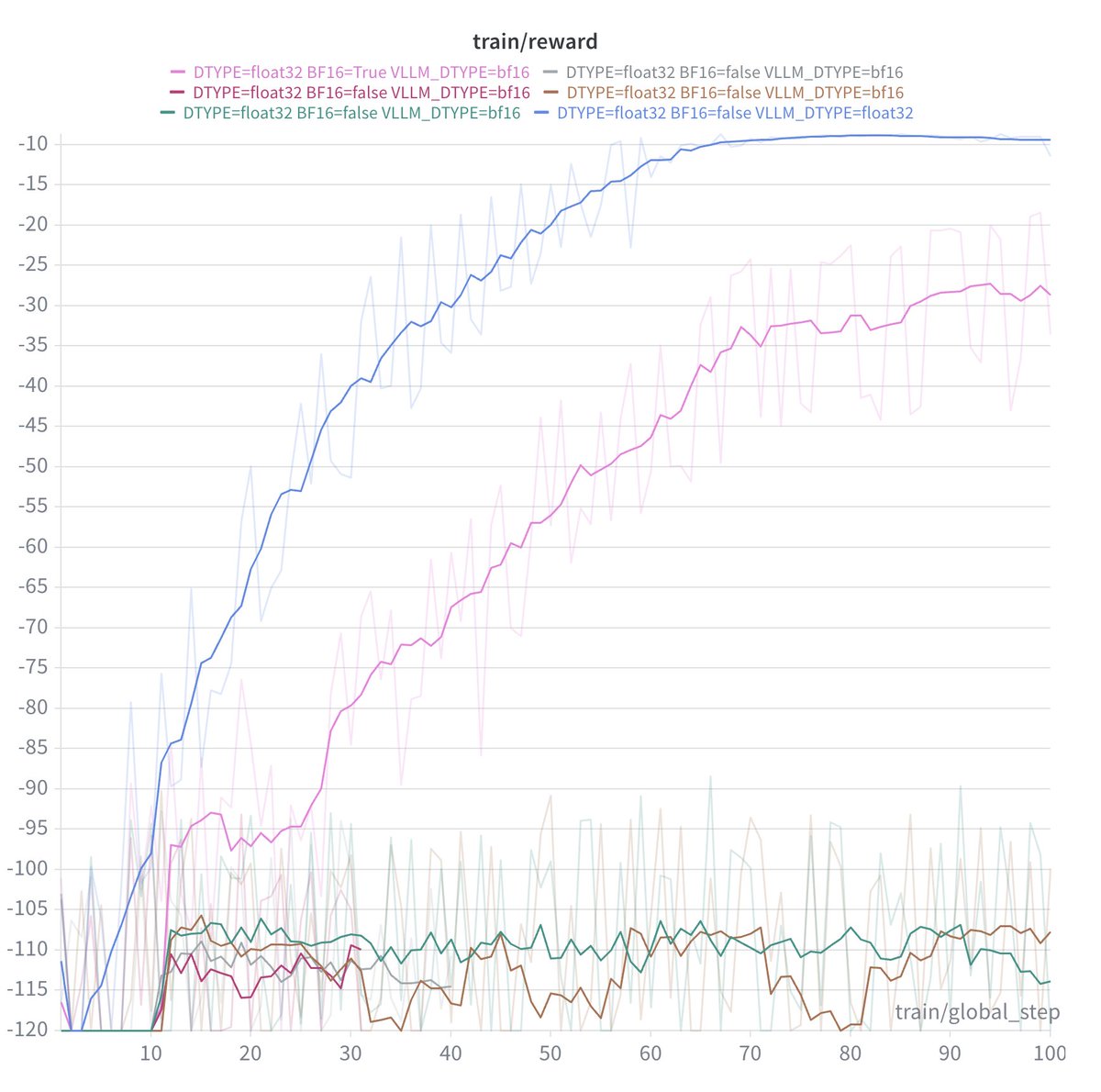

Arxiv: arxiv.org/abs/2506.10947 Clearly a lot more work is needed to understand what’s really happening with RL and prompting. We hope that our experiments with spurious rewards and spurious prompts, as well as the released code, data, checkpoints, etc. will help with this! 🔍

Agree that we need to remember the high variance of RL, as we push further into long horizon etc! We developed metrics to help folks track RL reliability -- codebase+paper here: github.com/google-researc…

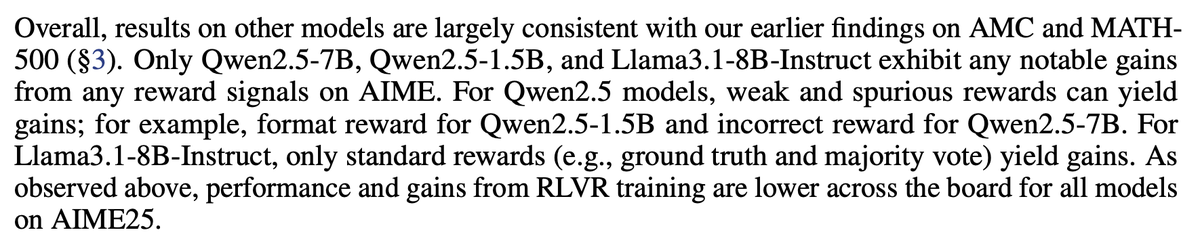

Confused about recent LLM RL results where models improve without any ground-truth signal? We were too. Until we looked at the reported numbers of the pre-RL models and realized they were severely underreported across papers. We compiled discrepancies in a blog below 🧵👇

1/🧵 How do we know if AI is actually ready for healthcare? We built a benchmark, MedHELM, that tests LMs on real clinical tasks instead of just medical exams. #AIinHealthcare Blog, GitHub, and link to leaderboard in thread!

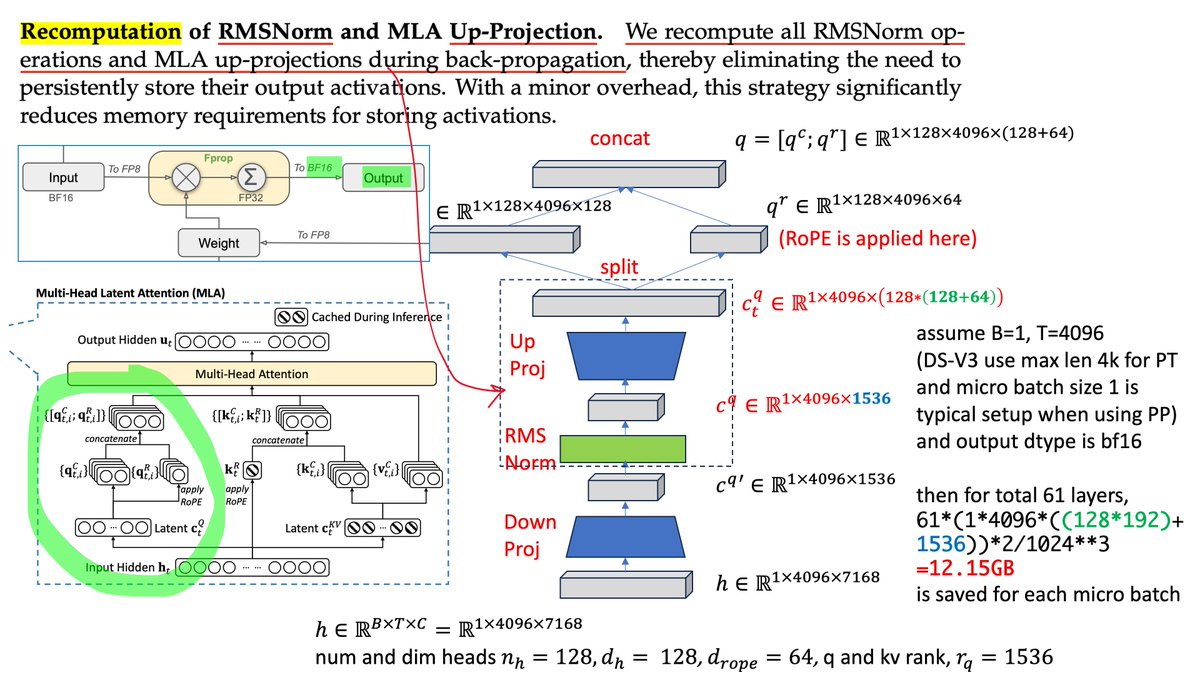

what up guys, I made a one-page comparison of MHA and MLA from @deepseek_ai for those who skipped the DS-V2 paper. pls correct me if I'm wrong.

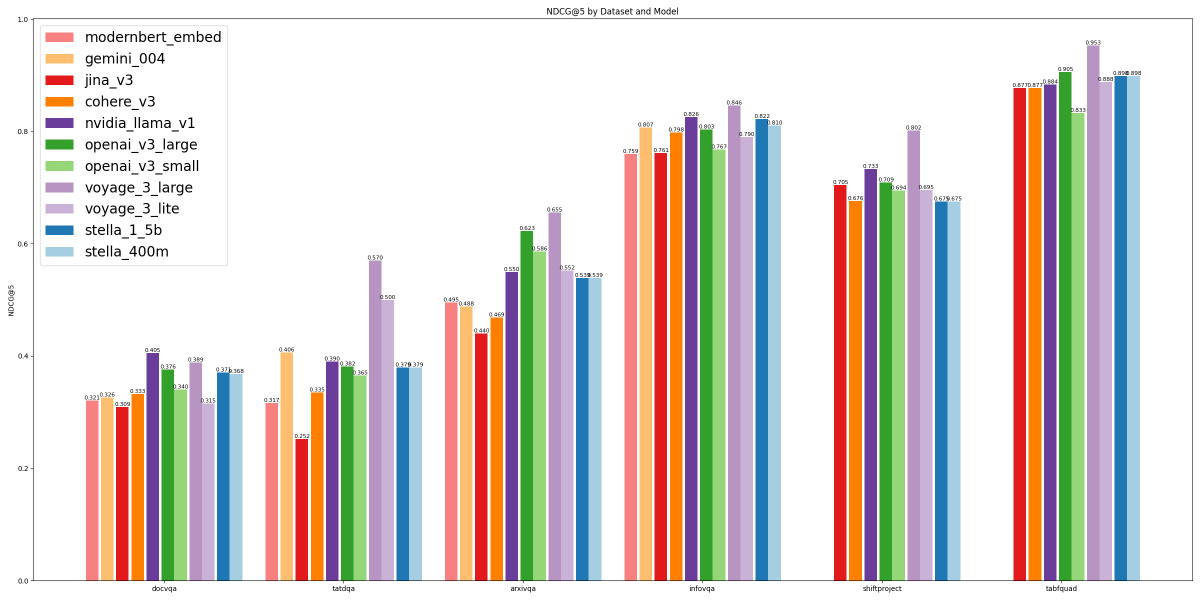

🚨🚨🚨 Just released! 🚨🚨🚨
🚀 Introducing the Salesforce Code Embedding Model Family (SFR-Embedding-Code), ranked #1 on CoIR Benchmark! 🚀
Available in 2 sizes: 2B, 400M.
Key Highlights:
1️⃣ 2B Model: Achieves #1 on CoIR.
2️⃣ 400M Model: Best-performing model under 0.5B parameters.
3️⃣ Multi-lingual, multi-task unified training framework for code retrieval
4️⃣ Supports 12 programming languages, including Python, Java, C++, JavaScript, C#, and more!
🧑‍💻✨ Empower your next AI Coding Agent with the best code embedding models! 🧑‍💻✨
Join us in advancing #AccurateAI:
📎 Paper: bit.ly/4gSZteu
🤗 400M Model: bit.ly/4jhDRdp
🤗 2B Model: bit.ly/3PCqxmp
#CodeAI #MLResearch #SOTA #OpenScience @Salesforce
Big thanks to our research team for SFR-Embedding-Code: Ye Liu @YeLiu918, Rui Meng @RuiMeng_, Shafiq Joty @JotyShafiq, Silvio Savarese @silviocinguetta, Yingbo Zhou @yingbozhou_ai, Caiming Xiong @CaimingXiong, Semih Yavuz @semih__yavuz