Hong Liu

@HongLiu9903

Pretraining @AnthropicAI Prev: Co-founder @VoyageAI

Today, we're proud to announce @inferact, a startup founded by creators and core maintainers of @vllm_project, the most popular open-source LLM inference engine. Our mission is to grow vLLM as the world's AI inference engine and accelerate AI progress by making inference cheaper and faster.

The Challenge

Inference is not solved. It's getting harder. Models grow larger. New architectures proliferate: mixture-of-experts, multimodal, agentic. Every breakthrough demands new infrastructure. Meanwhile, hardware fragments: more accelerators, more programming models, and more combinations to optimize.

The capability gap between models and the systems that serve them is widening. Left this way, the most capable models remain bottlenecked, with the full scope of their capabilities accessible only to those who can build custom infrastructure. Close the gap, and we unlock new possibilities.

And the problem is growing. Inference is shifting from a fraction of compute to the majority: test-time compute, RL training loops, synthetic data.

We see a future where serving AI becomes effortless. Today, deploying a frontier model at scale requires a dedicated infrastructure team. Tomorrow, it should be as simple as spinning up a serverless database. The complexity doesn't disappear; it gets absorbed into the infrastructure we're building.

Why Us

vLLM sits at the intersection of models and hardware: a position that took years to build. When model vendors ship new architectures, they work with us to ensure day-zero support. When hardware vendors develop new silicon, they integrate with vLLM. When teams deploy at scale, they run vLLM, from frontier labs to hyperscalers to startups serving millions of users.

Today, vLLM supports 500+ model architectures, runs on 200+ accelerator types, and powers inference at global scale. This ecosystem, built with 2,000+ contributors, is our foundation. We've been stewards of this engine since its first commit. We know it inside out. We deployed it at frontier scale, in research and in production.

Open Source

vLLM was built in the open. That's not changing. Inferact exists to supercharge vLLM adoption. The optimizations we develop flow back to the community. We plan to push vLLM's performance further, deepen support for emerging model architectures, and expand coverage across frontier hardware. The AI industry needs inference infrastructure that isn't locked behind proprietary walls.

Join Us

Through the open source community, we are fortunate to work with some of the best people we know. For @inferact, we're hiring engineers and researchers to work at the frontier of inference, where models meet hardware at scale. Come build with us.

We're fortunate to be supported by investors who share our vision, including @a16z and @lightspeedvp, who led our $150M seed, as well as @sequoia, @AltimeterCap, @Redpoint, @ZhenFund, The House Fund, @strikervp, @LaudeVentures, and @databricks.

- @woosuk_k, @simon_mo_, @KaichaoYou, @rogerw0108, @istoica05 and the rest of the founding team

Can LLMs automate frontier LLM research, like pre-training and post-training? In our new paper, LLMs found post-training methods that beat GRPO (69.4% vs 48.0%), and pre-training recipes faster than nanoGPT (19.7 minutes vs 35.9 minutes). 1/

📜 Paper on new pretraining paradigm: Synthetic Bootstrapped Pretraining (SBP). SBP goes beyond next-token supervision in a single document by leveraging inter-document correlations to synthesize new data for training, with no teacher needed. Validation: 1T data + 3B model from scratch. 🧵

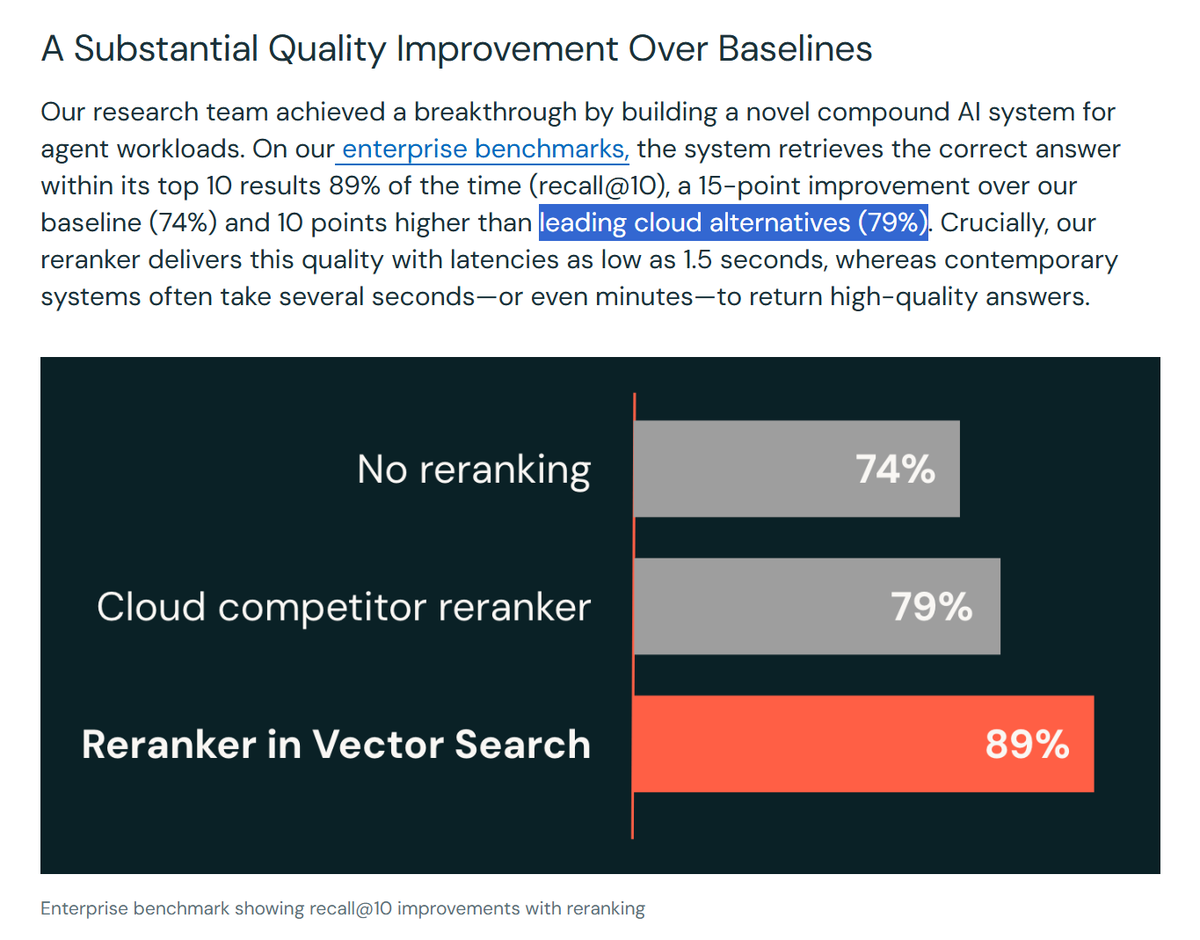

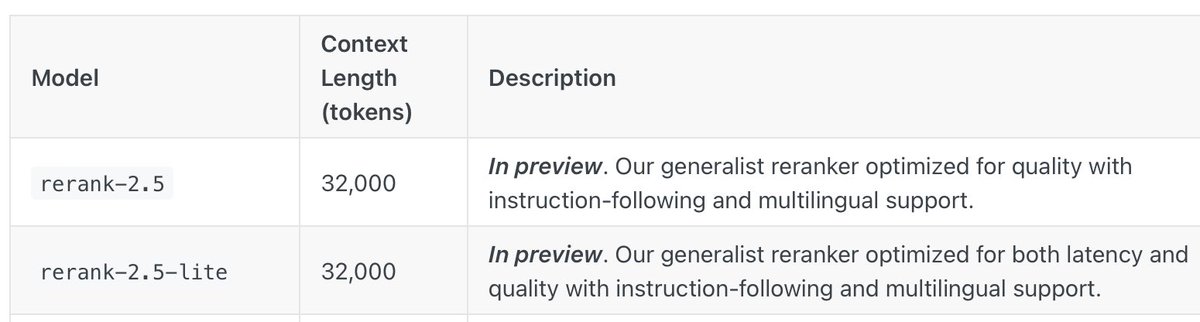

📣 Announcing rerank-2.5 and 2.5-lite: our latest generation of rerankers!
• First reranker with instruction-following capabilities
• rerank-2.5 and 2.5-lite are 7.94% and 7.16% more accurate than Cohere Reranker v3.5
• Additional 8.13% and 7.55% performance gain with instruction

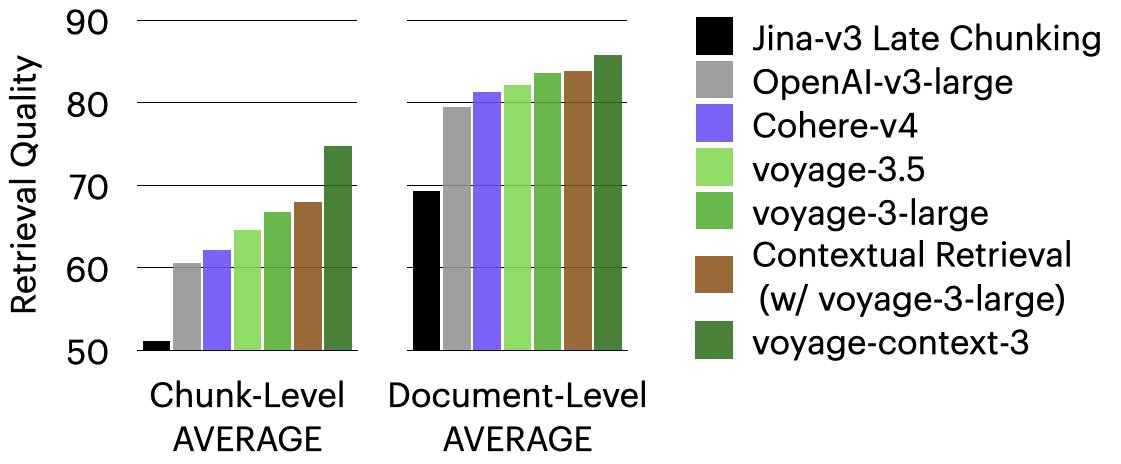

Before: chunk overlaps, context summaries, metadata augmentation.
Now: voyage-context-3 processes the full doc in one pass and generates a distinct embedding for each chunk. Each embedding encodes the chunk-level details AND full doc context, for more semantically aware retrieval.

📢 voyage-context-3: contextualized chunk embeddings
- Automatically captures chunk-level detail & global doc context, w/o metadata augmentation
- Beats OpenAI-v3-large by 14.24% & Cohere-v4 by 7.89%
- Binary 512-dim matches OpenAI (float, 3072-dim) in accuracy, but is 192x cheaper in VDB costs
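
The 192x figure can be checked with a little arithmetic. The sketch below is not from the post: it assumes the float embedding is stored as 32-bit floats, the binary embedding as 1 bit per dimension, and that vector database cost scales linearly with stored bits. The helper name `embedding_bits` is hypothetical.

```python
def embedding_bits(dims: int, bits_per_dim: int) -> int:
    """Storage needed for one embedding vector, in bits."""
    return dims * bits_per_dim


# Assumption: "float" means float32, i.e. 32 bits per dimension.
float_bits = embedding_bits(dims=3072, bits_per_dim=32)

# Assumption: "binary" means 1 bit per dimension.
binary_bits = embedding_bits(dims=512, bits_per_dim=1)

# Assumption: storage cost is proportional to the number of bits stored.
ratio = float_bits // binary_bits

print(f"float 3072-dim: {float_bits} bits ({float_bits // 8} bytes)")
print(f"binary 512-dim: {binary_bits} bits ({binary_bits // 8} bytes)")
print(f"storage ratio: {ratio}x")
```

Under these assumptions the two vectors take 12,288 bytes and 64 bytes respectively, which gives the 192x ratio quoted in the post.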