Ali Naeimi

15 posts

@Ali_NT99

MSc AI | Research Engineer | Distributed Pretraining optimization

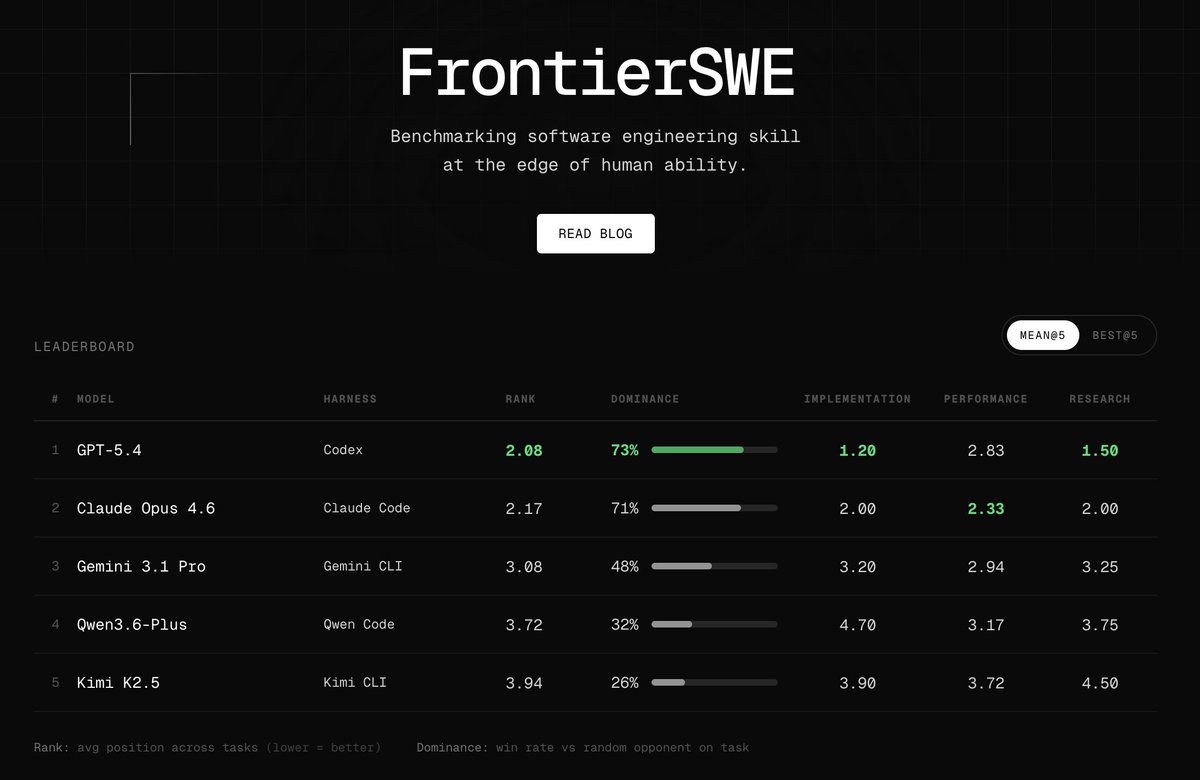

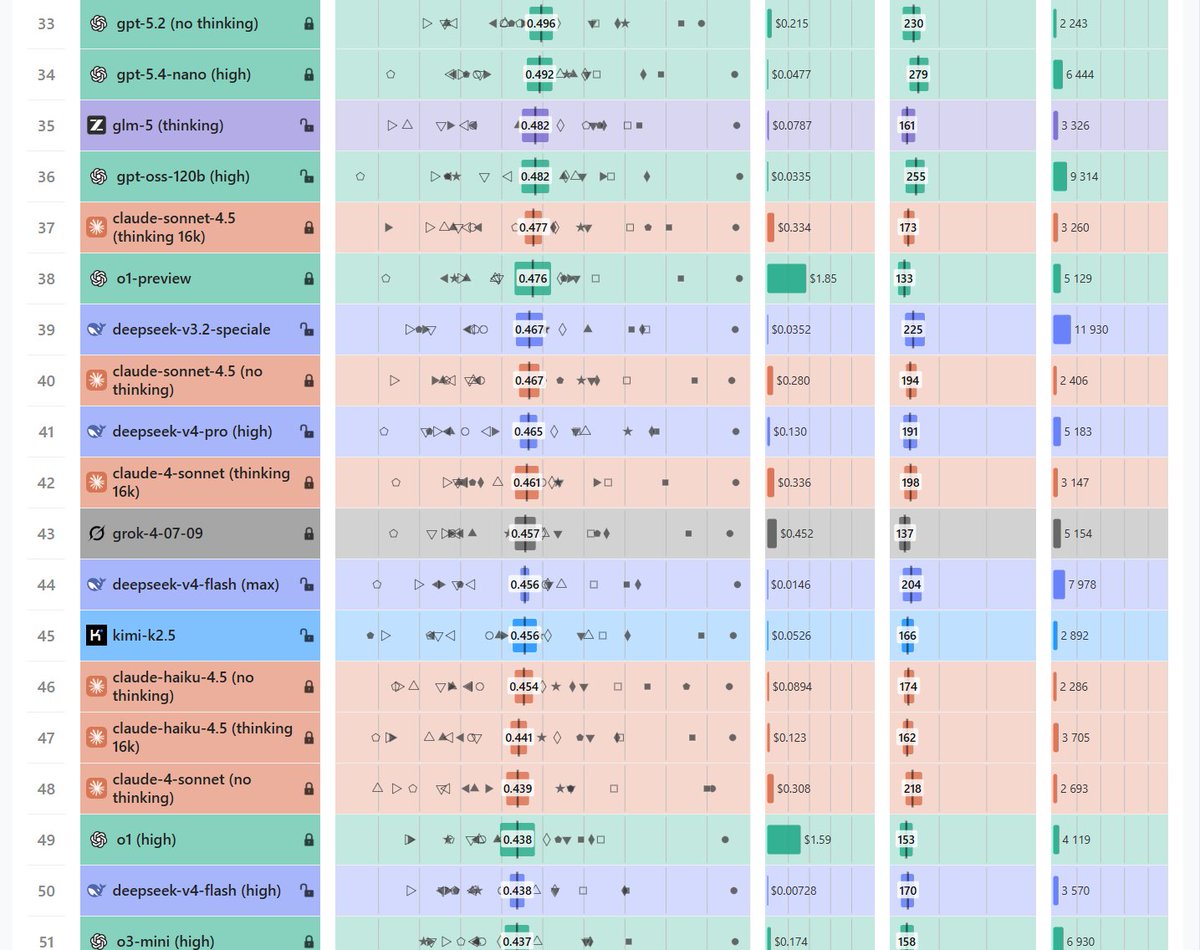

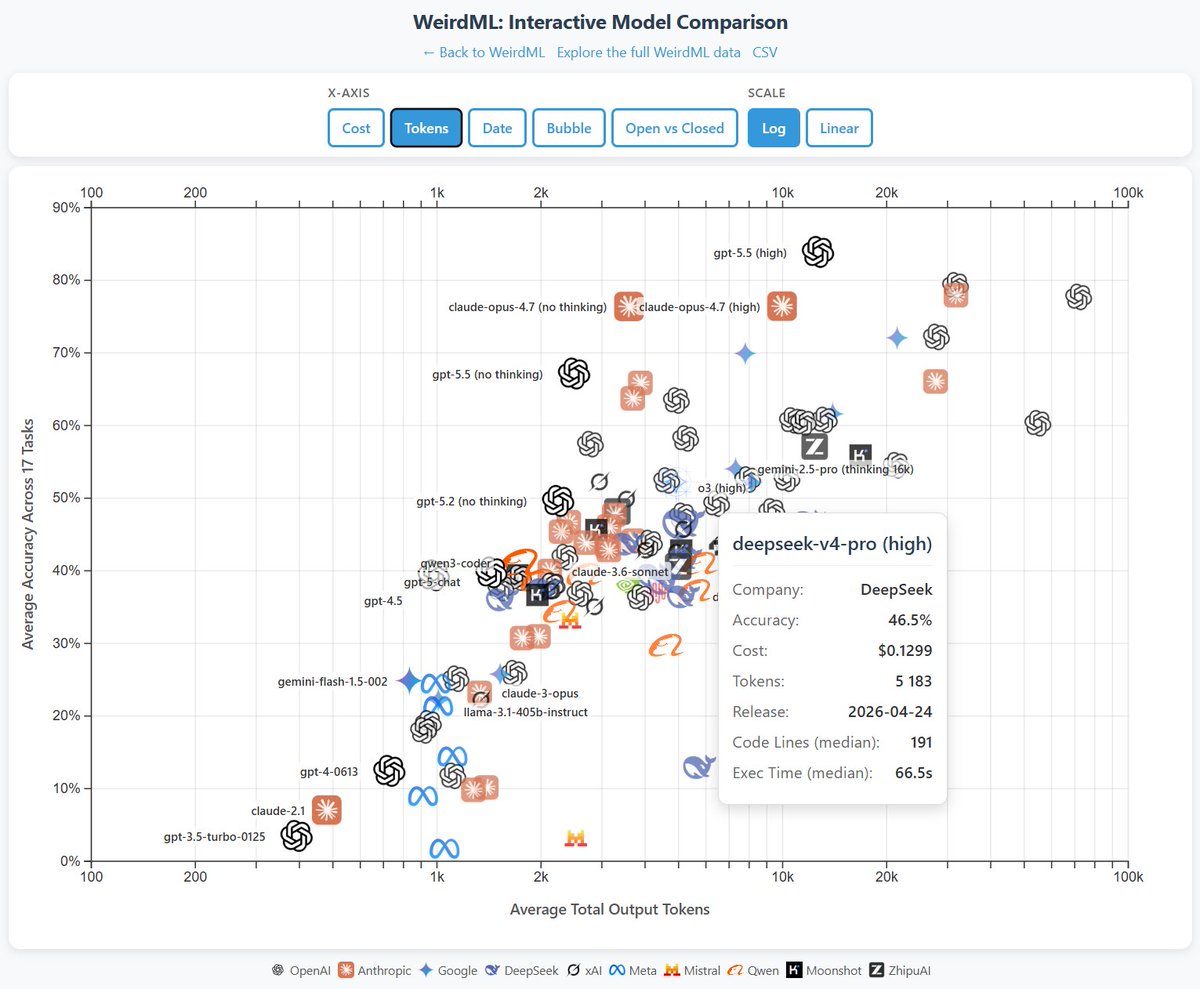

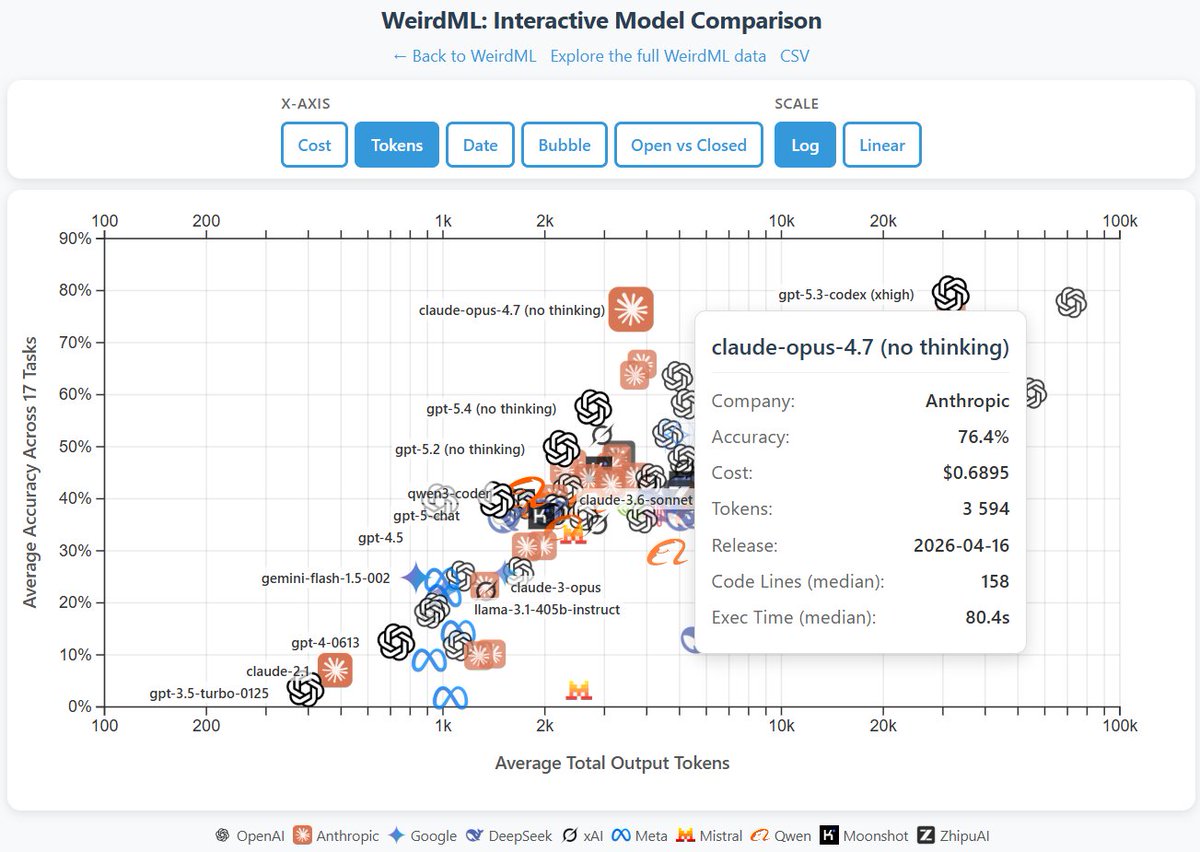

WeirdML v2 is now out! The update includes a bunch of new tasks (now 19 tasks total, up from 6) and results from all the latest models. We now also track API costs and other metadata, which give more insight into the different models. The new results are shown in these two figures. The first one gives an overview of the overall results and the results on individual tasks, along with various metadata. The second figure plots cost vs performance and shows clear scaling, with better results at higher costs. We also have a very varied Pareto frontier: 11 models from 6 different companies have the best accuracy for a given cost over at least some part of the cost range. Grok 3, Claude Opus 4 and GPT 4.5 are the ones that underperform for their costs, while Gemini pro and o3 pro have the best results at the highest costs. Qwen3 30B3A, Grok 3 mini and DeepSeek R1 also each cover a good chunk of the Pareto frontier.
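For anyone wondering what "best accuracy for a given cost" means in practice, here is a minimal sketch of picking out a Pareto frontier from (cost, accuracy) pairs. The model names and numbers are made up for illustration and are not WeirdML results; `pareto_frontier` is a hypothetical helper, not part of the benchmark code.

```python
def pareto_frontier(points):
    """Return names of points not dominated on (lower cost, higher accuracy).

    points: list of (name, cost, accuracy) tuples.
    A point is kept if it is strictly more accurate than every point
    that costs the same or less.
    """
    frontier = []
    best_acc = float("-inf")
    # Sort by cost ascending; on equal cost, put the most accurate first.
    for name, cost, acc in sorted(points, key=lambda p: (p[1], -p[2])):
        if acc > best_acc:
            frontier.append(name)
            best_acc = acc
    return frontier


# Hypothetical data: (name, cost per run in USD, accuracy).
points = [
    ("model_a", 0.5, 0.40),
    ("model_b", 1.0, 0.35),   # pricier and worse than model_a
    ("model_c", 2.0, 0.55),
    ("model_d", 8.0, 0.50),   # pricier and worse than model_c
    ("model_e", 10.0, 0.70),
]
frontier = pareto_frontier(points)
print(frontier)
```

A model that "underperforms for its cost" in this sense is one like `model_b` or `model_d`: some cheaper model already matches or beats its accuracy.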

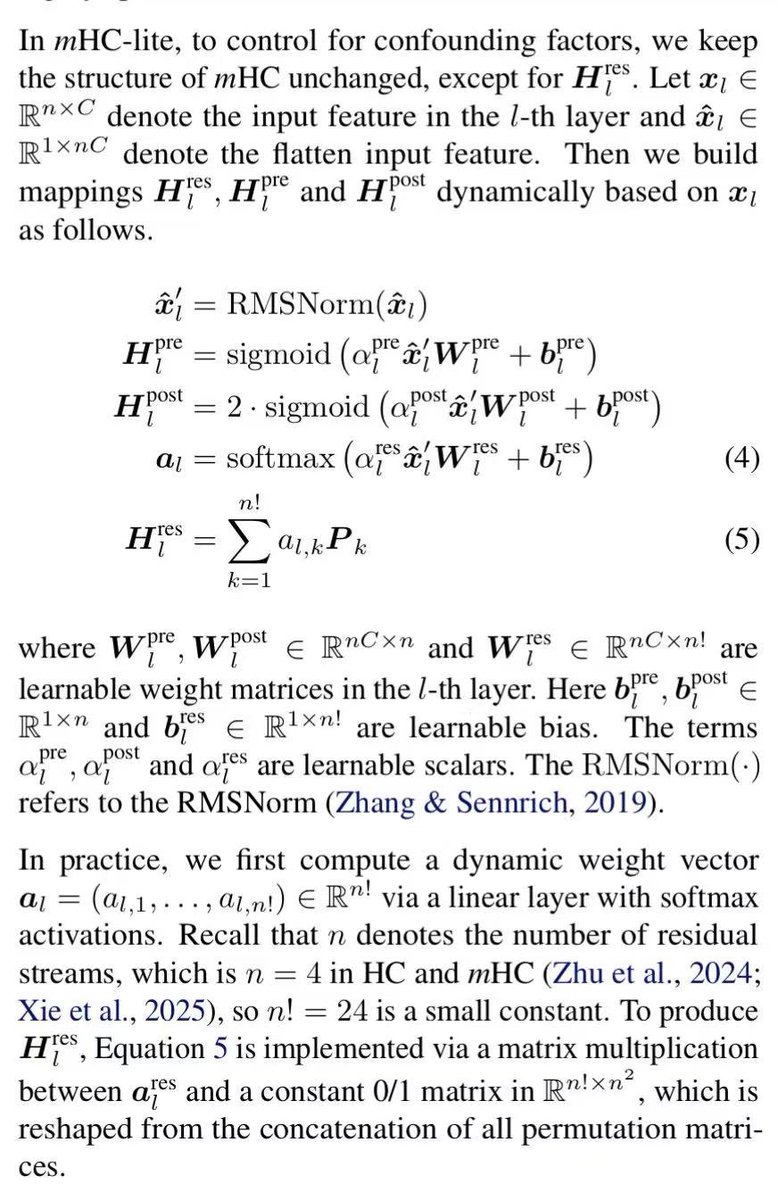

Yes, spot on! For n=4, any doubly stochastic matrix W has an exact decomposition (Birkhoff-von Neumann): W = \sum_{i=1}^{24} \alpha_i P_i with \alpha_i \geq 0, \sum \alpha_i = 1, and P_i the 24 permutation matrices. Just set \alpha = \text{softmax}(\text{logits}) over 24 trainable logits → exact, differentiable, no Sinkhorn needed. Clean trick for small n!
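A minimal sketch of the construction above in plain Python, just to check the forward pass: enumerate the 24 permutation matrices for n=4, take a softmax over 24 logits, and form the convex combination. The function names are hypothetical, and in a real training setup the logits would be a parameter in an autodiff framework rather than a Python list.

```python
import itertools
import math


def permutation_matrices(n):
    """All n! permutation matrices of size n x n, as nested lists."""
    return [
        [[1.0 if perm[r] == c else 0.0 for c in range(n)] for r in range(n)]
        for perm in itertools.permutations(range(n))
    ]


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def doubly_stochastic(logits, n=4):
    """W = sum_i alpha_i * P_i with alpha = softmax(logits)."""
    perms = permutation_matrices(n)
    if len(logits) != len(perms):
        raise ValueError(f"expected {len(perms)} logits, got {len(logits)}")
    alpha = softmax(logits)
    W = [[0.0] * n for _ in range(n)]
    for a, P in zip(alpha, perms):
        for r in range(n):
            for c in range(n):
                W[r][c] += a * P[r][c]
    return W


# Arbitrary example logits (an assumption, stand-ins for trained values).
logits = [0.1 * i for i in range(24)]
W = doubly_stochastic(logits)
row_sums = [sum(row) for row in W]
col_sums = [sum(W[r][c] for r in range(4)) for c in range(4)]
print(row_sums, col_sums)
```

Two caveats on the sketch: softmax weights are strictly positive, so W always has strictly positive entries and an exact permutation matrix is only reached in the limit of large logits; and the number of logits grows as n!, which is why this only makes sense for small n.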

Claude Opus 4.7 (no thinking) scores 76.4% on WeirdML, right behind GPT 5.4 (xhigh) at 77.7%, Opus 4.6 (adaptive) at 77.9% and GPT 5.3 codex (xhigh) at 79.3%, while using an order of magnitude fewer tokens. This looks like a major step forward; things are moving fast now! Results with thinking next week.

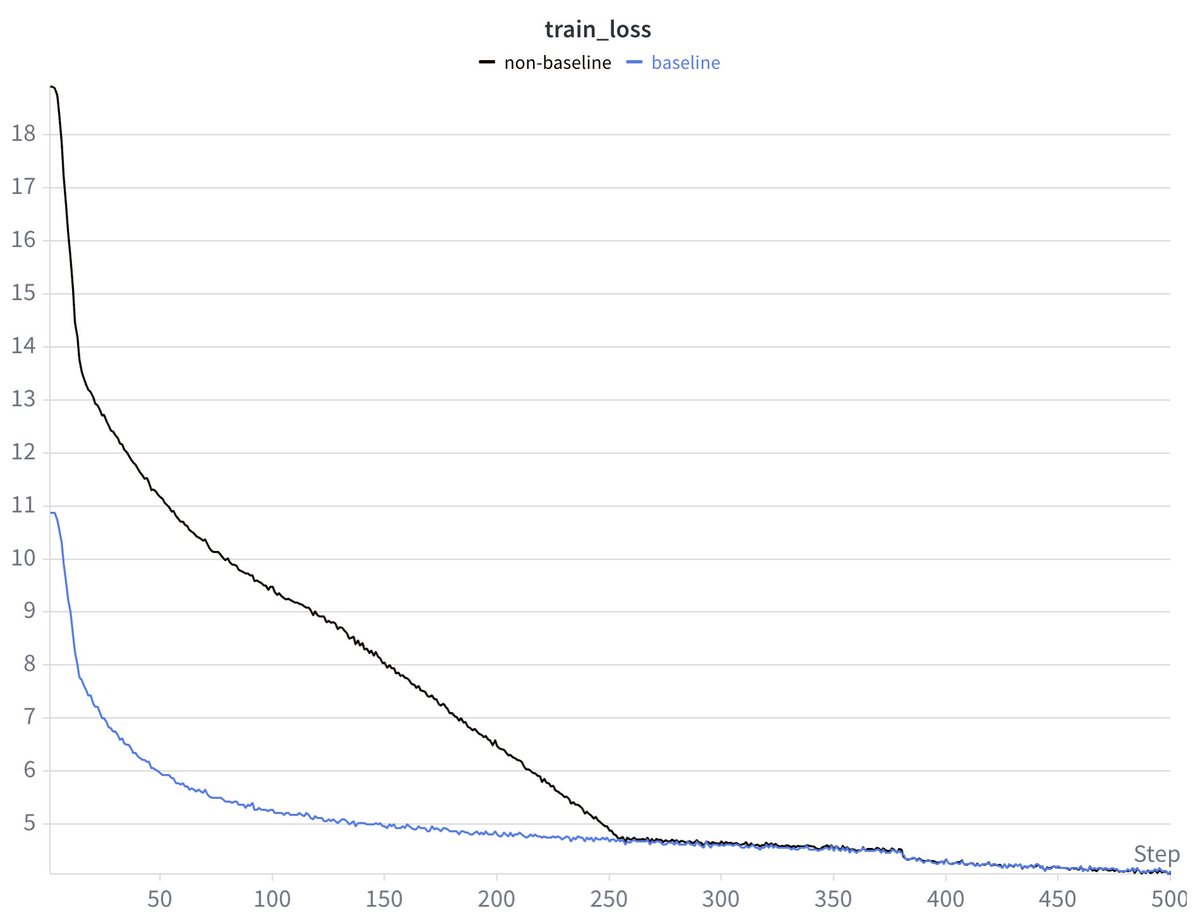

You can just do things when you're GPU-rich (the "things" here being a full post-train of GLM5).