AlvaBuddha

627 posts

@Alva_Buddha

Ex-McKinsey senior expert. IIT grad. Building games solo. Centrist. Rationalist.

@peter_szilagyi Looking

The degree to which you are awed by AI is perfectly correlated with how much you use AI to code.

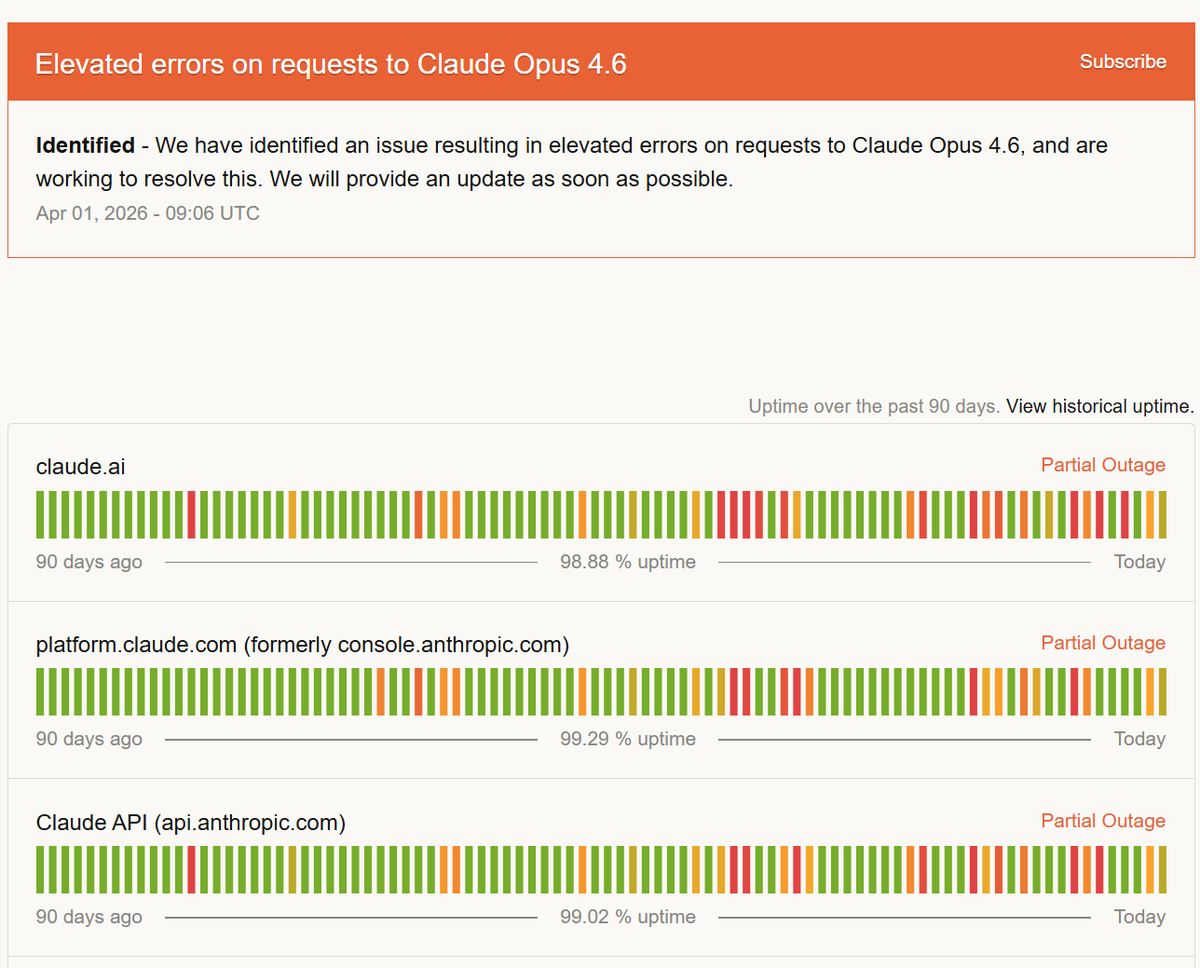

Well, fuck Anthropic. I've bought a 3-month Claude Max sub for a friend as a gift. Sent it to them. 10 days later, their gift is GONE from their account. No trace whatsoever. I go to Anthropic to request a refund:
- I can't, it's not my gift.
- They can't, it doesn't exist.
Oh, and you have NO WAY to contact a person who understands the problem, you can only talk to a fucking AI whose job is to get rid of you. It just closes the convo with "End." after you explain what's wrong.

We're going farther than ever before 🚀 Today, the Artemis II crew will break the record for how far humans have traveled from Earth as they fly around the far side of the Moon. Coverage begins at 1 p.m. EDT (1700 UTC). Watch Artemis II make history: nasa.gov/ways-to-watch/

Today's worldbuilding post revealed a flaw in most people's concept of LotR. We've been so exposed to the Silmarillion that we've forgotten that the original readers of Lord of the Rings didn't have any of that. They understood none of those references, yet still liked the books.

We @btv_vc think there is a unique opportunity to build a deployed intelligence company.
1. Every great technology capability requires a diffusion mechanism into the long tail of the economy, and every major technology wave has created massive businesses on the deployment side.
2. Mid-market and SMBs will not self-serve their way into a system of intelligence, and AI product companies, while best in class at building product, need deployment partners to bridge the gap for low to mid-size customers.
3. The infrastructure to build this company exists today in a way it did not 12 months ago.
Read through some of our observations, and if you have a point of view on this concept, reach out!