ParallelCitizen.xyz

1.3K posts

ParallelCitizen.xyz

@AnalogueUSB

independent researcher covering decentralization, network states, and techno-democracy

BREAKING: Amazon reportedly holds mandatory meeting after “vibe coded” changes trigger major outages.

Lots of non tech friends want openclaws. So far i've set them up on VMs, but this is getting heavy. Are there any good multi-tenant openclaw setups or alt-claws yet that are good enough?

I've been thinking a bit about continual learning recently, especially as it relates to long-running agents (and running a few toy experiments with MLX). The status quo of prompt compaction coupled with recursive sub-agents is actually remarkably effective. Seems like we can go pretty far with this. (Prompt compaction = when the context window gets close to full, model generates a shorter summary, then start from scratch using the summary. Recursive sub-agents = decompose tasks into smaller tasks to deal with finite context windows) Recursive sub-agents will probably always be useful. But prompt compaction seems like a bit of an inefficient (though highly effective) hack. The are two other alternatives I know of 1. online fine-tuning and 2. memory based techniques. Online fine-tuning: train some LoRA adapters on data the model encounters during deployment. I'm less bullish on this in general. Aside from the engineering challenges of deploying custom models / adapters for each use case / user there are a some fundamental issues: - Online fine-tuning is inherently unstable. If you train on data in the target domain you can catastrophically destroy capabilities that you don't target. One way around this is to keep a mixed dataset with the new and the old. But this gets pretty complicated pretty quickly. - What does the data even look like for online fine tuning? Do you generate Q/A pairs based on the target domain to train the model? You also have the problem prioritizing information in the data mixture given finite capacity. Memory based techniques: basically a policy for keeping useful memory around and discarding what is not needed. This feels much more like how humans retain information: "use it or lose it". You only need a few things for this to work: - An eviction/retention policy. Something like "keep a memory if it has been accessed at least once in the last 10k tokens". - The policy needs to be efficiently computable - A place for the model to store and access long-term memory. Maybe a sparsely accessed KV cache would be sufficient. But for efficient access to a large memory a hierarchical data structure might be beter.

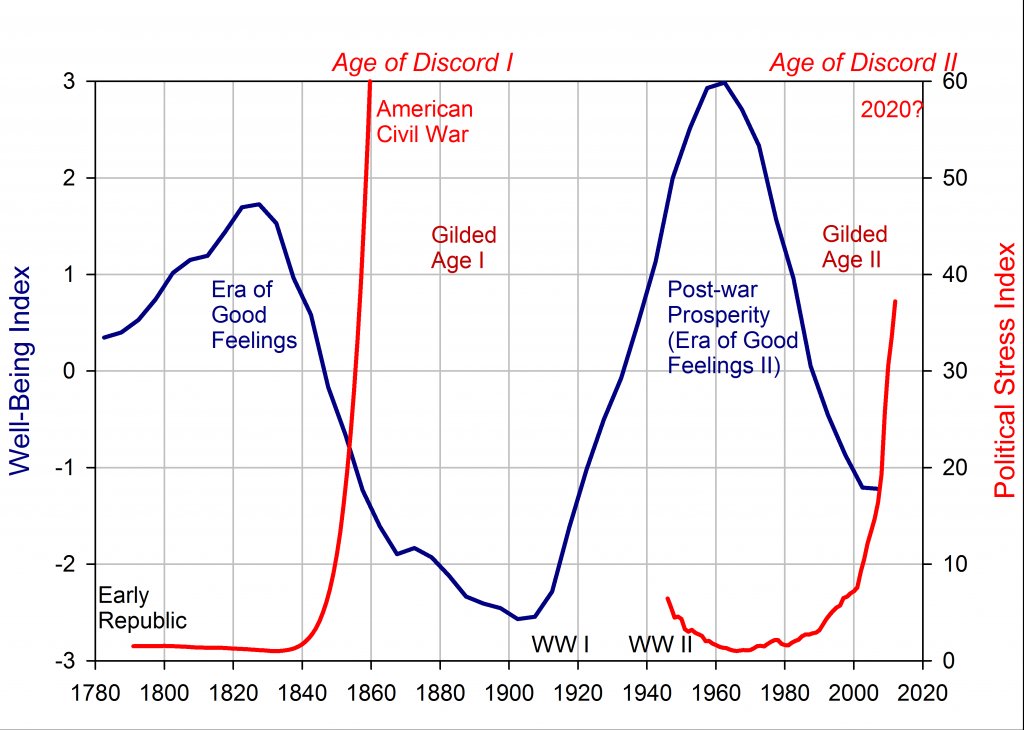

Striking image from the new Anthropic labor market impact report.

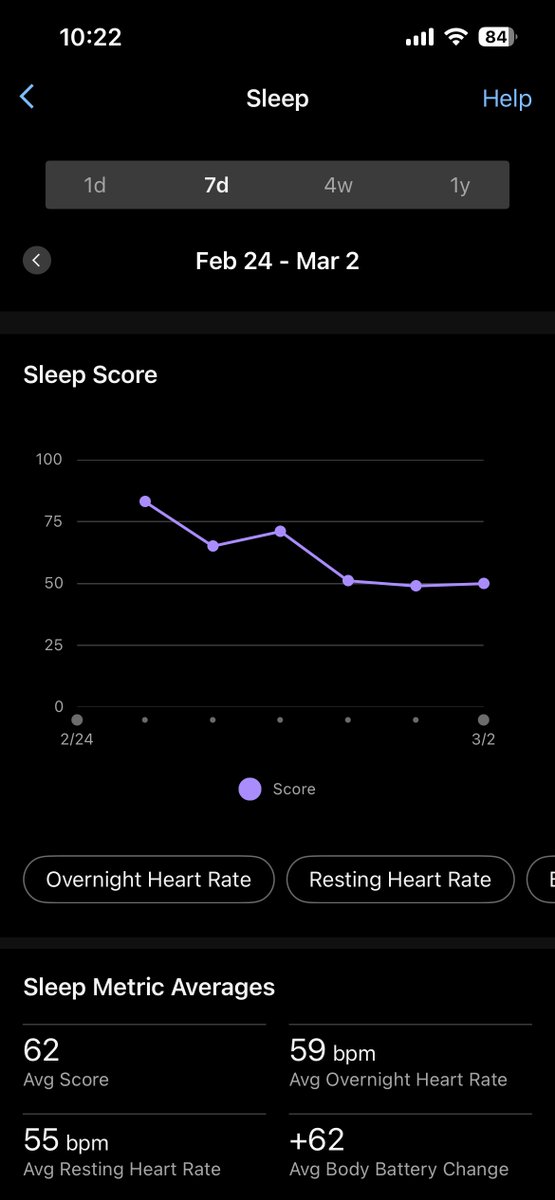

Day 2 Update: > Nauseous throughout the day in waves, esp. at dinner > Tenderness across my entire midsection, both front and back > Sleep wasn't great I ate like 25% of what I normally would at dinner I get excited about trying different foods like I normally would but then remember I have no appetite I am tasting and chewing food more thoroughly because I don't really have a desire to get it down Noticing some minor nootropic effects like clarity, maybe from less "food noise" Even though I took a standard 1mg dose for my first shot, it was too much Will do my next shot in 3-6 days, depending on how I feel, but will definitely cut dosage in half This stuff works, a little too well I finally have empathy for picky eaters and people who have trouble bulking

The only answer Canadians have to this is shaming people like me to stay. “But we have free healthcare!” is not convincing argument for a healthy 21 year old male. 33% marginal tax rate in Seattle, >50% in Vancouver, and Seattle pays more even before currency conversion. All to fund government benefits I can’t use for 50 years.