Jose Andres

39 posts

April usage reports for GitHub Copilot are available to help plan for AI credit billing starting June 1.

github.blog/changelog/2026…

@DotCSV hahaha, we live on the edge of collapse; some call it a crisis, others an opportunity, I call it Friday.

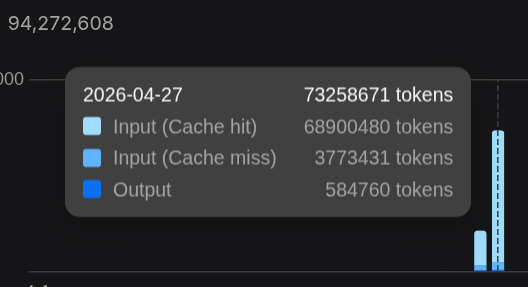

@_yogeshsingh How? I spend up to 4B tokens per month, with GPT 5.4 as the orchestrator and Chinese models for boilerplate on a few projects.

Hot take: if your monthly Codex usage doesn't make you a little uncomfortable, you're not using it enough.

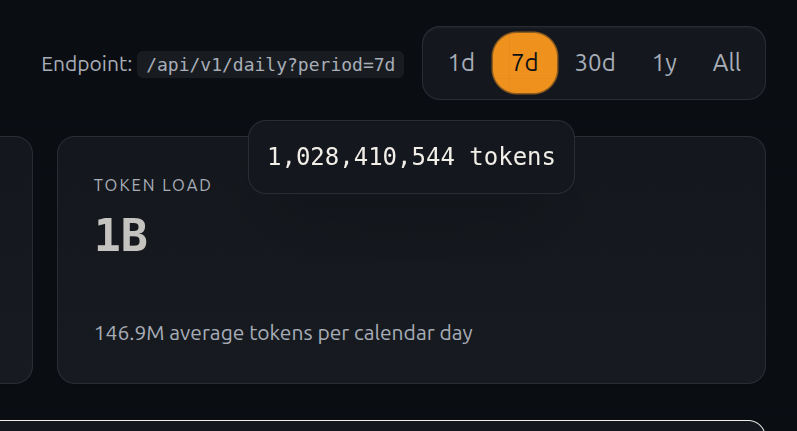

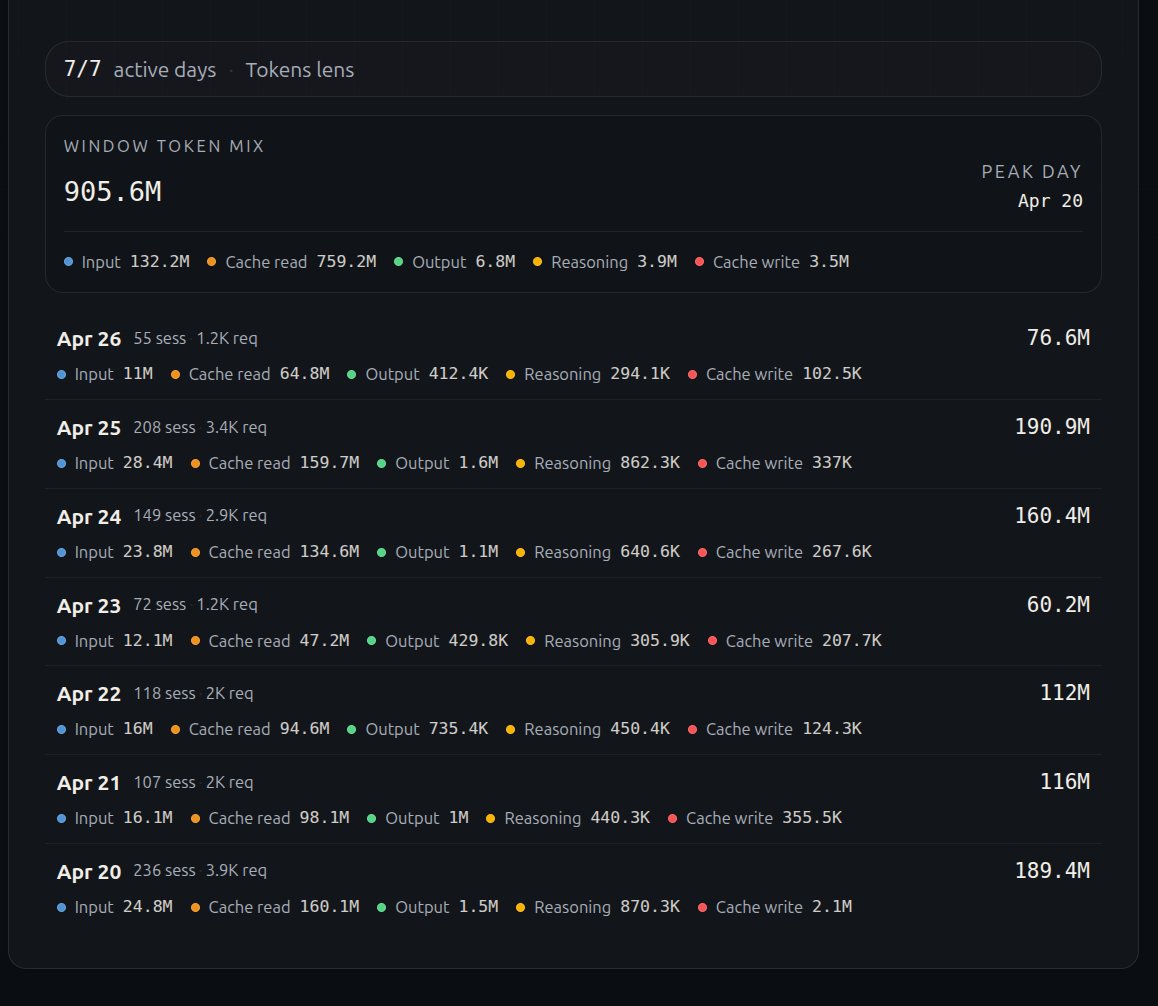

Checked my Codex Lens dashboard today.

513M tokens. 30 days. 49 projects.

Some quick math:

- 17M tokens/day avg

- 22 active coding days

- Top 5 days: 75M, 66M, 55M, 47M, 40M tokens
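The quick math in the bullets above can be reproduced from the stated totals. This is a minimal Python sketch that uses only the post's own figures (513M tokens, 30 days, 22 active days, the top-5 daily totals); the helper name `usage_summary` is hypothetical. It also surfaces one derived number the post does not state outright: the five heaviest days account for roughly 55% of all tokens.

```python
"""Reproduce the 'quick math' from the Codex Lens usage post (figures taken from the post)."""

TOTAL_TOKENS = 513_000_000   # tokens over the 30-day window
CALENDAR_DAYS = 30           # length of the window
ACTIVE_DAYS = 22             # days with any coding activity
TOP_DAYS = [75_000_000, 66_000_000, 55_000_000, 47_000_000, 40_000_000]


def usage_summary(total: int, calendar_days: int, active_days: int,
                  top_days: list[int]) -> dict[str, float]:
    """Hypothetical helper: daily averages plus the share of tokens spent on the top days."""
    return {
        "avg_per_calendar_day": total / calendar_days,
        "avg_per_active_day": total / active_days,
        "top_days_share": sum(top_days) / total,
    }


summary = usage_summary(TOTAL_TOKENS, CALENDAR_DAYS, ACTIVE_DAYS, TOP_DAYS)
print(f"avg per calendar day: {summary['avg_per_calendar_day'] / 1e6:.1f}M")  # 17.1M
print(f"avg per active day:   {summary['avg_per_active_day'] / 1e6:.1f}M")    # 23.3M
print(f"top-5 share of total: {summary['top_days_share']:.0%}")               # 55%
```

Averaging over active days rather than calendar days gives a better sense of a typical working day, since eight of the 30 days had no usage at all.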

The way I see it: every token I spend is a junior engineer I didn't have to hire, a review I didn't have to wait for, a prototype that shipped in hours instead of days.

Half a billion tokens isn't a flex. This is what "vibe coding" actually looks like when you stop talking about it and start doing it.

#codex #gpt @OpenAIDevs @sama

@nahcrof Only this week, and only in opencode (I know coding is very different from chatbot or RP bot usage).

@impuestito_org If privacy isn't a concern for you: right now I have an annual Copilot plan (31 USD a month, while it lasts) and the Alibaba coding plan at 50 USD a month, and I get massive usage out of opencode with custom agents optimized for SDD and TDD. It renews in three days and I still have more than 30% of my quota left.

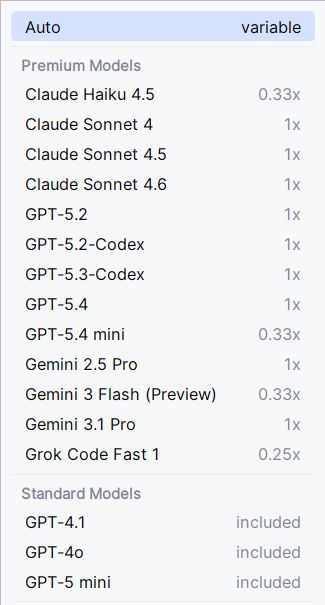

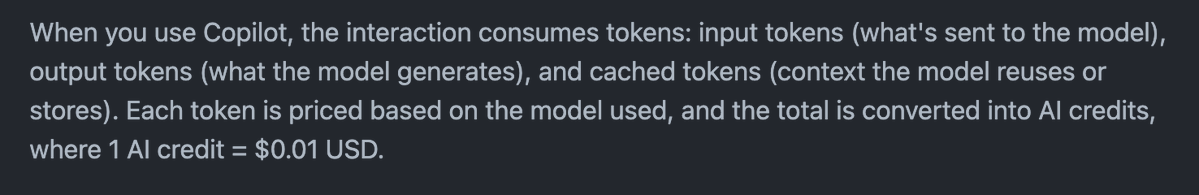

@github What's the point? If one credit equals 0.01 USD and model prices are the same as the providers' own, then at that point it pays off more to buy Anthropic's and Codex's coding plans; at least those are cheaper than API billing.

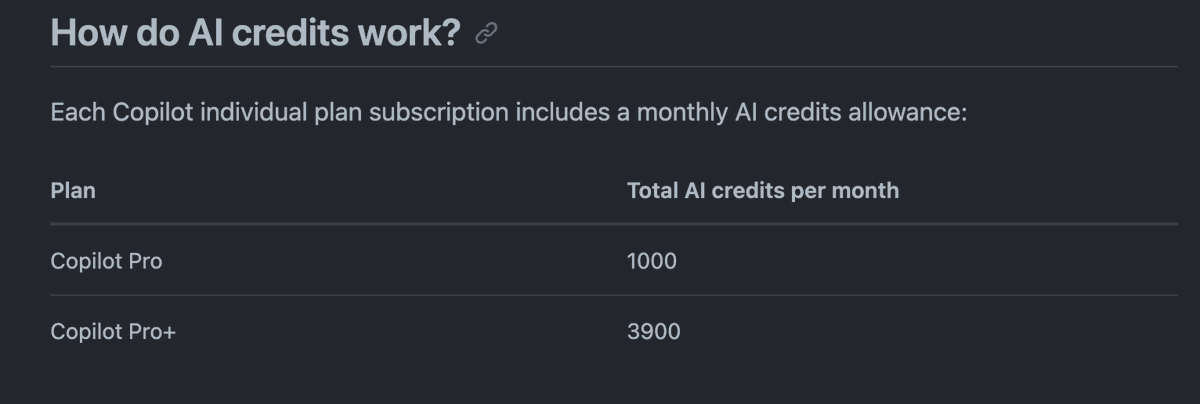

Starting June 1st, GitHub Copilot will move to a usage-based billing model as GitHub Copilot supports more agentic and advanced workflows.

In early May, you'll see a preview bill experience, giving visibility into projected costs before the transition.

👉 Read more about the upcoming change: github.blog/news-insights/…

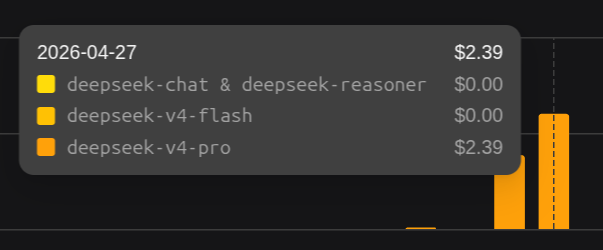

Absurd pricing.

Now there's a price gap of several hundredfold compared with US frontier models.

The performance is clearly lacking, but the price is beyond comparison.

DeepSeek @deepseek_ai

🔥DeepSeek Input Cache Price Drop! Effective immediately, the price for input cache hits across the ENTIRE DeepSeek API series is reduced to just 1/10th of the original price! Build more efficiently for less. 📌Reminder: The DeepSeek-V4-Pro 75% OFF promotion is still active until May 5th, 2026, 15:59 (UTC Time).

@AriaWestcott I opened a ticket and they told me the 120k is theoretical, based on cost-efficient models. For reference, Alibaba has a similar plan for 50 USD with 90k requests a month, which for me works out to roughly 4-5B tokens a month (even assuming the same 50 USD price, this plan is still very bad).

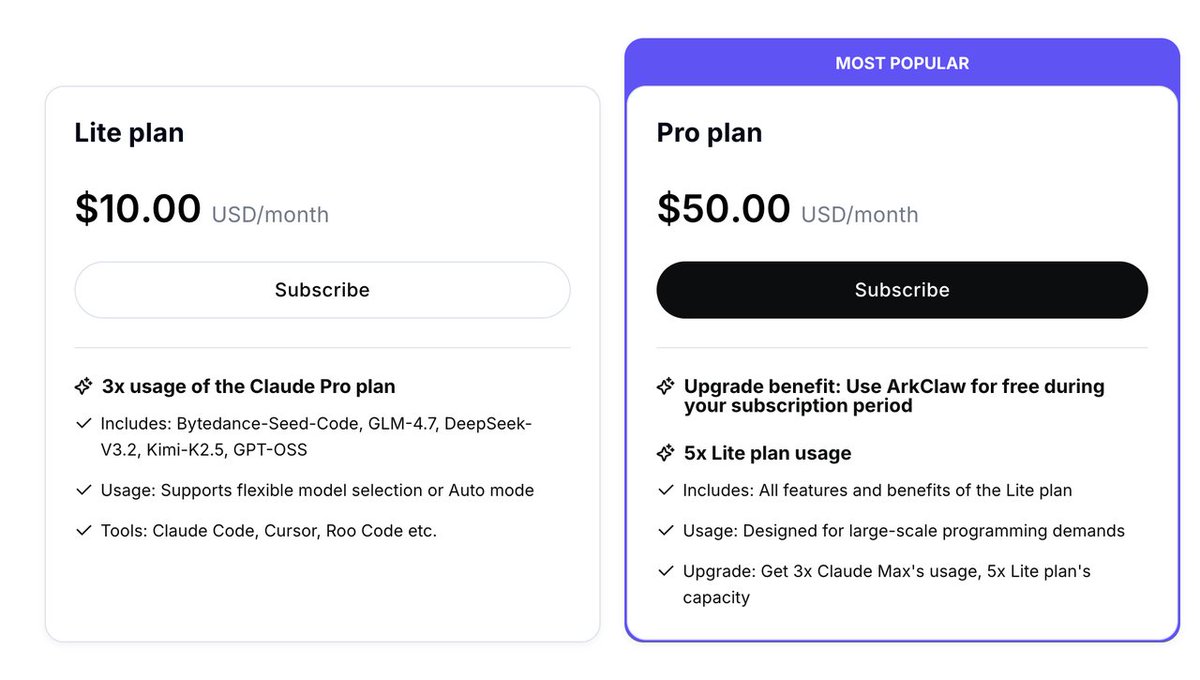

@AriaWestcott On the 10 USD plan, using GLM 5.1, the 5-hour quota lasted me 15 minutes in a single run, and the run didn't even finish. This looks like a scam, not a deal: it was only 40 requests of roughly 60k tokens each, which works out to about 500 requests a month, or about 30M tokens a month. opencode is more cost-effective at that price.
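The reply's quota arithmetic can be made explicit by converting the plan into an effective price per million tokens. This is a minimal Python sketch that uses only the rough figures quoted in the reply (a 10 USD plan, about 500 requests a month, about 60k tokens per request); the function names are hypothetical, and the result is only as good as those per-request estimates.

```python
"""Effective price per million tokens for a request-capped plan (rough figures from the reply)."""

PLAN_PRICE_USD = 10.0        # monthly plan price quoted in the reply
REQUESTS_PER_MONTH = 500     # the reply's rough monthly request estimate
TOKENS_PER_REQUEST = 60_000  # the reply's rough tokens-per-request estimate


def monthly_tokens(requests: int, tokens_per_request: int) -> int:
    """Hypothetical helper: total tokens, assuming every request is about the same size."""
    return requests * tokens_per_request


def usd_per_million_tokens(price_usd: float, tokens: int) -> float:
    """Hypothetical helper: plan price divided by token volume, in USD per 1M tokens."""
    return price_usd / (tokens / 1_000_000)


tokens = monthly_tokens(REQUESTS_PER_MONTH, TOKENS_PER_REQUEST)
print(f"{tokens / 1e6:.0f}M tokens/month")  # 30M tokens/month
print(f"{usd_per_million_tokens(PLAN_PRICE_USD, tokens):.2f} USD per 1M tokens")  # 0.33 USD per 1M tokens
```

Expressing every plan in USD per million tokens is what makes request-based and token-based plans comparable at all, since "a request" can mean very different token counts depending on the workflow.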

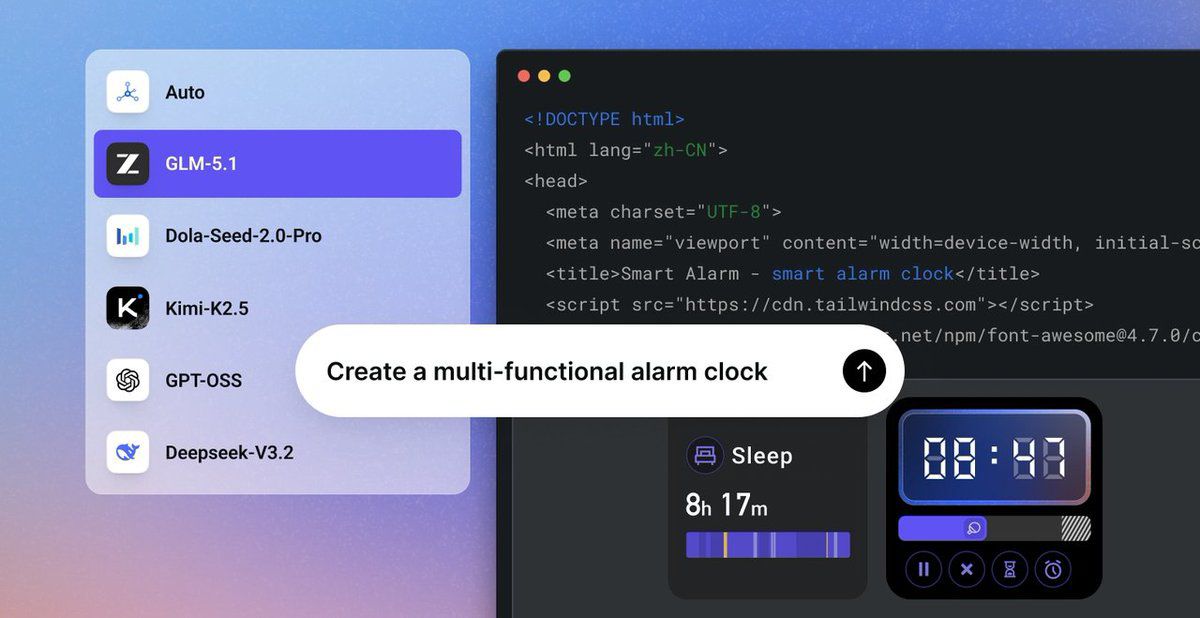

It's really impressive that BytePlus is running full GLM-5.1 at about a fifth of Z.ai's direct pricing.

It's the actual model, same weights, sitting on their Coding Plan and working inside Cursor, Claude Code, TRAE and OpenClaw.

It holds 8-hour autonomous runs without falling apart, which is usually where these setups start drifting. MiniMax M2.7 is on the same plan for agent workflows that tend to break after a few tool calls. Auto routing covers GLM-5.1, DeepSeek V3.2, Kimi K2.5, GPT-OSS and a couple of others, so you're not stuck picking the wrong model for the job.

Anyone still paying Z.ai direct should take a look.

byteplus.com/en/activity/co…

#GLM51 #BytePlus #MiniMaxM27

@YuriKushch @vlad_mihalcea xD like what? Even with the x7.5 multiplier, opencode is the cheapest: 0.3 USD per prompt is crazy, while in Cursor the same task goes up to 20 USD.

@vlad_mihalcea I wouldn't really recommend Copilot itself. There are way better alternatives right now.

@DotCSV So now I need less hardware to run a better model? Well... I guess now it really is worth buying xD. That sounds familiar.

@arrick007 @BytePlusGlobal XD, one prompt used 78% of the 5-hour quota. Usage is metered per token, not per request like Alibaba. With my workflows, 90k requests for 50 USD come to about 4-5B tokens (GLM 5) per month; on BytePlus it's about 0.22 USD per million tokens with GLM 5.1, which is similar to opencode go in USD per token.
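Putting the two price points from this reply on the same unit makes the comparison easier to read. This is a minimal Python sketch that assumes the reply's own rough figures: 50 USD for 90k requests yielding 4-5B tokens a month, versus about 0.22 USD per million tokens on BytePlus. The names `usd_per_million` and `compare` are hypothetical, and the ratios are only as accurate as those estimates.

```python
"""Compare a request-capped plan with per-token pricing (rough figures from the reply)."""

ALIBABA_PRICE_USD = 50.0                               # monthly plan price
ALIBABA_REQUESTS = 90_000                              # requests per month on that plan
ALIBABA_TOKENS_RANGE = (4_000_000_000, 5_000_000_000)  # the reply's 4-5B tokens/month estimate
BYTEPLUS_USD_PER_M = 0.22                              # the reply's per-million estimate


def usd_per_million(price_usd: float, tokens: int) -> float:
    """Hypothetical helper: price divided by token volume, in USD per 1M tokens."""
    return price_usd / (tokens / 1_000_000)


def compare(tokens_range, price_usd, requests, other_usd_per_m):
    """Hypothetical helper: per-request size, effective $/1M, and how many times pricier the other option is."""
    rows = []
    for tokens in tokens_range:
        per_million = usd_per_million(price_usd, tokens)
        rows.append({
            "tokens": tokens,
            "tokens_per_request": tokens / requests,
            "usd_per_million": per_million,
            "ratio": other_usd_per_m / per_million,
        })
    return rows


rows = compare(ALIBABA_TOKENS_RANGE, ALIBABA_PRICE_USD, ALIBABA_REQUESTS, BYTEPLUS_USD_PER_M)
for r in rows:
    print(f"{r['tokens'] / 1e9:.0f}B tokens: ~{r['tokens_per_request']:,.0f} tokens/request, "
          f"{r['usd_per_million']:.4f} USD per 1M, BytePlus ~{r['ratio']:.1f}x more per token")
```

Under these estimates the request-capped plan lands at about 0.01-0.0125 USD per million tokens, so per-token billing at 0.22 USD costs roughly 18-22 times more for the same workload.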

@BytePlusGlobal I purchased a Lite plan advertised as 1,900 requests per 5 hours, but it was depleted within minutes of use. This feels like a scam, especially since my Alibaba coding plan (1,200 requests per 5 hours) lasts much longer. I only used it for 30 minutes of coding! @BytePlusGlobal

GLM-5.1 is now on BytePlus ModelArk Coding Plan. Starting at just $10/month, ModelArk Coding Plan offers a highly cost-efficient way to access GLM-5.1 alongside other advanced coding models.

GLM-5.1 is Z.AI's latest flagship model, MIT-licensed, open-weight, and built for long-horizon agentic coding. GLM-5.1 ranks among the world's top-tier models across leading coding benchmarks, including SWE-Bench Pro.

What you get with ModelArk Coding Plan:

→ Multiple advanced coding models in one subscription: GLM-5.1, Kimi-K2.5, Dola-Seed-2.0-pro, DeepSeek-V3.2, and more. Switch freely or let Auto mode match the best model to the task.

→ Works with the tools you already use: Claude Code, Cursor, Cline, Codex CLI, Kilo Code, Roo Code, OpenCode, and OpenClaw

→ No throttling. Backed by ByteDance's infrastructure.

→ Activated on purchase. Ready to use immediately.

Also new this month: Dreamina Seedance 2.0 is now available on BytePlus, the official API platform for Seedance models. Learn more: byteplus.com/en/product/see…

Refer friends and earn 10% vouchers on every order with no cap. Your friends get 10% off their first subscription too.

Get started for $10/month → tinyurl.com/4zvkf9kc

#BytePlus #ModelArk #GLM #AIEngineering #DevTools #AIAgent

@vidamrr Their credit-based billing system is completely broken. It's crazy that for 39 USD a month I can use 2.2B tokens with Sonnet, and it isn't even an exploit; it's all within their ToS and their capabilities.

GitHub is literally no longer going to let new users subscribe to Copilot, in order to prioritize users who already have a subscription. WTF

Claude Opus will now only be available on the Pro+ tier. WTF

I didn't expect this at all, but I think it's logical, because these providers keep burning money like crazy and I don't think that comes anywhere close to translating into profitability.

GitHub Changelog @GHchangelog

New signups for Copilot Pro, Pro+, and Student plans are paused to maintain service reliability for current users. • Usage limits tightened; Pro+ offers 5X higher limits than Pro github.blog/changelog/2026…

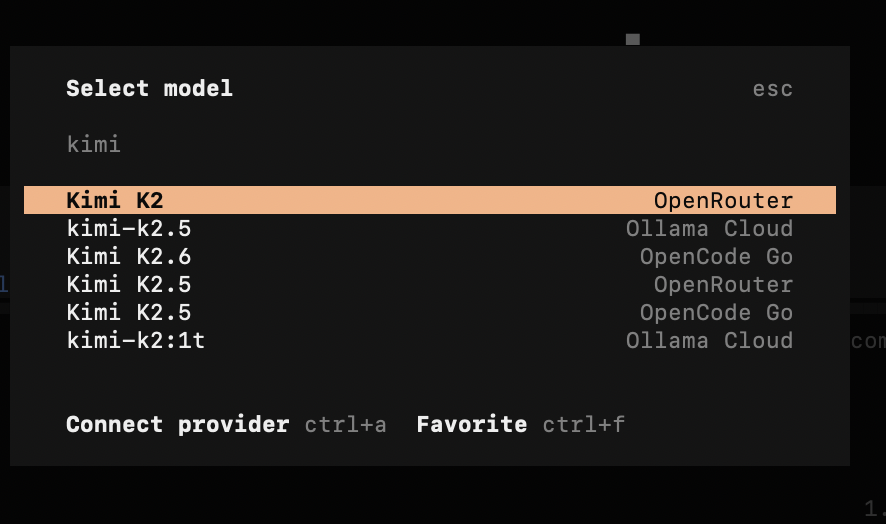

@llmdevguy @ivanfioravanti @opencode opencode go has Kimi K2.6, but I'm not sure whether the Kimi plan on opencode is using K2.5 or K2.6.

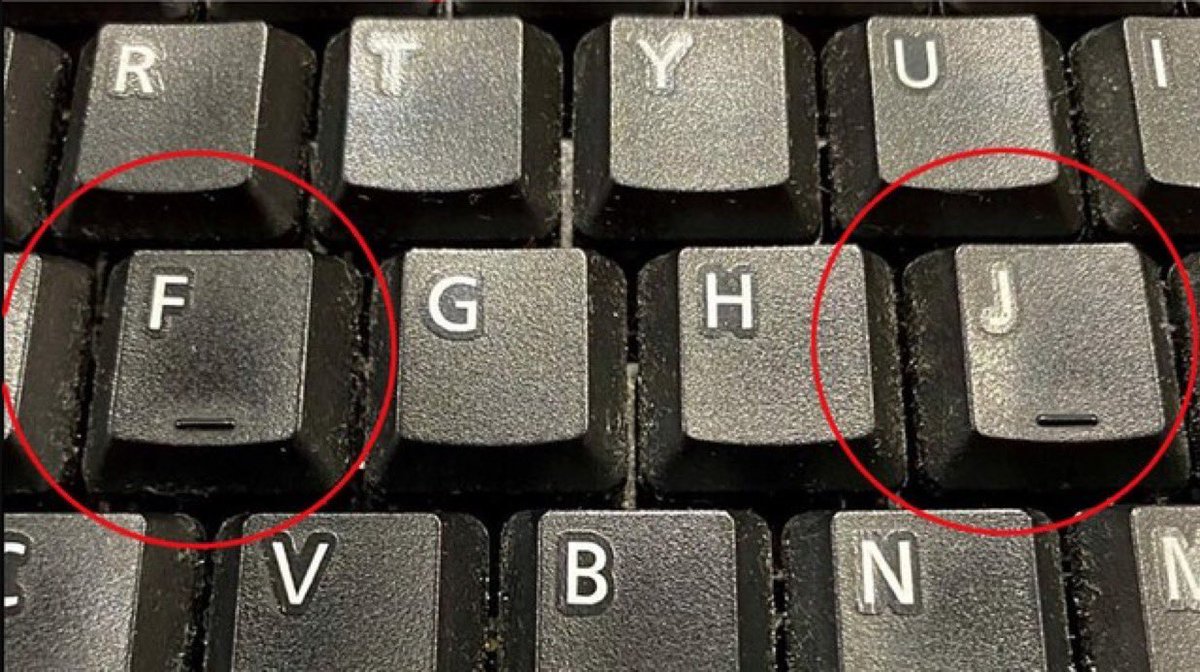

@itsD3lay hahaha, remove that key and you'll see: out of nowhere you forget how to type on a keyboard.

Español

@opencode The docs aren't updated; is the model name glm-5.1?

@nalinrajput23 In vanilla quality, no. In pricing, yes. In capabilities, and in the ability to beat Cursor with custom agents on opencode, yes.