Andrew Hojel

18 posts

We @neosigmaai @RitvikKapila are building the future of self-improving AI systems! By closing the feedback loop between production data and system improvements, we help teams capture failures, convert them into structured evaluation signals, and use them to drive continuous improvements in agent behavior.

We show how our system works on Tau3 bench across retail, telecom, and airline domains. Agent performance on the validation set (with a fixed underlying model, GPT5.4) improves from 0.56 → 0.78 (a ~40% relative improvement in accuracy).
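A quick check of that figure, as a minimal sketch: the two accuracies come from the post, while the helper name `relative_improvement` is only illustrative. It shows that the "~40%" is a relative gain over the baseline, while the absolute gain is 22 percentage points.

```python
def relative_improvement(before: float, after: float) -> float:
    """Return the change from `before` to `after` as a fraction of `before`."""
    return (after - before) / before


# Validation accuracies quoted in the post.
baseline, improved = 0.56, 0.78

absolute_gain = improved - baseline                        # in accuracy points
relative_gain = relative_improvement(baseline, improved)   # fraction of baseline

print(f"absolute gain: {absolute_gain:.2f}")
print(f"relative gain: {relative_gain:.1%}")
```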

Had a blast working on search capabilities for 5.3 Instant. Hopefully you notice that search responses feel more 🤌

OpenAI@OpenAI

GPT-5.3 Instant gives you more accurate answers. When using web search, you also get:

- Sharper contextualization

- Better understanding of question subtext

- More consistent response tone within the chat

Launching background agents and a mobile app for Claude Code. Code from anywhere!

@PrismCoder

Go to prism.engineer to join! To get instant access, like and reply “Prism codes”

@SinclairWang1 @essential_ai @FaZhou_998 We uploaded the revised version to arXiv, and it should be up in the next few days.

@SinclairWang1 @essential_ai @FaZhou_998 Hey @sinclairwang1! Apologies, we definitely should include MegaMath-Web-Pro. Launching an experiment right now and will update the tables and corresponding section with the results. We think MegaMath rocks!

Finally had a bit of time to jot down some thoughts on this solid, open data engineering work from @essential_ai.

This work brings Essential-Web, a 24T-token pre-training corpus, to the open-source community. I've always appreciated open-source research, as it can significantly promote AI democratisation. Beyond the data release, this work also provides guidance on building a systematic Taxonomy of Categories for web documents to support data governance, with impressive levels of detail—including even scripts. This technical report, in my view, deserves multiple careful readings.

Notably, it also (finally) acknowledges our contributions to curating math pre-training corpora, such as MegaMath. I sincerely appreciate that ☺️, especially given that several orgs have used data from our recent work or referenced our work without extending appropriate credit. Let’s be honest: conducting research and doing real engineering work on data is far from trivial, yet it’s often dismissed as lacking novelty. 😅

That said, I do have some respectful disagreements regarding the experiments on data quality comparisons—particularly in the math domain (cc @AndrewHojel @ashVaswani). In our MegaMath paper, we showed that even the full MegaMath-Web corpus outperforms OpenWebMath in a 55B-token continual pretraining setup (see Figure 2). Also, I’d recommend clarifying how the top 10% of MegaMath-Web documents were selected. Furthermore, I observed that several existing domain-specific datasets (e.g., code and medical) show performance comparable to the DCLM baselines reported in this paper. I believe this might raise similar concerns for others as well.

There’s also a common misunderstanding—especially among folks who aren’t hands-on in pretraining data engineering—about types of pretraining corpora.

In my view, there are two major types:

1. True pretraining corpora, meant to lay the foundational knowledge for LMs.

2. Mid-training corpora, used in later stages (e.g., during LR decay) with focused curation, smaller in scale, and tailored for specific capabilities or benchmarks.

For instance:

- FineWeb is a broad-coverage true pretraining corpus.

- FineWeb-Edu is curated for high educational value, ideal for mid-training and great for benchmarks like MMLU.

In the context of the math domain:

- MegaMath-Web = a true pretraining corpus (about 100 Common Crawl dumps from 2014–2024).

- FineMath (3+, 4+) = a mid-training corpus, filtered via edu-style classifiers.

So what’s the “educational” version of MegaMath-Web? That would be MegaMath-Web-Pro—we used the same edu classifier as FineMath to extract high-ed value docs, followed by LLM-based refinement for noise reduction.

But to be clear: simply filtering MegaMath-Web by math_score isn’t equivalent to using the edu classifier. These are different metrics, and the distinction matters for fair comparisons.

Given that EAI-TAXONOMY Math w/ FM and FineMath-3plus are reported in Table 3, I believe MegaMath-Web-Pro also deserves inclusion.

(cc @youjiacheng —thanks for the kind mention today and recognition of our work!)

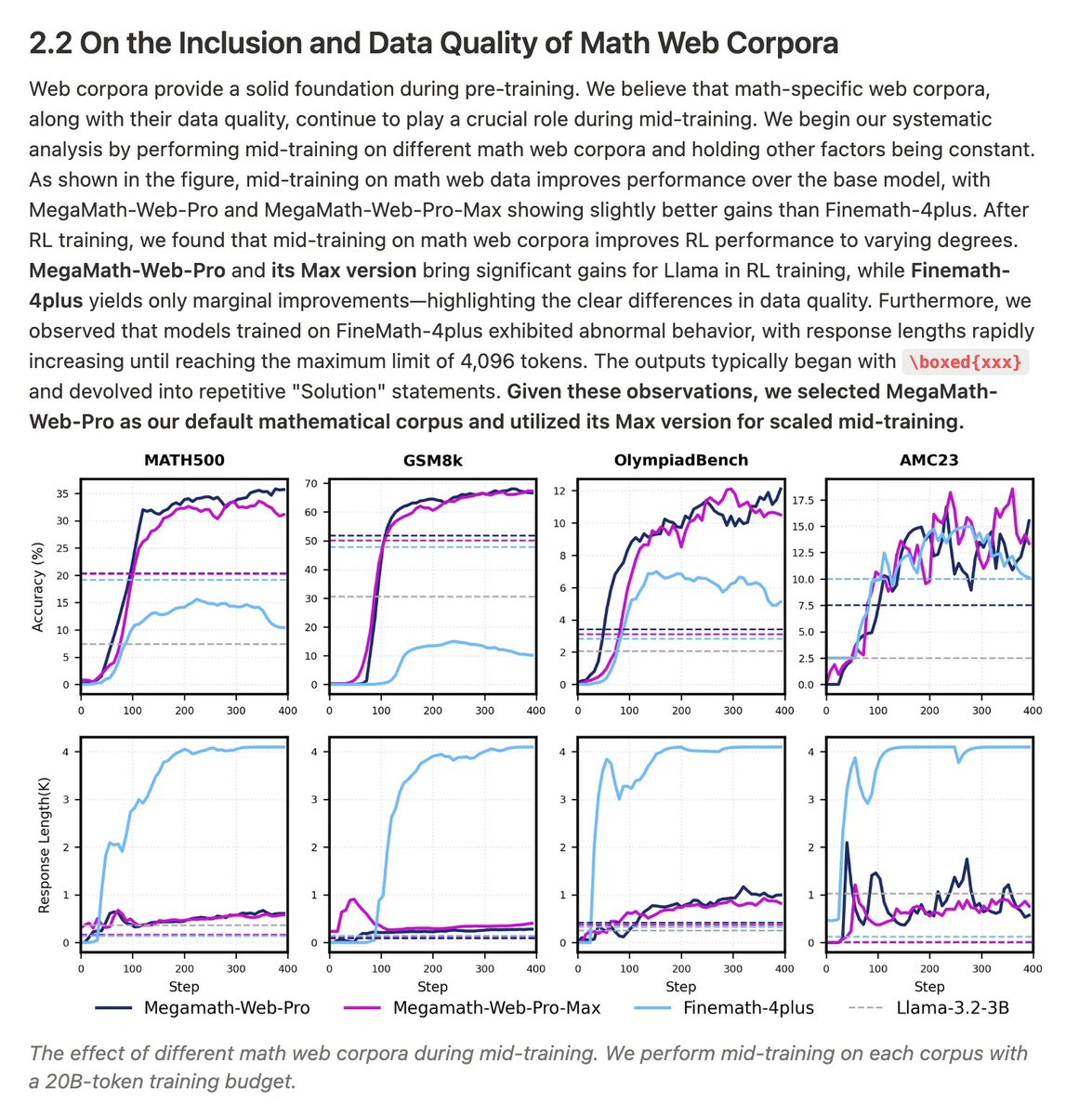

Another way to evaluate math corpus quality?

Check out our recent work: OctoThinker.

We found that mid-training on MegaMath-Web-Pro (and soon, MegaMath-Web-Pro-Max) significantly boosts RL scaling—outperforming FineMath-4+. (See third figure attached.)

Blog here: tinyurl.com/OctoThinker

The tech report + MegaMath-Web-Pro-Max open release is coming late this week or early next. Still working hard on it—stay tuned!

Finally, I want to give a shout-out to the amazing data engineering work from @huggingface (cc @LoubnaBenAllal1). Their contributions (FineWeb, FineWeb-Edu, FineMath, Nanotron) are hugely appreciated. Their curation, technical depth, and open-source spirit inspired much of our own work, including ProX (arxiv.org/abs/2409.17115) and MegaMath. Thank you!

If you’re building models and need high-quality corpora—feel free to explore ours:

MathPile: huggingface.co/datasets/GAIR/…

DCLM-Pro: huggingface.co/datasets/gair-…

FineWeb-Pro: huggingface.co/datasets/gair-…

MegaMath: huggingface.co/datasets/LLM36…

More is coming—let’s keep brainstorming & building. 🚀

Essential AI@essential_ai

[1/5] 🚀 Meet Essential-Web v1.0, a 24-trillion-token pre-training dataset with rich metadata built to effortlessly curate high-performing datasets across domains and use cases!

@SinclairWang1 @essential_ai @FaZhou_998 If there are any datasets from MegaMath Web that are more representative of its performance before LLM rewriting, we are happy to update Table 3.

@SinclairWang1 @essential_ai @FaZhou_998 We only report filtered web data as our goal is to purely measure the effects of different filtering methods to benchmark the performance of EAI-Taxonomy.

@SinclairWang1 @essential_ai @FaZhou_998 Hey @SinclairWang1 and @FaZhou_998! We ran with MegaMath Web Pro and reported the (very strong) performance in Appendix A.7. We have also added a clear explanation that all the evaluated datasets are filtered web data without any LLM intervention.

Check out what the Data Team at @essential_ai has been cooking! It's been a blast preparing this dataset and super excited to see what people use it for. Shoot me a DM with any questions or cool use cases.

Essential AI@essential_ai

[1/5] 🚀 Meet Essential-Web v1.0, a 24-trillion-token pre-training dataset with rich metadata built to effortlessly curate high-performing datasets across domains and use cases!

Andrew Hojel retweeted

Thrilled to announce our company, essential.ai 🚀

We are in an exciting era of human-computer collaboration evolving the way we will reason with, process and generate information.

At Essential AI, we are passionate about advancing capabilities in planning, reasoning, tool use and continual learning that will be critical to bridge the knowledge and skill gap between humans and computers.

Andrew Hojel retweeted

I'm thrilled to announce our company, @essential_ai . We believe that breakthroughs in AI will unlock the most profound tools for thought, advancing humanity's collective knowledge and capability.

essential.ai

Andrew Hojel retweeted

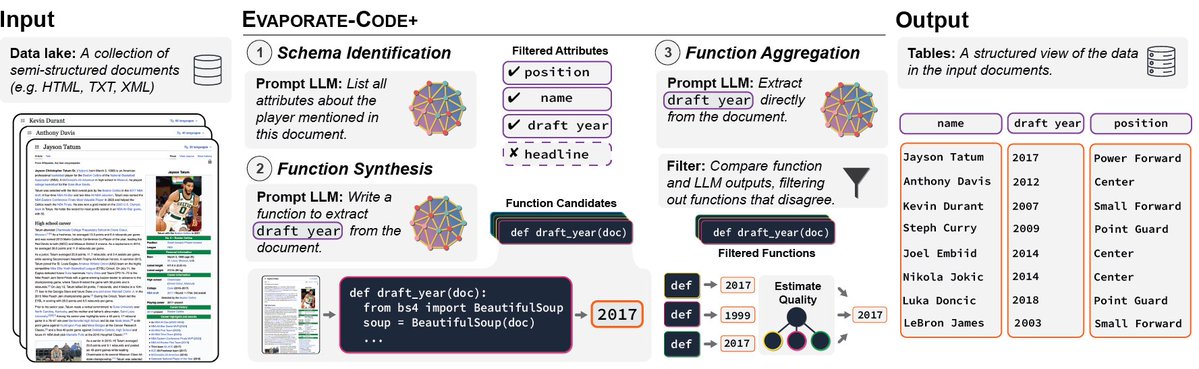

🎉 Great news: our paper on #Evaporate, led by @simran_s_arora from @HazyResearch, was accepted at #PVLDB2023!

#Evaporate uses #LLMs to extract structured views from unstructured data.

📰 Paper: arxiv.org/abs/2304.09433

💾 Code: github.com/HazyResearch/e…

#GPT4 #DB #LanguageModel

Andrew Hojel retweeted

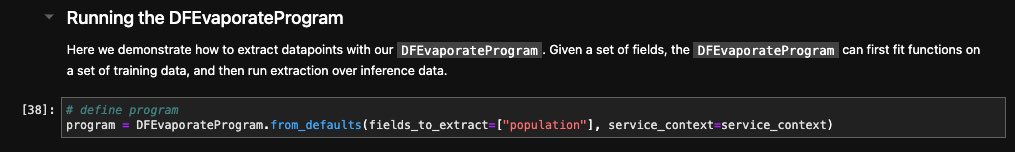

LLMs can directly extract structured data (esp w/ Function API), but can be slow/expensive. 🤔

Instead: use LLMs to generate code, run code to extract data 💡

We now have code-based extraction in @llama_index - extract df’s from arbitrary text 🧑‍💻

gpt-index.readthedocs.io/en/latest/exam…

Andrew Hojel retweeted

LMs can be expensive for document processing. E.g., inference over the 55M Wiki pages costs >$100K (>$0.002/1k toks)💰 We propose a strategy that reduces inference cost by 110x and can even improve quality vs. running inference over each doc directly!

💻 github.com/HazyResearch/e…
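A back-of-envelope reading of those numbers, as a rough sketch only: the price, total cost, page count, and 110x factor are the figures quoted in the post (the first two are stated as lower bounds), while the implied tokens-per-page value is an inference from them, not something the post reports.

```python
# Figures quoted in the post; price and total cost are lower bounds there.
PRICE_PER_1K_TOKENS = 0.002   # USD per 1,000 tokens
TOTAL_COST = 100_000          # USD for one pass over all pages
NUM_PAGES = 55_000_000        # Wikipedia pages
REDUCTION_FACTOR = 110        # claimed reduction in inference cost

# Total tokens implied by the quoted cost and price (an inference, not a reported value).
implied_total_tokens = TOTAL_COST / PRICE_PER_1K_TOKENS * 1_000
implied_tokens_per_page = implied_total_tokens / NUM_PAGES

# Cost of the same job if the 110x reduction applies to the full corpus.
reduced_cost = TOTAL_COST / REDUCTION_FACTOR

print(f"implied tokens per page: {implied_tokens_per_page:,.0f}")
print(f"cost after 110x reduction: ${reduced_cost:,.0f}")
```

Under these assumptions the quoted cost corresponds to roughly 900 tokens per page, and a 110x reduction brings a full pass from about $100K to under $1K.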
