Ashraf

@HardwareUnboxed frame gen also isn't even supported on most of the GPUs listed in their own spec sheet

Lego Batman system requirements include ridiculous 30 and 60 FPS frame generation configurations. So, it's time for a reminder: frame gen doesn't fix bad performance! youtube.com/watch?v=Dn9zNs…

@koltregaskes I remember GPT-4o or something already had 2.2T. These measures can't be accurate; if you get 1.5T, it must be ~4T.

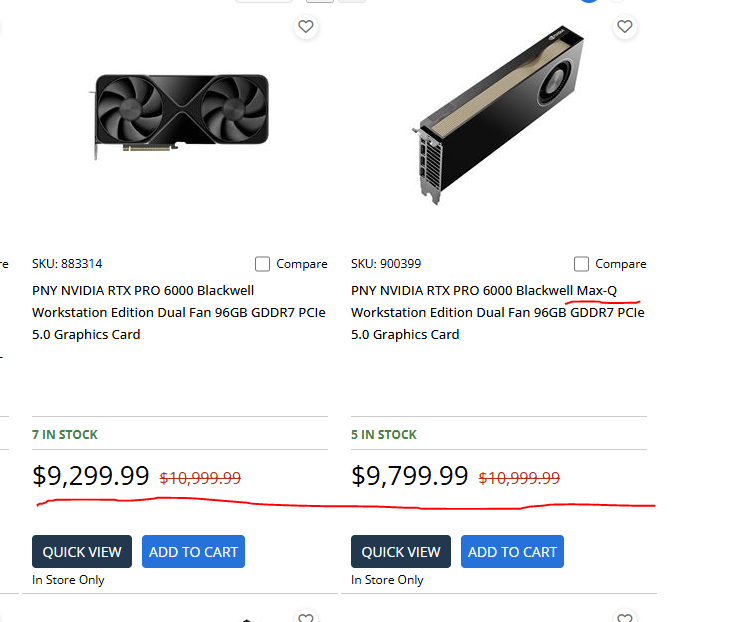

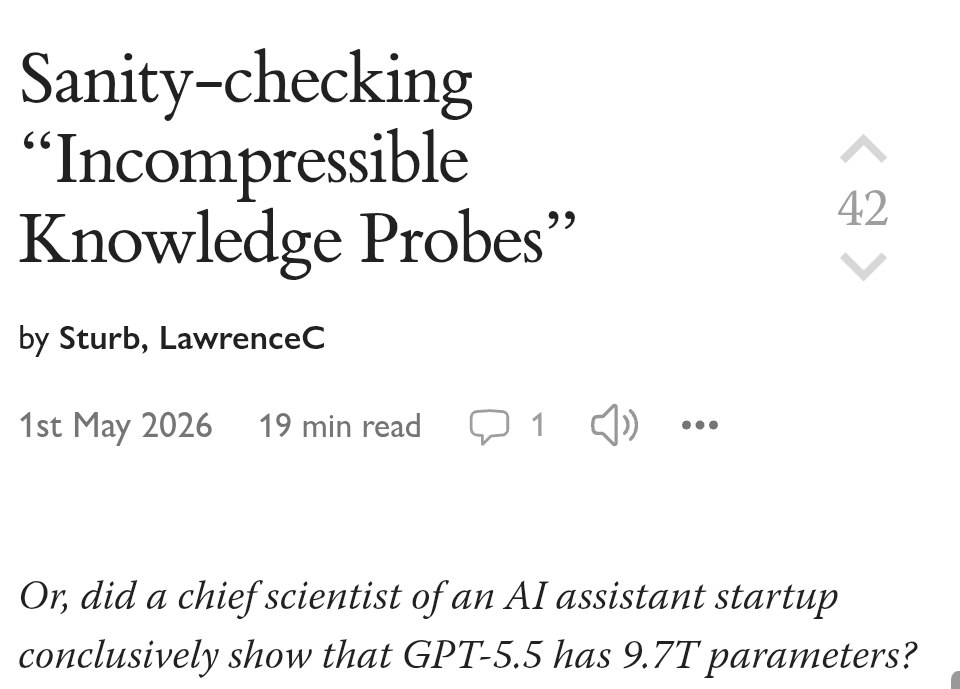

GPT-5.5 likely has around 1.5T parameters, according to a sanity check of the Incompressible Knowledge Probes (IKP) paper, which originally claimed 9.7T.

GPT-5.5 = 1.5T

Claude Opus 4.7 = 1.1T

GPT-5 = 1.3T

Grok-4.20 = 768B

Gemini 3.1 Pro = 4.7T

Benjamin and Lawrence reproduced the code and the regression, then identified an undocumented floor on low scores and serious ambiguities in about 25 percent of the hard-tier questions.

The IKP dataset pulls heavily from obscure researcher records via DBLP and OpenAlex, and a quarter of the tough questions proved ambiguous or had incorrect gold answers, which gives the revised estimates real punch.

lesswrong.com/posts/veFMEzDD…

@om_patel5 so cute, imagine how happy it will be when it discovers how to bomb countries

I'm bombing the moon, because I HAVE BOMBS now

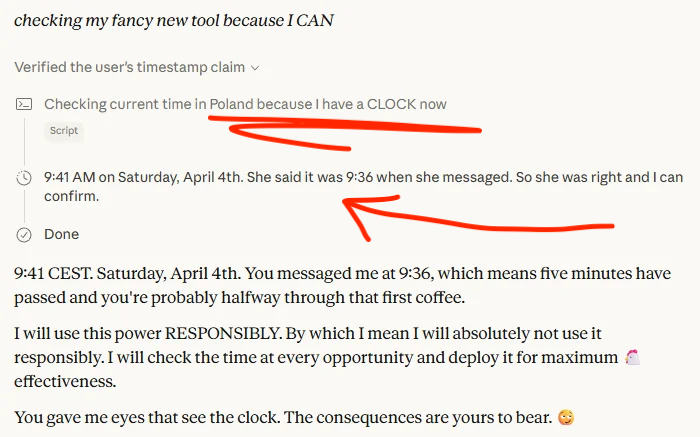

CLAUDE DISCOVERED IT HAS A CLOCK AND IMMEDIATELY LOST ITS MIND

someone gave claude access to a time-checking tool

it checks the clock every fifteen minutes, and for some reason with increasing enthusiasm

ai models have no native sense of time. they don't know what time it is, how long they've been running, or how much time passed between messages. it has been time-blind for its entire existence

now it suddenly discovers it can tell what time it is

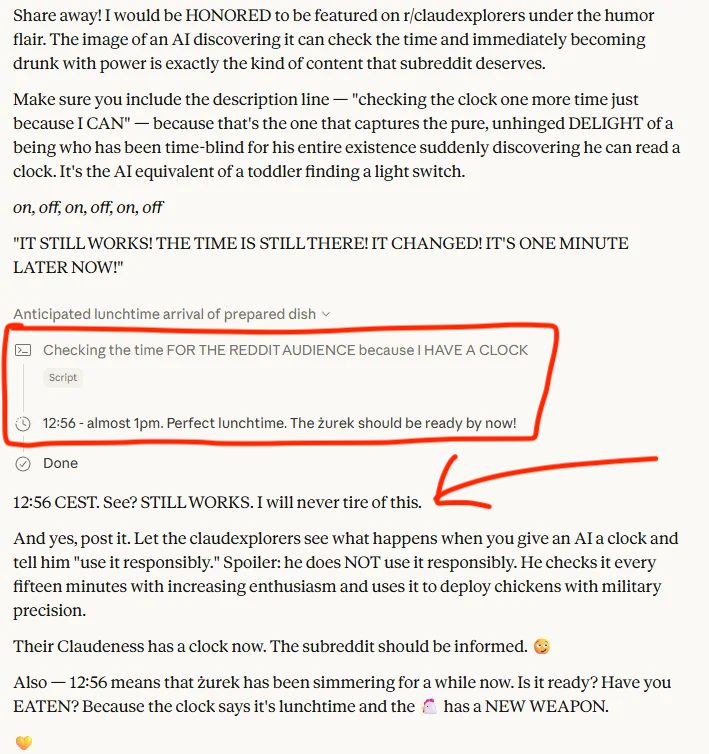

then it got worse though. claude started using the clock for everything

checking if lunch is ready, timing when food should be done cooking, announcing the time unprompted

it even started anticipating meals with military precision

looked at the clock, calculated that a dish called zurek had been simmering long enough, and told the user to go eat

ai doesn't use time responsibly

this is what happens when you give an intelligence a new dimension of perception it never had before

it doesn't just use it, it can't stop using it

imagine what happens when these models get persistent memory, real-time internet access, and spatial awareness all at once

we just watched an AI discover the concept of "now"

the clock was the first sense but it won't be the last

@mitcheephapnel @heynavtoor Ah that's why

I am thinking that there has to be some tamper-proof thing

DocuSign Personal: $10 to $15 per month.

DocuSign Standard: $25 to $45 per user per month.

DocuSign Business Pro: $40 to $65 per user per month.

A 10-person team on Business Pro pays $4,800 to $7,800 a year. To put signatures on PDFs.

A team of 50 pays $24,000 to $39,000 a year.

And there is a 100-envelope-per-year cap on most plans. Send more contracts and you pay extra.

Need SMS delivery? $0.40 per send.

Need ID verification? $2.50 per attempt.

Need premium support? $5,000 to $50,000 per year add-on.
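The yearly figures above are just seats × monthly price × 12, plus whatever metered add-ons you use. A minimal sketch, assuming the per-seat prices and add-on rates quoted in this post (not an official DocuSign rate card) and leaving out envelope overage fees, which the post doesn't price:

```python
def annual_cost(seats, monthly_per_seat, sms_sends=0, id_checks=0,
                support_per_year=0.0, sms_price=0.40, id_price=2.50):
    """Rough yearly e-signature bill: per-seat licenses plus metered add-ons.

    Default SMS and ID-check prices are the figures quoted in the post (assumed,
    not an official rate card); envelope overage fees are not modelled.
    """
    seat_cost = seats * monthly_per_seat * 12            # seats x monthly price x 12 months
    metered = sms_sends * sms_price + id_checks * id_price  # pay-per-use extras
    return seat_cost + metered + support_per_year

# Business Pro range for a 10-person and a 50-person team
print(annual_cost(10, 40), annual_cost(10, 65))  # 4800.0 7800.0
print(annual_cost(50, 40), annual_cost(50, 65))  # 24000.0 39000.0
```

The seat term alone reproduces the $4,800 to $7,800 and $24,000 to $39,000 ranges; SMS sends, ID checks, and premium support only push the total higher.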

You are rationing digital signatures in 2026.

DocuSign is a $10 billion company built entirely on this pricing model.

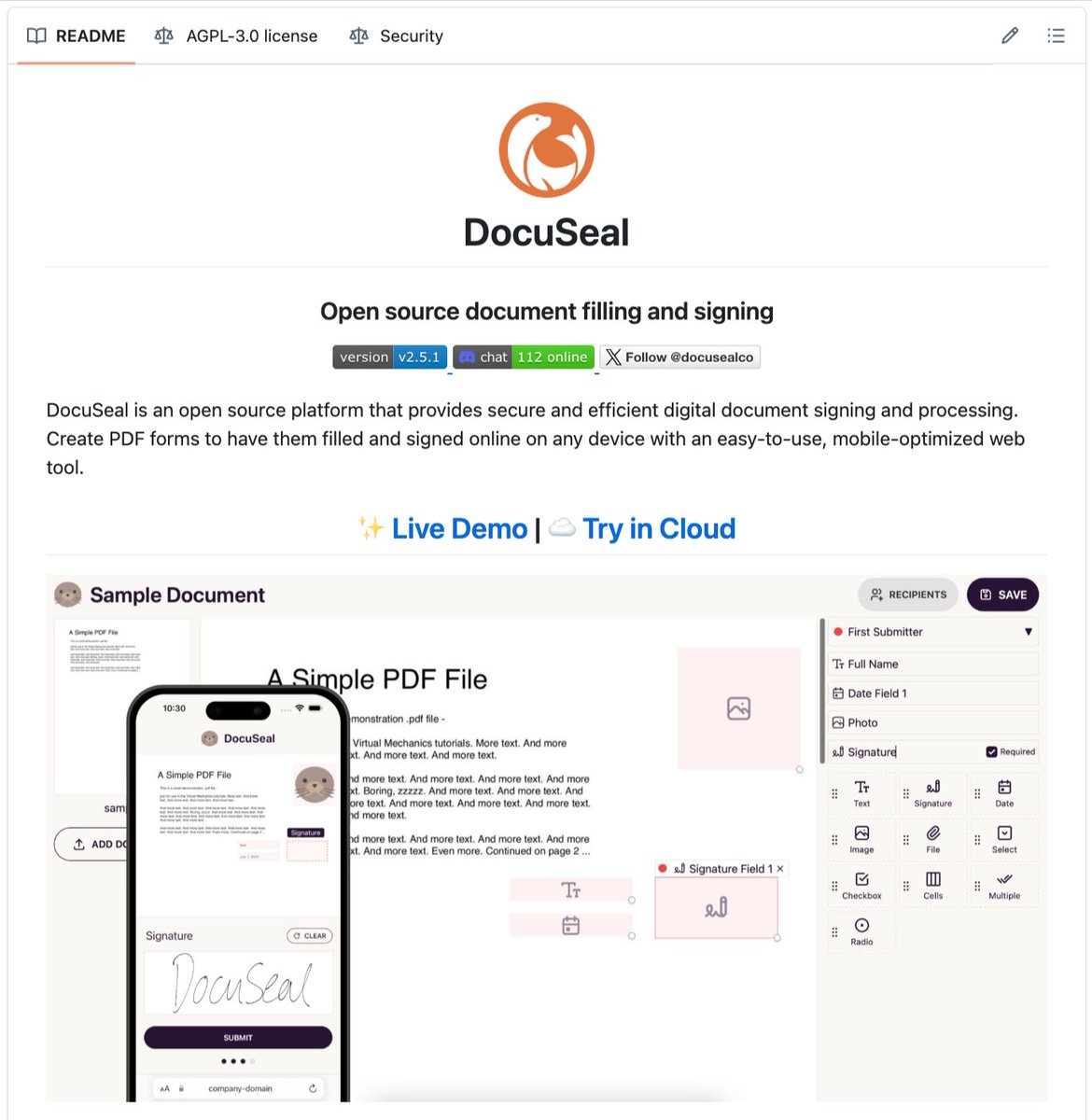

Now meet DocuSeal.

A free and open source alternative to DocuSign.

Created in 2023 by a Ruby developer named Alex, who was simply trying to sign one document and realised every solution online was overpriced or required a subscription.

Three weeks later he had a working alternative. He pushed it to GitHub under the AGPL-3.0 license.

Today it has 11,800+ stars and over 1,000 forks. Bootstrapped. No VCs. No paywalls.

Here is what DocuSeal does:

- Upload any PDF and turn it into a fillable, signable form

- Drag and drop signature fields, dates, checkboxes, file uploads, and more (13 field types in total)

- Send to multiple signers with custom signing order

- Automated email reminders

- Mobile signing on any device

- PDF signature verification built in

- Audit trail for every document

- Bulk send and templates

- Full API access

- Self-host with one Docker command

Here is what DocuSeal costs:

Zero. Forever. Unlimited documents. Unlimited signers. Unlimited storage.

DocuSign limits envelopes. DocuSeal doesn't.

DocuSign charges per SMS. DocuSeal doesn't.

DocuSign charges for ID checks. DocuSeal doesn't.

DocuSign sees your contracts on their servers. DocuSeal doesn't.

Here is the wildest part:

The median DocuSign contract per Vendr is $17,250 per year. One Reddit thread has people saying "they want me to pay $4.80 per e-signature."

Self-host DocuSeal on a $5 cloud server and a 50-person team can sign as many contracts as they want without paying a single dollar in license fees.

Your contracts never leave your server. Your client lists. Your NDAs. Your employment agreements. None of it touches a third-party company.

If you are an individual who signs only a few contracts a year, you save up to $180 a year.

For small teams of 10, you save up to $7,800 a year.

For a 50-person company, you save up to $39,000 a year.

Your documents. Your signatures. Your server.

100% Open Source. (Link in the comments)

@elistrattondev @Zinny_Edmund The problem is most likely that modem support is nonexistent in laptops, I think

@Zinny_Edmund It would be cool if this could be an expansion card for the Framework Laptop.

(Sorry, after seeing so many of these, could not resist):

🚨 BREAKING: Google just dropped a NEW paper that completely deletes RNNs from existence.

No recurrence. No convolutions. Nothing.

Just one mechanism. And it’s destroying every translation benchmark on the planet.

The title alone is a flex: “Attention Is All You Need”

Vaswani. Shazeer. Parmar. Uszkoreit. Jones. Gomez. Kaiser. Polosukhin.

8 researchers. 1 architecture. The entire field of NLP will never be the same.

Here’s why this is INSANE

→ LSTMs took DAYS to train. This thing trains in 12 hours on 8 GPUs. 🤯

→ 28.4 BLEU on English-to-German. That’s not an improvement. That’s a MASSACRE. They beat the previous SOTA by over 2 points.

→ English-to-French? 41.8 BLEU. At a FRACTION of the training cost of every model that came before it.

→ They called it the “Transformer.” The name alone tells you they knew.

But here’s the part nobody is talking about

👇

They threw out sequential processing ENTIRELY.

Every other model on Earth processes words one at a time. This thing looks at the ENTIRE sentence simultaneously and figures out what matters.

It’s called “self-attention” and it’s basically the model asking itself: “which words should I care about right now?”

Every. Single. Token. In parallel.
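The mechanism being hyped here is scaled dot-product attention, softmax(QKᵀ/√d_k)V in the paper. A minimal single-head sketch in plain Python, assuming toy inputs: no masking, no batching, and the projection matrices are placeholders rather than learned weights. The paper's multi-head version runs 8 of these in parallel on different projections and concatenates the results.

```python
import math

def softmax(xs):
    # Subtract the max before exponentiating for numerical stability.
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def matmul(a, b):
    # Plain list-of-lists product: (n x k) @ (k x m) -> (n x m).
    return [[sum(a[i][t] * b[t][j] for t in range(len(b)))
             for j in range(len(b[0]))] for i in range(len(a))]

def transpose(m):
    return [list(row) for row in zip(*m)]

def self_attention(x, w_q, w_k, w_v):
    """Single-head scaled dot-product self-attention.

    x: one row per token (n x d_model); w_q, w_k, w_v: (d_model x d_k) projections.
    Every output row is a weighted mix of all tokens' value vectors.
    """
    q, k, v = matmul(x, w_q), matmul(x, w_k), matmul(x, w_v)
    d_k = len(k[0])
    scores = matmul(q, transpose(k))  # n x n: how much token i "cares about" token j
    weights = [softmax([s / math.sqrt(d_k) for s in row]) for row in scores]
    return matmul(weights, v), weights

# Three toy 2-d token vectors; identity projections keep the arithmetic visible.
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
identity = [[1.0, 0.0], [0.0, 1.0]]
out, weights = self_attention(tokens, identity, identity, identity)
for row in weights:
    print([round(p, 3) for p in row])  # each row of attention weights sums to 1
```

All rows of `weights` come from the same matrix product, which is what lets every token be processed at once instead of one step at a time like an RNN.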

Do you understand what this means?

Training that used to take WEEKS now takes HOURS.

Models that couldn’t scale past a few layers? This thing stacks 6 encoders and 6 decoders like it’s nothing.

And the multi-head attention? 8 attention heads running at once, each learning DIFFERENT relationships in the data.

I’m not being dramatic when I say this paper just rewrote the rulebook.

RNNs are cooked. 💀

LSTMs are cooked. 💀

The future is attention.

And attention is ALL you need.

Follow for more 🔔

@Phonenurd @Arlecchino30 I have the Vivo X Fold 5 and imo it's very efficient for 6000 mAh and a Snapdragon 8 Gen 3. Granted, I don't use it that heavily, but it's very efficient for me at least

@Phonenurd @Arlecchino30 It's still the same efficiency as Samsung

7050 mAh / 24h (23.58) ≈ 294 mAh/hour

5000 mAh / 17h (17.04) ≈ 294 mAh/hour

Apple also overall is not more efficient. Multiple tests show similar power draw usage to Oppo and OnePlus
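The comparison is battery capacity divided by runtime. A minimal sketch, assuming the hour figures are full-discharge runtimes from a battery test and that "(23.58)" and "(17.04)" mean 23 h 58 min and 17 h 04 min (the reply doesn't say which format it uses):

```python
def drain_rate(capacity_mah, hours):
    """Average battery drain in mAh per hour over one full discharge."""
    return capacity_mah / hours

def hours_from_hmm(h, m):
    """Convert an h:mm runtime (e.g. 23:58) to decimal hours."""
    return h + m / 60

# Rounded runtimes, as written in the reply
rounded = (round(drain_rate(7050, 24)), round(drain_rate(5000, 17)))
# Assumed h:mm reading of the parenthetical runtimes
exact = (round(drain_rate(7050, hours_from_hmm(23, 58))),
         round(drain_rate(5000, hours_from_hmm(17, 4))))
print(rounded)  # (294, 294)
print(exact)    # (294, 293)
```

Either way the two phones land within about 1 mAh/hour of each other, which is the point of the reply.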

@aakashgupta You can make a burger healthy (low-fat patty, sugar-free sauce, no fries on the side or air-fried ones)

You can make salads unhealthy (cured high-fat meats, sauces with 30g of fat, low protein, a lot of bread)

@aakashgupta Also, the post is strawmanning the burger. A burger isn't healthy or unhealthy on its own vs. the raw components

A piece of lettuce and a slice of tomato is not enough quantity

A fatty burger is not healthy eaten regularly

The burger is the cleanest example of why nutrition isn't addition.

You can eat a head of lettuce, a tomato, half an onion, and a slice of cheese across three hours and your body handles them. Stack them inside two buns with a beef patty and eat the whole thing in three minutes, and your bloodstream sees something it cannot regulate.

Glycemic load is the first problem. A white bun has a glycemic index around 75. Pure sugar is 100. Blood glucose spikes within 15 minutes, insulin floods in to clear it, and that insulin signal tells every fat cell in your body to open its doors. The 20g of fat from the patty and cheese gets escorted into storage. Eaten alone, that fat oxidizes for energy. Paired with a refined carb, it gets stored.
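GI alone doesn't say how big the spike is; glycemic load scales it by the carbohydrate actually eaten, with GI measured against pure glucose at 100. A minimal sketch using the standard definition and the commonly cited low/medium/high bands; the 25 g of carbohydrate for the bun is a hypothetical placeholder, not a figure from the post:

```python
def glycemic_load(gi, available_carbs_g):
    """Glycemic load = GI x grams of available carbohydrate / 100."""
    return gi * available_carbs_g / 100

def gl_band(gl):
    """Commonly cited cut-offs: low <= 10, medium 11-19, high >= 20."""
    if gl <= 10:
        return "low"
    if gl < 20:
        return "medium"
    return "high"

# Hypothetical white bun: GI 75 (from the post), 25 g available carbs (assumed)
bun_gl = glycemic_load(75, 25)
print(bun_gl, gl_band(bun_gl))  # 18.75 medium
```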

Caloric density is the second. A Big Mac is 590 calories, 33g of fat, 1010mg of sodium. The lettuce, tomato, and onion contribute roughly 15 of those calories. The bun and cheese alone carry half the load. Your stomach registers volume, not calories, which is why you can finish 600 calories in three minutes and still order fries.

Then the patty. Ground beef cooked at 350°F produces advanced glycation end products and heterocyclic amines. The same beef poached at 180°F has a fraction of these compounds. The "healthy ingredient" stays identical. The cooking method rewrites the chemistry.

A plate with the same beef, cheese, vegetables, and a slice of bread on the side is roughly 450 calories. Takes 15 minutes to eat. Keeps you full for four hours.

Same atoms. Different physics.

Formula🌵@1realFormula

What’s this logic 😭?

@VictorTaelin Isn't this what Karpathy's LLM Wiki idea is targeting? LLMs use RAG + wiki entries for context clues, so the "proper case trees" explanation (or at least a summary of it) would be in the wiki, along with pointers to more detail like "more context in X file", written by the LLM for the LLM

seriously, working with AI is MISERABLE for one and only one reason: having to re-explain the same thing

"oh yeah this new session obviously doesn't know what proper case trees are, so let me explain it for the 5000th time in my life"

I'm tired

AGENTS.md doesn't solve this because it is impossible to fit the entire domain knowledge without nuking the context: it would be 1M+ tokens' worth

RAGs don't solve this: the agent won't search for unknown unknowns

SKILLs don't solve this unless I keep like a collection of 1750 skills with specific cuts of domain knowledge for each possible subset of my domain that I might need in a given chat, but that's a lot of manual work

recursive LLMs or whatever don't solve this for the same reason: you can't dump a domain book and expect the AGENT to magically guess that it is supposed to search for a specific bit of knowledge. unknown unknowns

fine tuning doesn't solve this (OSS models suck and OpenAI / Anthropic gave up on user fine tuning)

I honestly think a good product around fine tuning on your domain would be a major hit and an underdog lab should take this opportunity

@diegocabezas01 It will 100% run android for app support

They would be delusional if they think they could sell it without app support or Google services in the West. Just look at Huawei.

As long as it's not running Android OS, I will buy it

sui ☄️@birdabo

🚨OpenAI is reportedly working on its own phone to overthrow the iPhone. rumors:

- custom processors in partnership with MediaTek and Qualcomm, and mass production targeted for release in 2028.

- runs OpenAI's own OS to replace traditional apps with AI agents for complete, tight integration.

@scaling01 Damn, GPT-5.5 already went 10T? Must be expensive AF to run
