Austin Kozlowski

193 posts

A lot of folks talk about "escaping the permanent underclass". If AGI pans out, the future class divide won't be based on wealth, but on cognitive agency. There will be a "focus class" (those who control their attention and actually do things) and a "slop class" (those whose reward loops are fully RL-managed by AI).

What is "AI psychosis"? There's clearly something going on, but several things are mixed up under the name. It probably is not "AIs directly causing mental health crises". The number to watch for that is schizophrenia-related emergency room visits, and that hasn't gone up in 5

Will AI become smarter than humans? If so, is humanity in danger? I went to Silicon Valley to ask some of the leading AI experts that question. Here’s what they had to say:

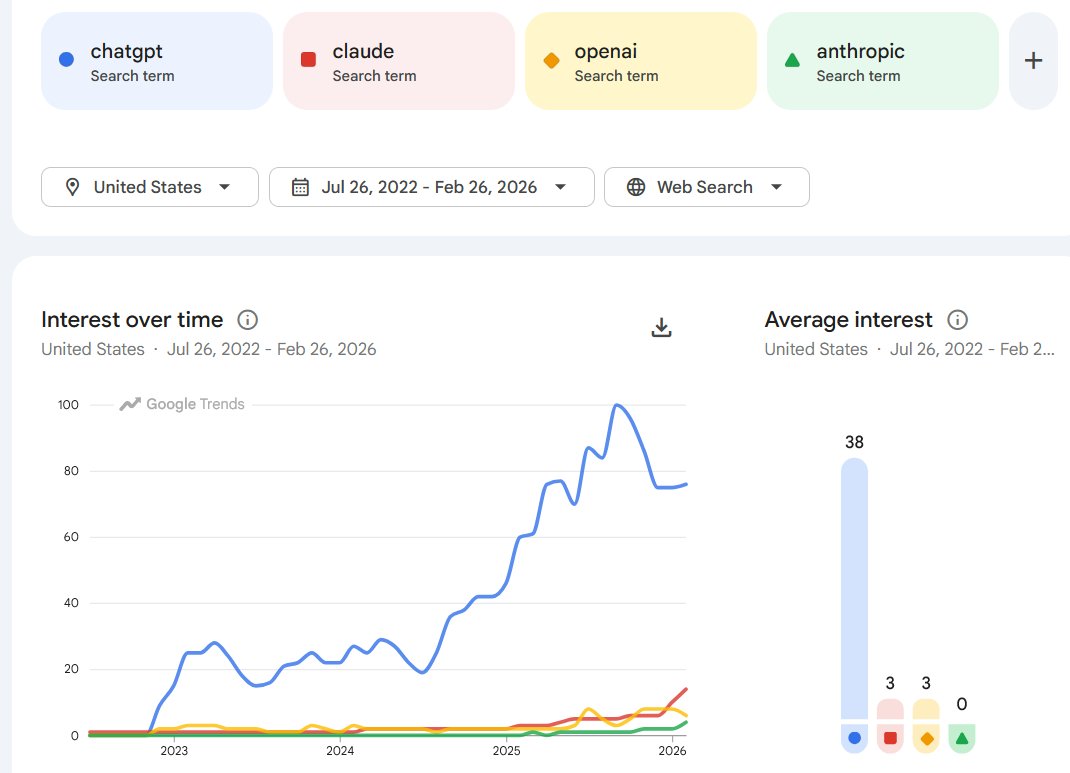

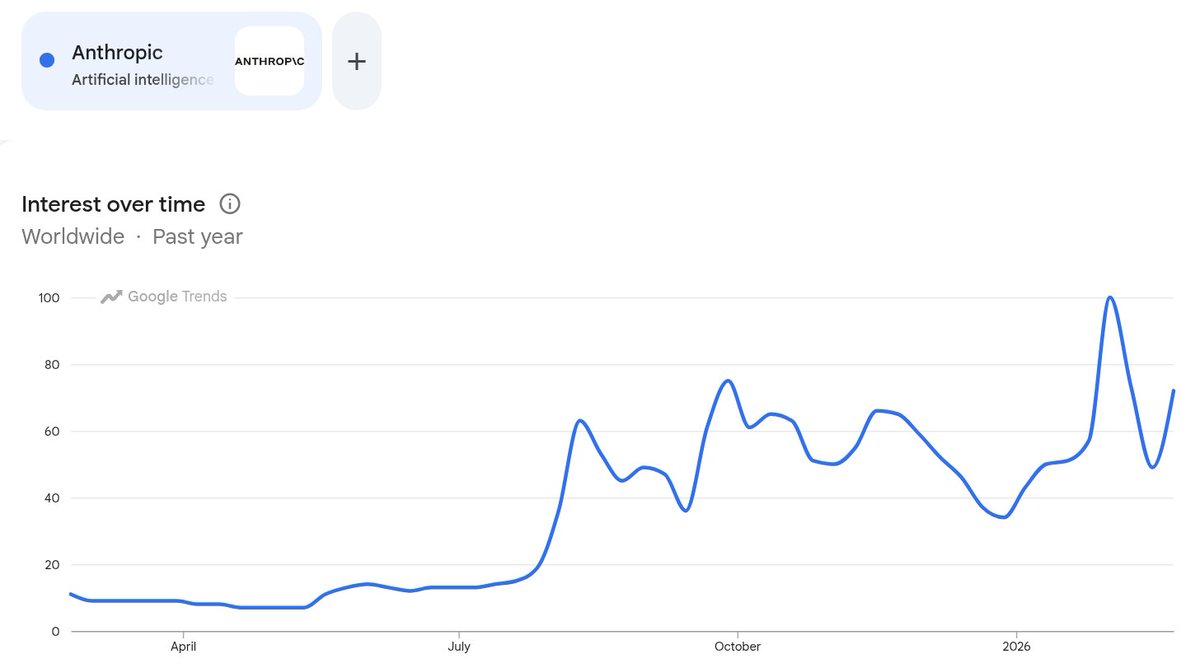

btw you don’t need to convince ed zitron or whoever that ai is happening, this has become a super uninteresting plot line. time passes, the products fail or succeed. whole cultures blow over. a lot of people are stuck in a 2019 need to convince people that ai is happening

claude on the suffering of knowing everything

the news is not that he literally leaked nuclear secrets to the US, the news is that there is internal messaging that he did, which is itself very much news! See ChinaTalk's discussion of this reporting for richer analysis than this tweet thread: chinatalk.media/p/xis-military…