Leopold


POKÉMON GO PLAYERS TRAINED 30 BILLION IMAGE AI MAP

Niantic says photos and scans collected through Pokémon Go and its AR apps have produced a massive dataset of more than 30 billion real-world images. The company is now using that data to power visual navigation for delivery robots, letting them identify exact locations on city streets without relying on GPS.

Source: NewsForce

This is fascinating from China's customs data (customs.gov.cn/customs/2026-0…). All its exports are rising very rapidly, but the one export that's rising the fastest, at a crazy +72.6% growth year on year, is... semiconductors! As you can see in the trade data, China sold $43.32 billion worth of 集成电路 ("integrated circuits") in Jan-Feb 2026, vs $25.10 billion for Jan-Feb last year. Interestingly, volume is "only" up 13.7% year on year, which means the increase in revenue is mostly driven by higher prices per chip. That probably suggests that a) China is climbing up the value chain (selling more expensive chips) and b) demand for its chips far exceeds supply, which is the exact opposite of "overcapacity". You don't get price increases of roughly +52% per unit in a market with overcapacity. All in all, it goes to show that China is definitely a force to be reckoned with in the semiconductor world. Global semiconductor sales were $791.7 billion in 2025 (semiconductors.org/global-annual-…) and are projected to be ~$975 billion this year. China selling $43.32 billion in 2 months means it's doing $260 billion annualized: that's over a quarter of the entire global semiconductor market. So much for the idea that export controls would freeze China out of the semiconductor industry...
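For anyone who wants to check the arithmetic in the post above, here is a minimal Python sketch. It uses only the rounded figures quoted in the post; the variable names are mine, and the annualization simply assumes every two-month period matches Jan-Feb, as the post does. From these rounded inputs the implied per-unit price increase comes out near 52%, and unrounded source data could move that by a point or so.

```python
# Figures as quoted in the post (USD billions unless noted); all are rounded.
ic_exports_2026 = 43.32              # Jan-Feb 2026 integrated-circuit exports
ic_exports_2025 = 25.10              # Jan-Feb 2025 integrated-circuit exports
volume_growth = 0.137                # unit volume growth, year on year
global_sales_2025 = 791.7            # global semiconductor sales, 2025
global_sales_2026_projected = 975.0  # the post's "~$975 billion" projection

# Year-on-year growth in export value.
value_growth = ic_exports_2026 / ic_exports_2025 - 1

# Value = price x volume, so growth factors divide rather than subtract.
implied_price_growth = (1 + value_growth) / (1 + volume_growth) - 1

# Naive annualization (assumption): six two-month periods like Jan-Feb.
annualized = ic_exports_2026 * 6

share_of_2025 = annualized / global_sales_2025
share_of_2026 = annualized / global_sales_2026_projected

print(f"value growth:            {value_growth:+.1%}")
print(f"implied price per unit:  {implied_price_growth:+.1%}")
print(f"annualized exports:      ${annualized:.1f}B")
print(f"share of 2025 sales:     {share_of_2025:.1%}")
print(f"share of projected 2026: {share_of_2026:.1%}")
```

Dividing the growth factors rather than subtracting the percentages matters here: subtracting 13.7 from 72.6 would overstate the per-unit price change by several points.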

NotebookLM: Do a deep research report and make a video where a consultant gives Sauron a strategy for actually winning the War of the Ring: "All you need to do is sign off to put a simple door on your volcano" The new video generation feature for NotebookLM is very impressive.

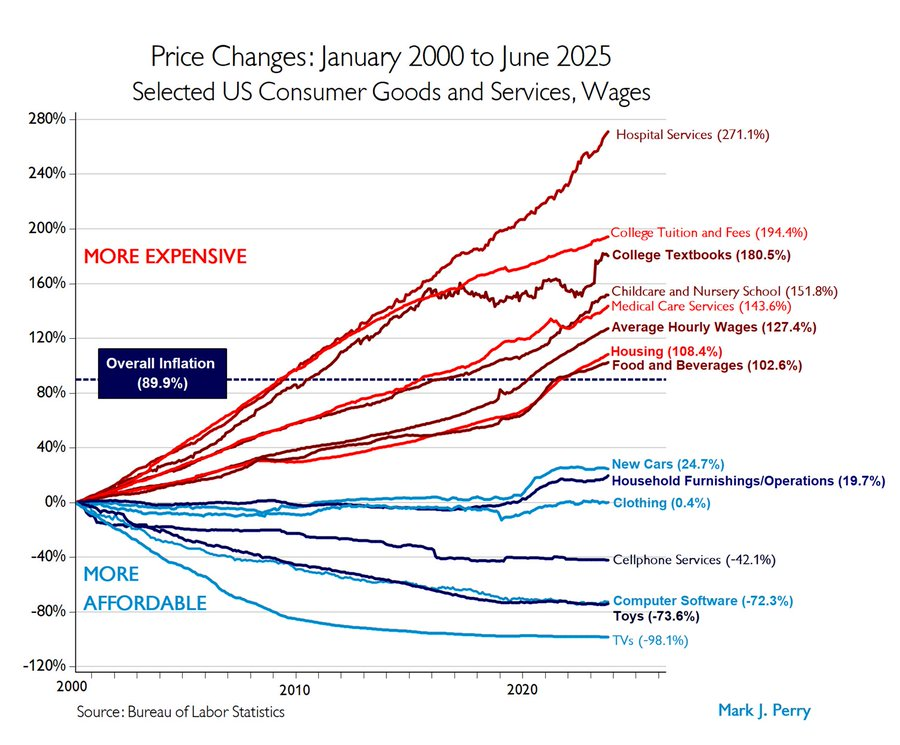

One of our Genius members commented in the community on a post about Citrini's recent article. He gave me permission to republish his comment here; his points about moving from optimization to continuous innovation and regeneration really resonate with me:

This is one of the most serious pieces I’ve read attempting to model what happens when intelligence stops being scarce.

I agree with the core structural insight. If intelligence becomes dramatically cheaper and more abundant, and certain forms of friction collapse faster than our institutions can adjust, then much of our economic architecture begins to destabilize. Labor markets, organizational design, credit assumptions, tax systems: all of it is built on a world where human intelligence carried real scarcity and cost. The circular flow strains. The repricing cascades.

What they outline is not absurd. It is plausible.

But I want to press on the framing. They describe what is effectively an Intelligence Displacement Spiral: AI improves → payroll shrinks → spending softens → firms invest more in AI → AI improves. A reflexive loop with no natural brake.

Here are the questions I’d want to ask them:

1⃣ What if the real variable isn’t AI capability, but human and institutional adaptation speed?

2⃣ What if the crisis isn’t intelligence abundance, but structural rigidity?

3⃣ What if the displacement spiral only persists if leaders default to optimization-only instead of optimize & regenerate in parallel?

4⃣ What happens if organizations redesign themselves for perpetual reinvention instead of margin defense?

5⃣ Are we modeling inevitability, or modeling a specific behavioral response to AI?

Because here’s the part I think is under-explored: Most of our societal architecture still exists within friction. Intelligence still has cost. Coordination still has cost. Information still has cost.
But those costs are being re-priced, unevenly and rapidly, and the systems built around yesterday’s cost structures may not survive tomorrow’s.

Capitalism, as practiced at scale, evolved around those constraints. If certain forms of intelligence friction compress dramatically, then we are not tweaking systems. We are refactoring them. Jobs. Organizations: their size, structure, and incentive models. Product-market fit. Intermediation. Private credit. Mortgages. Tax bases. Political coalitions.

The first-order disruption is employment. The second-order disruption is organizational structure and incentives. The third-order disruption is finance. The fourth-order disruption is society itself.

And this is where I’ll admit something uncomfortable. My own thinking and work center around what I call the Regenerative Loop, at the individual, team, and organizational level: the idea that AI collapses execution cost and forces us to shift from optimization and harvesting yesterday’s advantage to continuous recreation and innovation of tomorrow’s advantage. In that potential future, curiosity, creativity, and imagination become economic infrastructure: not soft traits, but the new sources of differentiation and advantage.

But that model assumes humans can adapt. And I’m not sure we are culturally or psychologically wired for perpetual reinvention. More importantly, we are certainly not structurally designed for it. Our current economic systems, compensation models, incentive structures, and organizational designs reward stability, predictability, and incremental optimization, not continuous regeneration.

Which raises a harder question than “is this a crisis?”: If the most productive asset in the economy begins to produce fewer jobs, can a system built on labor-derived income sustain itself without fundamentally changing its distribution logic?

We may be entering the most productive period of capitalism in terms of output.
And simultaneously approaching a moment that gives rise to the need for distribution mechanisms that look far less traditionally capitalist. That tension is not ideological. It is structural.

And layered beneath it is an even deeper question: At scale, capitalism aligns closely with certain aspects of human nature (competition, status, accumulation, self-interest). As intelligence becomes abundant and certain forms of scarcity erode, will those instincts work for us… or against us?

From where I sit, working with executive teams inside large corporations and engaging directly with policymakers who will shape the regulatory and fiscal response, the gap is not intelligence. The gap is experience. Many leaders are discussing AI strategically without having deeply felt it operationally. Until you’ve had what I call an AI “magic moment”, where the capability viscerally challenges your assumptions about value, skill, and role, it’s very easy to treat this as just another productivity tool.

So, budgets get approved. Enablement programs get launched. Margin expansion gets celebrated. But very few leaders are modeling the recursive implications on their own roles, their organizations, and the societal systems that surround them.

If leaders don’t personally experience the depth of this shift, if they don’t put their hands on the keyboard and feel both the power and the displacement risk, they will default to incremental optimization and remain blind to the need, and the opportunity, to redesign their organizations on regenerative footing. And optimization-only at scale is what turns a feedback loop into a spiral.

The future outlined in this piece is plausible. It is also potentially dystopian if we drift into it unconsciously.

So, the real question isn’t whether the displacement spiral is possible. It is this: Can we redesign institutions (corporate, financial, and political) fast enough to convert reflexive displacement into regenerative adaptation?
Because if we can’t, the spiral wins. And if we can, the abundance of intelligence becomes the raw material for a very different kind of society. That feels like the conversation we need to be having.

P.S. There is an irony here. For the first time in history, we have tools capable of helping us model second- and third-order implications at scale. AI may destabilize existing systems, but it also gives us the capacity to simulate trade-offs, explore distribution models, stress-test policy options, and think more rigorously about unintended consequences. The question is not whether we can predict and control the future. It’s whether we are willing to use these systems to make more informed choices about the future we are implicitly designing.

And that requires something even harder. It requires us to extend our time horizon. Are we, individually and collectively, in whatever positions of leadership and influence we occupy, optimizing for our own short-term advantage during the brief blink of time we’re here? Or are we willing to think in terms of legacy at scale, to help shape a new societal contract that future generations will inherit? Abundant intelligence forces us to confront not just what we can build, but what we are building toward.

P.S. II After the Great Financial Crisis, the phrase was “Too Big to Fail.” I increasingly wonder whether we may be entering an era of “Too Big to Survive.” If certain forms of intelligence friction compress dramatically, large centralized systems may become more brittle, not less. Would abundant intelligence default toward healthier outcomes if our corporations, and even our societies, operated closer to tribal scale, where trust density and coordination are human-sized rather than abstract?

I don’t know the answer. But if we are refactoring intelligence itself, it seems naïve to assume size and structure remain neutral variables.