Colin Wright

Mo sources mo problems? Not anymore: Rolling out now, NotebookLM can auto-label & categorize sources (when you have 5+), so you can spend less time scrolling and more time thinking/learning/philosophizing, etc. Rename, reorganize, & personalize (emojis!) to your ❤️'s content.

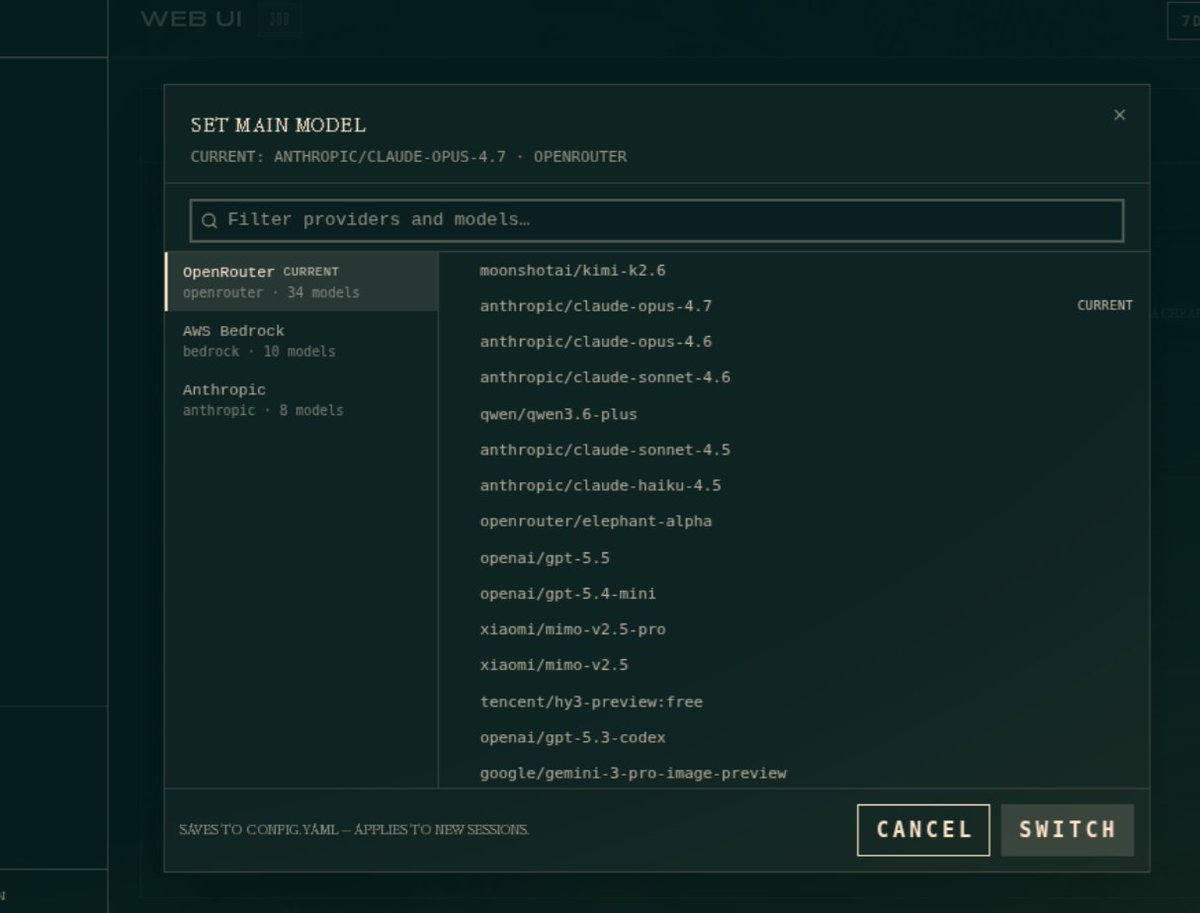

Claude Code in the terminal will now show recaps when you switch focus away from the session and then come back. This should help you stay more in flow while multi-clauding.

Meet Kimi K2.6: Advancing Open-Source Coding

🔹 Open-source SOTA on HLE w/ tools (54.0), SWE-Bench Pro (58.6), SWE-bench Multilingual (76.7), BrowseComp (83.2), Toolathlon (50.0), CharXiv w/ python (86.7), Math Vision w/ python (93.2)

What's new:
🔹 Long-horizon coding: 4,000+ tool calls, over 12 hours of continuous execution, with generalization across languages (Rust, Go, Python) and tasks (frontend, devops, perf optimization).
🔹 Motion-rich frontend: videos in hero sections, WebGL shaders, GSAP + Framer Motion, Three.js 3D.
🔹 Agent Swarms, elevated: 300 parallel sub-agents × 4,000 steps per run (up from K2.5's 100 / 1,500). One prompt, 100+ files.
🔹 Proactive Agents: the K2.6 model powers OpenClaw, Hermes Agent, etc., for 24/7 autonomous ops.
🔹 Claw Groups (research preview): bring your own agents, command your friends', bots & humans in the loop.

K2.6 is now live on kimi.com in chat mode and agent mode. For production-grade coding, pair K2.6 with Kimi Code: kimi.com/code

🔗 API: platform.moonshot.ai
🔗 Tech blog: kimi.com/blog/kimi-k2-6
🔗 Weights & code: huggingface.co/moonshotai/Kim…