CaptainBeard

380 posts

@CaptainBearddd

I build stuff (startups), I code (JS/TS), I manage AI teams (Lead Technical Project Manager @ Tether, Building https://t.co/xKLCz9nM6n) | Opinions are my own.

Just shipped the Identity Layer for @tendrdotbid powered by @sns - every tendr.bid user now gets a personal `

8 billion humans deserve an intelligence that doesn't blink when the signal dies. 🧠 Introducing @QVAC Psy, our foundational models built on the mathematical stability of Psychohistory. With QVAC MedPsy, our local-first medical health AI model, we’ve proven that superior methodology beats raw parameter count. Our 1.7B and 4B models deliver expert-level healthcare reasoning on consumer hardware. The "tiny brain" for the next galaxy is here. Fully open-source. Fully sovereign. Learn more: qvac.tether.io/models

Starting June 1st, GitHub Copilot will move to a usage-based billing model as it supports more agentic and advanced workflows. In early May, you'll see a preview bill experience that shows your projected costs before the transition. 👉 Read more about the upcoming change: github.blog/news-insights/…

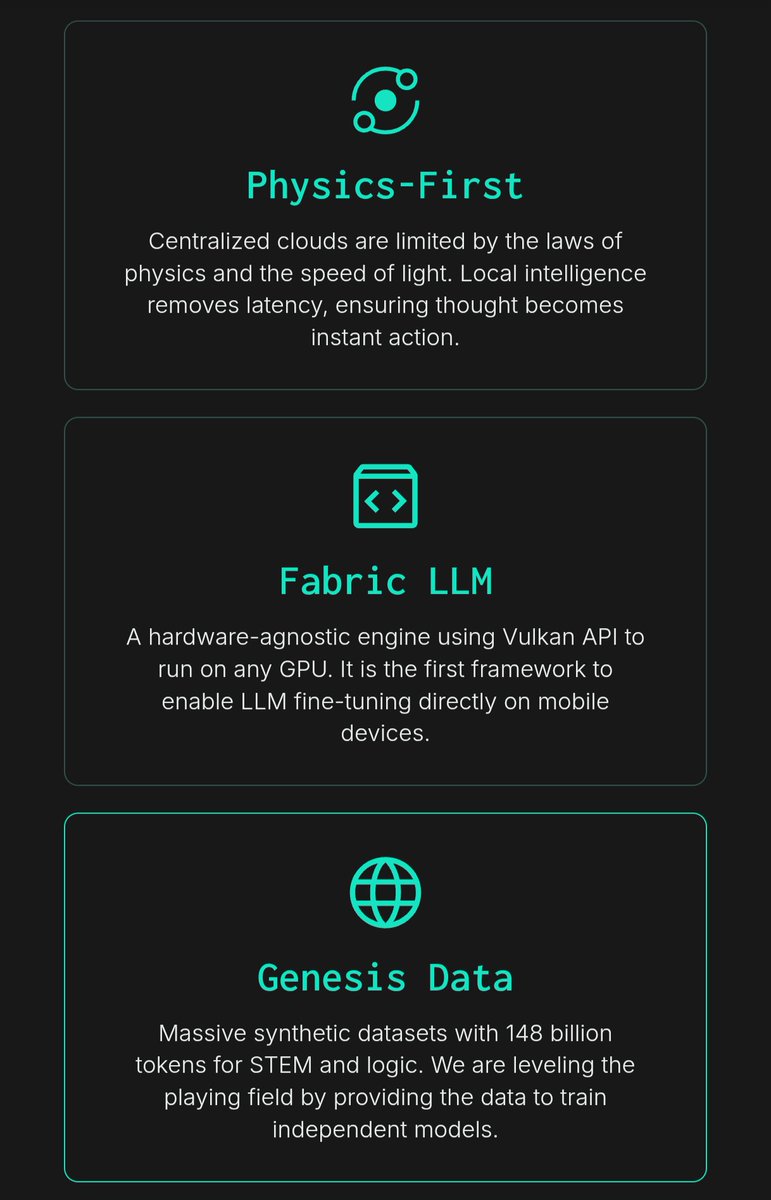

Intelligence should not be a service you rent; it is a foundational element you possess. At Tether, we see AI as a new element of the periodic table: a raw material that can be embedded into the very fabric of the universe. Today, the QVAC SDK is officially live as the atomic unit for the next era of compute. From your smartphone today to the edge of the galaxy tomorrow, we are building the decentralized mind that doesn't require an uplink to function.

Infinite Stable Intelligence:
- Local-First: Runs privately on any device, without permission or central servers.
- Single API: A complete SDK for vision, RAG, P2P networking, and LLM fine-tuning.
- Unstoppable: No central point of failure; if the internet breaks, your world keeps thinking.
- Decentralized: Evolves through Peer-to-Peer Swarms of Infinite Intelligence.

The era of Stable Intelligence has begun. Start building the future at qvac.tether.io.

The engine of the 21st century is here. 🧠 The QVAC SDK is the "steam engine" of the AI era, decoupling intelligence from the cloud and putting it in your hands. A single API for local-first, modular AI that runs anywhere.
- Sovereign: Own your engine, don't rent it.
- Local: 0 latency, no cloud dependency.
- Modular: Stackable, universal building blocks.

The era of Stable Intelligence has begun.

Tether Launches QVAC SDK as the AI Universal Building Block that Runs, Trains, and Evolves Intelligence Across Any Device and Platform. Learn more: tether.io/news/tether-la…