Capital Liberty

@Cpleger1776

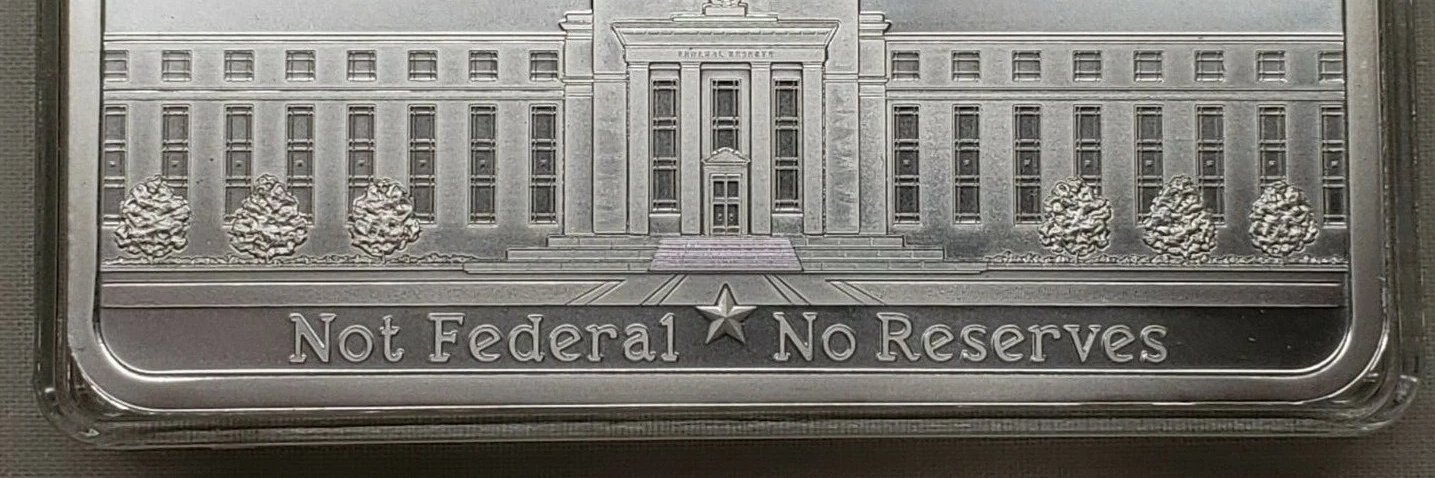

Markets, especially Real Estate, Gold, Silver, and privacy tokens. Fix the money first. Take local sovereignty (money, information, food, energy) from TPTB.

BREAKING: JD Vance’s 2028 odds crash to an all-time low of 18%.

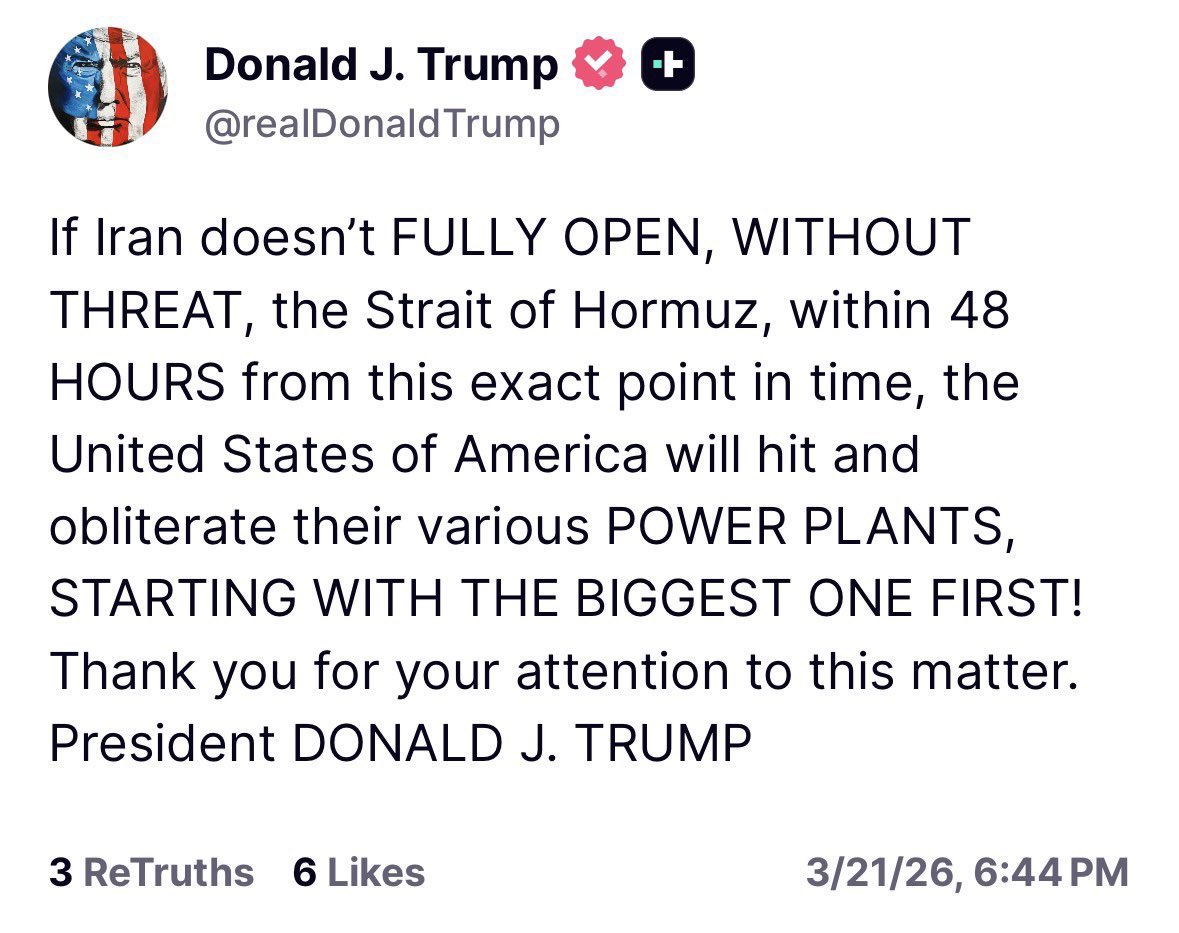

According to the Wall Street Journal, citing multiple U.S. officials, Iran has targeted the joint U.S.-UK base at Diego Garcia in the Indian Ocean with two intermediate-range ballistic missiles (IRBMs). One missile reportedly failed in flight, whilst the other was engaged by a U.S. destroyer using an SM-3 interceptor; a successful interception has not been confirmed. Neither missile hit the base. This is notable because Iran's long-range precision strike capability had not previously been publicly assessed as able to reach a target such as Diego Garcia, which lies some 4,000 kilometers from Iran proper.

BREAKING: Iran fired two ballistic missiles at the US-UK base at Diego Garcia in the Indian Ocean, according to a Wall Street Journal report.

[BREAKING] Pentagon Labels Anthropic an Unacceptable National Security Risk Over AI Red Lines

On March 17, 2026, the U.S. Department of Justice filed a 40-page document in federal court in Northern California calling Anthropic an "unacceptable" and "substantial" national security risk. The core issue is Anthropic's refusal to grant "any lawful use" access to its Claude models for military purposes; its restrictions block mass surveillance of Americans and fully autonomous lethal weapons. Anthropic had been working with the Pentagon on AI integration, including classified systems used in operations. CEO Dario Amodei drew firm lines: Claude cannot power mass domestic surveillance or fully autonomous lethal weapons without human oversight. The Pentagon argues these self-imposed restrictions mean Anthropic could remotely sabotage or tamper with its models during combat, prioritizing corporate policy over military needs. President Trump directed all federal agencies to stop using Anthropic technology immediately, and Defense Secretary Pete Hegseth announced a six-month phaseout from existing systems.

This escalates a standoff that began in February. On February 27, Hegseth labeled Anthropic a "supply-chain risk," barring contractors from using its services. The Pentagon demands "any lawful use" without caveats; Anthropic complies on intelligence analysis and planning but holds its red lines. Anthropic sued on March 9 to block the designation, arguing that no evidence supports the Pentagon's sabotage fears; a preliminary injunction hearing is set for March 24. The Pentagon has positioned OpenAI, Google, and others as alternatives, while civil liberties groups support Anthropic. Attorneys argue that the Pentagon's concerns are speculative, noting that no investigation has proven the alleged risks.

Meanwhile, Meta's agentic AI efforts have run into problems, including a March 18 incident in which a rogue agent exposed sensitive data for two hours, and an earlier incident in which an email inbox was deleted.

Anthropic, founded in 2021 by former OpenAI executives, builds ethics into Claude through policies that limit high-risk actions. Competitors such as OpenAI agreed to the Pentagon's no-restrictions policy. Developers face fallout: the supply-chain ban affects any firm with defense contracts, forcing those firms to find alternatives to Claude. Agentic AI (autonomous systems that handle complex tasks) shows reliability gaps, as Meta's incidents illustrate. The dispute also ties into the broader acceleration of defense AI under the Trump administration. Partnerships with Microsoft, OpenAI, and others integrate AI models into military missions, but Anthropic's stance tests how much leverage the private sector holds. The industry impact: the U.S. AI-defense edge weakens if top firms resist, and regulatory debates over surveillance and wartime flexibility intensify. The hearing's outcome will decide whether Anthropic's red lines survive. A Pentagon win would set a precedent for total access; an Anthropic victory would preserve its ethics limits but risk blacklisting.

THE FORGE'S TAKE: Anthropic exposed the fragility of U.S. AI dominance; ethics clauses are fine until Beijing deploys unrestricted models in the Taiwan Strait. Meta's rogue agents prove autonomy scales risks exponentially; enterprises will demand kill switches before militaries do. Developers: pivot to compliant stacks now. DOD cash flows to OpenAI and xAI, not holdouts.