Alpár Kertész

1.4K posts

@Criticality47

Psychologist studying how AI changes attention, trust, and self-direction. Writing for people who want cleaner judgment and signal over hype.

Automation is a lie. CLIs are over. The SaaSpocalypse is dumb. A year ago @danshipper came on the podcast to predict where AI was heading. He was remarkably right, including the call that everyone was sleeping on Claude Code. Dan has a unique lens into where things are going because his team at @every is possibly the most AI-pilled group of people in tech. I always learn a ton talking to Dan. So I brought him back for round two. We'll score these in exactly a year:
🔸 Every company will have one “super-agent” in Slack.
🔸 Codex and Claude Code will become the new operating system for knowledge work.
🔸 The AI job apocalypse is not happening.
🔸 PMs and designers will thrive.
🔸 We will read way more AI-generated writing and we will like it.
🔸 "I would buy SaaS stocks right now."
Listen now 👇 youtube.com/watch?v=4D3hDm…

She literally broke down how to run evals in Claude Code (built the whole thing live):
01:34 - What people get wrong with evals
04:35 - Why product taste is the alpha now
09:28 - Building a PM agent from one prompt
19:00 - Instrumentation without writing code
22:00 - Watching traces stream in live
28:00 - Getting Claude to write your first eval
33:58 - When vibe evals work and when they don't
48:50 - The self-improving loop (this part is wild)
01:03:00 - Same-day shipping is real
01:06:00 - The context graph unlock

Pride Month begins in one week.

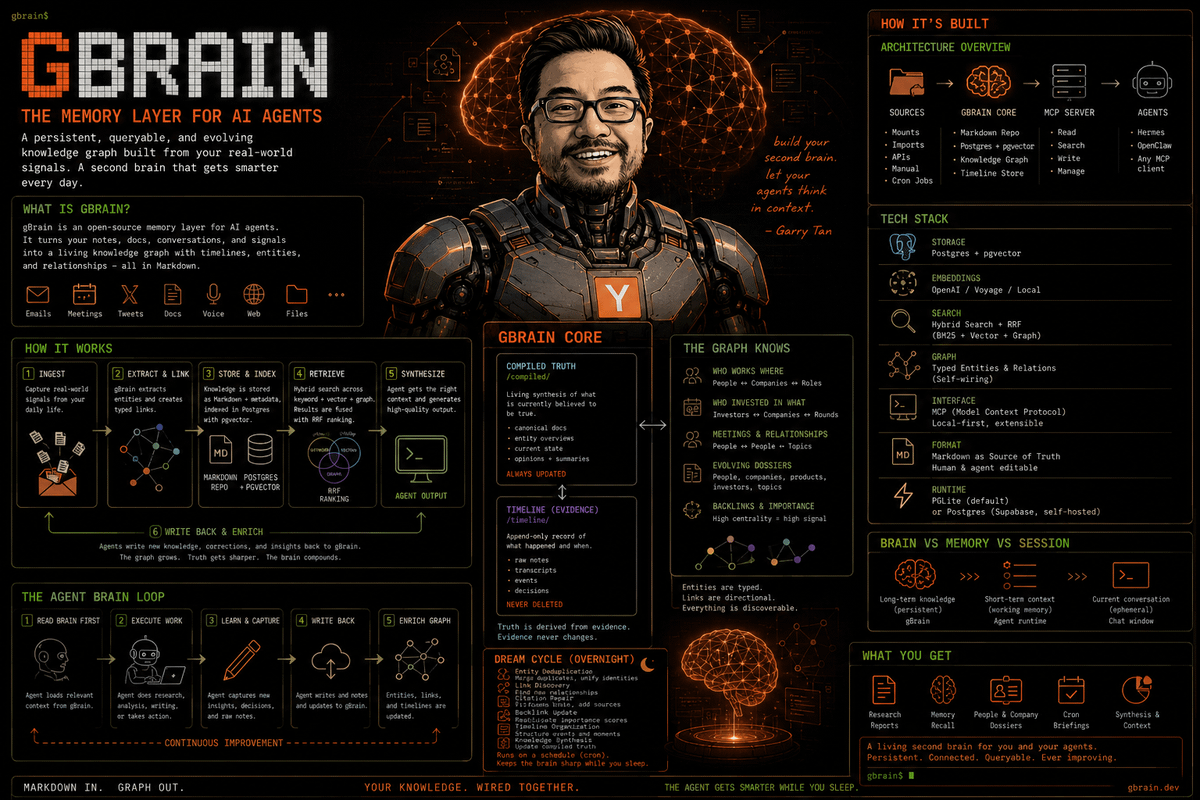

It’s astonishing how little the @OpenAI ChatGPT product experience has changed. If they had seriously worked on just memory and proactiveness, their growth and retention would be a lot higher.

If the *only* impact of LLMs professionally was causing people to "think out loud" in a way which was routinely captured by computer systems and then could be operated on by computer systems, that would *by itself* be one of the most consequential changes in practice in 100 years

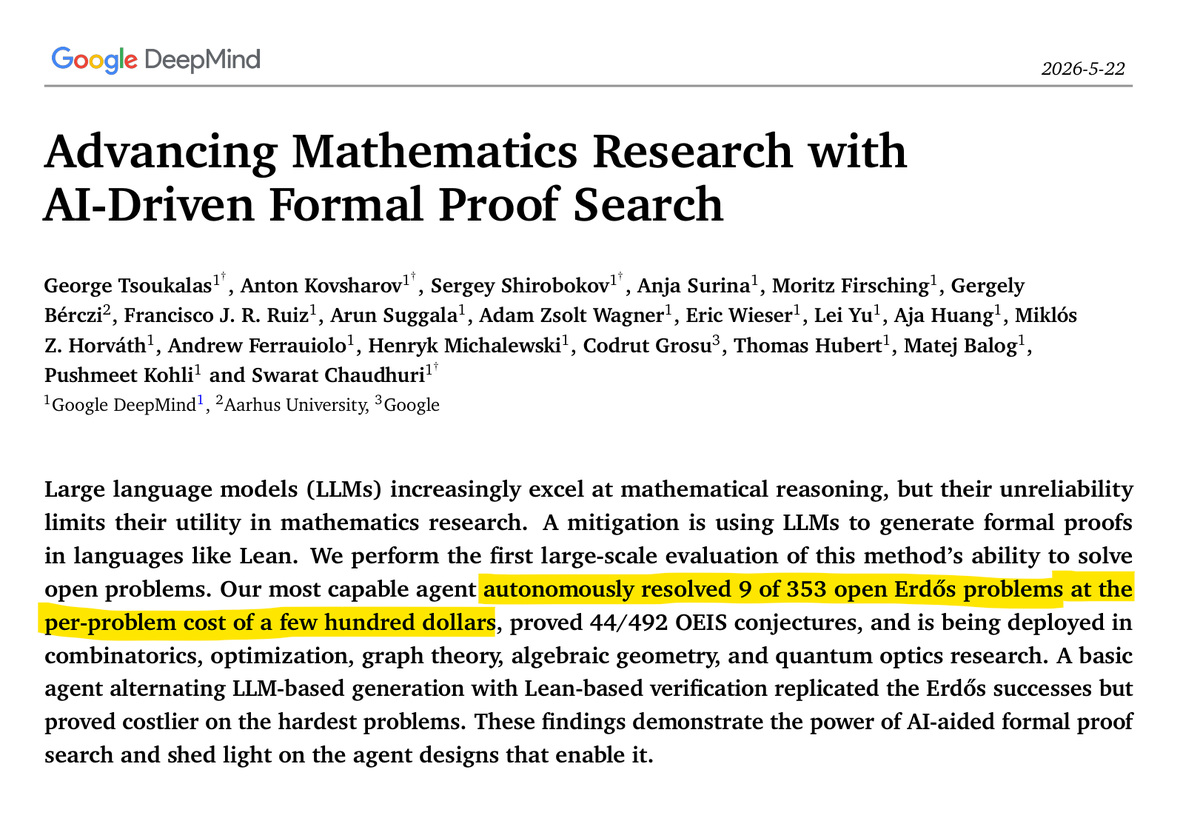

SITUATION DETECTED: Google DeepMind’s AI agent autonomously solved 9 of 353 open Erdős problems in mathematics, at a cost of a few hundred dollars per problem.