CryptoWifΞ 🦇🔊

152 posts

@Crypt0xWife

I inhale second-hand defi all day

Joined January 2021

168 Following · 913 Followers

@karpathy When I hit the context limit and had to start a new channel, GPT offered to formulate a ‘summary’ for the new channel to echo all of the references and progress from the prev channel. I regularly purge/manage the memories, so I also get GPT to summarize/consolidate them into a TL;DR memory.

@karpathy Yes! I have different channels in GPT-4o (i.e. Book Club, Food, AI, Mental Health/Therapy). You are right that this is 100% like a moat, esp. for my 'therapy' channel cuz I don't wanna start over with a new 'therapist' 😂

When working with LLMs I am used to starting "New Conversation" for each request.

But there is also the polar opposite approach of keeping one giant conversation going forever. The standard approach can still choose to use a Memory tool to write things down in between conversations (e.g. ChatGPT does so), so the "One Thread" approach can be seen as the extreme special case of using memory always and for everything.

The other day I came across someone saying that their conversation with Grok (which was free to them at the time) has now grown way too long for them to switch to ChatGPT. i.e. it functions like a moat hah.

LLMs are rapidly growing in the allowed maximum context length *in principle*, and it's clear that this might allow the LLM to have a lot more context and knowledge of you, but there are some caveats. A few of the major ones, as examples:

- Speed. A giant context window will cost more compute and will be slower.

- Ability. Just because you can feed in all those tokens doesn't mean that they can also be manipulated effectively by the LLM's attention and its in-context-learning mechanism for problem solving (the simplest demonstration is the "needle in the haystack" eval).

- Signal to noise. Too many tokens fighting for attention may *decrease* performance due to being too "distracting", diffusing attention too broadly and decreasing the signal-to-noise ratio in the features.

- Data; i.e. train-test data mismatch. Most of the conversations in the finetuning data are likely ~short. Indeed, a large fraction of it in academic datasets is often single-turn (one single question -> answer). One giant conversation forces the LLM into a new data distribution it hasn't seen that much of during training. This is in large part because...

- Data labeling. Keep in mind that LLMs still primarily and quite fundamentally rely on human supervision. A human labeler (or an engineer) can understand a short conversation and write optimal responses or rank them, or inspect whether an LLM judge is getting things right. But things grind to a halt with giant conversations. Who is supposed to write or inspect an alleged "optimal response" for a conversation of a few hundred thousand tokens?

Certainly, it's not clear if an LLM should have a "New Conversation" button at all in the long run. It feels a bit like an internal implementation detail that is surfaced to the user for developer convenience and for the time being. And it feels like the right solution is a very well-implemented memory feature, along the lines of active, agentic context management. Something I haven't really seen at all so far.

Anyway curious to poll if people have tried One Thread and what the word is.
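Purely as an illustration of the "active context management" idea in the post above, and of the summary-for-a-new-channel workflow in the earlier reply, here is a minimal Python sketch. It is not how ChatGPT's memory or any real product works. It keeps the most recent turns verbatim within a token budget and folds older turns into a running summary. `count_tokens` (a whitespace word count standing in for a real tokenizer), `summarize` (a hypothetical placeholder that just truncates, where a real system would ask the LLM to write the summary), `ManagedThread`, and `budget` are all assumptions made for this example.

```python
from dataclasses import dataclass, field


def count_tokens(text: str) -> int:
    # Assumption: crude stand-in for a real tokenizer (one token per word).
    return len(text.split())


def summarize(texts: list[str], max_words: int = 20) -> str:
    # Hypothetical placeholder: a real system would have the LLM write this.
    # Here we just keep the first `max_words` words of the combined text.
    words = " ".join(texts).split()
    return " ".join(words[:max_words])


@dataclass
class ManagedThread:
    budget: int  # max tokens of verbatim history sent with each request
    summary: str = ""
    turns: list[str] = field(default_factory=list)

    def add(self, turn: str) -> None:
        self.turns.append(turn)
        # Evict the oldest turns until the verbatim history fits the budget,
        # always keeping at least the newest turn.
        evicted = []
        while len(self.turns) > 1 and sum(count_tokens(t) for t in self.turns) > self.budget:
            evicted.append(self.turns.pop(0))
        if evicted:
            # Fold the evicted turns into the running summary.
            parts = [self.summary] if self.summary else []
            self.summary = summarize(parts + evicted)

    def context(self) -> str:
        # What would be sent to the model: summary first, then recent turns.
        header = f"[Summary of earlier conversation] {self.summary}\n" if self.summary else ""
        return header + "\n".join(self.turns)


thread = ManagedThread(budget=8)
thread.add("user: plan a reading list")
thread.add("assistant: here are five titles")
thread.add("user: add one more")
print(thread.context())
```

The design trades detail in older turns for a bounded context, which speaks to the speed and signal-to-noise caveats, but anything the summary drops is gone for good.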

I think this man should lead the future of Ethereum

Ameen Soleimani@ameensol

Unlike some of my peers, I take Ethereum's cultural issues seriously, especially the Marxist infiltration. Thank you @ETHGlobal for giving me the opportunity to talk about what it will take to achieve an Ethereum Cultural Victory! Slide on the next post 👇

@songadaymann Officially #2 fav Song a Day (right after ETH ETF, which is still on my running list 👨‍🎤❤️)

so many great things built on ethereum, too many to fit into one song.

bid on the 1/1:

songaday.world/5881

@cobie @coinfessions @inversebrah Makes it all back revenge trading. Steve Jobs-esque comeback story in the making 🎲

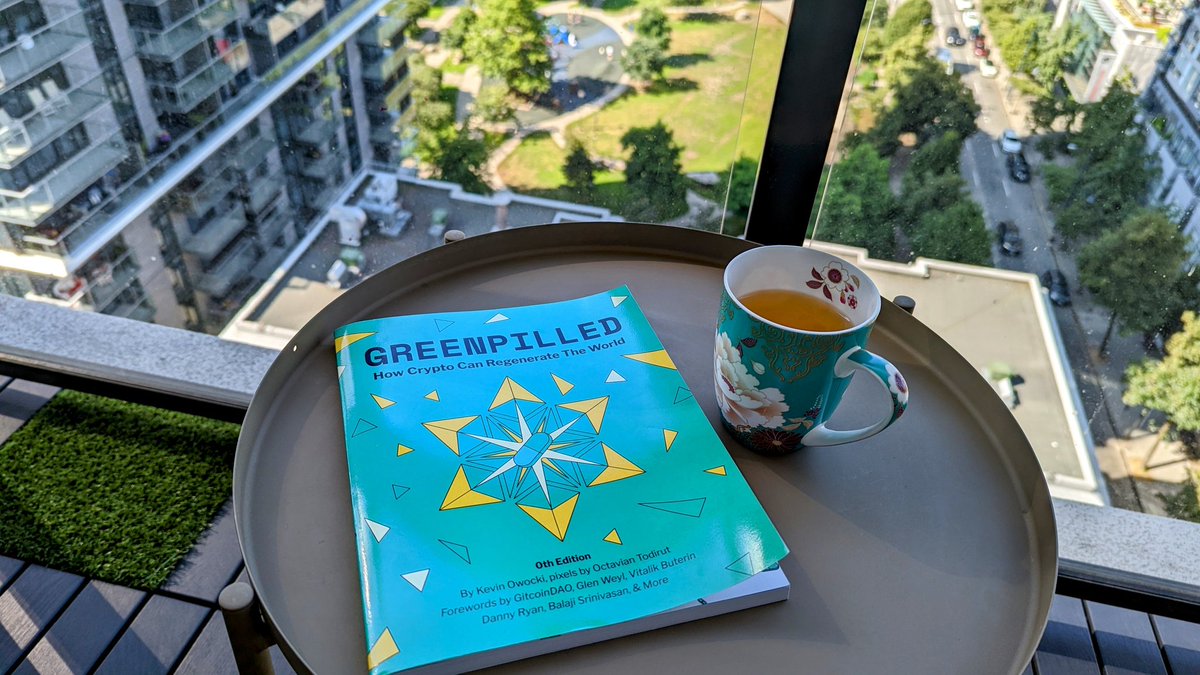

@Crypt0xWife was kind enough to mock this up for me back during the initial pengu mania.

I always had visions of pudgy toys and plushies but lacked the capacity to make it a reality.

I am beyond excited to see Luca & co. bringing Pudgy Toys to life.

@sassal0x @thedailygwei This one made me go for a run after a month+ slump, and now I've gone a few days in a row. Thanks bud 🤝

The latest @thedailygwei Refuel is ready for your consumption! ⛽️

Today's topics:

- Market meltdown 📉

- AllCoreDevs updates 🐼

- Over 400,000 Beacon Chain validators 🥩

- and much more ➕

Watch 👇

youtu.be/A9w3w8GOp6c

A @BoysClubCrypto Take

Do Kwon is the Tristan Thompson of crypto. Remarkably consistent every time👖🔥

Giga chads @tztokchad and @Tetranode have each donated 1 ETH to Ethereum public goods via the @ProtocolGuild

I will be doing the dance to save the markets soon, this is not a rug, I will actually dance - but I want moar ETH sent to the Protocol Guild please!

TrenTok-Chad ⚡️@tztokchad

@sassal0x @Tetranode @ProtocolGuild will be sending from a sassal address 0x5A55A10746Dd1b258FfB2a279EE4Eb422eD331f8

Worried about a bear market?

Don't be.

This is a great opportunity.

You just need some survival tips to get through it.

@frogmonkee shares them here:

newsletter.banklesshq.com/p/how-to-survi…

@econoar More dynamic!👌You guys have great chemistry, it's fun to hear candid banter between buds vs more prepared talking points. Welcome back!! 🥳