The spiritual moment when you go out to eat alone at night in another country

Sanka Mohottala

@Danny__SM

Graduate Research Assistant at SLIIT || DL Theory, GNN, Human Action Recognition, Network Science || British Humor || Rowing || Humanist

KASH PATEL: “There’s no credible information that Jeffrey Epstein trafficked minors.” He perjured himself in a sworn statement before Congress. He must be immediately arrested and charged under 18 U.S. Code § 1621.

BREAKING: President Trump says he has formed the “Board of Peace” which will be announced soon. Trump says this is the “most prestigious board ever assembled.”

Geoffrey Hinton says LLMs are moving beyond imitation toward self-consistent reasoning. Instead of just predicting the next word, new models are beginning to identify contradictions in their own logic. This unbounded self-improvement will "end up making it much smarter than us".

This @Nature study shows why energy and information must be understood together. Biology does this too: mitochondria manage energetic flux, while biochemical and electrical signals encode information about stress, state, and repair. Health depends on keeping these two modes aligned: 1) efficient energy distribution and 2) accurate information about perturbations. nature.com/articles/s4156…

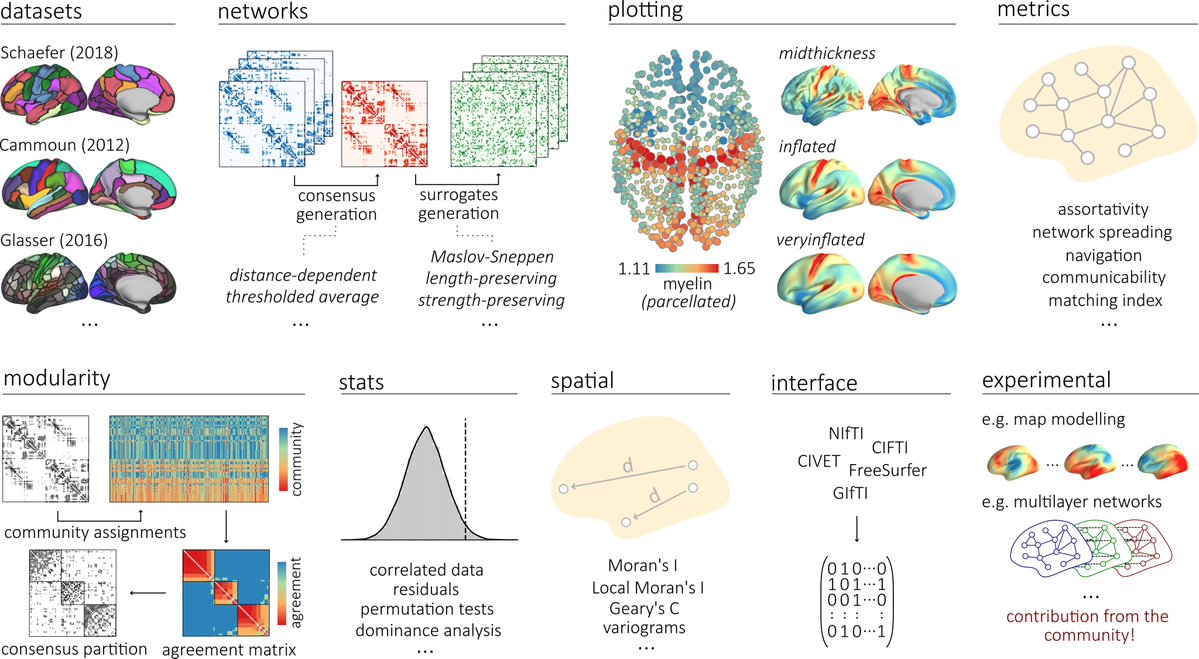

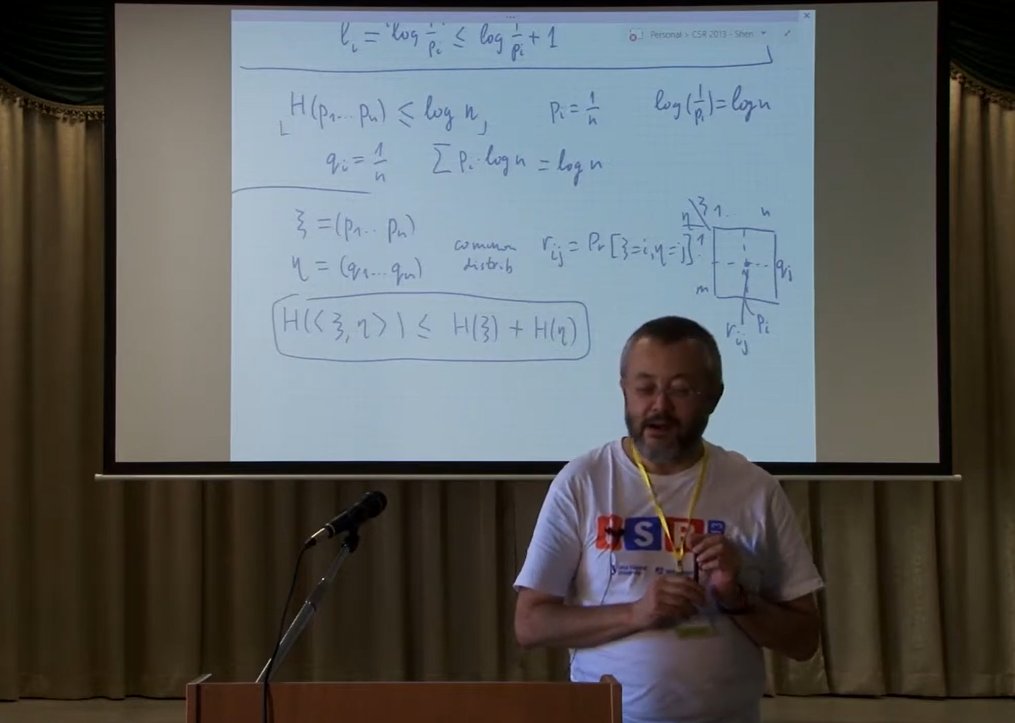

I have two recommendations for you on Kolmogorov complexity, a subject that is important for mathematicians and computer scientists, but also for philosophers. The first is "Kolmogorov Complexity and Algorithmic Randomness" by Shen, Uspensky and Vereshchagin (AMS); the second is "An Introduction to Kolmogorov Complexity and Its Applications" by Li and Vitányi (Springer). Both books are excellent, with tons of exercises to practice on; the Springer book also touches on philosophical questions in probability theory, which you will not find in Shen et al. If you embark on this journey, I would suggest being comfortable with the following preliminaries: theory of computation, basic computational structures, big O notation, and the basics of probability theory and combinatorics. Have fun!

The #NobelPrize in Physics 2024 for Hopfield & Hinton turns out to be a Nobel Prize for plagiarism. They republished methodologies developed in #Ukraine and #Japan by Ivakhnenko and Amari in the 1960s & 1970s, as well as other techniques, without citing the original inventors. None of the important algorithms for modern AI were created by Hopfield & Hinton. Today I am releasing a detailed tech report on this [NOB]: people.idsia.ch/~juergen/physi…
Of course, I had it checked by neural network pioneers and AI experts to make sure it was unassailable.
Is it now acceptable for me to direct young Ph.D. students to read old papers and rewrite and resubmit them as if they were their own works? Whatever the intention, this award says that, yes, that is perfectly fine.
Some people have lost their titles or jobs due to plagiarism, e.g., Harvard's former president [PLAG7]. But after this Nobel Prize, how can advisors now continue to tell their students that they should avoid plagiarism at all costs?
It is well known that plagiarism can be either "unintentional" or "intentional or reckless" [PLAG1-6], and the more innocent of the two may very well be partially the case here. But science has a well-established way of dealing with "multiple discovery" and plagiarism - be it unintentional [PLAG1-6][CONN21] or not [FAKE,FAKE2] - based on facts such as time stamps of publications and patents. The deontology of science requires that unintentional plagiarists correct their publications through errata and then credit the original sources properly in the future. The awardees didn't; instead the awardees kept collecting citations for inventions of other researchers [NOB][DLP]. Doesn't this behaviour turn even unintentional plagiarism [PLAG1-6] into an intentional form [FAKE2]?
I am really concerned about the message this sends to all these young students out there.
REFERENCES
[NOB] J. Schmidhuber (2024). A Nobel Prize for Plagiarism. Technical Report IDSIA-24-24. people.idsia.ch/~juergen/physi…
[NOB+] Tweet: the #NobelPrize in Physics 2024 for Hopfield & Hinton rewards plagiarism and incorrect attribution in computer science. It's mostly about Amari's "Hopfield network" and the "Boltzmann Machine." x.com/SchmidhuberAI/… (1/7th as popular as the original announcement by the Nobel Foundation)
[DLP] J. Schmidhuber (2023). How 3 Turing awardees republished key methods and ideas whose creators they failed to credit. Technical Report IDSIA-23-23, Swiss AI Lab IDSIA, 14 Dec 2023. people.idsia.ch/~juergen/ai-pr…
[DLP+] Tweet for [DLP]: x.com/SchmidhuberAI/…
[PLAG1] Oxford's guide to types of plagiarism (2021). Quote: "Plagiarism may be intentional or reckless, or unintentional." web.archive.org/web/2021122714…
[PLAG2] Jackson State Community College (2022). Unintentional Plagiarism.
[PLAG3] R. L. Foster. Avoiding Unintentional Plagiarism. Journal for Specialists in Pediatric Nursing; Hoboken Vol. 12, Iss. 1, 2007.
[PLAG4] N. Das. Intentional or unintentional, it is never alright to plagiarize: A note on how Indian universities are advised to handle plagiarism. Perspect Clin Res 9:56-7, 2018.
[PLAG5] InfoSci-OnDemand (2023). What is Unintentional Plagiarism?
[PLAG6] Copyrighted.com (2022). How to Avoid Accidental and Unintentional Plagiarism (2023). Copy in the Internet Archive. Quote: "May it be accidental or intentional, plagiarism is still plagiarism."
[PLAG7] Cornell Review, 2024. Harvard president resigns in plagiarism scandal. 6 January 2024.
[FAKE] H. Hopf, A. Krief, G. Mehta, S. A. Matlin. Fake science and the knowledge crisis: ignorance can be fatal. Royal Society Open Science, May 2019. Quote: "Scientists must be willing to speak out when they see false information being presented in social media, traditional print or broadcast press" and "must speak out against false information and fake science in circulation and forcefully contradict public figures who promote it."
[FAKE2] L. Stenflo. Intelligent plagiarists are the most dangerous. Nature, vol. 427, p. 777 (Feb 2004). Quote: "What is worse, in my opinion, ..., are cases where scientists rewrite previous findings in different words, purposely hiding the sources of their ideas, and then during subsequent years forcefully claim that they have discovered new phenomena."