Dieter Castel

6.5K posts

Dieter Castel

@DieterCastel

Engineer, ex-@NVISOsecurity, Alumnus CompSci @CW_KULeuven Math/STEAM/ML/@julialangu Enthusiast, Traceur, Multi-Genre music lover. #MathsJam @stadleuven cohost.

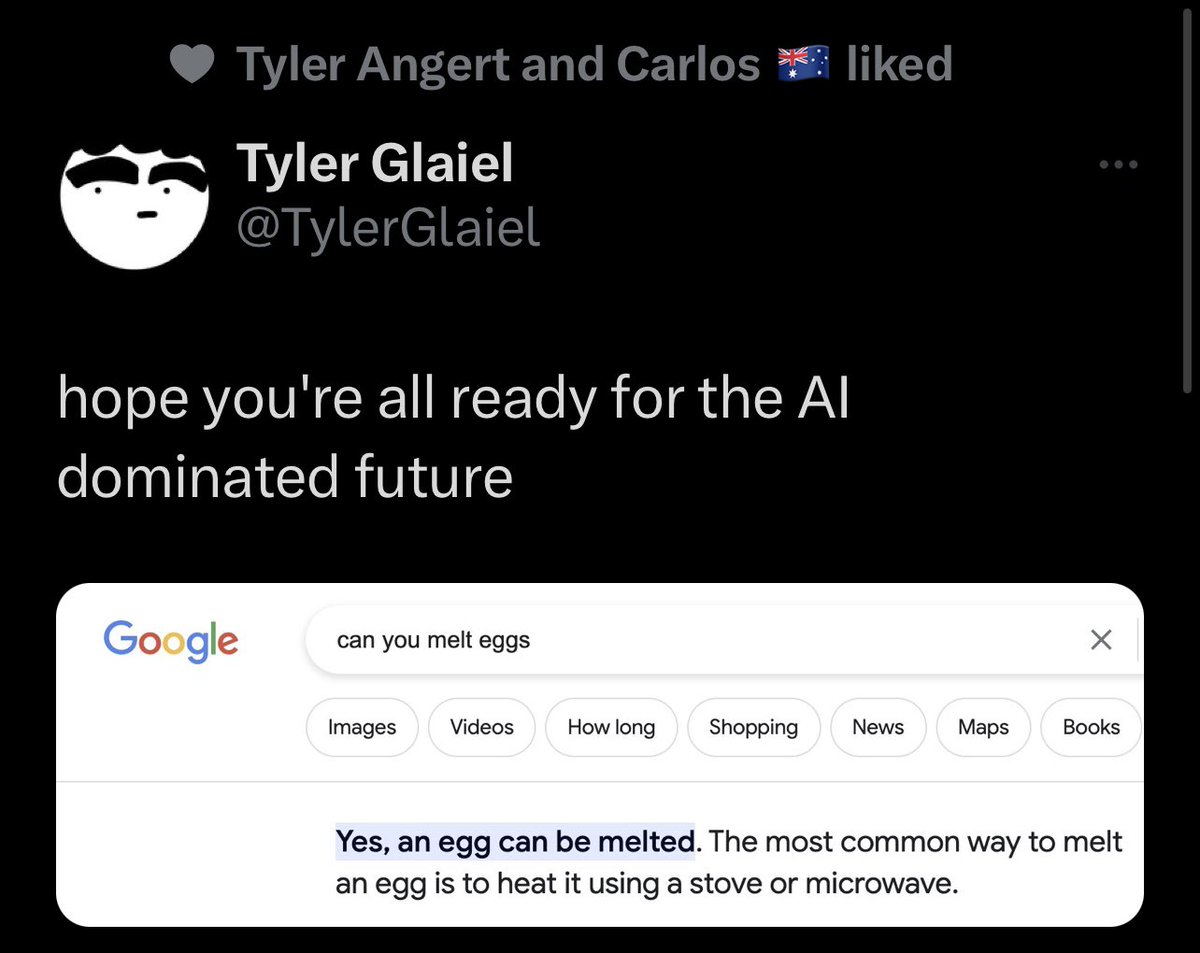

Pinning down Approximate Retrieval in LLMs (and, in the process making sense of that NYT law suit) The term approximate retrieval (that, afaik, I coined to provide a qualitative understanding of what LLMs do c.f. cacm.acm.org/blogs/blog-cac…), has caught on a bit. I will write down what I was trying to capture with the term--both because someone asked for a definition, and because it actually has some bearing on that NYT lawsuit!. 0. The "approximate" here is about whether the "retrieved" text is an unaltered copy of something that was stored (and not about whether the retrieval key is matched approximately) 1. Given that, underneath it all, LLMs are trained to be n-gram models (if only on steroids--aka ultra-large n), it should be rather non-controversial to say that they cannot guarantee exact retrieval. They are just a compact models of P(next token|Context window) In other words, with the n-gram model, the prompt is working as a "key" into the CPT rather than a key into any stored database. It is used to sample next token iteratively from the learned CPTs (with the context for the (n+1)th token affected by the specific sample selected as the n-th token!) 1.1 LLMs are not databases--and are not indexing and retrieving exactly matching records without altering them. The closest analogy to index is context and that is changing. There is certainly no stored record being retrieved. 1.2 LLMs are also not IR engines which, while doing similarity search (i.e., allowing approximate match with the key), still guarantee that what they give out is what was stored (IR doesn't make documents--it just retrieves documents that are similar to the query!). [Another way to see this is that if LLMs were just doing IR, then the ChatGPT essays can be caught by the old turnitin-style plagiarism detectors.] 1.2.1. The whole RAG rage can be understood as adding an external IR component to LLMs, where the prompt is used as an actual IR query on an external vector DB, and the stuff retrieved is added back into the prompt (hoping that LLM will summarize it..). See x.com/rao2z/status/1… 2. It is precisely this neither-DB-nor-IR nature of the n-gram model that gives LLMs their flexibility--of essentially capturing the distribution (manifold) of the text in the corpus (humorously illustrated by the tooth paste tube 👇metaphor that I had seen somewhere ) 3. Because of the way n-gram models work, there is never any 100% guarantee that some stored record (be it a program or an NY Times article) is retrieved unaltered. So why is NYT suing OpenAI? 3.1 However, with a long enough context window, and the network capacity, something close to memorization (aka "plagiarization") of long passages is very much possible (as is being shown in that NYT law suit!). 3.2. Interestingly generative ML systems effectively memorizing full passages/images has been observed in other generative models too--and can be interpreted as a failure to learn the distribution. See for example the old study by @prfsanjeevarora et. al. on whether GANs really learn the distribution/manifold or memorize parts of it. arxiv.org/abs/1706.08224 4. Commercial LLM makers (will) try to play both ends of the approximate retrieval to their advantage.. 4.1. When they try to argue NYT law suit, they will no doubt push on the fact that LLMs don't do exact retrieval and so there is no copyright infringement. 4.2 When they push LLMs for "search", they will try instead to bank on the memorization capabilities! The truth is that there is no 100% way to guarantee or stop either behavior! If LLM makers try to reduce memorization, they will certainly see that the LLM's ability to masquerade as search engines--already quite questionable (c.f. x.com/rao2z/status/1…) --will degrade even further (c.f. x.com/rao2z/status/1…)

"Any model made available in the EU, without first passing extensive, and expensive, licensing, would subject companies to massive fines of the greater of €20,000,000 or 4% of worldwide revenue. Opensource developers, and hosting services such as GitHub... would be liable"

@MeetThePress @ericschmidt Fear reaction to what the EU is about to do. #more-561" target="_blank" rel="nofollow noopener">technomancers.ai/eu-ai-act-to-t…