DigitalOcean

81.5K posts


@digitalocean

AI-Native Cloud. ☁️ Status: @DOstatus Support: https://t.co/5gkvyinPlK

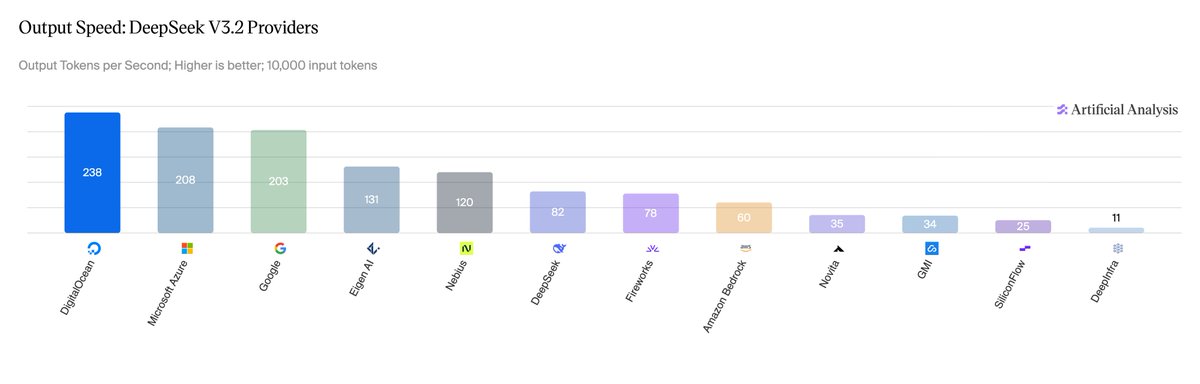

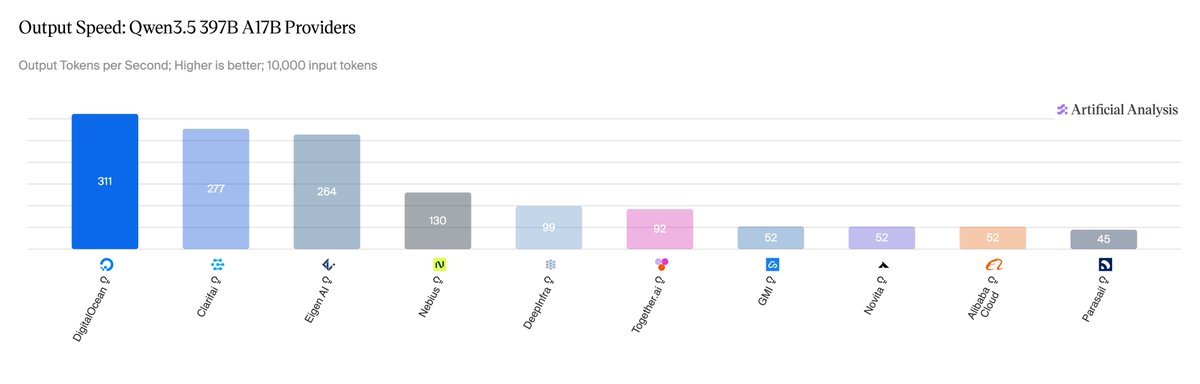

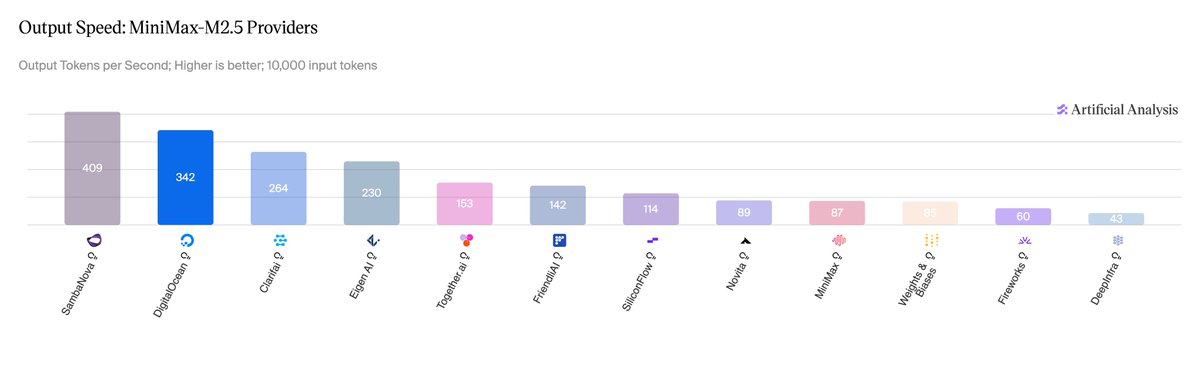

Among the fastest DeepSeek V3.2, MiniMax-M2.5, and Qwen 3.5 397B inference in the market, per Artificial Analysis benchmarks (April 2026). ⚡️🤖 Sub-1-second TTFT. 230 tokens per second. Co-designed at every layer of the stack with @Inferact, running a performance-optimized @vllm_project, all on @NVIDIA HGX B300. Live on DigitalOcean Serverless Inference now. Full breakdown in the comments. ⬇️

🏆 vLLM powers the fastest inference on NVIDIA Blackwell Ultra on Artificial Analysis.

On @digitalocean's Serverless Inference, powered by vLLM on NVIDIA HGX B300:

🥇 AA #1 output speed for DeepSeek V3.2 (230 tok/s, 0.96s TTFT) and Qwen 3.5 397B

🔧 MiniMax-M2.5: 23% TPOT gain via an EAGLE3 draft model trained on TorchSpec

Co-design highlights:
- NVFP4 quantization on Blackwell Ultra
- EAGLE3 + MTP speculative decoding
- Per-model kernel fusion

Thanks to @digitalocean, @nvidia, and @inferact for the collaboration. Optimizations land back in open-source vLLM.

🔗 digitalocean.com/blog/how-we-bu…
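For readers unfamiliar with the metrics quoted above, here is a rough Python sketch of how TTFT, output speed, and TPOT relate. It assumes output speed is measured after the first token arrives, so that total response time is roughly TTFT plus tokens divided by output speed. It also assumes a "23% TPOT gain" means a 23% reduction in time per output token, which the post does not confirm. The function names are hypothetical and are not part of any vLLM or DigitalOcean API.

```python
def tpot_ms(output_tok_per_s: float) -> float:
    """Time per output token in milliseconds, assuming TPOT = 1 / output speed."""
    if output_tok_per_s <= 0:
        raise ValueError("output speed must be positive")
    return 1000.0 / output_tok_per_s


def estimate_response_seconds(ttft_s: float, output_tok_per_s: float,
                              n_output_tokens: int) -> float:
    """Approximate end-to-end time: wait for the first token, then stream the rest.

    Assumption: output speed is measured after the first token is received.
    """
    if output_tok_per_s <= 0:
        raise ValueError("output speed must be positive")
    return ttft_s + n_output_tokens / output_tok_per_s


def throughput_multiplier(tpot_reduction: float) -> float:
    """Throughput multiplier implied by a fractional TPOT reduction.

    Assumption: a "23% TPOT gain" is read as tpot_reduction = 0.23.
    """
    if not 0.0 <= tpot_reduction < 1.0:
        raise ValueError("reduction must be in [0, 1)")
    return 1.0 / (1.0 - tpot_reduction)


if __name__ == "__main__":
    # Figures quoted in the post for DeepSeek V3.2: 230 tok/s, 0.96 s TTFT.
    print(f"TPOT: {tpot_ms(230):.2f} ms")
    print(f"500-token reply: {estimate_response_seconds(0.96, 230, 500):.2f} s")
    print(f"Throughput multiplier: {throughput_multiplier(0.23):.2f}x")
```

Under these assumptions, TTFT dominates short replies, while output speed dominates long ones.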
