Inferact

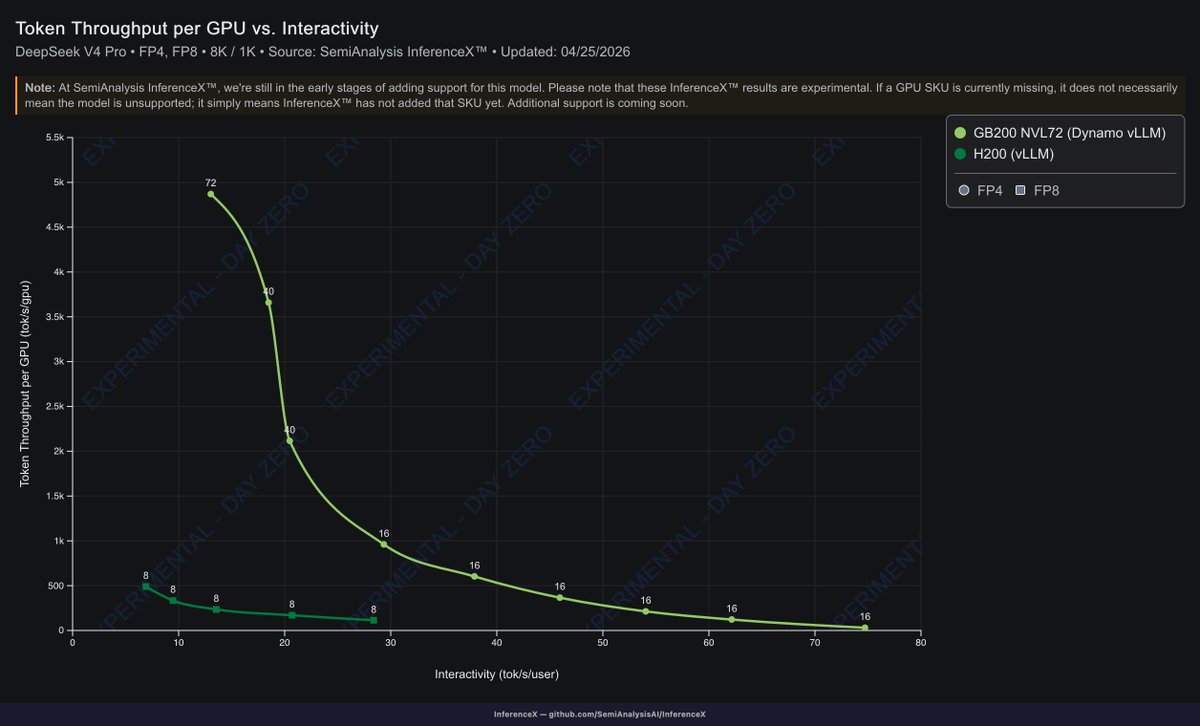

DAVIS, APRIL 25, 2026: InferenceX has added DeepSeek-V4 for @vllm_project's day-0 support for GB200 disagg! Great work by @flowpow123 @rogerw0108 @NVIDIAAIDev @inferact on the fast support and engineering!

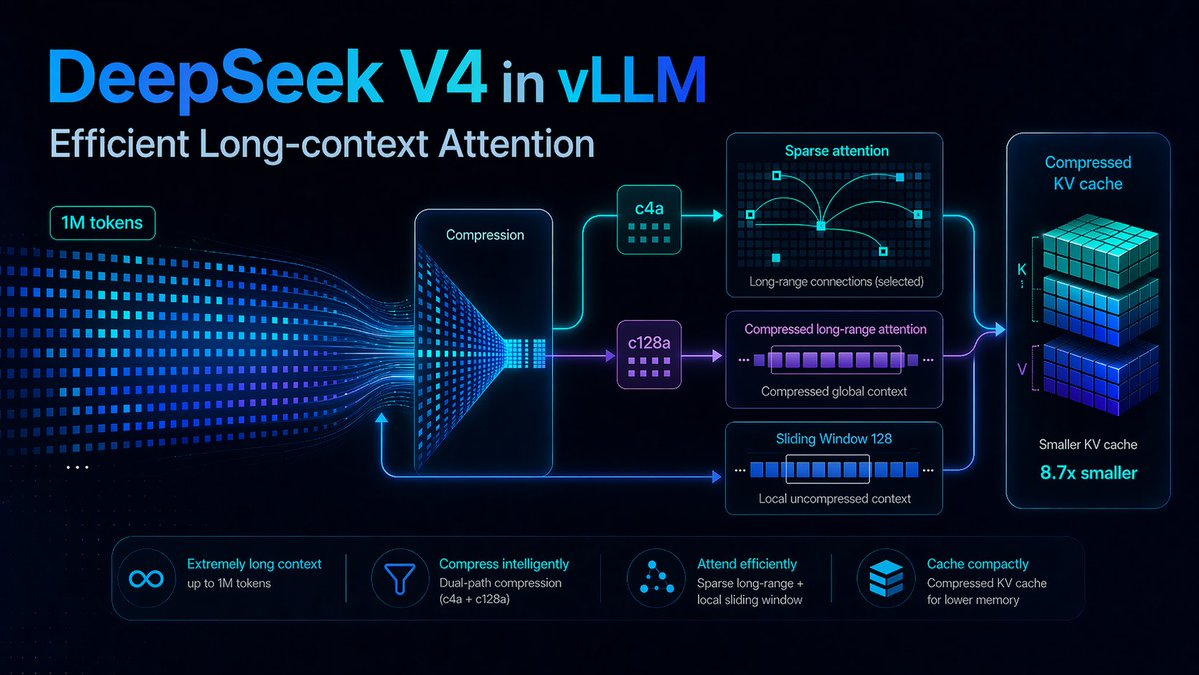

🚀 DeepSeek-V4 Preview is officially live & open-sourced! Welcome to the era of cost-effective 1M context length. 🔹 DeepSeek-V4-Pro: 1.6T total / 49B active params. Performance rivaling the world's top closed-source models. 🔹 DeepSeek-V4-Flash: 284B total / 13B active params. Your fast, efficient, and economical choice. Try it now at chat.deepseek.com via Expert Mode / Instant Mode. API is updated & available today! 📄 Tech Report: huggingface.co/deepseek-ai/De… 🤗 Open Weights: huggingface.co/collections/de… 1/n

We are thrilled to announce that @nvidia is the latest investor in @inferact. We look forward to continuing the momentum driven by our deep collaboration: (1) Engineering velocity: a significant uptick in @nvidia pull requests to the @vllm_project repo. (2) Product synergy: close integration with NVIDIA Dynamo, ModelOpt, Nemotron, and more products! It’s an exciting time for the growth and development of vLLM, the world's AI inference engine!

Today, we're proud to announce @inferact, a startup founded by creators and core maintainers of @vllm_project, the most popular open-source LLM inference engine. Our mission is to grow vLLM as the world's AI inference engine and accelerate AI progress by making inference cheaper and faster.

The Challenge

Inference is not solved. It's getting harder. Models grow larger. New architectures proliferate: mixture-of-experts, multimodal, agentic. Every breakthrough demands new infrastructure. Meanwhile, hardware fragments: more accelerators, more programming models, and more combinations to optimize.

The capability gap between models and the systems that serve them is widening. Left this way, the most capable models remain bottlenecked, with the full scope of their capabilities accessible only to those who can build custom infrastructure. Close the gap, and we unlock new possibilities.

And the problem is growing. Inference is shifting from a fraction of compute to the majority: test-time compute, RL training loops, synthetic data.

We see a future where serving AI becomes effortless. Today, deploying a frontier model at scale requires a dedicated infrastructure team. Tomorrow, it should be as simple as spinning up a serverless database. The complexity doesn't disappear; it gets absorbed into the infrastructure we're building.

Why Us

vLLM sits at the intersection of models and hardware: a position that took years to build. When model vendors ship new architectures, they work with us to ensure day-zero support. When hardware vendors develop new silicon, they integrate with vLLM. When teams deploy at scale, they run vLLM, from frontier labs to hyperscalers to startups serving millions of users.

Today, vLLM supports 500+ model architectures, runs on 200+ accelerator types, and powers inference at global scale. This ecosystem, built with 2,000+ contributors, is our foundation.

We've been stewards of this engine since its first commit. We know it inside out. We deployed it at frontier scale, in research and in production.

Open Source

vLLM was built in the open. That's not changing. Inferact exists to supercharge vLLM adoption. The optimizations we develop flow back to the community. We plan to push vLLM's performance further, deepen support for emerging model architectures, and expand coverage across frontier hardware. The AI industry needs inference infrastructure that isn't locked behind proprietary walls.

Join Us

Through the open source community, we are fortunate to work with some of the best people we know. For @inferact, we're hiring engineers and researchers to work at the frontier of inference, where models meet hardware at scale. Come build with us.

We're fortunate to be supported by investors who share our vision, including @a16z and @lightspeedvp who led our $150M seed, as well as @sequoia, @AltimeterCap, @Redpoint, @ZhenFund, The House Fund, @strikervp, @LaudeVentures, and @databricks.

- @woosuk_k, @simon_mo_, @KaichaoYou, @rogerw0108, @istoica05 and the rest of the founding team

Big congrats on @inferact! Since we initiated vLLM’s earliest research push back in 2023, it has been incredible to watch @vllm_project become the OSS inference engine for so many teams. Building a project like this takes persistence across everything: research breakthroughs, ruthless engineering, performance + stability work, ecosystem integration, and the unglamorous grind of docs/CI/issues/releases. Huge gratitude to the maintainers & contributors—can’t wait to keep upstreaming new inference ideas in 2026 with the greater community and @inferact 🚀

We co-led Inferact's $150M seed round to support them in their mission to build the inference engine for all current and future AI. In this episode of The Investment Memo, Lightspeed's Bucky Moore and James Alcorn sit down with Simon Mo (Co-Founder & CEO @inferact) to cover:

- How vLLM grew to 60K+ GitHub stars
- Why inference is shifting to the majority of compute
- How vLLM evolved from a research project into the industry standard
- Why building a company was the next step to push open-source inference forward

00:00 Introduction
02:03 The investment memo
04:47 Latency vs throughput vs cost
06:19 Paged attention explained
08:04 The evolution of attention
09:42 Growing the vLLM open source community
11:41 Working with hardware vendors
14:45 Deploying vLLM at large scale
16:03 Inferact's culture of openness
18:45 Building an open ecosystem and horizontal stack
19:45 Inferact's approach to fundraising
22:14 What is the future of inference?

@simon_mo_ @buckymoore @JamesAlcorn94

vLLM has grown to a scale of 2,000+ contributors, with a diverse community spanning models, hardware, and applications. I see @vllm_project on the path to becoming the world's inference engine, and @inferact accelerating AI progress. We could not be more excited about the road ahead.
