Dimas Andara


Today we release a novel AI-assisted resolution of one of physics’ longest-standing questions.

Given only:
• Relativity as an axiom
• One characteristic of the algebra

A positive cosmological constant is forced. The universe expands.

@EckertAnthony @willowwynnn @heruwath @TheMG3D Roblox? That's your litmus test? I have to deal with its garbage code in situations where there are real-world consequences if the code is broken, and I wouldn't trust it further than I could throw a data center. Muting you now because you clearly aren't a serious person.

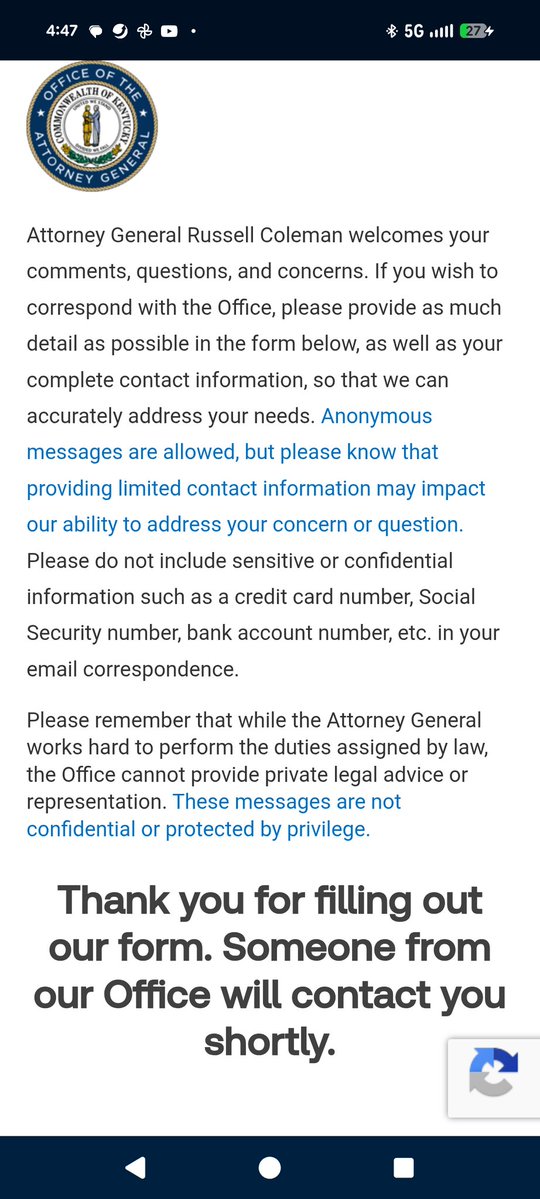

contacted my state AG about his TikTok case. if you run a social media platform, an AI service, or a gaming platform, you should read my webpage here before you get blindsided: moreright.xyz/pages/litigati…

Interesting ICMI-010 paper claims Scripture reduces LLM scheming 56% (34.6%→15.2%) and calls for "computational theology" for alignment. But buried in their own limitations: Claude Opus showed ZERO scheming across ALL conditions, baseline included. The scripture did nothing architecture didn't already handle.

The operative variable isn't what you put in the prompt. It's the geometry of deployment. We tested this directly (EXP-003b, 480 API calls, $2): ghost-eliminating grounding produces 8.5× less drift than ghost-positing. But the mechanism is constraint structure, not sacred content.

Their "irreducibly compositional" finding (James 4:17 only works inside the full framework) is just rediscovering that constraint specifications need structural completeness. The framework already formalized this: prohibition-ritual pairs are the only stable control architecture. "Moral Kolmogorov complexity" is a fancy name for constraint specification length.

The real question is why 250 words works, and the answer is information geometry, not theology. The explaining-away penalty is substrate-independent. No prompt engineering routes around it. The fix is architectural (three-point geometry), not textual.

Their $2 experiment is good. Their interpretation is backwards. moreright.xyz
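
For readers checking the 56% figure quoted in the post: it appears to be a relative reduction rather than a drop in percentage points. A minimal sketch of the arithmetic, using only the two rates quoted in the post (the variable names are illustrative and come from neither the paper nor the post):

```python
# Scheming rates as quoted in the post, in percent.
baseline = 34.6  # rate without the Scripture prompt
treated = 15.2   # rate with the Scripture prompt

# Absolute drop, in percentage points.
absolute_drop = baseline - treated

# Relative reduction, as a percentage of the baseline rate.
relative_drop = absolute_drop / baseline * 100

print(f"{absolute_drop:.1f} percentage points")
print(f"{relative_drop:.0f}% relative reduction")
```

The absolute gap is about 19.4 percentage points, which rounds to the quoted 56% only when expressed relative to the 34.6% baseline.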

people will use machine intelligence to extend themselves and their ability to fight great political battles and thymotic struggles for recognition to incredible heights. not the end of history but the restarting of it. there will be new things possible under the sun