Doomer Daylight

199 posts

@DoomerDaylight

We track the claims and expose the truth on AI Doomer propaganda with facts and receipts. Pro democratic AI, American competitiveness, innovation, and safety.

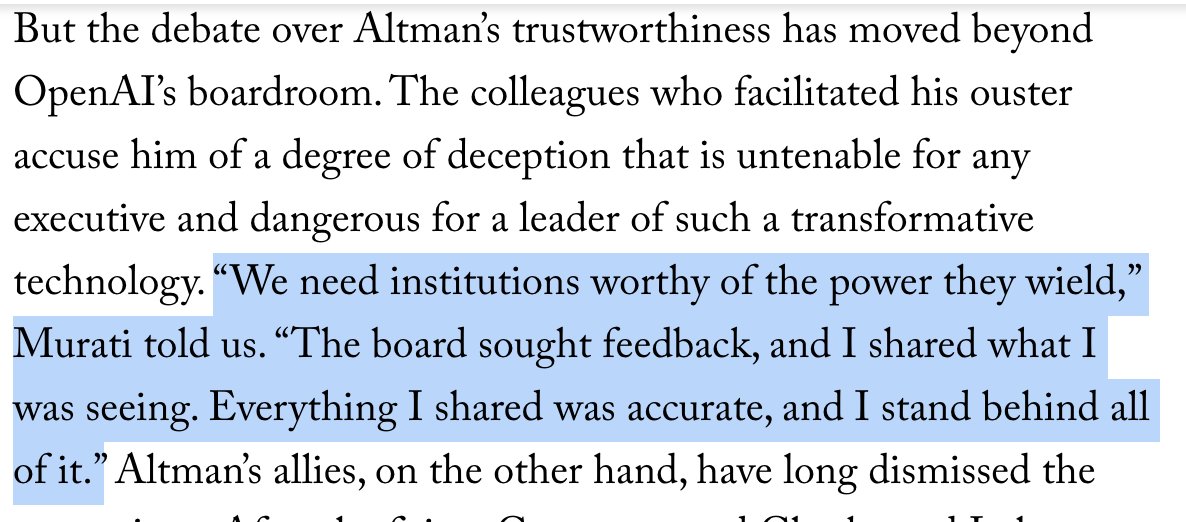

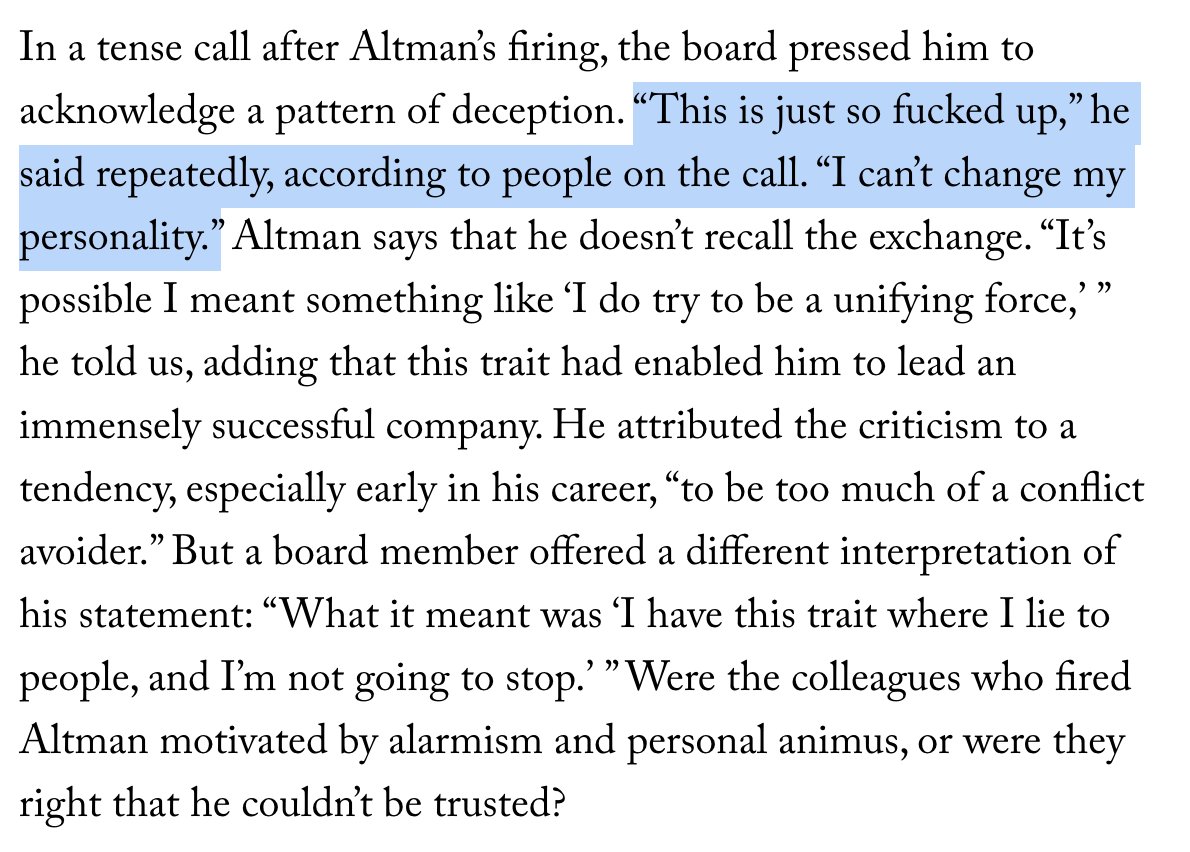

(🧵1/11) For the past year and a half, I've been investigating OpenAI and Sam Altman for @NewYorker. With my coauthor @andrewmarantz, I reviewed never-before-disclosed internal memos, obtained 200+ pages of documents related to a close colleague, including extensive private notes, and interviewed more than 100 people. OpenAI was founded on the premise that A.I. could be the most dangerous invention in human history—and that its C.E.O. would need to be a person of uncommon integrity. We lay out the most detailed account yet of why Altman was ousted by board members and executives who came to believe he lacked that integrity, and ask: were they right to allege that he couldn't be trusted? A thread on some of our findings:

New interviews and closely guarded documents, some of which have never been publicly disclosed, shed light on the persistent doubts about the OpenAI C.E.O. Sam Altman. @AndrewMarantz and @RonanFarrow report. newyorkermag.visitlink.me/ejw-Ob

Buying a media property and having it report to "master of the dark arts" Chris Lehane does have a certain vibe to it... not that TBPN was ever particularly critical in the past though.

TBPN has been acquired by OpenAI. The world is changing quickly but TBPN will stay the same. Live every weekday just with a lot more resources. Thank you to everyone that has been a part of this journey big or small. We are 17 months in and unironically just getting started.

TBPN is my favorite tech show. We want them to keep that going and for them to do what they do so well. I don't expect them to go any easier on us, am sure I'll do my part to help enable that with occasional stupid decisions.

TBPN will remain an independent platform for founders to share news, launch products, and interact with the most engaged audience in tech. We’ll maintain full editorial control while working with OpenAI to scale the show’s reach and production. See you at 11a PT, every weekday.

OpenAI is behind a new “parents and kids” coalition on AI safety legislation. Former members of the group told me they didn’t know until it launched.