Einthecorgi

1.1K posts

@Einthecorgi2

climber, struggling artist, beer enthusiast, embedded systems engineer.

Joined October 2019

305 Following, 827 Followers

@ashen_one GLM5.1 at those quants for 256 isn't very good, learned that from experience.

@p1nosaur But even still, how will it handle many large-context datasheets? I can see that getting expensive. I have messed around with a test tool that holds the KV-cache per datasheet, but this is still way too much data. Maybe mempalace could help.

@Einthecorgi2 yeah really depends on if it found the datasheet for it

i run a pcb design firm and we ship about a board a week

the things that burn us are never the big design calls, it's always a wrong footprint or a swapped pin or a part that went out of stock

so we spent a month building an automated review stack that catches all of this. we check hundreds of parts and flag numerous issues daily

comment "roast me" for early access

@rohanpaul_ai look at the glue on that thread, repeatability is easy. Picking up an arbitrary thread and putting it through a needle is a very different task.

Einthecorgi retweeted

@wildmindai 8B models run pretty fast anyway. Would be cool to see this done with a larger model.

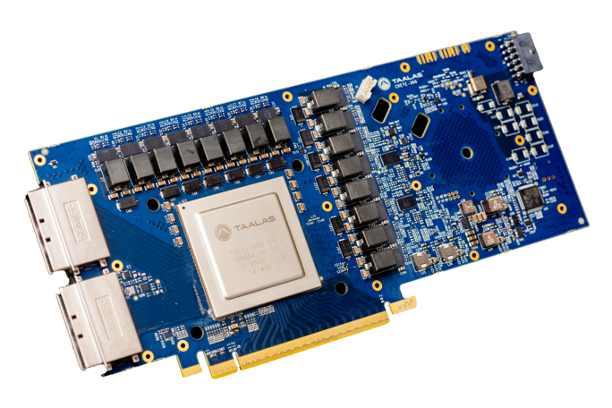

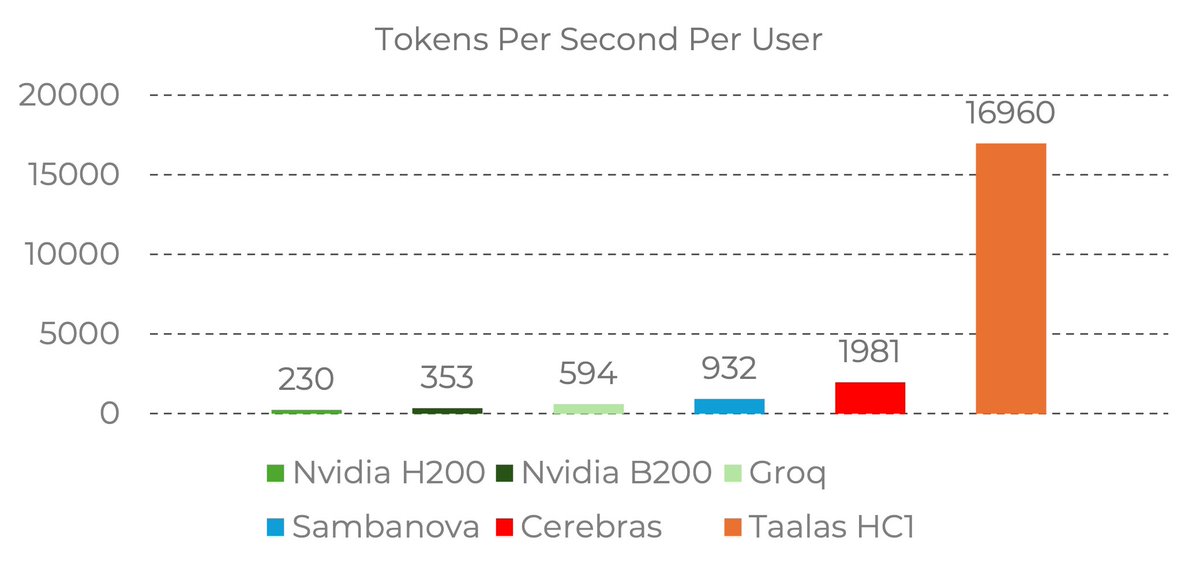

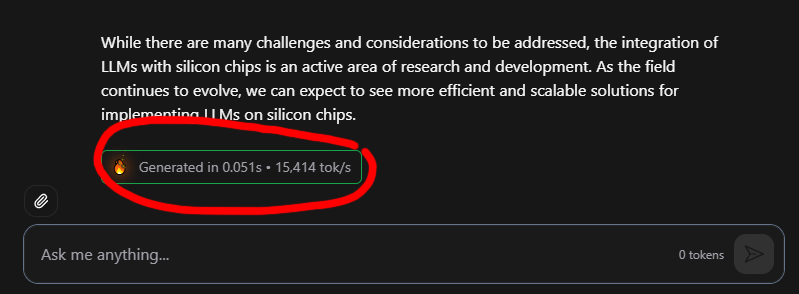

17,000 tokens per second!! Read that again!

LLM is hard-wired directly into silicon. no HBM, no liquid cooling, just raw specialized hardware. 10x faster and 20x cheaper than a B200.

the "waiting for the LLM to think" era is dead. Code generates at the speed of human thought.

Transition from brute-force GPU clusters to actual AI appliances.

taalas.com/the-path-to-ub…

@SamuelBeek what's the difference between cursor+platformio? Just curious.

Einthecorgi retweeted

Toronto runner Mac Bauer (instagram.com/514runner) raced the Finch West LRT on foot yesterday - beat it by 18mins - 39mins of running time, 46mins w/ stops for lights. The LRT & passenger took 1 hour 4mins total. LRT was also 27mins slower than driving which took 37mins

SaaS is Over:

"SaaS apps were built at a time when software was relatively hard to build.

Now many of these AI applications are easy to build on top of these LLMs.

Most companies will start building custom software super easily." @jonsidd

Love to hear your thoughts on this @destraynor @dharmesh @craigzLiszt @jasonlk @FabianHedin @spenserskates

@Zephyr_hg soon it will just be AI scraping AI articles and posting AI articles for the AI to scrape.

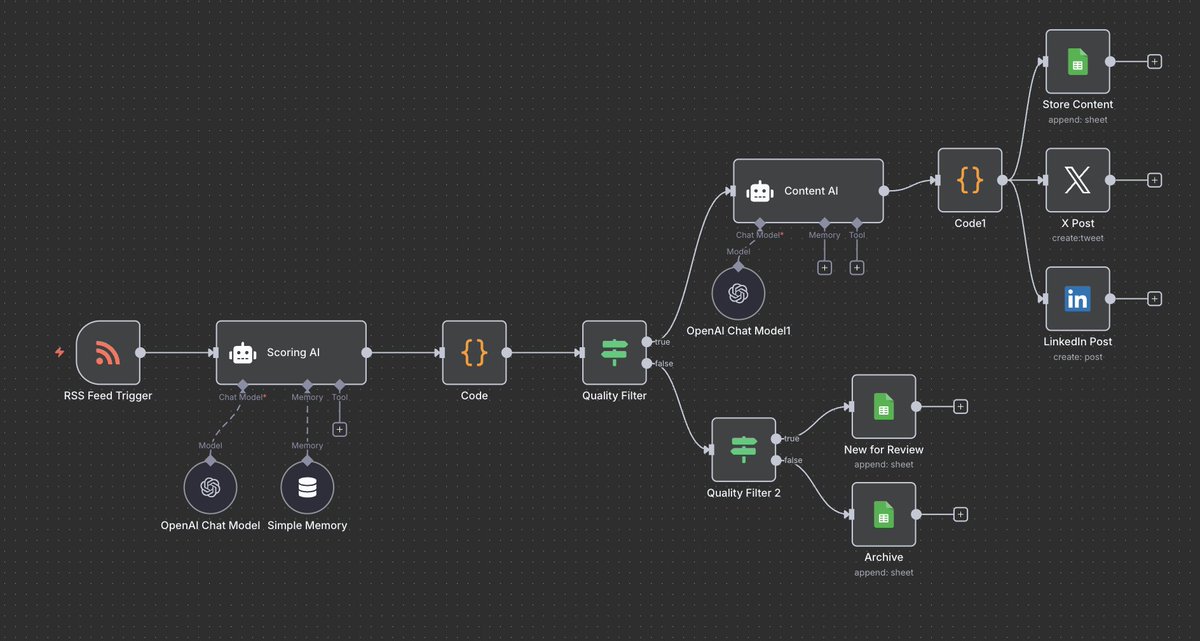

I never run out of content to post anymore.

Built an automation that monitors 50+ news sources, scores articles for relevance, and writes social posts automatically.

It finds trending topics in my niche before they explode everywhere else.

Saves me 15-20 hours monthly and keeps me ahead of every trend.

Comment "NEWS" and I'll DM it to you (must be following)

@elonmusk some feature where videos could be captured & uploaded in a closed loop, allowing users to see if a video is real or AI generated. Will add immense value to this platform as other information sources will not be able to guarantee footage source.

The enshittification of Arduino begins? Qualcomm starts clamping down #Arduino @itsfoss

blog.adafruit.com/2025/11/21/the…

@akshay_pachaar Nobody is sleeping on it, it’s fantastic and all over X

Everyone is sleeping on Meta's SAM 3 release.

But it's actually a big deal. Here's why:

Companies spend millions paying humans to label images and videos frame by frame. A single autonomous driving dataset? Months of work, hundreds of annotators, millions in cost.

Without labeled data, you can't train custom models. Without custom models, you're stuck with generic solutions. This is why most companies never move past pilots.

SAM 3 breaks this cycle.

First let's look at the evolution:

SAM 1 segmented objects when you clicked on them. Revolutionary, but one object at a time.

SAM 2 added video tracking with memory. Game-changing, but you still manually prompted every object.

SAM 3 changes everything with text prompts.

Type "yellow school bus" and it finds ALL of them in your image or video. Not just one. Every instance across thousands of frames.

Now here's where people get confused:

"Can't I just use GPT-5 or Gemini for this?"

No, and here's why that's a terrible approach.

Large multimodal LLMs are great for reasoning, but they're slow and expensive for production visual tasks. You're paying API costs per image, waiting seconds for responses, getting inconsistent results.

SAM 3 runs in 30 milliseconds on a single GPU for 100+ objects. That's 100x faster, and you own the infrastructure.

More importantly, SAM 3 gives you precise pixel-level masks, not descriptions. Try asking an LLM to segment every defective part on a manufacturing line in real-time. It won't work. SAM 3 does this effortlessly.

The real breakthrough is their data engine.

Meta built an AI-human hybrid system that's 5x faster for complex annotations. They trained SAM 3 on 4 million unique visual concepts - 50x more than existing benchmarks like LVIS.

With that breadth of training, it handles everything:

- Text-based concept search

- Interactive refinement with clicks

- Video tracking across frames

- Zero-shot detection of new concepts

The model is open source. Weights, code, and benchmarks are on GitHub.

If you're building computer vision applications, this is the foundation model to evaluate. The annotation time savings alone will pay for integration costs within weeks.

Find the relevant links in the next tweet!

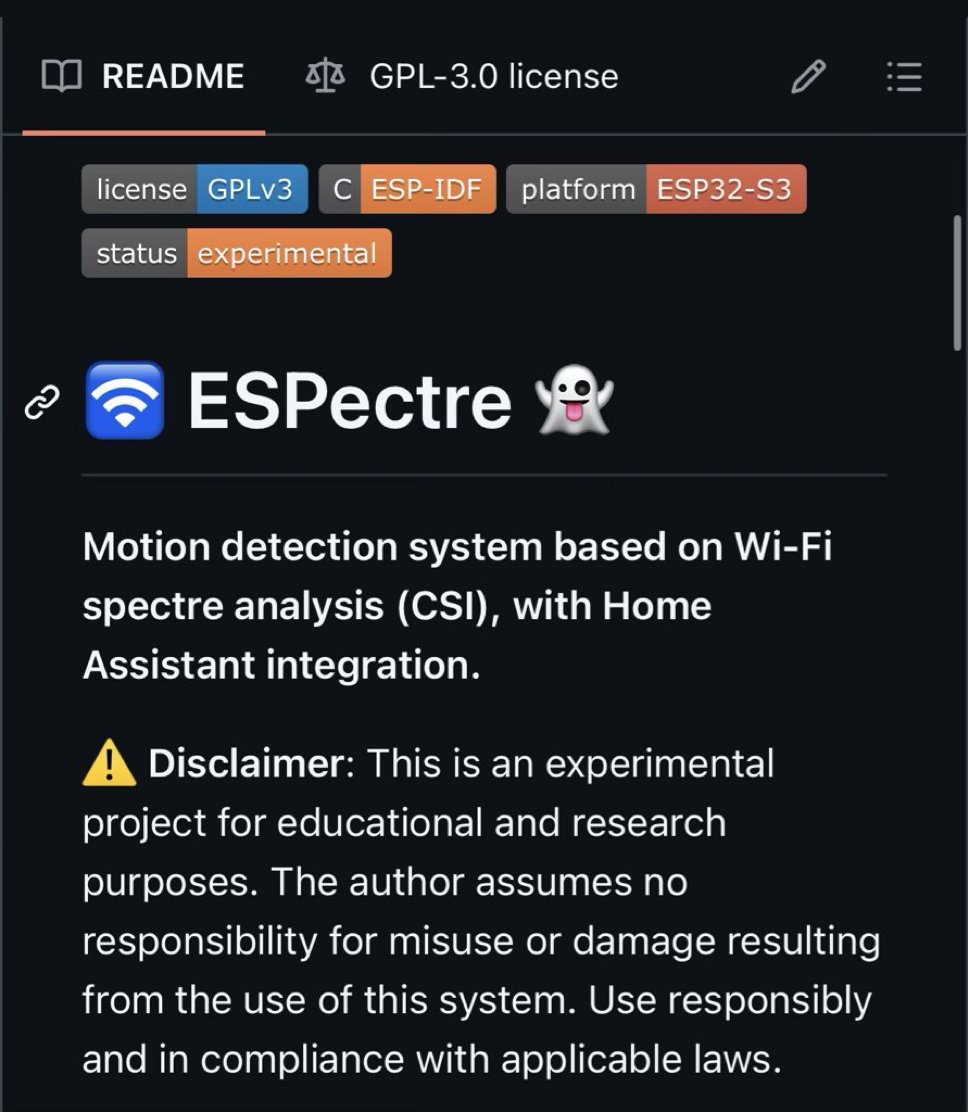

@GithubProjects Anyone tried it with a fan running in the room? I hear wifi motion detection systems don’t like fans
