Filip Pavetić

Gemini 2.0 Flash's video understanding is here 🚀 Think: search in videos via timecodes, extract text from moving-camera footage, analyze screen recordings in real-time interactions with native audio out 🔊 Come and try it at aistudio.google.com 😀 youtu.be/Mot-JEU26GQ?si…

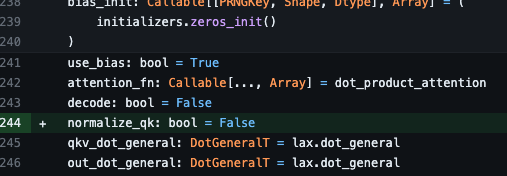

QK normalization is now available in Flax, thanks to @PiotrPadlewski: github.com/google/flax/co…

What do you think are the primary limitations or design choices that feel unnatural when it comes to using Transformers for computer vision (images, videos, ...)?

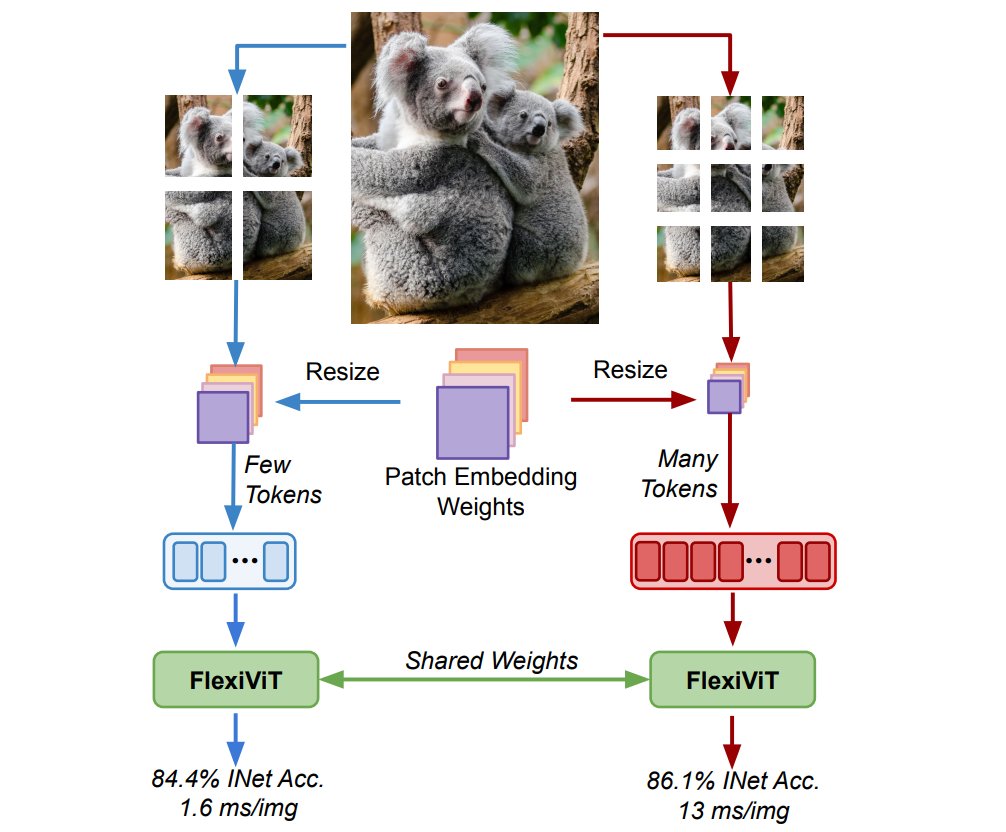

1/ Excited to share "Patch n' Pack: NaViT, a Vision Transformer for any Aspect Ratio and Resolution". NaViT breaks away from the CNN-designed input and modeling pipeline, sets a new course for ViTs, and opens up exciting possibilities in their development. arxiv.org/abs/2307.06304

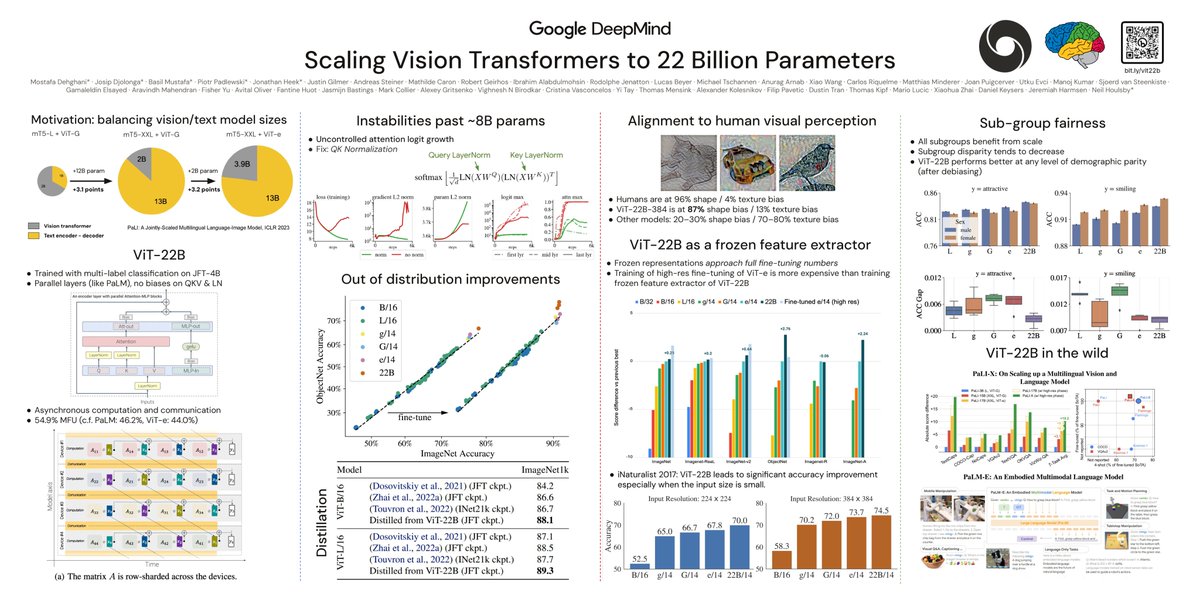

This was a collaboration with an amazing group of people including @PiotrPadlewski, @_basilM, @m__dehghani, @JonathanHeek, @jmgilmer, @AndreasPSteiner, @MJLM3, @mcaron31, @ibomohsin, @RJenatton, @rikelhood, @mechcoder, @anuragarnab, @brainshawn, @giffmana, @mtschannen,...

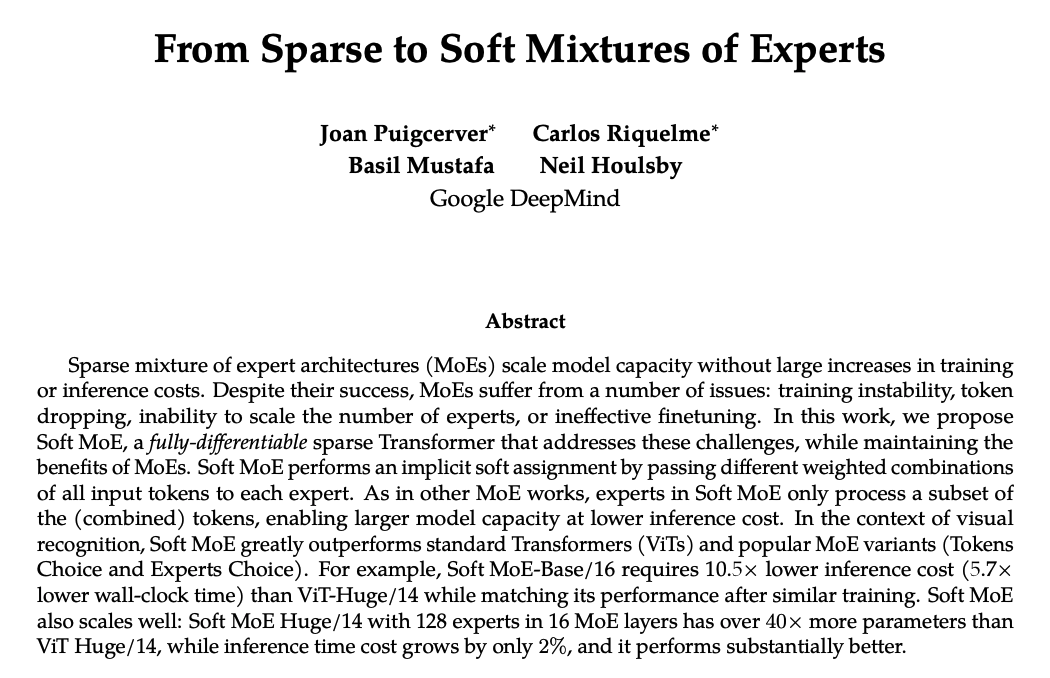

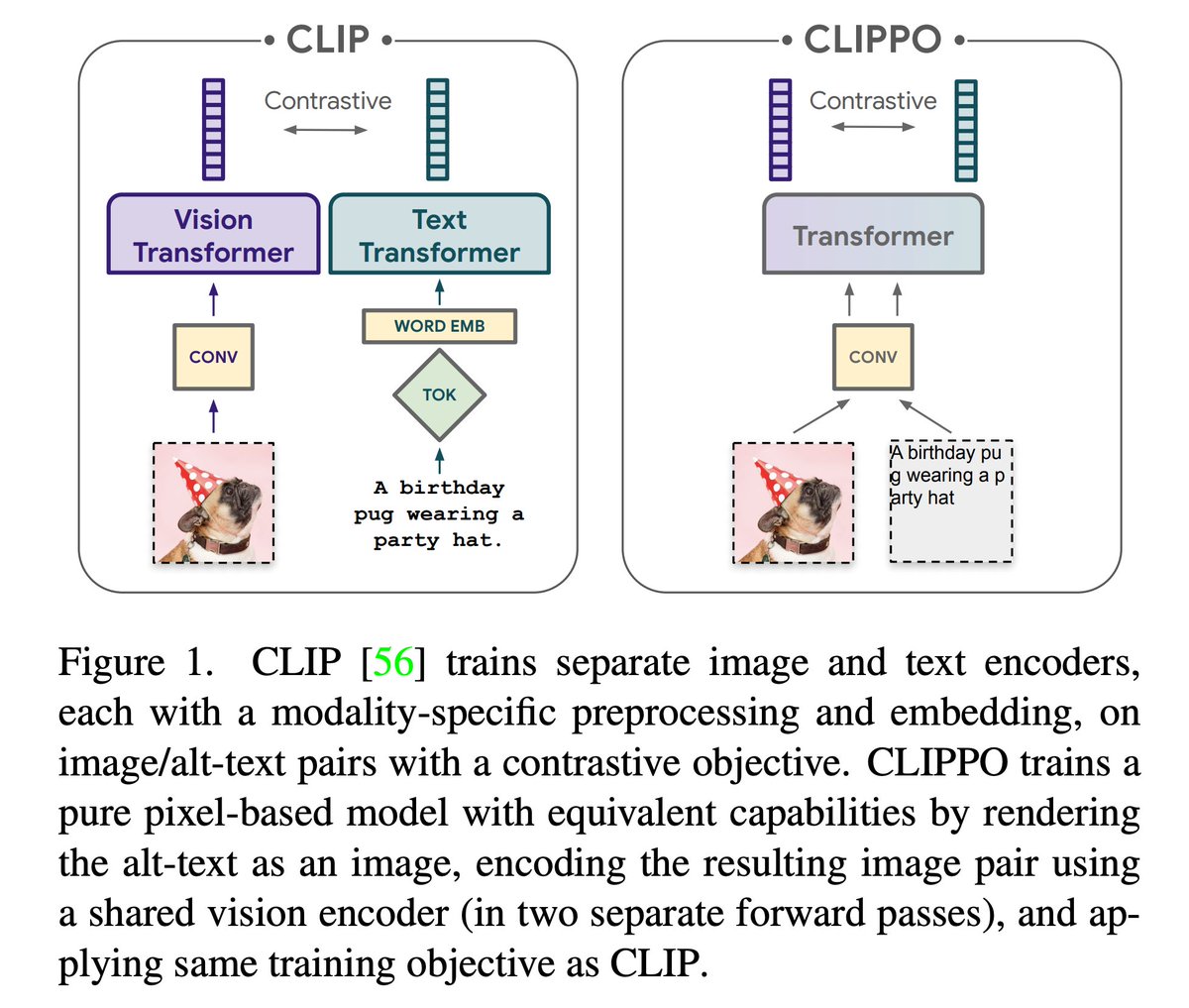

Beep beep! Introducing LIMoE, the Language Image Mixture of Experts: a single model processing both modalities for contrastive image-text modelling. Cruises straight to 84.1% zero-shot ImageNet accuracy without any modality-specific architectures or pre-training. (1/10)