Fabio Angela

@FabioAngela79

Arrogance humiliates even when you are right; humility exalts even when you are wrong. Longtime developer, so many ideas, so much passion, too much maybe!

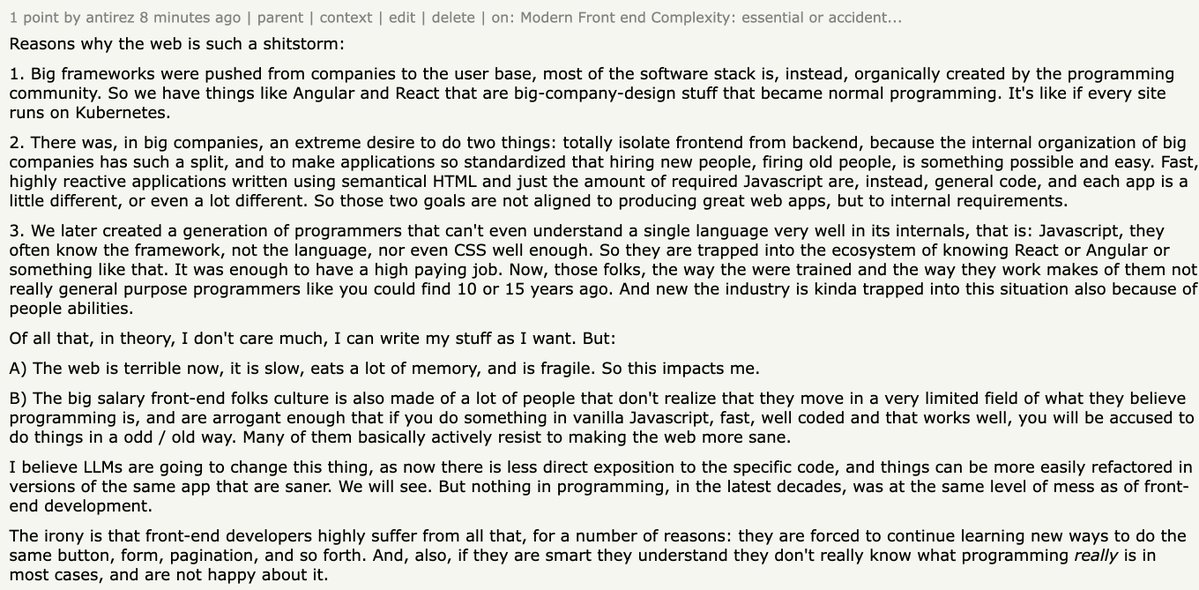

OAI has to be prioritising low/no-thinking GPT-5.5 inference; it's so fast. I think this is better than high thinking: so much less annoying, so much more token efficient.

GPT-5.5 just dropped

TL;DR: This is another o1 / o3 moment
- Shorter, more human, actual personality
- Clearly pushing into personal agents (OpenClaw)
- Higher info / Token density → Cheaper to reach GPT-5.4-level intelligence

It codes different:
- Less bloat
- Cleaner, readable output

Codex:
- Frontier agentic coding
- Backend > Claude Opus 4.6
- Give it specs and it will build it
- Handles large codebases, long runs, visual iteration loops

GPT-5.5 Pro:
- Does 30-90 min runs cohesively like it’s nothing
- Writes full docs, uses tools well
- Can solve basically anything

Tradeoffs:
- Slower than Opus
- More expensive per token
- (but more efficient overall)

GPT-5.5 is the new bar