Chaofei

127 posts


@FanChaofei

Stanford PhD Student | Advancing Brain-Computer Interfaces 🧠 | Decoding the Language of Thoughts 💭

Palo Alto · Joined June 2009

516 Following · 328 Followers

After some reflection, I think I’ll share my experience of moving from a safe place toward something more exciting but risky. It’s less about whether BCI or AI is more promising—that’s personal and depends a lot on timing—and more about the universal question: How do we balance our fear of risk with passion for what we love?


@FanChaofei Curious, what was the motivation? I moved the opposite direction (neuro to AI for science). Lots of interesting overlap


4 yrs ago, I jumped from AI into Brain-Computer Interface (BCI) research—with almost zero neuroscience experience.

Today, I'm at NPTL, one of the world's leading BCI labs, contributing to breakthroughs like restoring speech for people with ALS and developing a robust handwriting BCI.

There's never been a more exciting time to enter the BCI field!

I'm writing a blog about my journey and advice for newcomers.

What questions do YOU need answered?

Ask below 👇


@neurosutras Tough to put a number on it. Neuroscience is kind of like the physics behind rockets—it gives us the core principles. Then we build cool BCI on top. Plus, there’s a flywheel: better BCIs help us learn more about the brain, which makes the next BCIs even better.


BCI experts & professors: I'd appreciate your retweets to help reach curious newcomers!

@SergeyStavisky @chethan @nishalpshah @NirEvenChen @JonAMichaels @tuxedocat @djseo_ @SussilloDavid @SumnerLN

Chaofei retweeted

Congratulations to @CathyrenOleande for winning the Brain-to-Text Benchmark '24 (eval.ai/web/challenges…). Her entry drove the word error rate down from 9.72% (our baseline) to 5.81%, a substantial improvement! Excited to see what we can learn from this approach and others.
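For readers new to the metric: word error rate (WER) is conventionally the word-level edit distance between the decoded sentence and the reference, divided by the number of reference words. The sketch below is a generic illustration of that definition, not the benchmark's official scoring code; the function names `word_error_rate` and `relative_reduction` are hypothetical. It also works out the relative improvement implied by the two figures quoted above.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level edit distance divided by the number of reference words."""
    ref, hyp = reference.split(), hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")
    # prev[j] holds the edit distance between the processed reference
    # prefix and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = prev[j - 1] + (0 if ref_word == hyp_word else 1)
            deletion = prev[j] + 1      # reference word missing from hypothesis
            insertion = curr[j - 1] + 1  # extra word in hypothesis
            curr.append(min(substitution, deletion, insertion))
        prev = curr
    return prev[-1] / len(ref)


def relative_reduction(baseline: float, improved: float) -> float:
    """Fraction by which the error rate dropped relative to the baseline."""
    return (baseline - improved) / baseline


one_error_in_three = word_error_rate("the cat sat", "the bat sat")
benchmark_gain = relative_reduction(9.72, 5.81)  # roughly 0.40
```

Going from 9.72% to 5.81% is therefore a relative reduction of roughly 40% in word errors.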

Chaofei retweeted

Our silent speech preprint is live! Using a cross-modal training technique enhanced by LLMs, we set a new state-of-the-art for silent speech (12.2% word error rate, open vocabulary) and brain-to-text (8.9% WER; Rank 1 on Brain-to-Text Benchmark '24) arxiv.org/abs/2403.05583

Chaofei retweeted

Are you a postbac interested in neural engineering / BCIs? 🧠🤖🗣️

Come join our team!! Work directly with our amazing BCI participants.

Great exposure for prospective grad/med school applicants! snel.ai/positions

Chaofei retweeted

If you're attending SfN 2023 and you're interested in speech decoding, come check out my poster on Wednesday morning! We demonstrate a highly accurate, rapidly calibrating brain-to-text BCI for restoring communication.

PSTR488.12 / JJ23

abstractsonline.com/pp8/#!/10892/p…


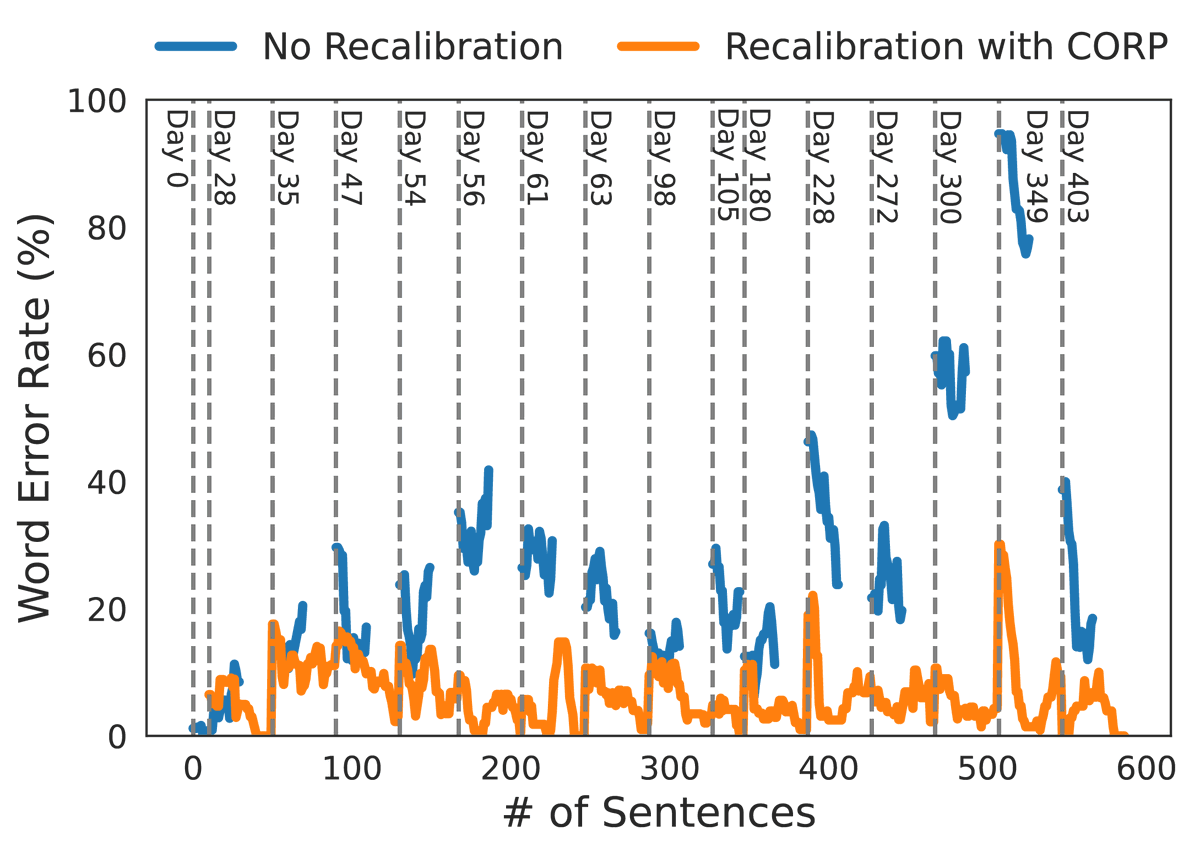

💭Imagine relying on a brain-computer interface (BCI) as your only means of communication, but it's inconsistent and needs frequent recalibration. For those who can't move or speak, this isn't just frustrating; it's a huge barrier.

🌟Our #NeurIPS2023 paper introduces a promising solution: Continual Online Recalibration with Pseudo-labels (CORP). Over an entire year (403 days), CORP demonstrated remarkable stability in an online handwriting BCI task. With a 6.16% word error rate, it significantly outperforms existing recalibration methods. 🥇To the best of our knowledge, this is the longest demonstration of intracortical BCI plug-and-play stability to date.

⚙️Technical Insight:

1⃣CORP leverages large language models to auto-correct BCI outputs. The outputs are accurate enough to be used as pseudo training labels, enabling unsupervised recalibration without user interruption.

2⃣We also implemented a replay buffer and data augmentation strategies to tackle the challenges of continual learning.
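The two points above can be sketched as a toy recalibration step. This is a minimal illustration under loud assumptions, not the code from the CORP repository: a small vocabulary lookup via `difflib` stands in for the large language model, Gaussian noise stands in for the data augmentation, and every name here (`VOCAB`, `correct_with_lm`, `ReplayBuffer`, `augment`, `recalibration_batch`) is hypothetical.

```python
import random
from collections import deque
from difflib import get_close_matches

# Hypothetical vocabulary standing in for a large language model.
VOCAB = ["hello", "world", "brain", "computer", "interface"]


def correct_with_lm(raw_words):
    """Stand-in for LM auto-correction: snap each word to its closest vocabulary entry."""
    corrected = []
    for word in raw_words:
        match = get_close_matches(word, VOCAB, n=1, cutoff=0.6)
        corrected.append(match[0] if match else word)
    return corrected


class ReplayBuffer:
    """Keeps recent (features, pseudo_label) pairs so older data is revisited."""

    def __init__(self, capacity):
        self.items = deque(maxlen=capacity)

    def add(self, example):
        self.items.append(example)

    def sample(self, k, rng):
        k = min(k, len(self.items))
        return rng.sample(list(self.items), k)


def augment(features, rng, noise_std=0.1):
    """Assumed augmentation: add small Gaussian noise to each feature."""
    return [x + rng.gauss(0.0, noise_std) for x in features]


def recalibration_batch(features, raw_words, buffer, rng, n_replay=2):
    """Build one training batch from a new trial plus replayed older trials."""
    pseudo_label = correct_with_lm(raw_words)  # corrected output used as the label
    new_example = (augment(features, rng), pseudo_label)
    batch = [new_example] + buffer.sample(n_replay, rng)
    buffer.add((features, pseudo_label))  # store the unaugmented trial for later replay
    return batch


rng = random.Random(0)
buffer = ReplayBuffer(capacity=100)
batch = recalibration_batch([0.2, 0.5], ["helo", "wrld"], buffer, rng)
```

The batch would then be fed to whatever decoder update is in use; that step is omitted here because the posts do not describe it. Mixing replayed trials with the new one is the part meant to limit forgetting during continual learning.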

🤝We encourage the research community to build on our findings. Our data and code are open for further research and collaboration.

Paper: arxiv.org/abs/2311.03611

Code: github.com/cffan/CORP

Data: doi.org/10.5061/dryad.…

This work was the result of amazing collaboration with @WillettNeuro, @xoxo_meme_queen, Nick Hahn, Foram Kamdar, Donald Avansino, Leigh Hochberg, Krishna Shenoy, Jaimie Henderson, and our clinical-trial participant T5!


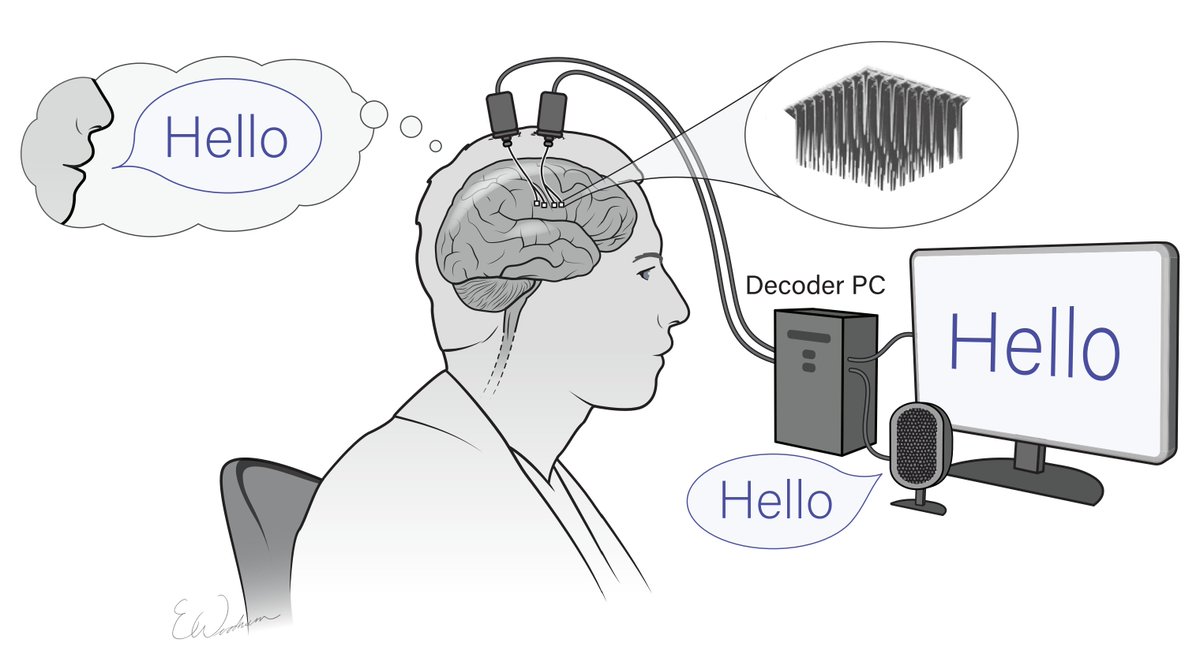

An incredible advancement! This development is a pivotal step toward making speech BCI accessible and usable in our everyday lives. 🧠🗣️

Nick Card@NS_Card

We're so excited that our speech neuroprosthesis project won the 2023 BCI Award! Thanks to all the coauthors for all their hard work @Maitreyee_W @SergeyStavisky @DrDavidBrandman @ca_rrina @BrainGateTeam @neuroleigh @WillettNeuro @pearlsandpython @FanChaofei @JaimieHenderson

Brain-to-speech stands as a compelling new research area, especially since neural activity diverges significantly from audio. Our paper just begins to touch upon the depth of the issue. We invite the community to delve deeper with us.

Frank Willett@WillettNeuro

We are publicly releasing all data and code, and are hosting a machine learning competition! Can you do better than us at translating neural activity into text? 2/3 eval.ai/web/challenges…

Chaofei retweeted

Our new study is out today in Nature! We demonstrate a brain-computer interface that turns speech-related neural activity into text, enabling a person with paralysis to communicate at 62 words per minute - 3.4 times faster than prior work. 1/3 nature.com/articles/s4158…

@_KarenHao Are you suggesting that no new technology could be developed until there is a guarantee of equal access?