Curly Chen

416 posts

Curly Chen

@Fangchen0105

健身,右派偏自由主义

@mylifcc arxiv.org/pdf/2511.00739 乔治亚理工跟英特尔的合写的一篇报告有研究工具处理阶段cpu占总延迟比例。第二个我也没看到相关数据,

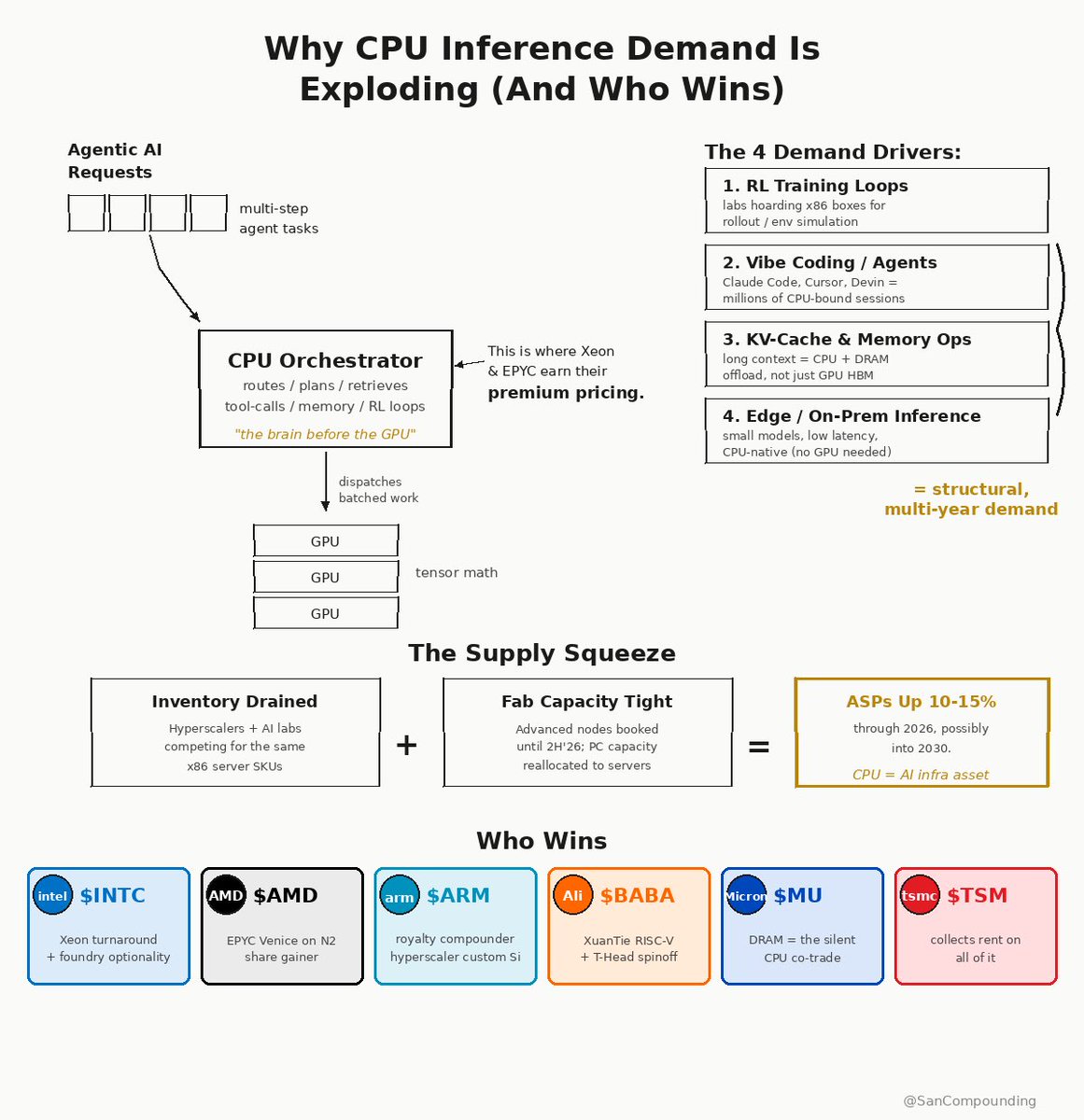

生成式AI往代理式AI迁移中,新的卡脖子环节又出现了,这次是CPU。之前市场关于算力紧缺的讨论都在GPU、HBM、光模块、电力等环节,其实对于CPU的关注比较少。其实Cpu的紧缺传了一段时间了,看最近英特尔、AMD走势最核心驱动力就是来自cpu开始出现紧缺了,甚至连过往不怎么受待见的港股联想集团,最近两周走的也很强。 1、为什agentic ai时代CPU占比会扩大? 传统AI(主要是大模型训练/推理)高度依赖GPU,因为Transformer的核心是并行矩阵运算,GPU擅长高吞吐的并行计算。这时CPU主要只负责“辅助”:数据路由、内存压缩、GPU调度等,导致数据中心CPU:GPU比例很低(典型1:4~1:8,甚至1颗CPU管8颗GPU)。CPU利用率低,基本是配角。 Agentic AI完全不同,它不是单次“问答”,而是自主多步循环(Planning → Tool Use → Act → Observe → Reflect → Iterate),涉及: 1)编排:调度子任务、多智能体协作、分支逻辑、重试机制。 2)工具调用:网页搜索、API调用、代码执行、数据库查询、向量检索(RAG)、文件处理等。 3)其他CPU密集任务:上下文管理、KV Cache处理、强化学习(RL)仿真评估、数据预/后处理。 这些任务高度串行、I/O密集、逻辑分支多,GPU并不擅长(甚至会闲置)。研究显示:工具处理阶段在CPU上可占总延迟的50%~90.6%(GPU在等待CPU)。Agentic工作流中CPU动态能耗占比可达44%,比传统AI高3~4倍。 简单说,Agentic AI把“思考”交给GPU,但把“做事/协调”交给CPU。CPU从“管家”变成了“总指挥”,必须大幅增加才能让整个系统高效运转。这就是CPU占比扩大的核心驱动(Intel、AMD、Arm、TrendForce等一致观点)。 2、CPU成为新紧缺环节的现实证据 今年Q1 Intel/AMD服务器CPU交期已经拉到6-12周,部分型号基本售罄,价格也提了10%以上。厂商自己都说“demand far exceeded expectations”。不是产能不够,而是Agentic AI把CPU从“可有可无”直接干成了“必须配足”的总指挥。 数据中心项目现在除了电力,就是CPU卡脖子最严重。传统x86(Intel/AMD)高功耗+产能紧张,供应链直接打爆。 3、CPU缺口会有多大? 行业共识是CPU:GPU比例将显著拉近,CPU需求大幅提升:从传统1:4~1:8(CPU:GPU)转向1:1~1:2(部分场景甚至1.4:1,即CPU比GPU还多)。看之前Arm估算,每GW算力需要的CPU核心从3000万激增到1.2亿(4倍增长) CPU算力份额:在Agentic工作流中,CPU承担的算力比未来机架/集群可能从“GPU主导”转向更平衡,甚至出现专用CPU rack来支撑Agentic编排;AMD/NVIDIA新一代平台已开始按1:2~1:4设计 这就带来了CPU需求的真实拐点,是实打实的硬件重构。 4、特别要说下ARM服务器CPU会更受益一些? Agentic AI最需要的就是“高核心数+低功耗+稳定串行处理”。ARM天生多核可扩展、perf/watt领先:Arm AGI CPU(136核,TDP仅300W)对比x86同规格功耗低40%+,每机架性能直接翻倍。风冷机架就能塞8000+核,液冷更能到4万+核,完美解决数据中心的“功耗墙”。 更狠的是生态大转向:AWS Graviton、Google Axion、Microsoft Cobalt早就自研ARM,云巨头集体“去x86化”。Arm 3月直接下场自研AGI CPU(首款量产芯片),Meta、OpenAI、Cerebras都是首发伙伴,OEM有联想、Supermicro。 Counterpoint预测:AI ASIC服务器CPU里,ARM份额从2025年25%干到2029年90%。Arm自己说,这波能把数据中心CPU TAM从30亿版税干到1000亿+,未来几年服务器CPU营收很可能超手机,成为最大增长极。 看下周和5月初英特尔、amd的财报电话会上,cpu实际出货量的变化、以及cpu的真实价格变化。这能说明真的有多紧缺。 5、CPU紧缺哪些公司会受益? 梳理了下哪些公司会受益,后续关注起来: 美股最核心: Intel (INTC)ntel 依然是服务器 CPU 市场的霸主。短缺潮会提升其过往型号的利润率,且其 Gaudi 与 Xeon 的组合在代理推理端有强劲需求。 AMD (AMD):理由:在 Agentic AI 服务器市场,AMD 的 EPYC 处理器因多核心优势和高性价比,目前在云厂商中的市占率持续提升,是 GPU+CPU 均衡配置趋势下的首选。 Arm Holdings (ARM):越来越多的云厂商(亚马逊、微软、谷歌)开始自研基于 ARM 架构的 CPU。无论谁赢,只要 Agent 需求推高 CPU 核心数,Arm 的授权费就会大涨。 港股(制造与分销关键点) 中芯国际 (0981):虽然其在最先进制程受限,但大量非核心逻辑控制芯片(支持 CPU 运作的辅助芯片)和中端 CPU 的需求外溢,会显著提升其产能利用率。 联想集团 (0992):全球第一大服务器与 PC 厂商。在短缺潮初期,拥有强大供应链管理能力和库存的大厂能通过提价和保证供应,抢占更多政企市场份额。 A股(国产替代与配套产业链) 海光信息 (688041):国产 x86 服务器 CPU 的龙头。在 Agentic AI 时代,由于其架构与全球生态兼容性最好,国内算力中心在补齐 CPU 短缺时,海光是第一顺位替代品。 龙芯中科 (688047):自主架构 CPU 的代表。随着国产自主可控需求增强,在党政和关键基础设施的 Agent 应用中受益。 深南电路 (002916) / 沪电股份 (002463):理由:配套受益。CPU 核心数增加和 GPU+CPU 配比调整,要求更复杂的 PCB(印制电路板)和封装基板,这些公司是全球高端服务器 PCB 的主力供应商。 澜起科技 (688008):内存接口芯片龙头。只要 CPU 多,内存条就多。Agent 时代对内存带宽要求极高,其 MRDIMM 和内存接口芯片是 CPU 性能爆发的必需品。 投资逻辑核心其实两点: 1)量价齐升:CPU 厂商(AMD, Intel, arm、海光)最直接。 2)卖铲子的人:由于 Agent 需要高带宽,内存配套(澜起)和先进封装/基板(深南)的需求甚至比 CPU 本身更稳。