Fanglin Lu retweeted

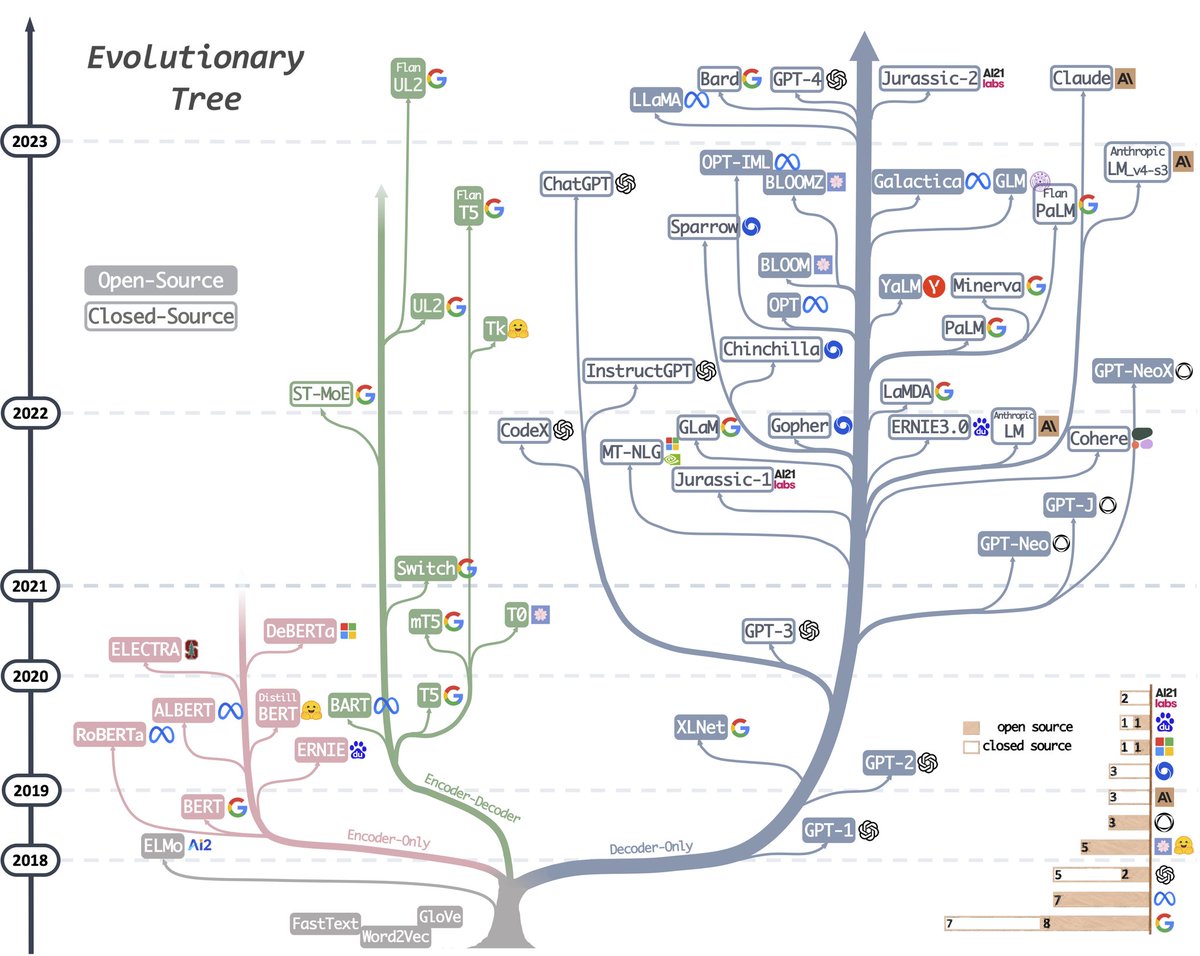

How long have you been "planning to understand" how modern LLM inference works?

We just gave you a readable version of SGLang you can finish over the weekend.

Introducing mini-SGLang ⚡

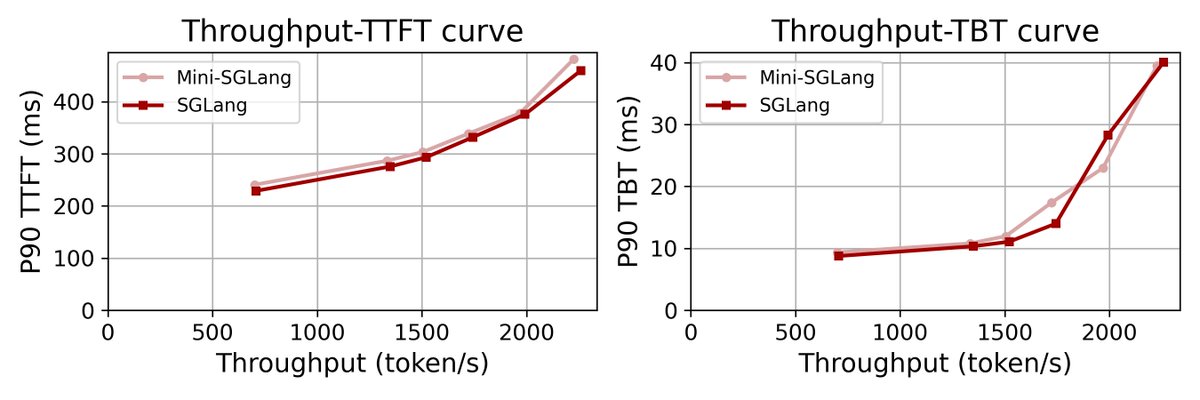

We distilled SGLang from 300K lines into 5,000. Kept the core design, cut the complexity, without sacrificing performance: nearly identical to SGLang online.

It is built for engineers, researchers, and students who want to see how inference really works and who learn better from code than from papers.

⭐ Star us on GitHub: github.com/sgl-project/mi…

🧵 (1/3)
