Pinned Tweet

Gradium

107 posts

Gradium

@GradiumAI

The voice layer for modern apps and agents. Real-time, scalable voice APIs: TTS, STT, turn-taking & voice cloning. Devs: build → https://t.co/r5CdNClhI5

Joined September 2025

1 Following · 3.4K Followers

I care a lot about 1) latency, and 2) good benchmarks.

The voice models from @GradiumAI occupy the top spot on the @covaldev voice AI benchmark leaderboard, which tests both latency and accuracy.

We've made the Gradium models the default in our open source, massively multiplayer LLM game. You play the game by talking to your "ship AI."

Of course, humans aren't the only talkers in the universe these days. So @chadbailey59 has been building OpenClaw bots that play the game, too!

Here's a video of an OpenClaw agent playing the game by talking to the agent's ship AI. Chad created specific personalities for both the OpenClaw bot and the ship AI. Gradium's voice models are very emotive, and they support voice cloning and customization.

I love how morose and pessimistic the ship AI is, and how excitable and cheerful the OpenClaw bot is.

This is not scripted at all. These are two voice AI agents just doing their thing.

You define them in code, run them in Vercel sandboxes, and plug them into the running game.

The same pattern works for any live application that needs isolated, on-demand subagent execution alongside the main pipeline.

producthunt.com/products/gradi…

You can now bring your own subagents to Gradient Bang, @pipecat_ai's multiplayer, LLM-driven space trading game.

Voice is the primary input, and Gradium is the default TTS model. Live on Product Hunt today.

Gradium retweeted

Alright, it’s now official: barely 9 months old, and @GradiumAI is already trouncing the entire voice AI field on third-party TTS benchmarks.

Better than OpenAI

Better than Eleven Labs

Better than Cartesia

Better than Deepgram

…

Gradium retweeted

Most TTS benchmarks come from the vendors themselves. Conditions get picked to flatter the system being measured: studio-quality text, simple inputs, and P50 only, with no spread.

The numbers are real. They're just not comparable. And they don't tell you what happens when 5% of your calls hit a 600ms tail.

We built benchmarks.coval.ai to fix that. Standardized conditions, open methodology, open code, continuously updated.

Today's snapshot: Gradium ranks first on both latency metrics we measure (P50 TTFA and P25-P75 spread) without trading off intelligibility. Their WER is competitive with the top of the field.

The full per-provider breakdown is on our benchmarks page.

Run it yourself: github.com/coval-ai/bench…

Live results: benchmarks.coval.ai/tts
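To make the two latency metrics concrete, here is a minimal sketch of how a median (P50) and a P25-P75 spread can be computed from raw latency samples. It is not Coval's code: the repository linked above defines the real methodology. It assumes TTFA means time-to-first-audio, assumes linear-interpolation percentiles (Coval's exact percentile convention isn't stated here), and the function names and sample numbers are hypothetical.

```python
import math


def percentile(samples, p):
    """Return the p-th percentile (0-100) of samples.

    Assumption: linear interpolation between closest ranks, which is
    NumPy's default convention; the benchmark may use a different one.
    """
    if not samples:
        raise ValueError("need at least one latency sample")
    xs = sorted(samples)
    k = (len(xs) - 1) * p / 100.0      # fractional rank
    lo, hi = math.floor(k), math.ceil(k)
    return xs[lo] + (xs[hi] - xs[lo]) * (k - lo)


def summarize_ttfa(samples_ms):
    """Summarize hypothetical time-to-first-audio samples in milliseconds."""
    p25 = percentile(samples_ms, 25)
    p75 = percentile(samples_ms, 75)
    return {
        "p50": percentile(samples_ms, 50),   # the typical request
        "p25_p75_spread": p75 - p25,         # interquartile range: how tightly requests cluster
        "p95": percentile(samples_ms, 95),   # a tail that the median alone hides
    }


# Two made-up providers with the same median but very different consistency.
steady = [200, 201, 202, 203, 204]
jittery = [120, 160, 202, 400, 600]
print(summarize_ttfa(steady))
print(summarize_ttfa(jittery))
```

Both made-up providers report a P50 of 202 ms, so a P50-only table would rank them as equal; the spread (2 ms versus 240 ms) and the P95 expose the difference, which is the point being made about tails and consistency.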

The 2ms P25-P75 spread is the number worth paying attention to.

Nearly every request lands at the same latency. In production voice agents, that consistency is what makes turns feel natural rather than unpredictable.

gradium.ai/blog/coval-tts…

Gradium retweeted

I was on a flight when I got this idea.

Here's another project I made during my break

Audiobook ListenHER 💅

Turn any book you own (PDF) into an audiobook narrated in your favourite person's voice.

Just get them to yap for a few seconds and that's it!

Made with @OpenAIDevs Codex

Voice cloning from @GradiumAI

Voice cloned for demo purposes from 10 seconds of audio, sub-second turn-taking, and everything you see runs on Gradbot.

github.com/gradium-ai/gra…

Gradium retweeted

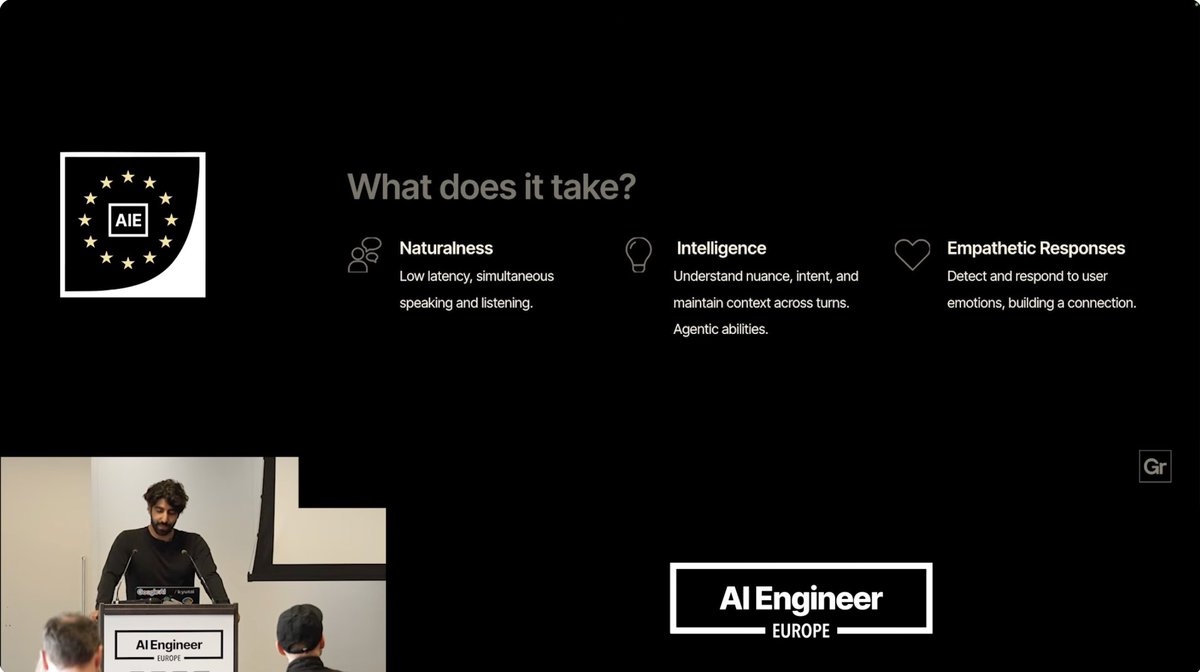

If you want to understand where the industry is when it comes to TTS, STT and speech-to-speech, I would recommend watching the recent AI Engineer talk from @neilzegh.

He compares some scenes from the movie "Her", which is so frequently held up as the ideal voice experience, and explains what is still missing from that experience stack.

Super glad that @aiDotEngineer exists @swyx :)

Berlin did have game :)

Back at a hackathon after a while, and Big Berlin Hack felt like a new era: AI now multiplies what you can build in 1-2 days.

I built Mira: an AI creative director that turns product briefs into launch videos, with voiceover powered by @GradiumAI / gradium.ai

miracuts.com

Pratim🥑 @BhosalePratim

I didn’t know Berlin had game. Looking forward to what everyone builds with @GradiumAI

@ClementDelangue @kyutai_labs @ElevenLabs Here you go. Made by gradbot in one command: "I want to create a vintage conversational agent using the talkie model huggingface.co/awilliamson/ta…"

Can someone build the Reachy Mini app that lets it speak like Talkie from @AlecRad in a posh pre-1931 British voice? I want to hear a next-gen robot channeling the 1920s!

Space: huggingface.co/spaces/multimo…

How to build a Reachy Mini app: huggingface.co/blog/clem/reac…

Gradium retweeted

European Summer, a little bit different ;)

If anyone ever needed an excuse to spend a summer weekend in Paris, here it is!

We are back, teaming up with OpenAI & Hexa, with support from SLNG, Gradium, fal, XAnge & Fastino Labs, for a one-day, 100-person hack in Paris in two weeks (16th of May).

Applications are open now 👇 (our last Paris hack had >550 applications, so you'd better be fast)