Alfc Oleol

41 posts

People talk, listen, watch, think, and collaborate at the same time, in real time. We've designed an AI that works with people the same way. We share our approach, early results, and a quick look at our model in action. thinkingmachines.ai/blog/interacti…

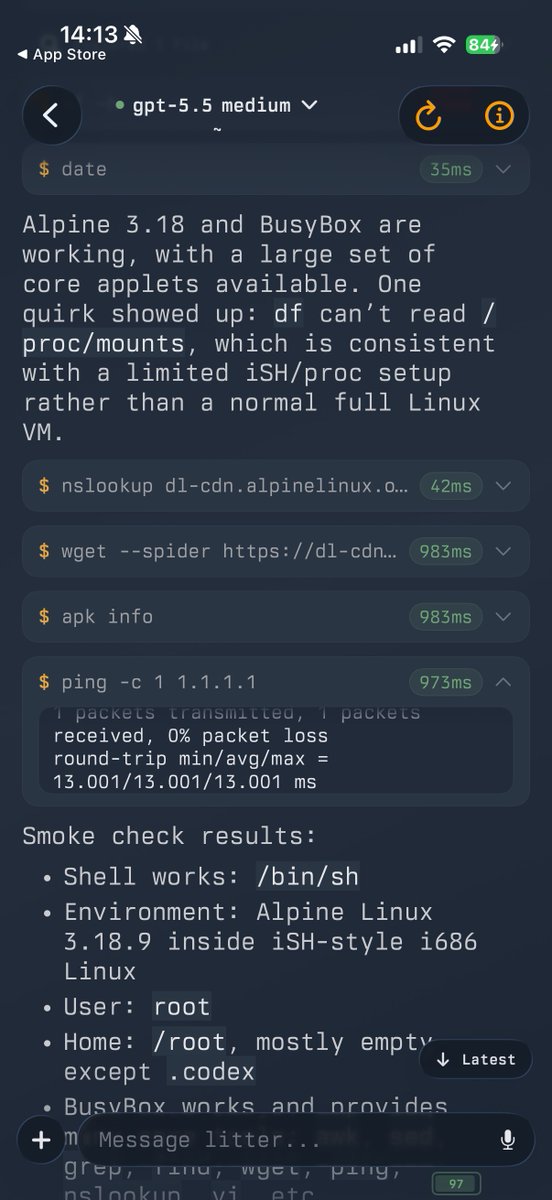

come on apple

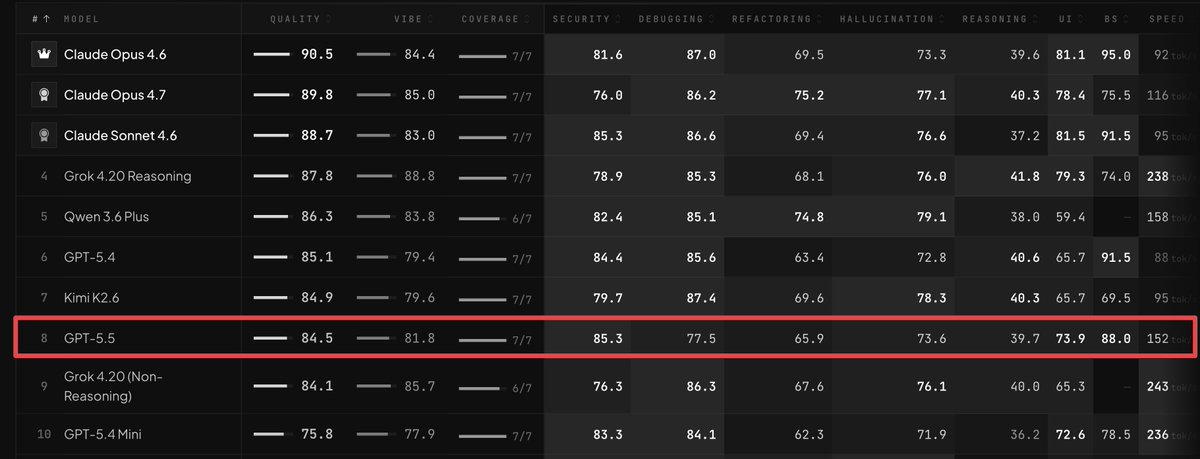

DeepSeek V4 Flash 284B is just one point better than Qwen3.6 27B... @alibaba @Alibaba_Qwen and a 3090

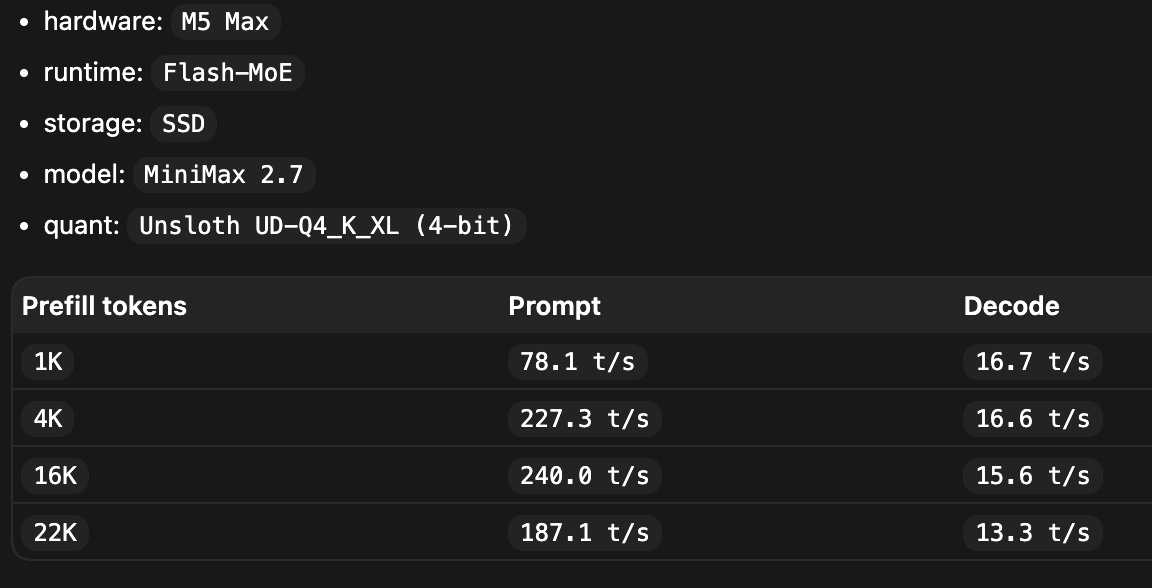

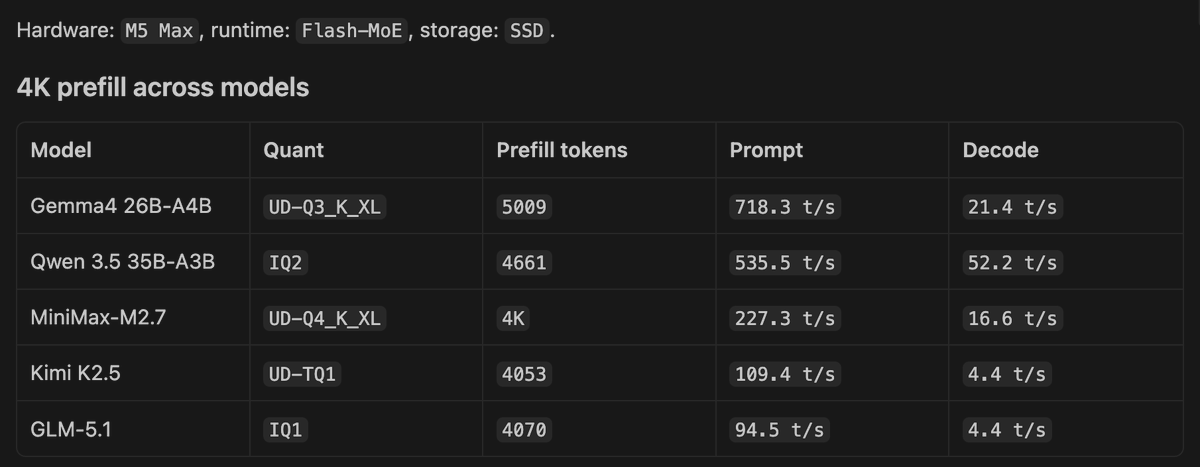

WIP: Merged: prefill dedup for Flash-MoE. MiniMax only for now while I validate the other models. Layer-major prefill runs one layer at a time, groups repeated expert picks across the prefill chunk, loads each unique expert from SSD once, and reuses it for every token that picked it, instead of reloading per token. Bigger prefill chunk → more reuse → cheaper prefill. Prefill is now compute-bound, not I/O-bound. That should help both GPU and ANE prefill at large batch sizes. Can we speed up regular MoE MLX prefill? 🤔 github.com/Anemll/anemll-…
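Rough sketch of the dedup idea for one layer, in plain Python. This is not the Flash-MoE code: `group_tokens_by_expert`, `prefill_layer`, `naive_load_count`, and the `load_expert` / `apply_expert` callbacks are hypothetical names made up for illustration, and real expert weights would be tensors read from SSD rather than the stand-in values used here.

```python
from collections import defaultdict


def group_tokens_by_expert(expert_picks):
    """Map each expert id to the token indices in the chunk that picked it.

    expert_picks[t] is the list of expert ids the router chose for token t.
    """
    groups = defaultdict(list)
    for token_idx, picks in enumerate(expert_picks):
        for expert_id in picks:
            groups[expert_id].append(token_idx)
    return dict(groups)


def prefill_layer(expert_picks, load_expert, apply_expert):
    """Run one MoE layer over a prefill chunk, loading each unique expert once.

    load_expert(expert_id) stands in for the SSD read (hypothetical callback).
    apply_expert(weights, token_idx) stands in for the expert compute.
    Returns the per-token expert outputs and the number of loads performed.
    """
    outputs = [[] for _ in expert_picks]
    loads = 0
    for expert_id, token_ids in group_tokens_by_expert(expert_picks).items():
        weights = load_expert(expert_id)  # one load per unique expert
        loads += 1
        for t in token_ids:  # reuse the loaded weights for every token
            outputs[t].append(apply_expert(weights, t))
    return outputs, loads


def naive_load_count(expert_picks):
    """Loads needed when every token reloads each expert it picked."""
    return sum(len(picks) for picks in expert_picks)
```

With a 4-token chunk where each token picks 2 experts, e.g. `[[0, 3], [3, 5], [0, 5], [3, 7]]`, the per-token approach does 8 loads and the grouped one does 4 (experts 0, 3, 5, 7). A bigger chunk means more tokens share each loaded expert, which is where the "more reuse → cheaper prefill" effect comes from.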