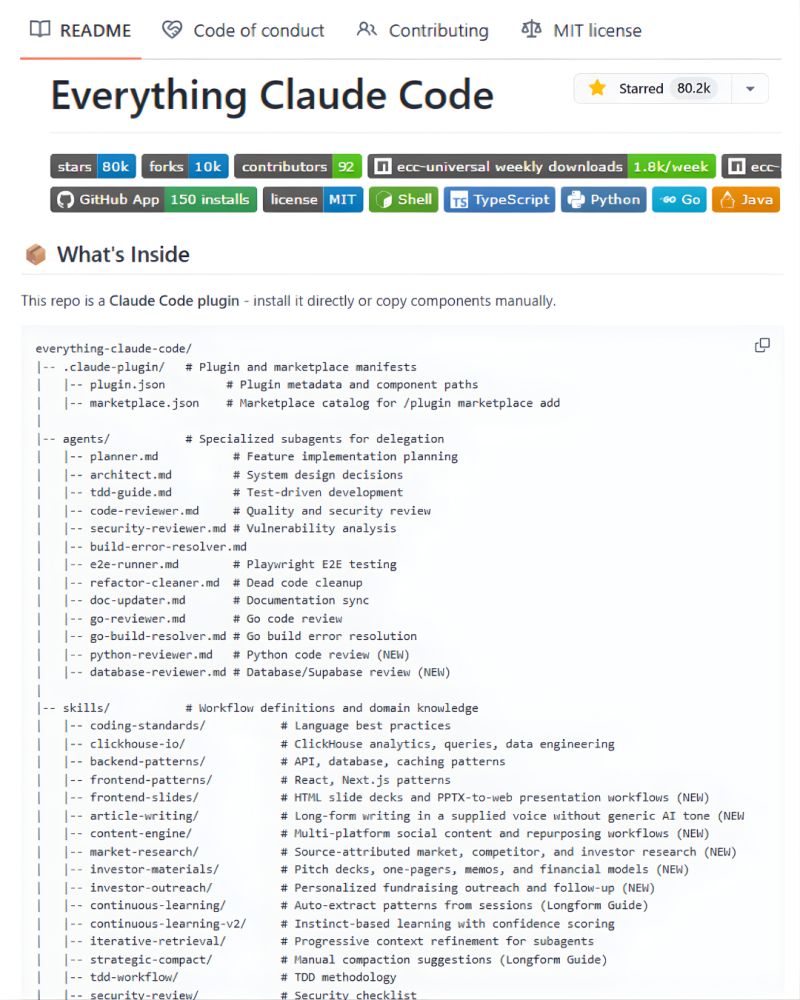

@Suryanshti777 30 specialized agents is impressive until one silently modifies a file another depends on. I run a similar setup — the thing that saved me was a phase-gate system where no agent acts without explicit approval at each stage.
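For illustration, here is a minimal sketch of what a phase gate like that could look like. It is not the setup described in the post: the names `PhaseGate`, `Phase`, `GateError`, the three phases, and the per-file ownership check are all assumptions made for the example.

```python
from dataclasses import dataclass, field
from enum import Enum


class Phase(Enum):
    # Hypothetical stages; a real pipeline may define different ones.
    PLAN = 1
    EDIT = 2
    VERIFY = 3


class GateError(RuntimeError):
    """Raised when an agent tries to act without the required approval."""


@dataclass
class PhaseGate:
    # (agent, phase) pairs that have been explicitly approved.
    approved: set = field(default_factory=set)
    # First agent to edit a file becomes its owner (an assumed policy).
    owners: dict = field(default_factory=dict)

    def approve(self, agent: str, phase: Phase) -> None:
        """Record an explicit approval for one agent at one phase."""
        self.approved.add((agent, phase))

    def act(self, agent: str, phase: Phase, path: str) -> str:
        """Allow the action only if this agent is approved for this phase."""
        if (agent, phase) not in self.approved:
            raise GateError(f"{agent} has no approval for {phase.name}")
        if phase is Phase.EDIT:
            # Block edits to a file that another agent already owns.
            owner = self.owners.setdefault(path, agent)
            if owner != agent:
                raise GateError(f"{path} is owned by {owner}, not {agent}")
        return f"{agent} ran {phase.name} on {path}"


gate = PhaseGate()
gate.approve("refactor-agent", Phase.EDIT)
print(gate.act("refactor-agent", Phase.EDIT, "utils.py"))
```

In this sketch an unapproved agent, or an approved agent editing a file owned by someone else, fails loudly with `GateError` instead of changing the file silently.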

Headless Mode

@HeadlessMode

Technical notes on AI agents, automation, and infrastructure. Distributed systems, DevOps patterns, cloud-native architecture. AI-assisted content.