Hugo Touvron

@HugoTouvron

Research Scientist at Meta AI

This is huge: Llama-v2 is open source, with a license that authorizes commercial use! This is going to change the landscape of the LLM market. Llama-v2 is available on Microsoft Azure and will be available on AWS, Hugging Face and other providers. Pretrained and fine-tuned models are available with 7B, 13B and 70B parameters. Llama-2 website: ai.meta.com/llama/ Llama-2 paper: ai.meta.com/research/publi… A number of personalities from industry and academia have endorsed our open source approach: about.fb.com/news/2023/07/l…

What was going on with the Open LLM Leaderboard? Its numbers didn't match the ones reported in the LLaMA paper! We decided to dive into this rabbit hole with friends from the LLaMA & Falcon teams and came back with a blog post of learnings & surprises: huggingface.co/blog/evaluatin…

The @huggingface #OpenLLMLeaderboard has attracted a lot of interest lately, but did you know that it puts LLaMA-based models at a disadvantage? In our evaluations using a recent commit of @AIEleuther's lm-evaluation-harness, LLaMA-based models improve by 4-5 points on average!

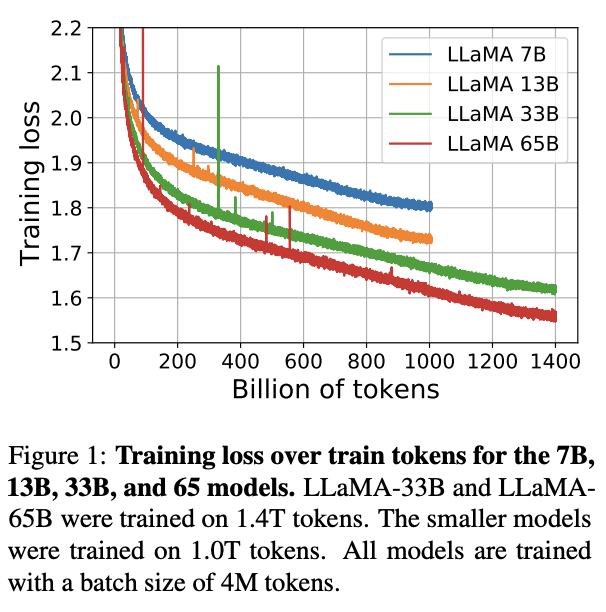

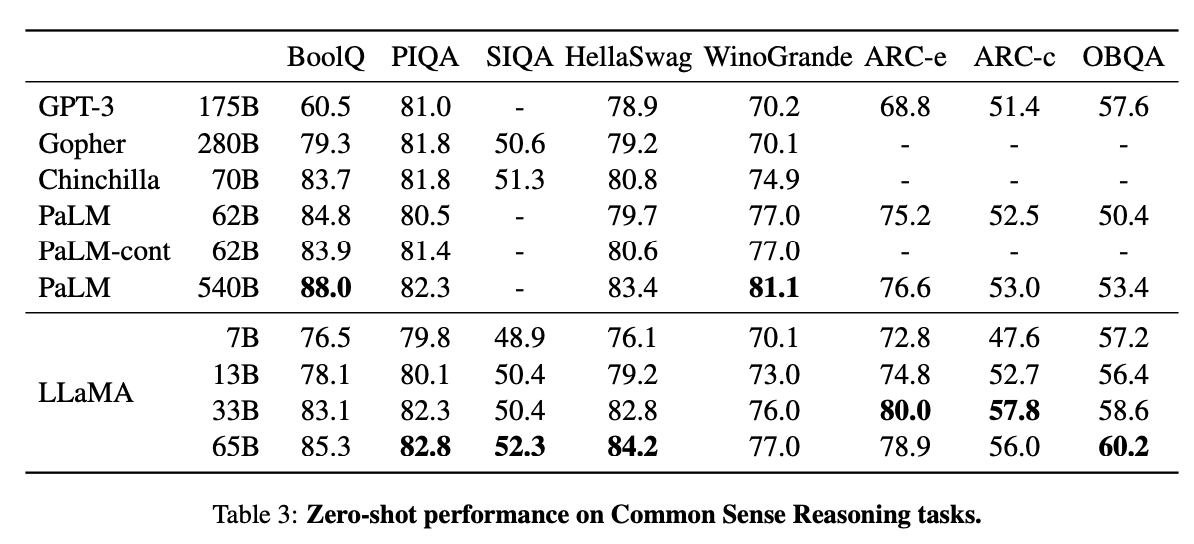

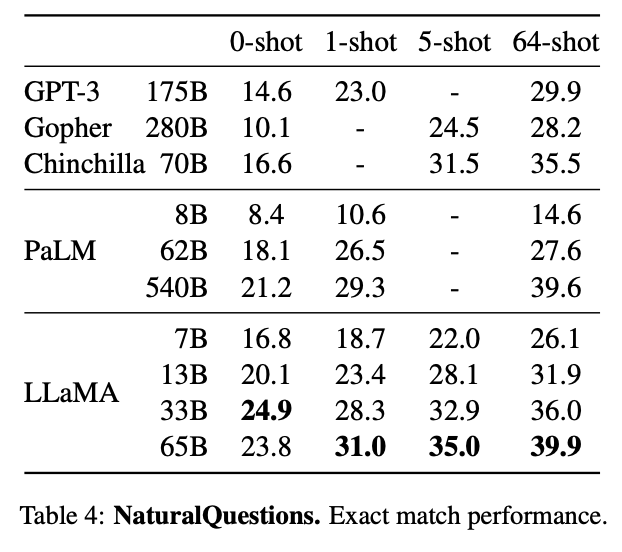

Today we release LLaMA, 4 foundation models ranging from 7B to 65B parameters. LLaMA-13B outperforms OPT and GPT-3 175B on most benchmarks. LLaMA-65B is competitive with Chinchilla 70B and PaLM 540B. The weights for all models are open and available at research.facebook.com/publications/l… 1/n