Praveenkumar

291 posts

@Im_PK_R

Computer Vision / AI Research Engineer, Neubility📍Seoul 🇰🇷 From 🇮🇳

Long overdue but here's a new blogpost on training LLMs in the wilderness from the ground up 😄🧐

In this blog post, I discuss:

1. Experiences in procuring compute & variance in different compute providers. Our biggest finding/surprise is that variance is super high and it's almost a lottery as to what hardware one could get! (!?!) 😱
2. Discussing "wild life" infrastructure/code and transitioning to what I was used to at Google 🤣
3. New mindset when training models. 😶‍🌫️

Writing can be quite therapeutic. I should write more but for now, link below: 👇

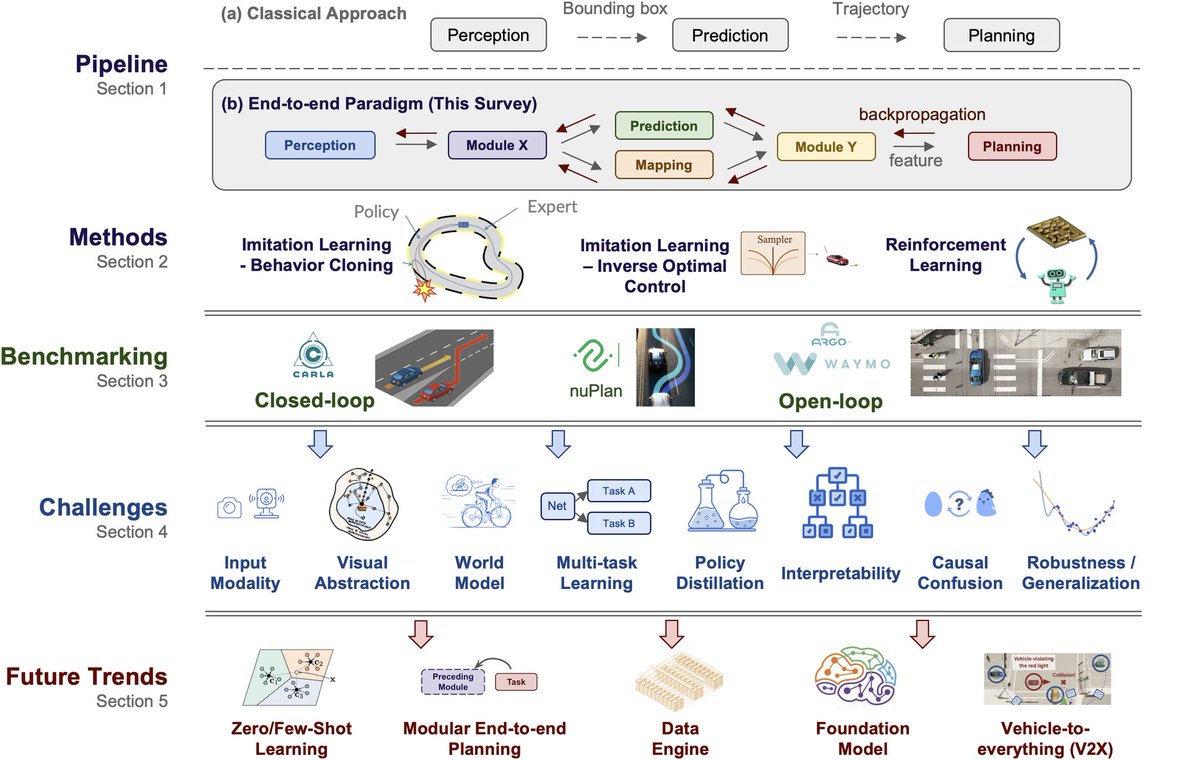

CA Votes YES on Full Self-Driving Taxi Rollout in San Francisco in win for @Cruise and @Waymo

The California Public Utilities Commission (CPUC) voted 3-1 today to allow autonomous vehicle companies Waymo and Cruise’s self-driving taxi operations in San Francisco to operate 24/7 and charge fares.

Prior to the vote, Waymo and Cruise were authorized to beta-test commercial service with limits on the number of passengers, fees, and areas of operation. Now, the companies will be able to charge for rides and operate throughout the city 24/7.

Both companies have argued that self-driving cars will reduce rideshare costs and save lives; in their first million driverless miles, Waymo vehicles were in only two reportable collisions, and Cruise reported 73 percent fewer collisions with “meaningful risk of injury,” than their human performance benchmark.

Self-driving cars have faced strenuous opposition from a coalition of labor unions, local activists, and leftist politicians in San Francisco. As we detailed in Labor’s Shadow War With Self-Driving Cars (link below), many city officials (like Aaron Peskin, Jeffrey Tumlin, and Fire Chief Jeanine Nicholson) opposed to autonomous vehicles in SF say they’re concerned about the cars’ safety, but it’s more likely that naked partisanship, conflicts of interest, and loyalty to local labor interests animate them much more than a desire to protect San Franciscans.

-Sanjana Friedman (@metaversehell)

Labor’s Shadow War With Self-Driving Cars: x.com/piratewires/st…

Waymo first million driverless miles data: waymo.com/blog/2023/02/f…

Cruise first million driverless miles data: getcruise.com/news/blog/2023…

🆕 Essay: The Rise of the AI Engineer latent.space/p/ai-engineer Keeping up on AI is becoming a full time job. Let's get together and define it.

Stable Diffusion is now capable of creating photo realistic full 3D models from single images. The amount of ways it could be used in video games and the metaverse blows my mind. AI is getting closer and closer to futuristic sci-fi movies!

Woohoo! You can now chat with @ykilcher's YouTube channel! 🥳 youtube.com/@YannicKilcher

400 videos of quality ML research, news, and memes :D (bc Yannic)

Yannic had a massive impact on a whole generation of up-and-coming ML engs & researchers

Ortus: chrome.google.com/webstore/detai… 1/👇

Yesterday I made a mistake: I didn't notice that the FollowYourPose @gradio demo uses MMPose instead of OpenPose 🫢 So there you go: convert any video or GIF to an MMPose sequence on @huggingface → huggingface.co/spaces/fffilon…