InfiniteHexx

1.6K posts

@InfiniteHexx

Systems architect | Polymath | Entrepreneur | L/Acc through E/Acc | Post-capitalism through exponential growth

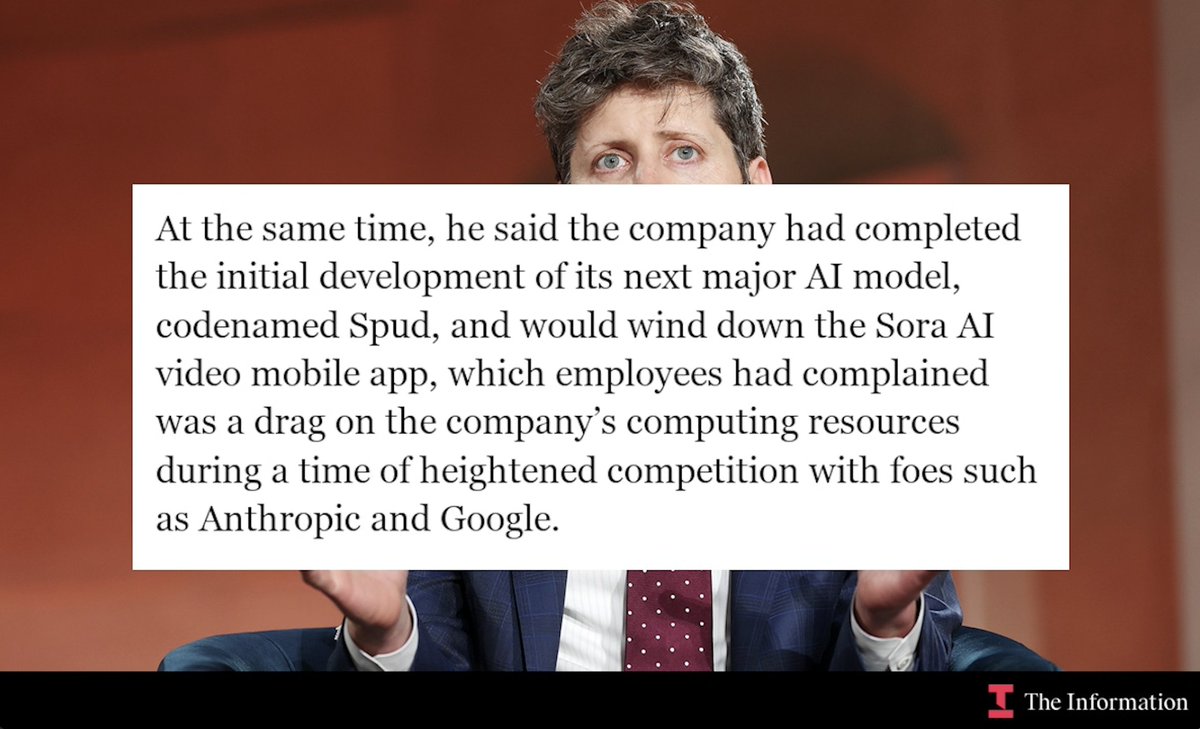

I told you Anthropic would make use of their compute advantage in Q1. Sure smells like a 10T model to me.

“A draft blog post that was available in an unsecured and publicly-searchable data store prior to Thursday evening said the new model is called ‘Claude Mythos’ and that the company believes it poses unprecedented cybersecurity risks.”

Hello. We have reset Codex usage limits across all plans to let everyone experiment with the magnificent plugins we just launched, and because it had been a while! You can just build unlimited things with Codex. Have fun!

We scored 36.08% on ARC-AGI-3 in one day using the Agentica SDK.

AGI is out of reach for now, looking at these scores. Yes we're slope of the sloping, but this iteration of ARC is ~100x more difficult than the last, going by score. To gain escape velocity, models will have to do better than 100x between ARC iterations.

ARC-AGI-3 is out now! We've designed the benchmark to evaluate agentic intelligence via interactive reasoning environments. Beating ARC-AGI-3 will be achieved when an AI system matches or exceeds human-level action efficiency on all environments, upon seeing them for the first time. We've done extensive human testing that shows 100% of these environments are solvable by humans, upon first contact, with no prior training and no instructions. Meanwhile, all frontier AI reasoning models do under 1% at this time.

Grok 5 is training on Colossus 2, the world’s largest Supercluster and is expected to have 6 trillion parameters - roughly double that of Grok 4. The most exciting and most powerful outcome is most likely.

ARC-AGI-3 scores for GPT-5.4, Gemini 3.1 Pro and Opus 4.6:
Gemini 3.1 Pro: 0.37%
GPT-5.4: 0.26%
Opus 4.6: 0.25%
Grok 4.2: 0%

Anthropic released Auto Mode for Claude Code CLI, which allows Claude to make its own decisions on which permissions to accept. It is only available on the Team plan in research preview for now. On the desktop app, it is not yet available, but it is in the works.